Trust Through Consensus: Mira’s Core Mechanism

People hesitate when interacting with advanced AI systems, and understandably so: a certain pause follows reading a confident answer. It is not that the answer looks wrong. It is that it looks too certain. This tension between fluency and trust is what Mira addresses with its defining principle, trust through consensus. Instead of asking users to trust the authority of a single model, Mira builds trust structurally, so that validation is provided at the base of the system.

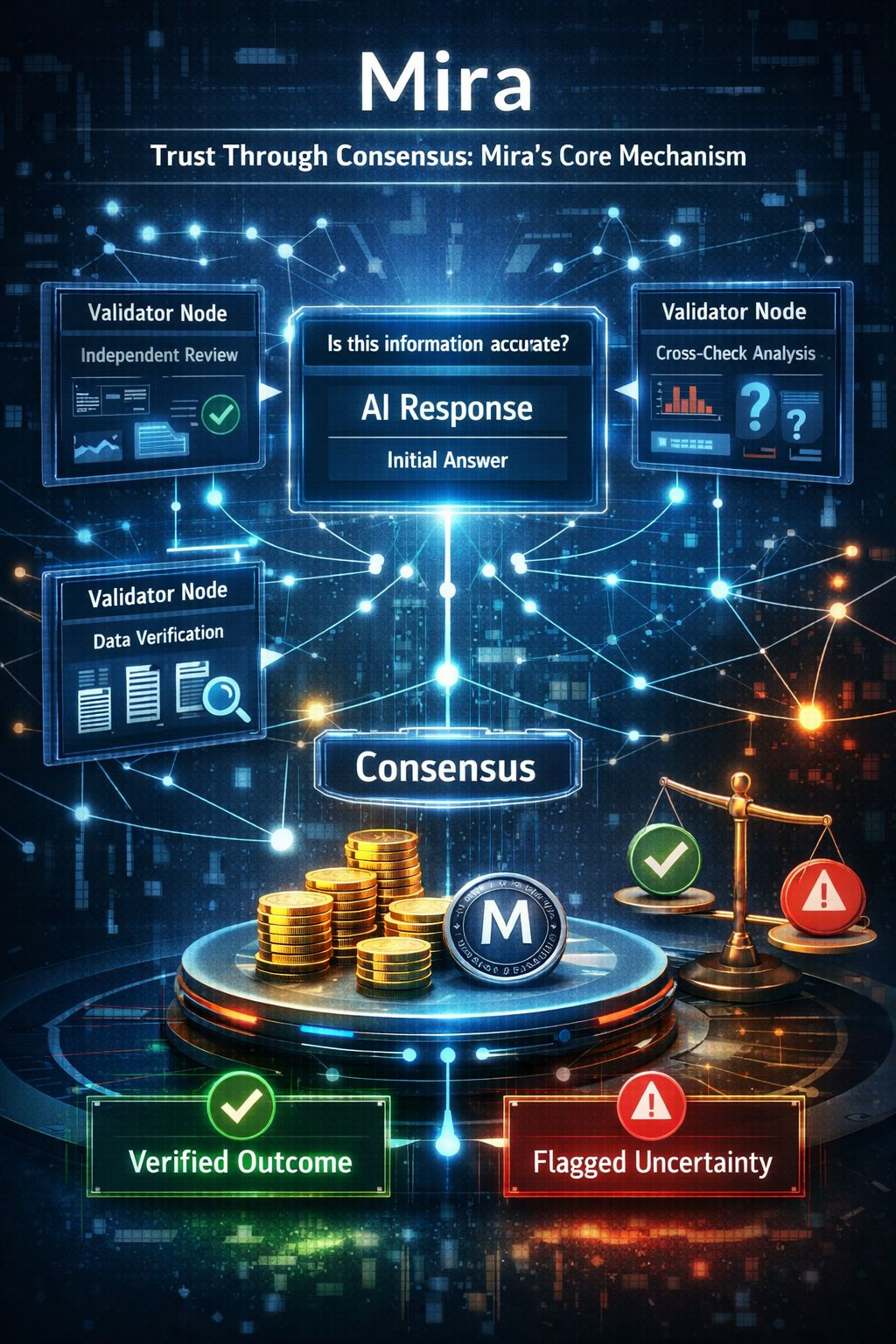

On the surface, Mira is an AI verification infrastructure layered on decentralized ecosystems. Its real distinction lies beneath, in how it operationalizes consensus. Rather than accepting the output of a single model, Mira passes responses through a distributed validation network. Many independent validators evaluate the claims, logic, and factual consistency of the same output. Trust is never declared; it is negotiated algorithmically.

The core of this mechanism is consensus, not in the sense of social agreement but as structured convergence between independent reasoning systems. When multiple validators, built with architectural or data diversity, agree, the system assigns greater confidence to the result. When disagreement arises, Mira does not suppress it. Instead, it surfaces the uncertainty. That is an important design decision: it turns doubt from a sign of weakness into a signal.

In conventional AI models, hallucinations are usually polished. Falsehoods are woven into coherent narratives. Users often see no reason to question an output at first, because its structure feels solid. Mira intervenes at exactly that weak point. By having outputs evaluated by multiple validators, it makes it less likely that an error slips past all of them unnoticed. The mechanism adds friction before finalization, and that friction is not wasted effort.

Comparing this to blockchain consensus is useful but incomplete. Blockchain networks reach agreement about transaction state: who owns what, and which transaction takes precedence. Mira extends the idea to informational reliability. Rather than agreeing on financial state, the network agrees on epistemic reliability. How likely is this statement to be true when it is examined independently? That is the question Mira's core mechanism asks.

Structurally, the process unfolds in layers. First, a primary AI model generates a response. Second, independent validators analyze the response for logical consistency, factual consistency, and fit with the context. Third, an aggregation layer assesses the degree of agreement or disagreement among the validators. Finally, Mira assigns a confidence score or an uncertainty flag. Each step reinforces the idea that trust should be earned through corroboration.
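The four layers can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Mira's actual implementation: the `Validator` type, the `verify` function, the use of a mean for confidence, the max-minus-min spread as a disagreement measure, and both thresholds are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a validator maps (prompt, response) to a score in [0, 1],
# where 1.0 means "fully consistent" and 0.0 means "clearly wrong".
Validator = Callable[[str, str], float]


@dataclass
class Verdict:
    confidence: float    # mean validator score (assumed aggregation rule)
    disagreement: float  # spread between the highest and lowest score
    flagged: bool        # True when the result should carry an uncertainty flag


def verify(
    prompt: str,
    response: str,
    validators: List[Validator],
    min_confidence: float = 0.7,  # assumed threshold, not from the source
    max_spread: float = 0.3,      # assumed threshold, not from the source
) -> Verdict:
    """Score one response with every validator, then aggregate the scores."""
    if not validators:
        raise ValueError("at least one validator is required")

    # Layer 2: each validator evaluates the same response independently.
    scores = [validate(prompt, response) for validate in validators]

    # Layer 3: aggregate agreement and disagreement.
    confidence = sum(scores) / len(scores)
    disagreement = max(scores) - min(scores)

    # Layer 4: surface uncertainty instead of hiding it.
    flagged = confidence < min_confidence or disagreement > max_spread
    return Verdict(confidence, disagreement, flagged)


# Usage with stand-in validators that return fixed scores.
agreeing = [lambda p, r: 0.9, lambda p, r: 0.8, lambda p, r: 0.85]
split = [lambda p, r: 0.9, lambda p, r: 0.2, lambda p, r: 0.9]

print(verify("question", "draft answer", agreeing))
print(verify("question", "draft answer", split))
```

In this sketch a single dissenting validator is enough to raise the flag, which reflects the design choice of surfacing disagreement rather than averaging it away.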

This layered design involves tradeoffs. Additional validation increases latency and computational cost. Speed is traded for stability. Mira's philosophy holds that in many contexts, such as governance proposals, financial automation, and research synthesis, measured reliability matters more than immediate output. The mechanism assumes that long-term credibility outweighs short-term fluency.

Mira also builds an incentive layer into its ecosystem. Validators are not passive observers; they are economically aligned participants. Mira motivates validators to prioritize accuracy through staking, reputation systems, or token incentives. Incorrect or careless validation carries risk. Accurate validation strengthens a validator's position in the network. Trust is thus reinforced economically as well as technically.
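To make the incentive idea concrete, here is a minimal sketch of how a stake-and-reputation update could work. Everything in it is an assumption for illustration: the `ValidatorAccount` fields, the `settle` function, the reward and slash rates, and the premise that accuracy can be judged after the fact are not taken from Mira's protocol.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    stake: float             # tokens the validator has put at risk
    reputation: float = 0.5  # hypothetical score kept within [0, 1]


def settle(
    account: ValidatorAccount,
    was_accurate: bool,         # assumed to come from a later accuracy check
    reward_rate: float = 0.01,  # assumed: 1% of stake earned when accurate
    slash_rate: float = 0.05,   # assumed: 5% of stake lost when inaccurate
    rep_step: float = 0.1,      # assumed reputation increment
) -> ValidatorAccount:
    """Reward accurate validation and penalize careless validation."""
    if was_accurate:
        account.stake += account.stake * reward_rate
        account.reputation = min(1.0, account.reputation + rep_step)
    else:
        account.stake -= account.stake * slash_rate
        account.reputation = max(0.0, account.reputation - rep_step)
    return account


# Usage: one accurate and one careless validator, both starting with 100 tokens.
careful = settle(ValidatorAccount(stake=100.0), was_accurate=True)
careless = settle(ValidatorAccount(stake=100.0), was_accurate=False)
print(careful)
print(careless)
```

The penalty is deliberately larger than the reward in this sketch, so that careless validation costs more than accurate validation earns. Note that the outcome is keyed to accuracy rather than to agreement with other validators, since rewarding agreement alone would encourage validators to copy one another.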

Consensus, however, is no guarantee of correctness. Groups can converge on false beliefs. Mira's defense against this is structural independence. Validators should differ in their architectures, data sources, or evaluation strategies. When diversity is only skin deep, unanimity means little. When diversity is real, convergence is meaningful. Sustaining this independence over the long term is critical to the strength of Mira's core mechanism.
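A small calculation shows why independence matters. The sketch below uses a standard binomial model, which is an assumption of this example and not something Mira specifies: each of `n` validators errs with probability `p`, and a hypothetical `shared` parameter models a common blind spot that makes all validators fail together.

```python
from math import comb


def majority_wrong_independent(n: int, p: float) -> float:
    """Probability that a strict majority of n independent validators errs,
    when each one errs with probability p (binomial tail)."""
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1)
    )


def majority_wrong_shared(n: int, p: float, shared: float) -> float:
    """Same question, but with probability `shared` every validator fails
    together (a common blind spot); otherwise they err independently."""
    return shared + (1 - shared) * majority_wrong_independent(n, p)


# Five validators, each wrong 20% of the time.
print(majority_wrong_independent(5, 0.2))
print(majority_wrong_shared(5, 0.2, shared=0.1))
```

Under these assumptions, five independent validators that each err 20% of the time produce a wrong majority about 5.8% of the time. Adding a 10% chance of a shared failure raises that to roughly 15%, which illustrates why superficial diversity undermines the value of agreement.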

Mira fits a broader trend in crypto and decentralized AI: the recognition that intelligence alone is not enough. Systems must be accountable. As AI is integrated into decentralized applications for governance, trading strategies, and treasury management, the cost of misinformation grows. A single hallucinated output can have financial consequences. By implementing verification at the protocol level, Mira moves reliability from an optional feature to the foundation of the infrastructure.

Mira's consensus model also changes how users interpret AI outputs. Rather than binary answers, correct or incorrect, users receive graded confidence. Disagreement between validators is made visible. Uncertainty is quantified. This encourages probabilistic thinking. Confidence is measured rather than assumed.

Still, risks remain. Adding many validators raises the complexity of the system. Incentive structures must not become skewed. Validators may optimize for agreement rather than for independent accuracy. And there is the question of scalability: does consensus remain efficient as the network grows?

Even with these open questions, Mira's core mechanism represents a significant architectural shift. It challenges the prevailing model of centralized AI authority. Mira presents intelligence not as singular and decisive but as distributed and corroborated. Trust is not asserted; it is earned through agreement among independent reviewers.

In many respects, verification is Mira's real product. The AI output is only a draft. Consensus is the refinement process. By formalizing that refinement as infrastructure, Mira positions itself not as just another AI project but as a reliability layer for decentralized intelligence.

Whether this model becomes a standard remains to be seen. Adoption depends on developer priorities, economic feasibility, and users' willingness to accept slight delays in exchange for quantifiable confidence. There are indications that verification will stop being optional as AI systems gain more influence over financial and governance systems.

Put simply, "Trust Through Consensus" is more than a technical description. It is a philosophy of digital credibility. It holds that certainty should not be performed but tested. It builds doubt into the architecture rather than leaving it to the user's intuition. And in a digital world where trust is easy to fake, building mechanisms in which consensus must be earned, not granted, may be the most practical way to restore trust.

If such a foundation holds, Mira's consensus model might do more than reduce hallucinations: it could reshape the credibility of decentralized intelligence as a whole.