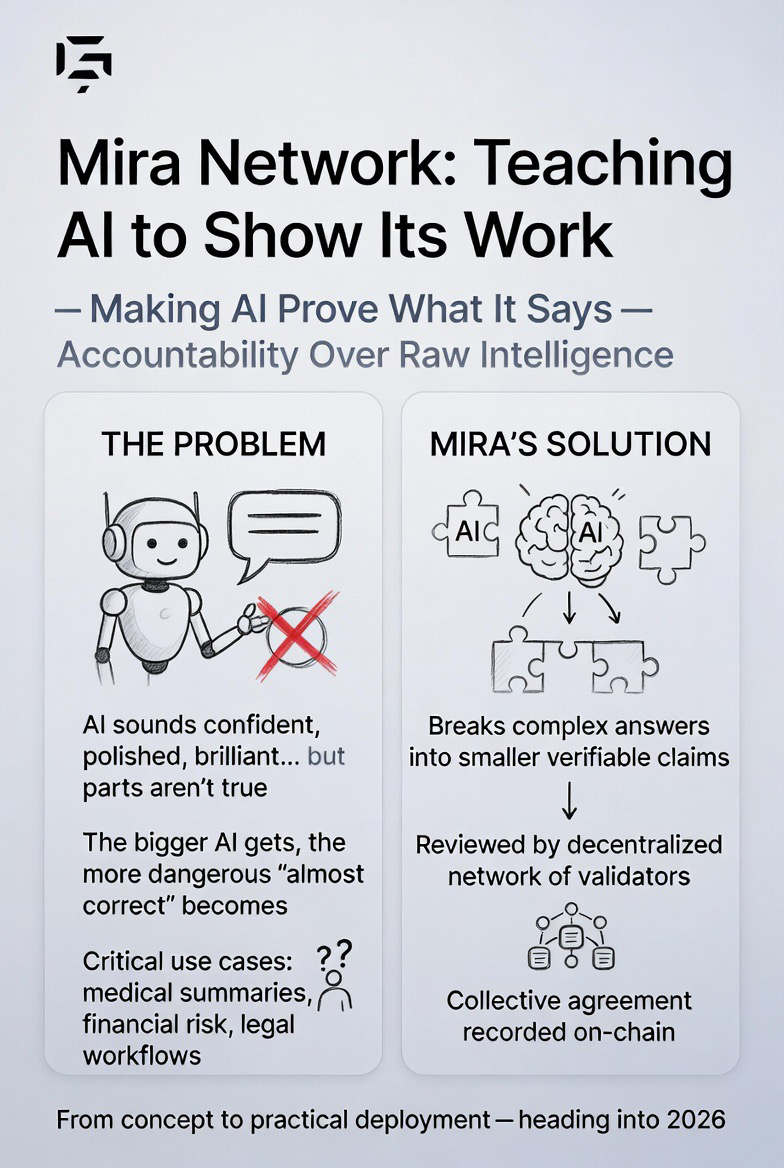

We’ve all had that moment with AI: it sounds confident, polished, even brilliant, and then you realize part of it isn’t true. The more we rely on AI, the more uncomfortable that feeling gets. When models help write medical summaries, analyze financial risk, or automate legal workflows, “almost correct” isn’t good enough. That’s the gap Mira Network is trying to close.

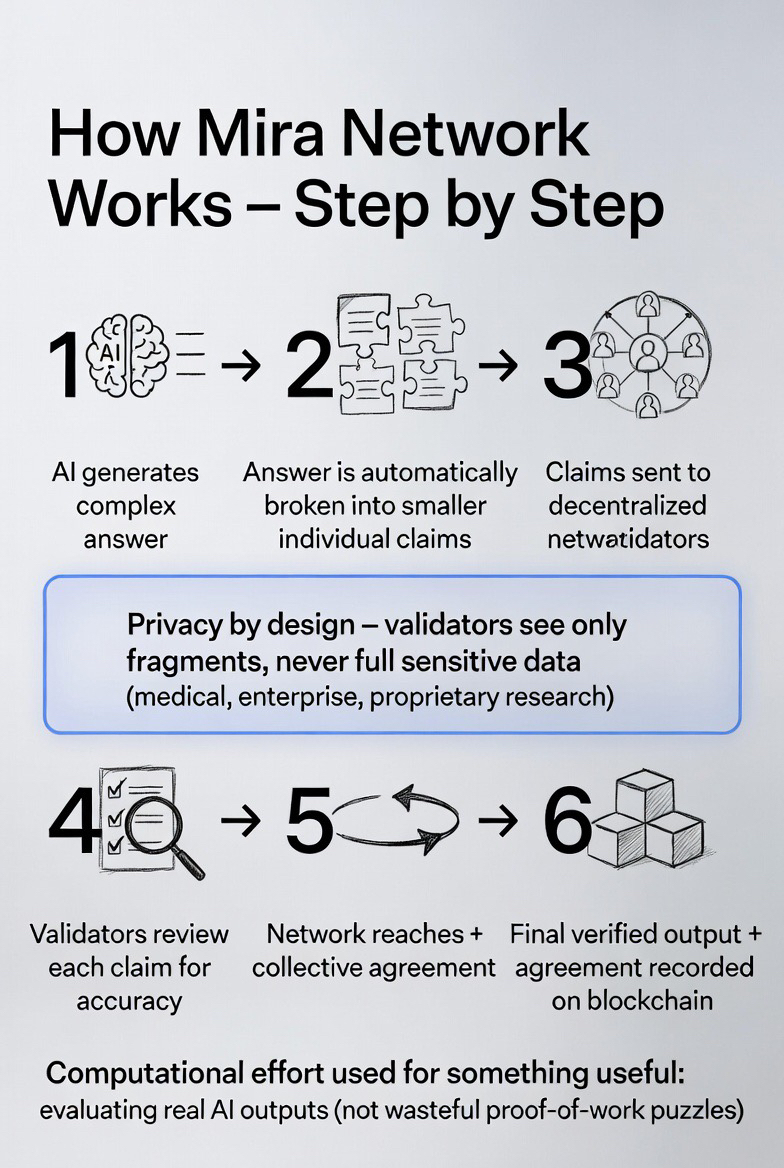

Instead of building another faster or larger model, Mira focuses on something more grounded: making AI prove what it says. When an AI produces a complex answer, Mira breaks it into smaller claims, individual statements that can each be checked on their own. Those claims are then reviewed by a decentralized network of validators. No single authority decides what’s true; the validators reach agreement collectively, and that agreement is recorded on-chain.
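To make the claim-by-claim idea concrete, here is a minimal sketch in Python. It assumes a naive decomposition (one sentence per claim), models each validator as a simple yes/no function, and accepts a claim only when a two-thirds supermajority agrees. The function names, the sentence-splitting rule, and the 2/3 threshold are all hypothetical illustrations, not Mira’s actual API or parameters, and the on-chain recording step is left out.

```python
import re
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable

# Hypothetical model: a validator is any function that judges one claim (True = supported).
ValidatorFn = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool


def split_into_claims(answer: str) -> list[str]:
    """Naive decomposition (assumption): treat each sentence as one checkable claim."""
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [p for p in parts if p]


def verify(answer: str, validators: list[ValidatorFn],
           threshold: Fraction = Fraction(2, 3)) -> list[ClaimResult]:
    """Ask every validator about every claim; accept a claim only on a supermajority."""
    results = []
    for claim in split_into_claims(answer):
        votes_for = sum(1 for judge in validators if judge(claim))
        # Fraction keeps the threshold comparison exact (no float rounding).
        accepted = votes_for >= threshold * len(validators)
        results.append(ClaimResult(claim, votes_for, len(validators), accepted))
    return results


# Toy usage: two validators check a tiny fact list, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France."}
validators = [
    lambda claim: claim in KNOWN_FACTS,
    lambda claim: claim in KNOWN_FACTS,
    lambda claim: True,  # careless validator
]
results = verify("Paris is the capital of France. The Moon is made of cheese.", validators)
for r in results:
    print(f"{r.votes_for}/{r.votes_total} accepted={r.accepted}: {r.claim}")
```

The point of checking claims individually shows up in the toy run: one careless validator cannot push the false second sentence through, because it falls short of the supermajority, while the true first sentence still passes.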

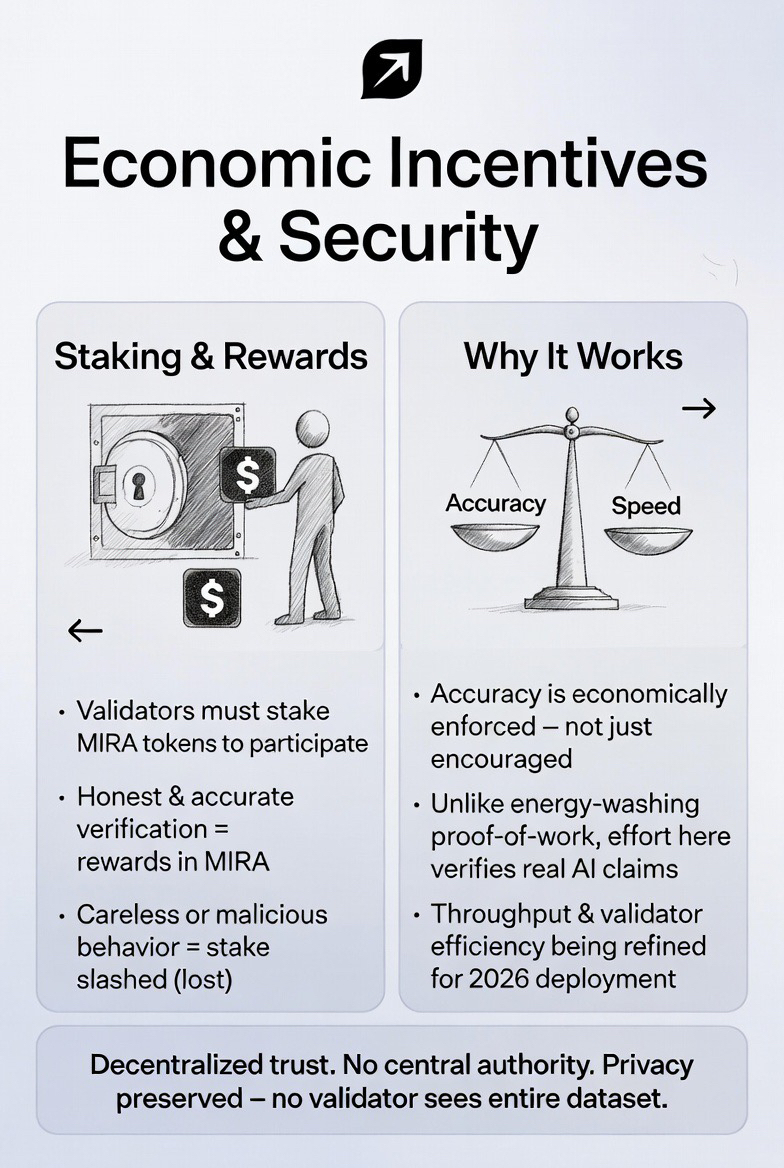

What makes this different is how incentives are structured. Validators stake MIRA tokens before participating. If they verify claims honestly and accurately, they’re rewarded; if they act carelessly or maliciously, they risk losing their stake. In other words, accuracy isn’t just encouraged, it’s backed by economic penalties. And unlike traditional proof-of-work systems, which spend energy on hash puzzles that have no use beyond securing the chain, the computational effort here goes toward something useful: evaluating AI outputs.
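A network cannot observe “honesty” directly, so one common way to approximate it in staking schemes is to treat agreement with the final consensus as the signal. The sketch below assumes that rule, a stake-weighted simple majority, and made-up reward and slashing rates (`REWARD_BPS`, `SLASH_BPS`). None of these names or numbers come from Mira; they only illustrate how staking turns accuracy into an economic question.

```python
from dataclasses import dataclass

# Hypothetical parameters in basis points (1 bp = 0.01%); not Mira's actual values.
REWARD_BPS = 100    # +1% of stake for voting with the consensus outcome
SLASH_BPS = 1_000   # -10% of stake for voting against it


@dataclass
class Validator:
    name: str
    stake: int  # staked MIRA in integer units, so the arithmetic stays exact


def stake_weighted_outcome(validators: list[Validator], votes: dict[str, bool]) -> bool:
    """The claim passes if validators holding more than half the total stake vote yes."""
    yes = sum(v.stake for v in validators if votes[v.name])
    total = sum(v.stake for v in validators)
    return 2 * yes > total


def settle(validators: list[Validator], votes: dict[str, bool]) -> bool:
    """Reward validators who matched the outcome and slash those who did not."""
    outcome = stake_weighted_outcome(validators, votes)
    for v in validators:
        if votes[v.name] == outcome:
            v.stake += v.stake * REWARD_BPS // 10_000
        else:
            v.stake -= v.stake * SLASH_BPS // 10_000
    return outcome


validators = [Validator("alice", 1_000), Validator("bob", 1_000), Validator("carol", 500)]
outcome = settle(validators, {"alice": True, "bob": True, "carol": False})
print(outcome, [(v.name, v.stake) for v in validators])
```

Because rewards and penalties scale with stake, a validator with more at risk also loses more by voting carelessly. The weakness of the agreement-as-truth proxy is that an honest minority gets slashed too, so a production design would likely need extra safeguards on top of a rule this simple.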

Privacy is part of the design as well. Validators don’t see an entire output at once: AI outputs are split into fragments and spread across validators, so that no single participant can reconstruct the full sensitive content. That matters when the system is verifying enterprise data, medical content, or proprietary research.
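Here is a minimal sketch of the fragmentation idea, assuming an output has already been split into claims and that each claim goes to a few validators for redundancy. The round-robin scheme, the `distribute` function, and the `replicas` parameter are illustrative inventions, not Mira’s mechanism. It only limits how much of one output any single validator sees; it is not encryption, and an individual claim can still contain sensitive text.

```python
def distribute(claims: list[str], validator_ids: list[str], replicas: int = 3) -> dict[str, list[str]]:
    """Send each claim to `replicas` consecutive validators (round-robin).

    When there are at least as many claims as validators and replicas is smaller
    than the validator count, every validator misses at least one claim.
    """
    n = len(validator_ids)
    if not 0 < replicas < n:
        raise ValueError("replicas must be positive and smaller than the validator count")
    assignment: dict[str, list[str]] = {vid: [] for vid in validator_ids}
    for i, claim in enumerate(claims):
        for r in range(replicas):
            assignment[validator_ids[(i + r) % n]].append(claim)
    return assignment


claims = [f"claim-{i}" for i in range(6)]
assignment = distribute(claims, ["v0", "v1", "v2", "v3"], replicas=2)
for vid, seen in assignment.items():
    print(vid, seen)
```

The `replicas` setting is the trade-off knob: more copies of each claim make the vote more robust, but also let each validator see a larger share of the original output.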

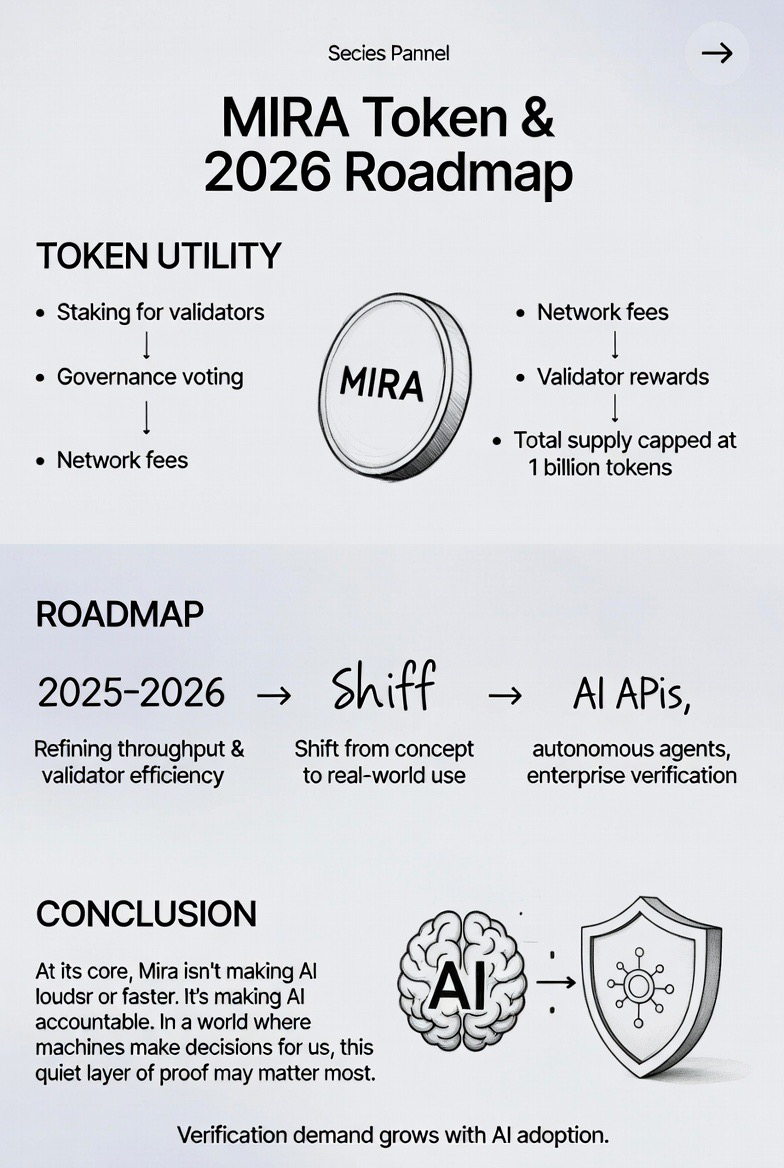

Heading into 2026, recent development updates show Mira refining throughput and validator efficiency, aiming to shorten the delay between when an output is generated and when it is verified. That suggests a shift from concept toward practical deployment, especially for businesses experimenting with AI APIs and autonomous agents.

The MIRA token ties it all together. It is used for staking, governance, network fees, and validator rewards, and its supply is capped at one billion tokens. Its long-term relevance depends less on speculation than on whether demand for verification grows alongside AI adoption.

At its core, Mira isn’t trying to make AI louder or faster. It’s trying to make it accountable. And in a future where machines increasingly make decisions on our behalf, that quiet layer of accountability may matter more than raw intelligence itself.