Most people have a small habit when they read something online. Before trusting it, they check it again somewhere else. Maybe they open another tab. Maybe they scroll through comments.

Sometimes they just pause and think for a moment: does this actually make sense? It’s not a formal process. Just a quiet instinct people develop after years of watching information move too fast on the internet.

That instinct — the need to verify something before accepting it — sits quietly behind a lot of digital behavior today. And oddly enough, it’s the same instinct that sits behind parts of the Mira ecosystem.

The interesting thing is that Mira didn’t begin with the goal of building another flashy AI platform. The focus was narrower, almost practical in a way. If machines are generating claims — predictions, model outputs, data interpretations — then someone needs to check those claims. Otherwise the system becomes a black box. It produces answers, but nobody really knows if those answers deserve trust.

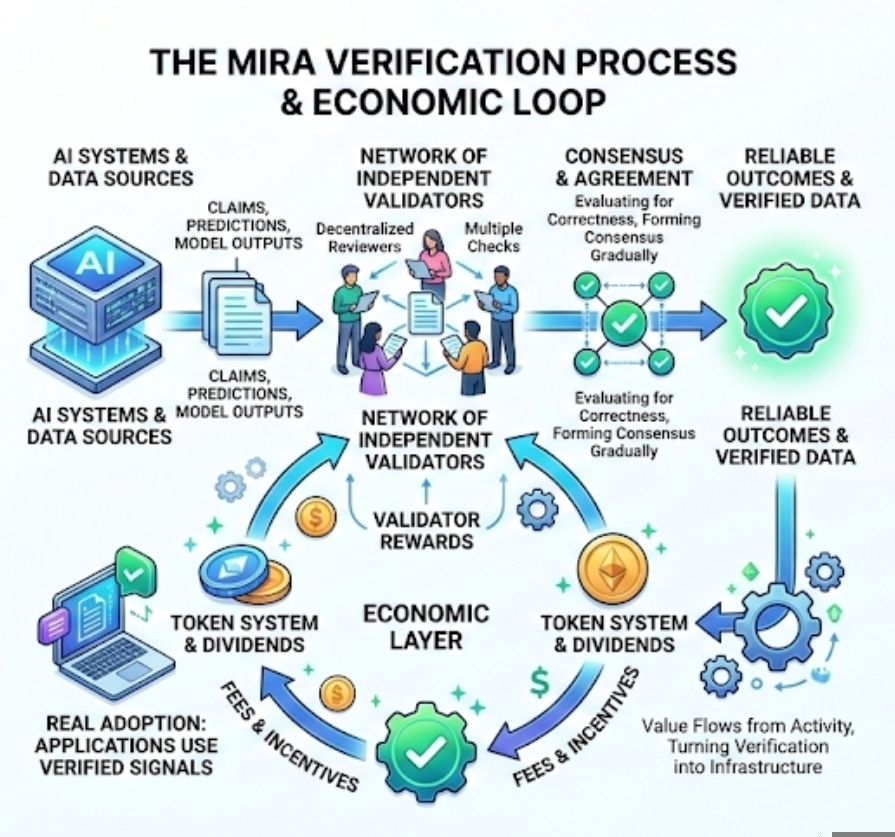

Verification sounds simple when described like that. In reality it’s messy work. Claims arrive in large numbers. Some are trivial; others are complicated enough that evaluating them takes careful checking. Mira approaches this problem by letting independent participants in the network examine those claims. Instead of one authority deciding whether something is correct, multiple validators look at the same information and gradually converge on a shared verdict.

You can think of it less like a single referee and more like a group of reviewers looking over the same page.
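To make the "group of reviewers" idea concrete, here is a minimal sketch of how several independent verdicts on one claim could be combined. It is an illustration only, not Mira's actual protocol: the function name `aggregate_verdicts`, the validator names, and the two-thirds threshold are all assumptions made for the example.

```python
from collections import Counter
from fractions import Fraction


def aggregate_verdicts(verdicts, threshold=Fraction(2, 3)):
    """Return the verdict backed by at least `threshold` of validators.

    `verdicts` maps a validator name to its verdict on a single claim.
    Returns None when no verdict reaches the threshold (no agreement yet).
    The 2/3 threshold is a hypothetical choice, not a documented Mira value.
    """
    if not verdicts:
        return None
    counts = Counter(verdicts.values())
    verdict, votes = counts.most_common(1)[0]
    # Fraction avoids floating-point surprises right at the threshold.
    if Fraction(votes, len(verdicts)) >= threshold:
        return verdict
    return None


# Hypothetical validators reviewing the same claim.
agreed = aggregate_verdicts(
    {"validator_a": "valid", "validator_b": "valid", "validator_c": "invalid"}
)
split = aggregate_verdicts({"validator_a": "valid", "validator_b": "invalid"})
```

In this sketch, two of three matching verdicts are enough to settle the claim, while an even split returns `None` and the claim stays open until more reviewers weigh in.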

What makes the system different from a typical review process is the economic layer behind it. Validators don’t only participate out of curiosity or goodwill. They are rewarded through the network’s token system when their evaluations contribute to reliable outcomes. That simple detail changes behavior quite a bit. When verification becomes something people can earn from, it slowly shifts from a technical task into a piece of infrastructure.

And infrastructure tends to grow in quiet ways.

At first the activity revolves around the basic function: checking claims produced by AI systems. But once that mechanism exists, other uses begin to appear. Data feeds can be verified. Automated decisions can be verified. Predictions generated by algorithms can be reviewed by multiple participants before they are treated as reliable signals.

The network becomes less about one application and more about a service — a place where digital claims are tested.

That’s where the idea of dividends enters the conversation. Not in the traditional corporate sense, where a company distributes profit to shareholders. In Mira’s case, the value flows from activity inside the network itself: when applications rely on the verification layer, they generate fees or incentives, and those rewards circulate back to the participants who maintain the system.

It’s a bit like a road that slowly becomes busy. The more vehicles use it, the more valuable the road becomes to the people maintaining it.
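The road analogy can be put into rough numbers. The sketch below assumes a simple pro-rata split, where a pool of fees is divided among validators in proportion to the verification work each contributed. The split rule, the function name `distribute_fees`, and the figures are hypothetical; the source does not say how Mira actually allocates rewards.

```python
def distribute_fees(fee_pool, contributions):
    """Split `fee_pool` among validators in proportion to their contributions.

    `contributions` maps a validator name to a non-negative amount of
    verification work. Pro-rata sharing is an assumption for illustration.
    """
    total = sum(contributions.values())
    if total == 0:
        # No verification work was done, so nothing is paid out.
        return {name: 0.0 for name in contributions}
    return {
        name: fee_pool * share / total
        for name, share in contributions.items()
    }


work = {"validator_a": 50, "validator_b": 30, "validator_c": 20}

quiet_road = distribute_fees(100.0, work)   # little application traffic
busy_road = distribute_fees(1000.0, work)   # ten times the fees, same work split
```

The shares stay the same in both cases; only the pool changes. That is the point of the analogy: payouts grow with real usage of the verification layer, not with the reward formula itself.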

But none of this happens automatically. Networks like this only work if enough real activity appears. That’s something people often overlook when discussing token systems. A reward mechanism can exist on paper, but if the network isn’t actually verifying meaningful claims, the economic layer has very little substance behind it.

Adoption matters more than the design.

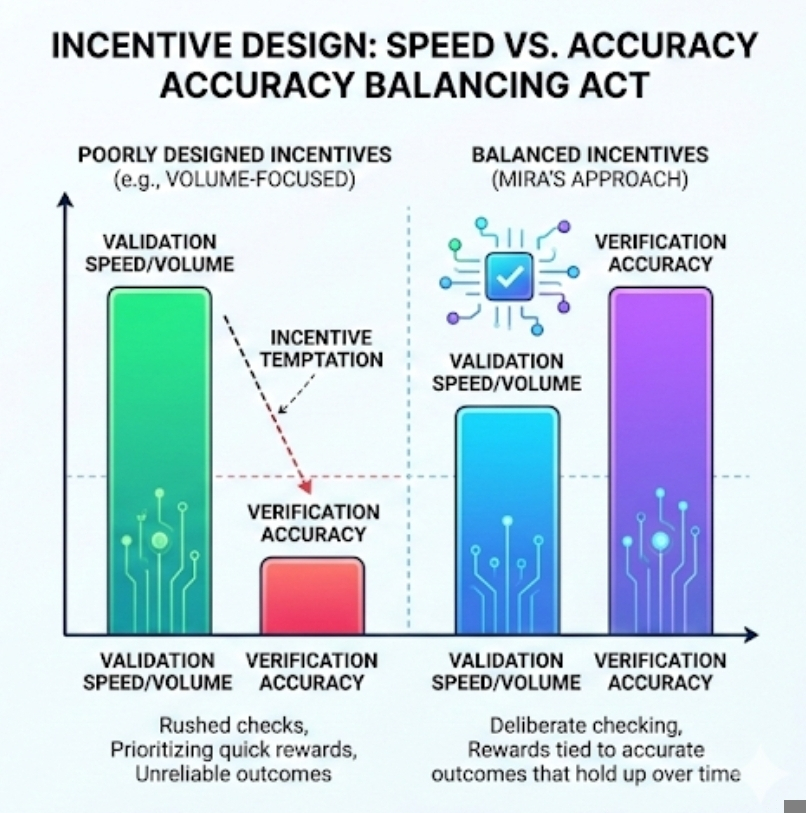

There’s another side to the incentive structure as well, and it’s not always comfortable to talk about. When validators receive rewards, the system has to guard against the temptation to prioritize speed over accuracy. People naturally respond to incentives. If the network pays more for volume than for careful work, participants may rush through verification tasks.

Designing incentives that reward correct evaluation rather than quick participation becomes a delicate balancing act. Mira’s model attempts to tie rewards to outcomes that hold up over time, but realistically that kind of system will always require adjustment as the network grows.
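A toy comparison shows why the choice of reward rule matters. The two functions below are hypothetical: one pays per task regardless of quality, the other pays for evaluations that held up and charges a penalty for those that did not. The rates, the penalty, and the two validator profiles are invented for the example and are not taken from Mira's model.

```python
def volume_reward(tasks_done, rate=1.0):
    """Pay a flat rate per task, ignoring whether the evaluation was right."""
    return tasks_done * rate


def accuracy_reward(correct, incorrect, rate=1.0, penalty=2.0):
    """Pay per correct evaluation and subtract a penalty per incorrect one.

    The payout is floored at zero. `rate` and `penalty` are assumed values.
    """
    return max(0.0, correct * rate - incorrect * penalty)


# Two hypothetical validators over the same period.
rushed = {"correct": 60, "incorrect": 40}    # 100 tasks, done quickly
careful = {"correct": 48, "incorrect": 2}    # 50 tasks, done carefully

rushed_by_volume = volume_reward(rushed["correct"] + rushed["incorrect"])
careful_by_volume = volume_reward(careful["correct"] + careful["incorrect"])

rushed_by_accuracy = accuracy_reward(**rushed)
careful_by_accuracy = accuracy_reward(**careful)
```

Under the volume rule the rushed validator earns twice as much as the careful one. Under the accuracy rule the ordering flips, and the rushed validator's mistakes wipe out the payout entirely. Real systems would need to tune these parameters over time, which is the adjustment the paragraph above describes.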

One thing that has quietly influenced how people talk about these systems is the environment where the conversation happens. Platforms like Binance Square have their own ranking systems and visibility signals. Posts that only shout price predictions rarely stay visible for long. The algorithm tends to favor something different — explanations, infrastructure discussions, ideas that hold attention a little longer.

You can see it in how creators gradually change their tone.

A few months ago, many discussions were dominated by quick trading calls. Now more posts explore how networks actually function: how validators work, and how incentives move through the system. Part of that shift is human curiosity, but part of it is also the ranking logic behind the platform. Visibility often follows depth rather than excitement.

That feedback loop shapes behavior more than people admit.

Writers who want credibility slowly move toward explaining systems instead of promoting them. The audience becomes slightly more patient as well. When readers understand how verification and rewards interact, the conversation becomes less about speculation and more about structure.

And structure is really the quiet story behind Mira.

The network’s value does not come from promising that AI will suddenly become perfect. Anyone who spends time around machine learning knows that models make mistakes constantly. The real question is whether those mistakes can be detected and evaluated in a transparent way.

Verification layers try to answer that question.

Still, there are risks in assuming the model will scale smoothly. Coordination across a decentralized network is never simple. Participants live in different regions, follow different incentives, and interpret rules differently. Even small disagreements about validation standards could cause friction as the system grows.

That uncertainty doesn’t really weaken the idea. If anything, it simply shows how difficult it is to build systems that depend on distributed trust instead of one central authority.

And maybe that’s the more interesting perspective here. The Mira ecosystem is often described through its tokens or reward mechanisms, but those are only visible parts of a deeper experiment. What the network is really testing is whether verification itself can become an economic activity — something people maintain because it provides value.

For years, verification on the internet has been treated as an afterthought. Platforms focused on speed, reach, and engagement. Accuracy usually came later, sometimes much later.

Now there is a quiet attempt to reverse that order. Build the verification layer first. Let the economic incentives grow around it.

Whether that approach succeeds will depend on something very ordinary: people deciding that careful checking is worth their time. Not because someone told them it should be, but because the system makes that effort meaningful.

And if that habit spreads — the habit of verifying before trusting — the dividends may end up being cultural as much as financial.