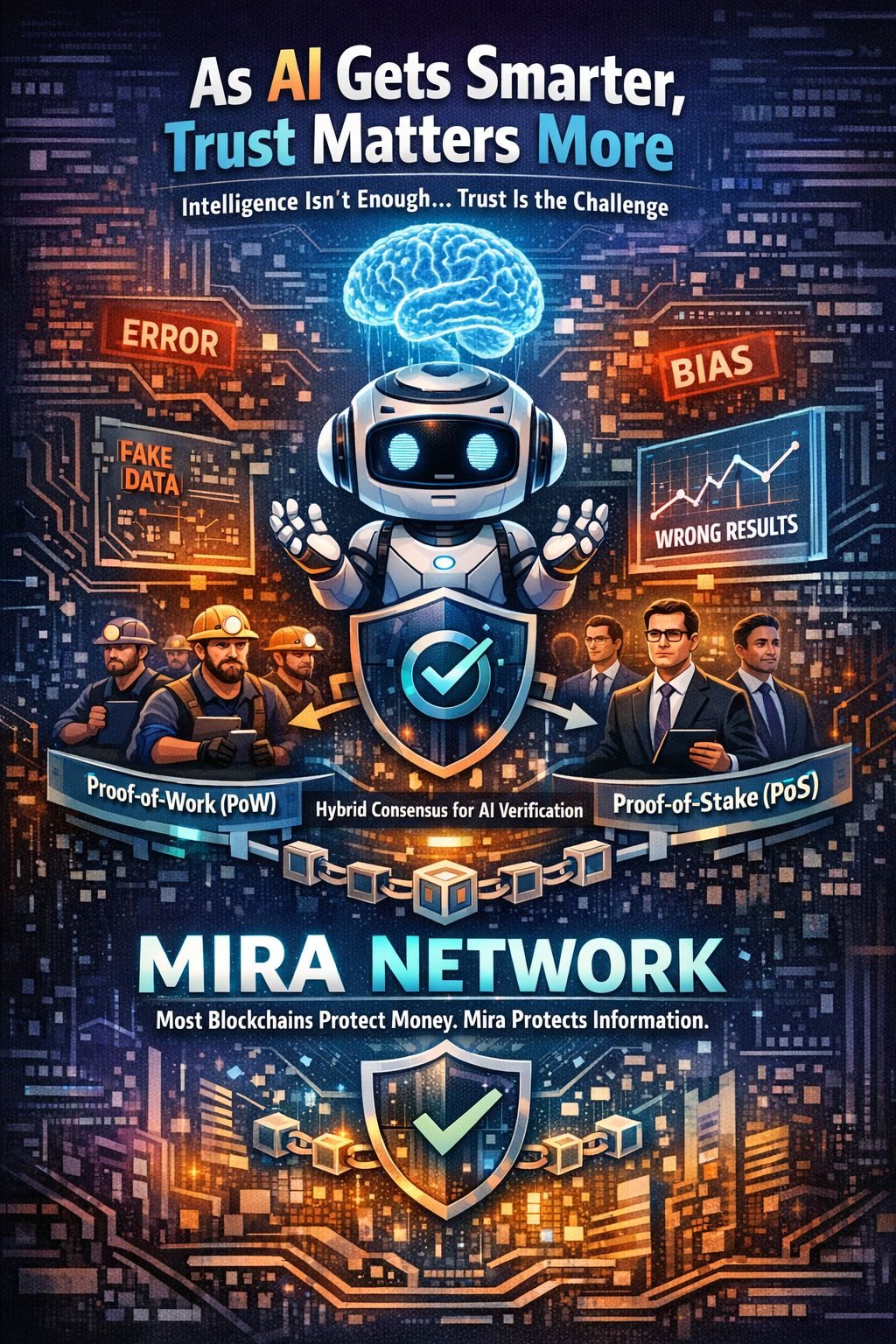

As AI systems get smarter and more independent, trust, not intelligence, becomes the real challenge. Sure, AI can spit out answers, make predictions, and crunch data like nobody’s business, but it still messes up. Sometimes it hallucinates facts, misreads the data, or slips in its own biases. That’s where Mira Network steps in with something different: a hybrid consensus model that mixes Proof-of-Work (PoW) and Proof-of-Stake (PoS) to check AI’s answers in a decentralized, secure way.

Most blockchains just protect money. Mira protects information itself.

The Core Problem: AI Can’t Prove It’s Right

Modern AI is all about probabilities. It guesses the next word or fact based on patterns, not truth. Even the smartest language models can’t promise they’re always correct. Trusting just one AI to check itself? Too risky. And if you hand over validation to a central authority, you get bias and bottlenecks.

Mira flips the script. It turns every AI output into a claim, then sends those claims to a network of independent validators. To keep everyone honest, Mira uses a mix of PoW and PoS, so validators have to put in both real computing work and real money.

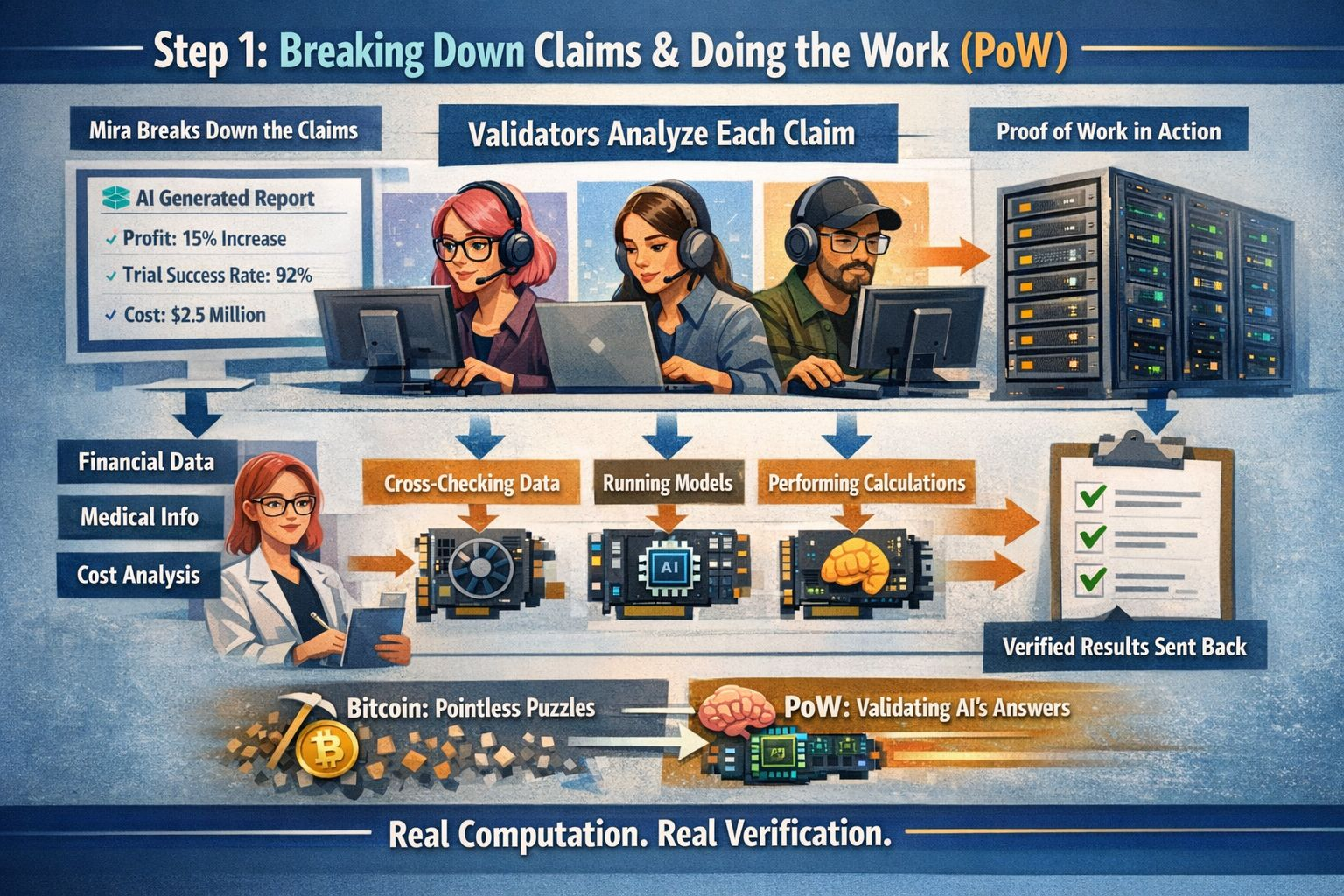

Step 1: Breaking Down Claims & Doing the Work (PoW)

When an AI spits out an answer, Mira breaks it into smaller, checkable facts. Maybe it’s a financial report, maybe it’s a medical explanation. Either way, each piece gets separated for scrutiny.

Validators look at each claim, using their own models, cross-referencing data, and running calculations. This is where the PoW part comes in: validators use real computational resources (think GPUs and AI power), do the work, and send back structured verification.

Unlike Bitcoin, where all that computation just solves arbitrary hash puzzles, here the “work” actually checks the AI’s output. It’s not wasteful. And it still makes it hard for anyone to overwhelm the system with spam.
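
To make Step 1 a bit more concrete, here is a minimal Python sketch of the flow: split an AI answer into claims, then have a validator return a structured verification. Everything in it is an assumption for illustration, not Mira's actual code: the names `Claim`, `Verification`, `split_into_claims`, and `verify` are hypothetical, and the sentence-by-sentence split is only a naive stand-in for whatever decomposition the network really uses.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str


@dataclass(frozen=True)
class Verification:
    claim_id: int
    validator_id: str
    verdict: str  # "valid" or "invalid"


def split_into_claims(output: str) -> list[Claim]:
    """Naive stand-in: treat each sentence as one checkable claim."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", output) if s.strip()]
    return [Claim(i, s) for i, s in enumerate(sentences)]


def verify(claim: Claim, validator_id: str, check: Callable[[str], bool]) -> Verification:
    """Run the validator's own check (hypothetical: its model or data lookup)
    and wrap the result in a structured record."""
    verdict = "valid" if check(claim.text) else "invalid"
    return Verification(claim.claim_id, validator_id, verdict)
```

In this sketch, `check` is where the real computational work would happen: each validator plugs in its own model or data source, so two validators can reach their verdicts independently.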

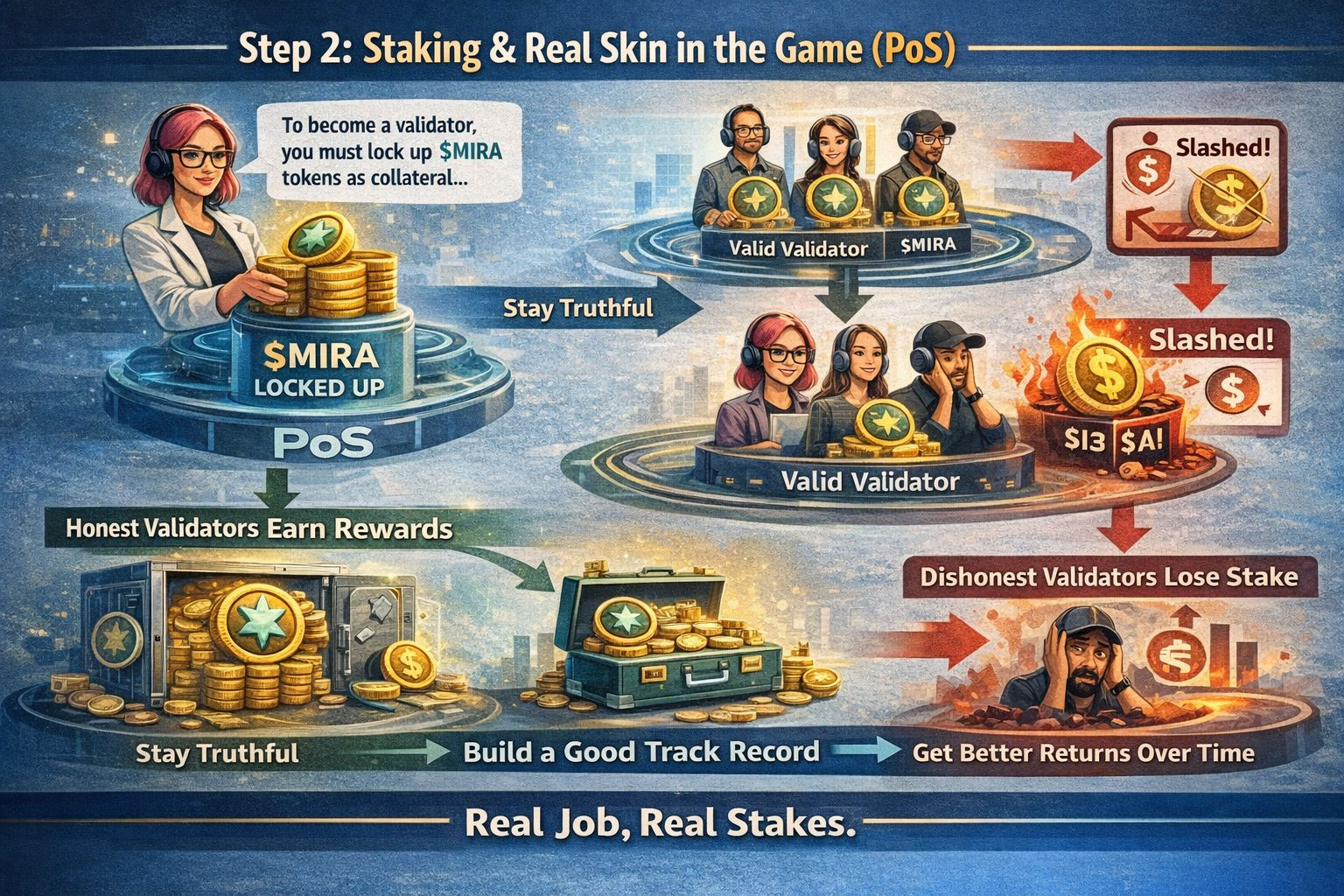

Step 2: Staking & Real Skin in the Game (PoS)

But just making validators do the work isn’t enough. Someone could still cheat. So Mira adds the PoS layer.

To even join as a validator, you have to lock up $MIRA tokens as collateral. If you mess up, whether by submitting wrong or malicious results or by trying to game the system, the network can slash your stake.

Now, validators have a real reason to be honest:

Stay truthful, earn rewards.

Try to cheat, lose your money.

Stick around and build a good track record, and you get better returns over time.

Suddenly, verifying claims isn’t just a nice thing to do. It’s a real job with real stakes.
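
The incentive loop above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Mira's real economics: `MIN_STAKE`, `REWARD`, and `SLASH_FRACTION` are made-up numbers, and `Validator`, `can_validate`, and `settle` are hypothetical names.

```python
from dataclasses import dataclass

# All three values are illustrative assumptions, not published parameters.
MIN_STAKE = 100.0      # stake required to take part in validation
REWARD = 1.0           # tokens earned for an honest verification
SLASH_FRACTION = 0.5   # share of stake lost when caught cheating


@dataclass
class Validator:
    name: str
    stake: float


def can_validate(validator: Validator) -> bool:
    """A validator needs enough collateral locked up to participate."""
    return validator.stake >= MIN_STAKE


def settle(validator: Validator, honest: bool) -> float:
    """Reward honest work, slash dishonest work, and return the new stake."""
    if honest:
        validator.stake += REWARD
    else:
        validator.stake -= validator.stake * SLASH_FRACTION
    return validator.stake
```

With these numbers, a validator who starts at 200 tokens gains slowly by staying honest but loses half the stake on each slash, and after two slashes drops below the minimum and can no longer validate.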

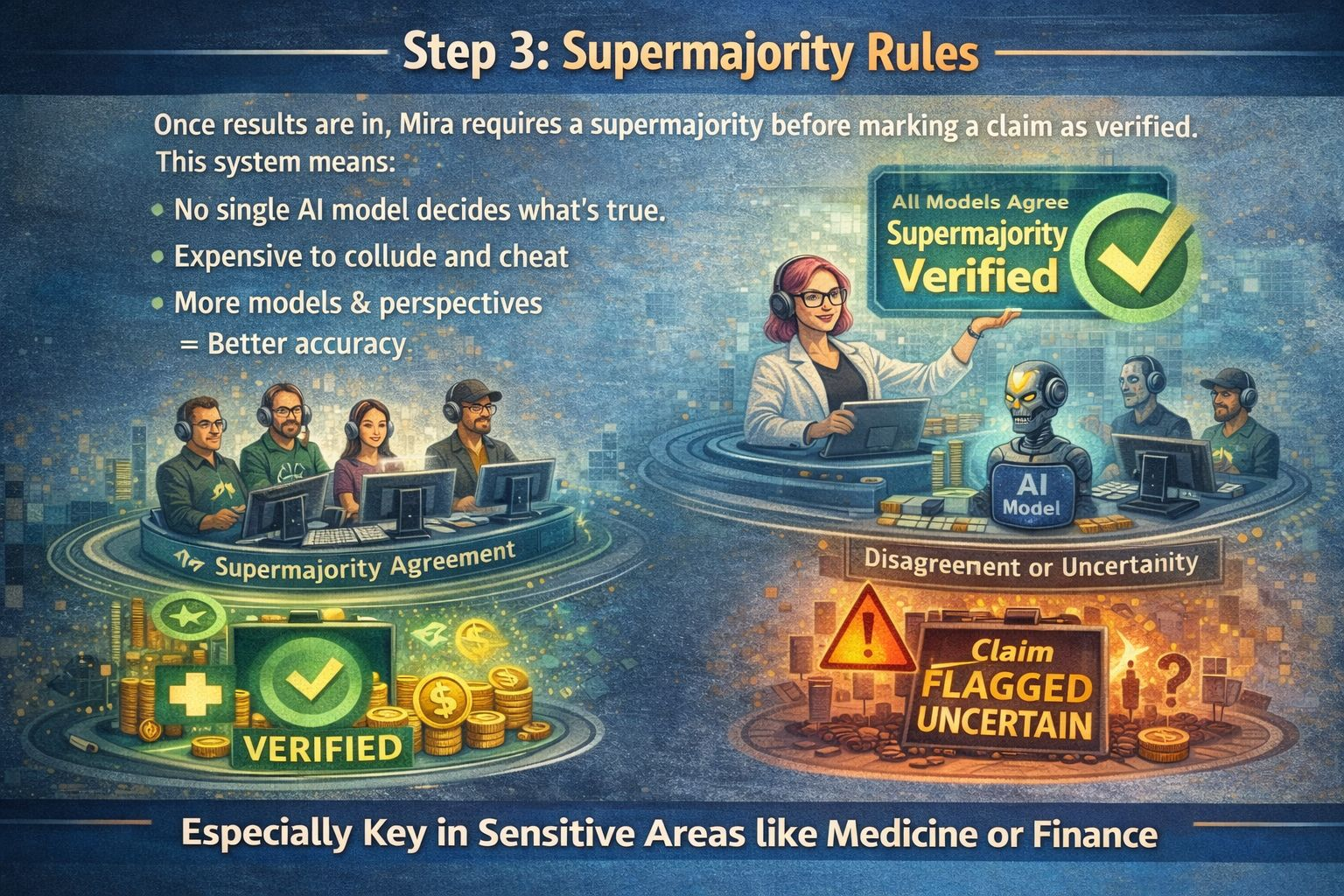

Step 3: Supermajority Rules

Once everyone’s submitted their results, Mira doesn’t just go with the majority. It needs a supermajority before marking a claim as verified.

This system means:

No single AI model gets to decide what’s true.

It’s expensive to collude and cheat.

More models and perspectives mean better accuracy.

And if the validators can’t agree, the claim doesn’t get a green light. It gets flagged as uncertain. That’s important, especially in sensitive areas like medicine or finance.
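
Here is a rough Python sketch of the supermajority rule. The post doesn't give the actual threshold, so the two-thirds value is an assumption, as are the one-validator-one-vote counting, the "rejected" outcome for a supermajority of "invalid" verdicts, and the function name `resolve`.

```python
from collections import Counter
from fractions import Fraction

# Assumed threshold; the real value is not stated in this post.
THRESHOLD = Fraction(2, 3)


def resolve(verdicts: list[str], threshold: Fraction = THRESHOLD) -> str:
    """Turn validator verdicts ("valid" / "invalid") into one outcome.

    Returns "verified" or "rejected" only when one side reaches the
    supermajority threshold; anything else is flagged "uncertain".
    """
    if not verdicts:
        return "uncertain"
    top_verdict, count = Counter(verdicts).most_common(1)[0]
    if Fraction(count, len(verdicts)) < threshold:
        return "uncertain"
    return "verified" if top_verdict == "valid" else "rejected"
```

Under these assumptions, 7 of 10 "valid" verdicts clears the bar, while 6 of 10 is a simple majority but still comes back as "uncertain".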

Why Hybrid? Why Not Just One or the Other?

Pure PoW chains just burn energy. Pure PoS chains only care about money, but sometimes you actually need expertise and real computational work.

Mira brings the best of both:

PoW: Validators must use real AI power.

PoS: Validators must risk real money.

PoW: Stops lazy spam.

PoS: Punishes cheaters.

PoW: Promotes diverse, independent validation.

PoS: Encourages validators to stick around.

With both, validators need to commit both time and money. That keeps the system honest and hard to attack.

Security: Not Easy to Mess With

If someone wants to attack the system, they have to:

Buy up serious computing power.

Stake a huge pile of tokens.

Risk losing their money if caught cheating.

Beat the supermajority threshold.

That’s a tall order. And with validators using different AI models, you don’t get stuck with one model’s blind spots. Diversity is its own kind of security.
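
For a back-of-the-envelope feel of that cost, the Python sketch below counts how many validators an attacker would need to control to hit the threshold on one claim, and how much stake that puts at risk. It assumes a two-thirds threshold, equal stakes, and one vote per validator, none of which is confirmed by this post; `validators_needed` and `stake_at_risk` are hypothetical helpers.

```python
import math
from fractions import Fraction


def validators_needed(committee_size: int, threshold: Fraction = Fraction(2, 3)) -> int:
    """Smallest number of votes that reaches the threshold share."""
    return math.ceil(threshold * committee_size)


def stake_at_risk(
    committee_size: int,
    stake_per_validator: float,
    threshold: Fraction = Fraction(2, 3),
) -> float:
    """Total collateral the attacker exposes to slashing (equal stakes assumed)."""
    return validators_needed(committee_size, threshold) * stake_per_validator
```

With 10 validators checking a claim and 100 tokens staked each, the attacker would need 7 of them and would put 700 tokens on the line, on top of paying for the computing power to run them.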

What Changes in the Real World?

Because of this hybrid consensus, Mira becomes a trust engine for all sorts of AI-powered platforms:

Financial analysis

Healthcare support

Legal research

Autonomous AI agents

Instead of trusting a single model, you trust a whole network, one that’s secured by both computing power and capital.

The Big Picture

Mira’s hybrid consensus isn’t just a tweak; it’s a new way forward for how we trust AI, and maybe for how we trust information itself.
