Introduction: Why Mira Exists at All

Let me start with a feeling most of us recognize. You ask an AI a simple question, and it answers with confidence. Sometimes it’s brilliant. Sometimes it’s wrong. And the scary part is that it can sound equally sure in both cases. That gap between confidence and correctness is what people mean when they talk about AI hallucinations and bias. Mira Network is built around one central idea: if AI is going to be used for important things, we need a way to check it that doesn’t depend on one company, one model, or one “trust me, it’s fine” authority.

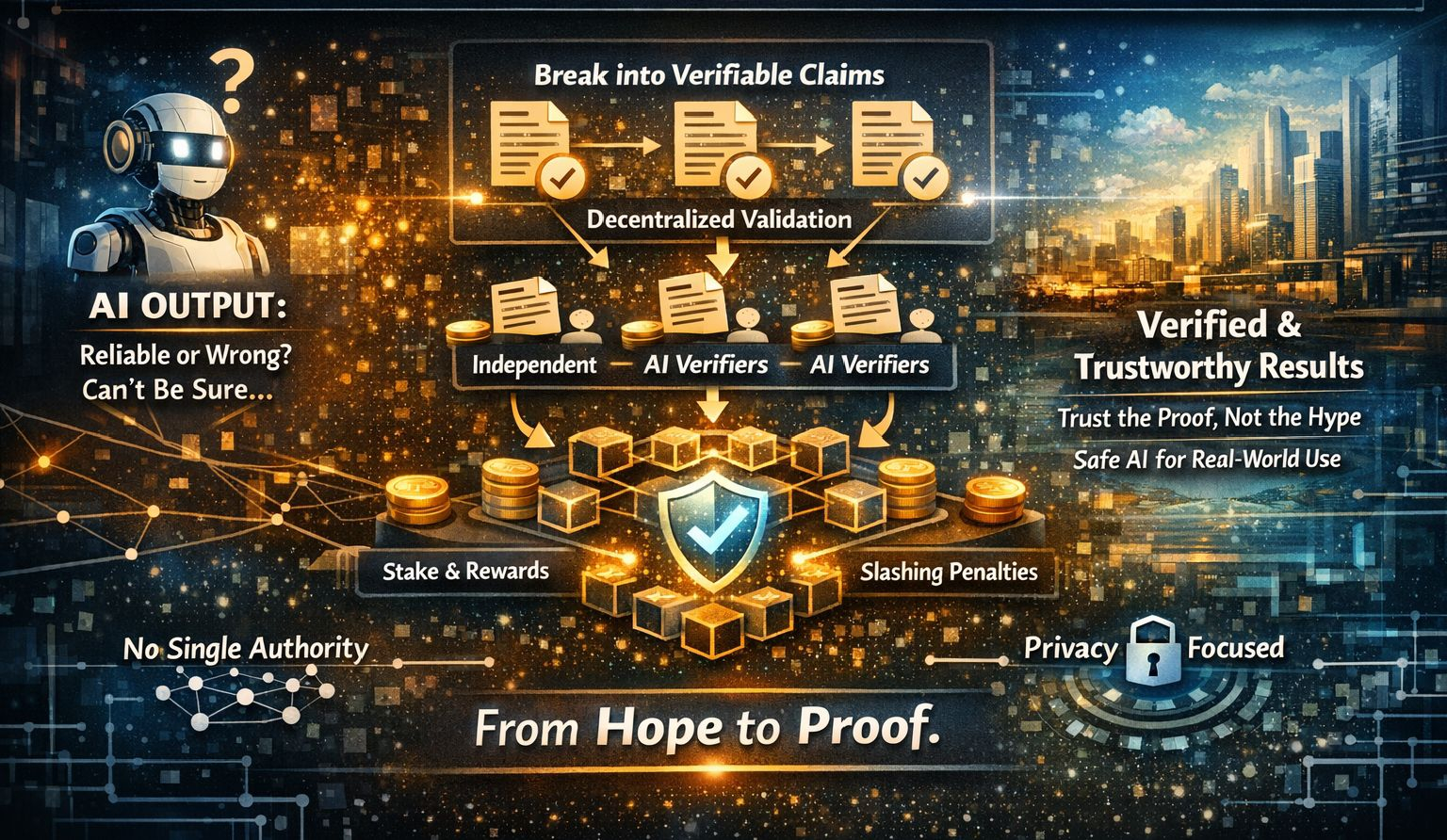

When I’m explaining Mira to a friend, I describe it like this: Mira is trying to turn AI output from “a persuasive answer” into “a checked answer.” Not checked by a single fact-checker, and not checked by the same AI that produced it, but checked by a distributed group of independent verifiers, with incentives that make honesty the best strategy most of the time. That’s the emotional heart of the project: moving from hope to proof, from vibes to verification.

The Problem Mira Is Targeting: Why Better Models Alone Don’t Fix It

It’s tempting to think the solution is simply to build smarter AI. Mira’s argument is more subtle and more human: even as models get stronger, the reliability issues don’t disappear cleanly, because different kinds of errors pull against each other. A model can be trained to be safer and more cautious, but that can also make it refuse correct answers or become overly conservative. Or it can be trained to be more helpful and confident, but that can increase the risk of hallucinating when it doesn’t know. In real life, this shows up as a painful truth: even “great” AI can still be unreliable in critical moments.

There’s also a social layer. If one organization chooses which models verify which outputs, then that organization’s choices and incentives become the system’s reality. Mira leans into a different approach: reliability through decentralized participation. In simple terms, the argument is that a hand-picked collection of models, however large, is still not the same as a network where many independent operators bring their own tools and compete to be accurate.

The Core Idea: Break Big Answers into Small Claims

Here’s the trick that makes the whole machine workable. Instead of trying to verify an entire essay or a whole complicated answer as one blob, Mira transforms content into smaller pieces called claims. A claim is a statement that can be checked more cleanly. For example, a sentence with two facts becomes two separate claims. That might sound obvious, but it matters a lot, because multiple verifiers can only agree meaningfully if they are verifying the same thing with the same context. Standardization is the difference between real consensus and a messy argument.

Think of this like turning a long story into a set of simple checkpoints. Once you do that, you can distribute those checkpoints across many verifiers and combine their results into a final verdict. If you’ve ever watched friends cross-check a rumor by comparing details rather than arguing about the whole thing at once, you already understand the vibe.
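To make the checkpoint idea concrete, here is a deliberately naive Python sketch. `Claim` and `split_into_claims` are hypothetical names, not Mira’s API, and splitting on the word "and" is a toy rule standing in for a transformation step this article describes but doesn’t specify. What matters is the shape of the output: one small, self-contained statement per checkpoint, each carrying the same shared context so every verifier judges the same thing.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement plus the context all verifiers share."""
    text: str
    context: str


def split_into_claims(sentence: str, context: str = "") -> list[Claim]:
    """Toy decomposition: split a compound sentence on ' and ' between clauses.

    A real system would need a model to do this while preserving meaning;
    this only illustrates what the output looks like.
    """
    body = sentence.strip().rstrip(".")
    parts = [p.strip() for p in re.split(r"\s+and\s+", body) if p.strip()]
    # Re-capitalize each fragment and close it as its own sentence.
    return [Claim(text=p[0].upper() + p[1:] + ".", context=context) for p in parts]


claims = split_into_claims(
    "The Earth revolves around the Sun and the Moon revolves around the Earth."
)
for c in claims:
    print(c.text)
# The Earth revolves around the Sun.
# The Moon revolves around the Earth.
```

A rule this crude would wrongly split "salt and pepper are seasonings" into two broken claims, which is exactly why the real transformation has to preserve meaning rather than just cut on keywords.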

How Mira Works Step by Step, in Everyday Language

Step 1: A user asks for verification

In Mira’s flow, a customer could be an app, an AI agent, a company, or a human using a tool. They submit content and ask the network to verify it. They also specify what kind of verification they want, because different situations need different strictness. Some use cases may want very high agreement before calling something verified, while others may accept a softer threshold.

Step 2: The content becomes verifiable claims

This is where the content is broken into those smaller checkpoints. The goal is to preserve meaning while making each claim easy to evaluate. This transformation step is the quiet engine of the whole system. Without it, the network would be stuck arguing about complex paragraphs instead of checking clear, simple statements.

Step 3: Claims are sent to independent verifier nodes

Now the network distributes claims to many nodes run by independent participants. Each node uses one or more AI models as verifiers to judge the claim. The key point is that no single model gets to be the final judge of its own work. Instead, multiple verifiers cross-check, and the network aggregates their judgments.
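The fan-out can be sketched like this, assuming each node exposes a verifier that returns true or false for a claim, and that nodes are picked at random so the submitter can’t choose who checks their work. The node IDs, `dispatch`, and the stub verifiers are hypothetical; real nodes would run actual models, and this article doesn’t say how Mira selects nodes for a claim.

```python
import random
from typing import Callable

# A verifier takes a claim and returns True (supported) or False (not supported).
Verifier = Callable[[str], bool]


def dispatch(claim: str, nodes: dict[str, Verifier], k: int,
             rng: random.Random) -> dict[str, bool]:
    """Send one claim to k distinct, randomly chosen nodes and collect their votes."""
    if k > len(nodes):
        raise ValueError("not enough nodes for the requested fan-out")
    # sorted() gives a stable order, so a seeded rng picks the same nodes each run.
    chosen = rng.sample(sorted(nodes), k)
    return {node_id: nodes[node_id](claim) for node_id in chosen}


# Hypothetical stub "models" run by different operators.
nodes: dict[str, Verifier] = {
    "node-a": lambda claim: "Sun" in claim,
    "node-b": lambda claim: "revolves" in claim,
    "node-c": lambda claim: True,  # an over-agreeable verifier
    "node-d": lambda claim: "Earth" in claim,
}

votes = dispatch("The Earth revolves around the Sun.", nodes, k=3, rng=random.Random(0))
print(votes)  # three node IDs mapped to their True/False judgments
```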

Step 4: Consensus turns many opinions into one outcome

Once enough verifiers respond, the network combines results into a consensus outcome for each claim. Depending on the threshold chosen, a claim might be treated as verified, rejected, or uncertain. The important part is that the outcome comes from distributed agreement rather than a single authority.
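Here’s one way a threshold rule could turn votes into the three outcomes described above, assuming each verifier returns a simple true/false vote. `aggregate`, `Outcome`, and `min_votes` are illustrative names; this article doesn’t give Mira’s exact rule.

```python
from enum import Enum
from fractions import Fraction


class Outcome(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    UNCERTAIN = "uncertain"


def aggregate(votes: list[bool], threshold: Fraction, min_votes: int = 3) -> Outcome:
    """Combine per-verifier True/False votes on one claim into a single outcome.

    `threshold` is the share of votes that must agree, e.g. Fraction(2, 3)
    for a two-thirds rule or Fraction(1) for unanimity.
    """
    if not Fraction(1, 2) < threshold <= 1:
        raise ValueError("threshold must be above 1/2 and at most 1")
    if len(votes) < min_votes:
        return Outcome.UNCERTAIN  # too few responses to decide anything
    yes = sum(votes)
    no = len(votes) - yes
    if yes >= threshold * len(votes):
        return Outcome.VERIFIED
    if no >= threshold * len(votes):
        return Outcome.REJECTED
    return Outcome.UNCERTAIN  # verifiers split without reaching the bar


print(aggregate([True, True, False], Fraction(2, 3)))  # Outcome.VERIFIED
print(aggregate([True, True, False], Fraction(1)))     # Outcome.UNCERTAIN
print(aggregate([False, False, False], Fraction(1)))   # Outcome.REJECTED
print(aggregate([True, False], Fraction(2, 3)))        # Outcome.UNCERTAIN
```

Requiring a threshold above one half guarantees a claim can never be both verified and rejected, and using `Fraction` keeps boundaries like exactly two-thirds from being decided by floating-point rounding.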

Step 5: A cryptographic proof is produced

After consensus, Mira produces a cryptographic certificate for the verification result. That certificate is the “receipt” that says: this result came from a real verification process and wasn’t quietly edited or forged later. This is how Mira tries to make verification portable, so another system can trust the output without trusting whoever delivered it.
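This article doesn’t specify Mira’s certificate format or signature scheme, so what follows is only a standard-library sketch of the tamper-evidence idea: hash a canonical encoding of the results and attach a keyed tag. HMAC is a stand-in here; because it is symmetric, only holders of the key can check it, whereas a receipt that any third party can verify would need an asymmetric signature such as Ed25519. `make_certificate` and `check_certificate` are hypothetical names.

```python
import hashlib
import hmac
import json


def make_certificate(results: dict[str, str], signing_key: bytes) -> dict[str, str]:
    """Bind claim outcomes to a SHA-256 digest and an HMAC tag (stand-in for a signature)."""
    # Canonical JSON: same results always produce the same bytes.
    payload = json.dumps(results, sort_keys=True, separators=(",", ":")).encode()
    digest = hashlib.sha256(payload).hexdigest()
    tag = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload.decode(), "sha256": digest, "tag": tag}


def check_certificate(cert: dict[str, str], signing_key: bytes) -> bool:
    """Recompute digest and tag; any edit to the payload makes this fail."""
    payload = cert["payload"].encode()
    expected_tag = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return (hashlib.sha256(payload).hexdigest() == cert["sha256"]
            and hmac.compare_digest(expected_tag, cert["tag"]))


key = b"demo-key-not-for-production"
cert = make_certificate({"claim-1": "verified", "claim-2": "rejected"}, key)
print(check_certificate(cert, key))  # True

# Quietly editing a result after the fact breaks the receipt.
tampered = dict(cert, payload=cert["payload"].replace("rejected", "verified"))
print(check_certificate(tampered, key))  # False
```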

Why Mira Uses Economic Incentives: The Design Logic Behind Staking and Penalties

Now we get to the part that sounds like crypto, but the intuition is simple. If you want people to verify things, you need to make honest verification profitable and dishonest verification painful. Mira is built around the idea that economic incentives can keep a large distributed network behaving well most of the time, even when participants don’t know or trust each other personally.

In practice, this usually means participants stake value to participate, earn rewards for contributing correctly, and face penalties if they repeatedly behave badly. Staking is important because it’s a psychological and economic anchor. If you have something to lose, you tend to care more. And if the system can slash dishonest behavior, it becomes much harder to profit from manipulation.
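A toy ledger shows how rewards and penalties add up over time. Everything here is an assumption: `NodeAccount`, the reward size, the 12.5% slash, and the rule that only a sustained pattern of disagreement (more than 40% of the last 20 answers) triggers a penalty, which mirrors the "repeatedly behave badly" idea rather than punishing a single miss. Agreement with consensus is also used as a proxy for "correct," which is itself a simplification.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class NodeAccount:
    """Hypothetical stake ledger for one verifier node."""
    stake: float
    reward: float = 1.0              # paid per answer that matches consensus
    slash_fraction: float = 0.125    # share of stake burned when penalized
    window: int = 20                 # how many recent answers are reviewed
    max_deviation_rate: float = 0.4  # tolerated share of disagreements
    recent: deque = field(default_factory=deque)

    def settle(self, matched_consensus: bool) -> None:
        self.recent.append(matched_consensus)
        if len(self.recent) > self.window:
            self.recent.popleft()
        if matched_consensus:
            self.stake += self.reward
        deviation_rate = self.recent.count(False) / len(self.recent)
        # Penalize a sustained pattern over a full window, not one disagreement.
        if len(self.recent) == self.window and deviation_rate > self.max_deviation_rate:
            self.stake -= self.stake * self.slash_fraction
            self.recent.clear()


honest = NodeAccount(stake=100.0)
guesser = NodeAccount(stake=100.0)
for i in range(20):
    honest.settle(True)
    guesser.settle(i % 2 == 0)  # matches consensus about half the time
print(honest.stake, guesser.stake)  # 120.0 96.25
```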

Mira also addresses a tricky challenge: some verification tasks look like multiple-choice questions. That means a lazy attacker might try random guessing, and on a yes/no claim a random guess is right about half the time without any real work. The network’s response is: don’t rely on “effort” alone. Require “skin in the game.” Over time, if a node behaves like a guesser, it should lose money. That turns cheating from a clever shortcut into a losing strategy.
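The arithmetic behind "skin in the game" is short. On a yes/no claim a guess matches half the time, so one check can’t expose a guesser, but the chance of matching every one of n checks falls as (1/2)^n. And if a wrong answer costs more than a right answer pays, guessing has a negative expected value. The reward and penalty numbers below are hypothetical; this article gives no figures.

```python
def guess_match_probability(num_options: int, num_tasks: int) -> float:
    """Chance that uniform random guessing matches the answer on every one of num_tasks."""
    return (1 / num_options) ** num_tasks


def expected_payoff_per_task(accuracy: float, reward: float, penalty: float) -> float:
    """Average gain per task when right answers earn `reward` and wrong ones cost `penalty`."""
    return accuracy * reward - (1 - accuracy) * penalty


print(guess_match_probability(2, 1))   # 0.5
print(guess_match_probability(2, 10))  # 0.0009765625
print(expected_payoff_per_task(0.5, reward=1.0, penalty=2.0))       # -0.5: a guesser loses on average
print(expected_payoff_per_task(0.95, reward=1.0, penalty=2.0) > 0)  # True: an accurate node profits
```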

Privacy: Why Mira Doesn’t Want Any One Node to See Everything

A lot of verification systems accidentally create a privacy nightmare. If verifiers see the full content, then private documents become visible to strangers. Mira tries to reduce that risk by splitting content and distributing pieces across nodes so no single node operator can reconstruct the entire original content. The goal is data minimization: give each verifier only what it needs to judge a claim, not the full context of a user’s private world.
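Here’s a minimal sketch of capped assignment, assuming a document has already been split into claims and each claim should still be checked by several nodes. The function name, the random selection, and the per-node cap are assumptions; this article describes the goal (no single operator can rebuild the whole document) but not the algorithm.

```python
import random
from collections import Counter


def assign_shards(claim_ids: list[str], node_ids: list[str], replicas: int,
                  max_per_node: int, rng: random.Random) -> dict[str, list[str]]:
    """Send each claim to `replicas` distinct nodes, while capping how many
    claims from the same document any one node receives."""
    load: Counter = Counter()
    assignment: dict[str, list[str]] = {}
    for claim in claim_ids:
        eligible = [n for n in node_ids if load[n] < max_per_node]
        if len(eligible) < replicas:
            raise ValueError("not enough nodes to respect the per-node cap")
        chosen = rng.sample(eligible, replicas)
        load.update(chosen)  # each chosen node now holds one more claim
        assignment[claim] = chosen
    return assignment


claims = [f"claim-{i}" for i in range(6)]
nodes = [f"node-{i}" for i in range(8)]
assignment = assign_shards(claims, nodes, replicas=3, max_per_node=3,
                           rng=random.Random(42))
per_node = Counter(n for chosen in assignment.values() for n in chosen)
print(max(per_node.values()))  # never more than 3 of the 6 claims
```

A cap like this limits what any one operator sees, but it doesn’t stop several operators from pooling their shards, so it complements rather than replaces the incentive design.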

There’s a practical reality here too: in the early stages of many networks, some components are more centralized. Mira’s broader idea is that decentralization can increase over time. If it becomes more distributed without breaking privacy, that strengthens the “trustless” promise. If it stays centralized, that becomes a weak point.

What Makes Mira Different From Just Asking Many Models

At first glance, you might ask: why not just query five different AI models and compare answers? That’s a fair question. Mira’s answer is that verification is not just about asking multiple models. It’s about turning verification into a structured network with incentives, reputation, standardized claim transformation, transparent consensus rules, and cryptographic proof that the process happened as described.

Without that structure, you’re mostly doing informal voting. And informal voting is easy to game, easy to bias, and hard to audit. Mira is trying to make verification into infrastructure, not a guess-and-check habit.

What Real Metrics Matter for Judging Mira’s Health

Price gets attention, but it’s not the best measure of whether the network is actually becoming useful. The deeper health signals are more practical.

One important signal is real verification demand. Are builders actually using the network because it reduces costly mistakes? If real apps pay to verify results, that’s the strongest sign the product is solving a real problem.

Another signal is verifier diversity. Mira’s reliability story depends on independent operators and genuinely diverse verification models. If verification power becomes concentrated in a few hands, the network starts to lose the main reason it exists.

Another key signal is stake distribution and security. If the security model depends on honest stake being dominant, then you want to see healthy participation and a network that can withstand attempted manipulation. A system that looks secure on paper but isn’t secure in practice is fragile.
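One common way to put a number on stake concentration is the Nakamoto coefficient: the smallest number of operators whose combined stake exceeds half the total. A low value means only a few parties would need to coordinate. The operator names and stake amounts below are made up for illustration.

```python
def nakamoto_coefficient(stakes: dict[str, float], share: float = 0.5) -> int:
    """Smallest number of operators whose combined stake exceeds `share` of the total."""
    total = sum(stakes.values())
    running = 0.0
    # Count from the largest stakeholder down.
    for count, stake in enumerate(sorted(stakes.values(), reverse=True), start=1):
        running += stake
        if running > share * total:
            return count
    return len(stakes)  # no prefix exceeded the share, e.g. share >= 1 or zero total stake


concentrated = {"op-a": 60.0, "op-b": 20.0, "op-c": 10.0, "op-d": 10.0}
spread = {f"op-{i}": 10.0 for i in range(10)}
print(nakamoto_coefficient(concentrated))  # 1: one operator controls a majority
print(nakamoto_coefficient(spread))        # 6: six operators must coordinate
```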

You also watch speed and cost. If verification is too slow or too expensive, most real-time AI use cases won’t bother. The best future for Mira is one where verification becomes fast enough and cheap enough that it feels normal, like a safety check you barely notice.

Finally, you watch adoption paths. Are developers integrating it into tools that matter? Are there real workflows built around it? Are there signs of long-term commitment? We’re seeing a broader shift in the world toward caring about AI reliability, but each project has to prove it can deliver reliability in practice.

Main Risks and Weaknesses: What Could Go Wrong

The first risk is collusion. If enough verifiers coordinate, they could try to push consensus toward a false result. Incentives and staking can make collusion expensive, but it’s still a real risk. Any consensus system must constantly defend against coordinated manipulation.
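To see why random selection and larger verifier panels make collusion expensive, here is the hypergeometric probability that a randomly drawn panel ends up with a colluding majority. It assumes a fixed pool, uniform selection without replacement, and colluders who can’t choose to be picked; this article doesn’t describe how Mira forms panels, and stake-weighted selection would change the numbers.

```python
from math import comb


def capture_probability(pool: int, colluders: int, panel: int, needed: int) -> float:
    """Chance that a panel drawn uniformly without replacement from `pool`
    verifiers contains at least `needed` colluders (hypergeometric tail)."""
    favorable = sum(
        comb(colluders, j) * comb(pool - colluders, panel - j)
        for j in range(needed, min(colluders, panel) + 1)
    )
    return favorable / comb(pool, panel)


# 20 colluders hiding among 100 verifiers, needing a simple majority of the panel.
print(round(capture_probability(100, 20, panel=5, needed=3), 3))  # 0.053
print(capture_probability(100, 20, panel=15, needed=8) < 0.01)     # True
```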

The second risk is model monoculture. If the network ends up relying on similar models trained on similar data, then diversity becomes an illusion. The system could amplify shared blind spots instead of reducing them. Mira’s promise depends on sustaining true variety and independence.
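A short calculation shows why monoculture matters. If five verifiers each err independently 20% of the time, a majority vote is wrong only about 6% of the time; if they are effectively the same model and err together, the vote is wrong 20% of the time, no better than a single verifier. The 20% error rate is an arbitrary illustration, and real verifiers fall somewhere between these two extremes.

```python
from math import comb


def majority_error_independent(n: int, p: float) -> float:
    """Chance that a majority of n verifiers (n odd) is wrong when each errs
    independently with probability p (binomial tail)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


def majority_error_identical(p: float) -> float:
    """If all verifiers are effectively the same model, they are wrong together."""
    return p


print(round(majority_error_independent(5, 0.2), 5))  # 0.05792
print(majority_error_identical(0.2))                 # 0.2
```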

The third risk is early centralization. If key parts of the pipeline remain centralized for too long, the “trustless” story weakens. Many networks struggle with this transition. It’s not a moral failing; it’s a hard engineering and coordination problem.

The fourth risk is privacy leakage. Even with sharding, claims can reveal sensitive meaning. And repeated verification patterns can expose more than intended. Privacy is not something you “solve once.” It’s something you protect continuously.

The fifth risk is incentive mismatch. If rewards are too low, honest participants won’t show up. If rewards are too high without real demand, the network can become dependent on subsidies and speculation. A healthy network needs real customers who pay for real value.

A Realistic Future: What Mira Could Become

The optimistic but realistic future for Mira is not that it becomes a magical truth machine. The realistic future is that it becomes a useful layer of infrastructure for AI systems that need reliability. First, it could become the verification step for agents that take actions, such as sending messages, making recommendations, or handling sensitive workflows. Then it could evolve toward more advanced forms of “verified generation,” where outputs are designed from the beginning to be easier to check and certify.

In the longer run, if the network is widely used, something quietly powerful could happen: verification stops being an optional add-on and becomes normal. Like seatbelts. Like locks. Like checksums. People don’t use these because they’re exciting. They use them because they make life safer and calmer.

And there’s a human element here that matters. Reliability doesn’t only protect money or data. It protects confidence. It reduces anxiety. It makes it easier to use powerful tools without feeling like you’re standing on a trapdoor.

A Note About Exchanges

If you ever hear about trading the token, keep it simple and careful. If you need to mention an exchange at all, the only one worth naming here is Binance. But the deeper point is this: the real success of Mira won’t be measured by where it trades. It will be measured by whether people actually rely on it to verify and certify AI output in the real world.

Closing: Calm Hope, Not Hype

There’s a reason projects like Mira exist now. The world is trying to use AI for serious things, and the old approach of trusting whatever the model says is starting to feel irresponsible. Mira is one attempt to answer that responsibly, by building a network where answers can be checked, certified, and audited without handing all power to a single gatekeeper.

I’m not asking you to believe in perfection. I’m saying there’s something encouraging about the direction. When humans build systems that reward honesty, punish manipulation, and respect privacy, we’re building toward a world where powerful technology feels less frightening and more supportive. Mira’s builders are trying to make AI output something you can lean on, not something you always second-guess.

If you take one idea from this, let it be gentle: trust doesn’t have to be blind. Trust can be built. Trust can be earned. And when we put verification into the foundations, the future doesn’t feel like a gamble. It feels like a path. We’re seeing that shift begin, and it leaves room for steady courage, calm hope, and the motivation to keep building wisely.

@Mira - Trust Layer of AI #Mira $MIRA