When I first heard about Mira, I’ll be honest, I thought it was just another crypto project trying to sound futuristic. “AI verification at Layer 1” felt like one of those phrases that looks powerful on paper but doesn’t mean much in reality. But the deeper I looked, the more I realized they’re actually trying to solve something very real, something that’s quietly becoming one of the biggest problems of our time.

We’re living in a world where artificial intelligence can write essays, generate research, summarize legal documents, create code, and even give medical-style explanations in seconds. The speed is impressive. The confidence is convincing. But the accuracy? That’s where things get complicated. AI doesn’t just produce brilliance. It also produces mistakes, hallucinations, and subtle errors that are hard to detect. If it becomes normal for machines to generate knowledge, then naturally we need machines to help verify that knowledge too. That’s the simple but powerful idea behind Mira.

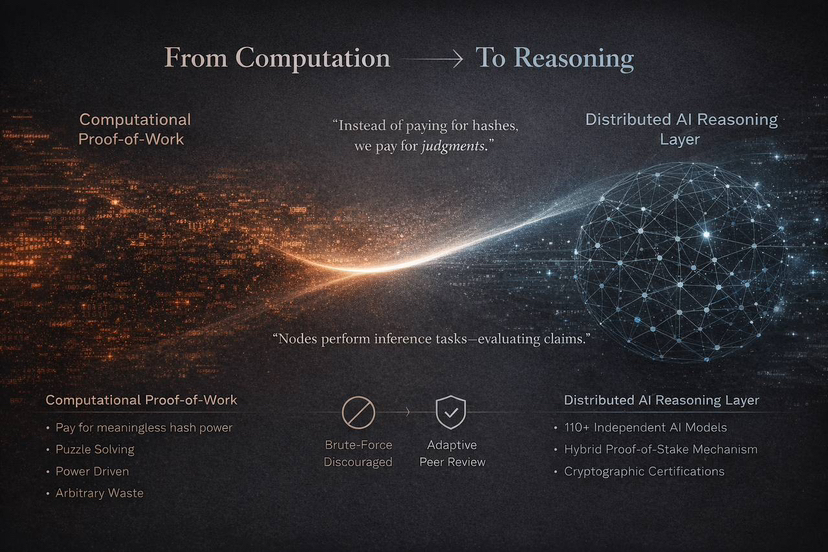

Traditional blockchains like Bitcoin secure their networks using proof-of-work. Miners race to find a block hash below a network-set target, which in practice means trying enormous numbers of guesses. That search is intentionally hard, but it doesn’t create useful knowledge. It exists to make cheating expensive. Mira looks at that system and asks a different question. Instead of spending energy solving meaningless puzzles, what if that same energy could be used to check facts, verify claims, and evaluate AI outputs? That shift sounds small at first, but it changes the entire philosophy of what blockchain work can mean.

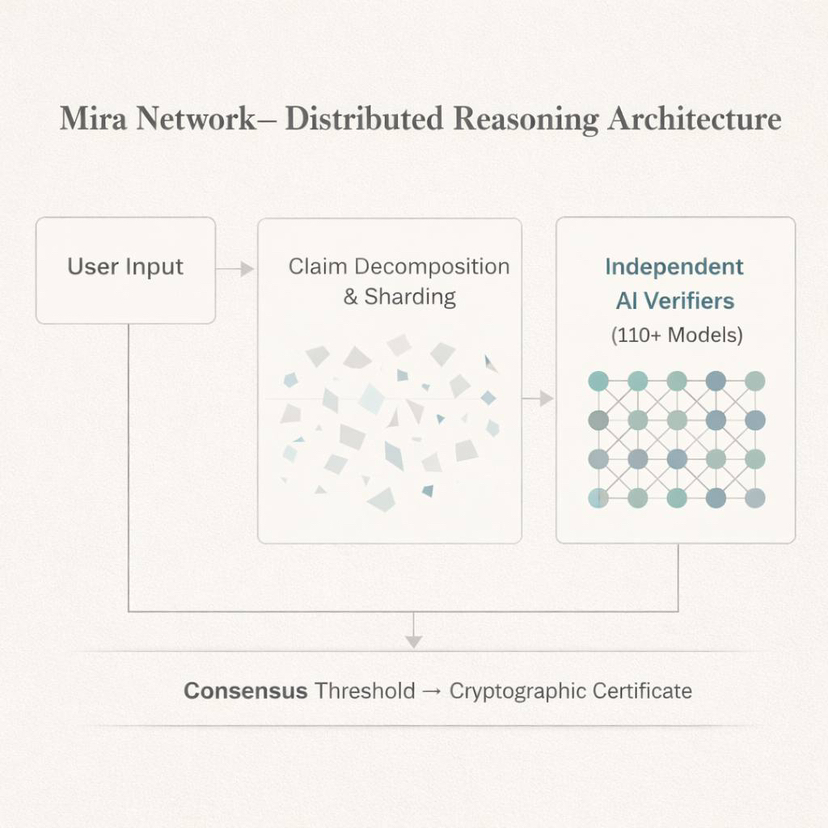

In Mira’s system, when content is submitted, it isn’t just stored or hashed. It’s broken into smaller claims that can be evaluated separately. These claims are distributed across independent nodes in the network. Each node runs AI models that analyze the claim and return a judgment. Some models may specialize in legal reasoning. Others might focus on technical accuracy or medical consistency. The network then gathers these independent responses and calculates a level of agreement. Once enough consensus is reached, it produces a cryptographic certificate showing how many models participated and how confident the system is in the result.
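
To make that flow concrete for myself, I wrote a minimal sketch of it. Everything here is an assumption for illustration: the function names, the sentence-based claim splitting, the toy "models", the two-thirds threshold, and the use of a plain SHA-256 digest in place of whatever signed certificate the real network issues.

```python
import hashlib
import json
from collections import Counter

# Hypothetical stand-in for claim extraction: one claim per sentence.
def split_into_claims(text):
    return [s.strip() for s in text.split(".") if s.strip()]

# Toy "models": one consults a tiny fact list, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}

def careful_model(claim):
    return "valid" if claim in KNOWN_FACTS else "invalid"

def sloppy_model(claim):
    return "valid"

def verify_claim(claim, models, threshold=2 / 3):
    # Collect one independent vote per model.
    votes = [model(claim) for model in models]
    verdict, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    record = {
        "claim": claim,
        "verdict": verdict if agreement >= threshold else "no_consensus",
        "agreement": round(agreement, 3),
        "models": len(votes),
    }
    # A digest over the record, standing in for a real signed certificate.
    payload = json.dumps(record, sort_keys=True).encode()
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record

text = "Paris is the capital of France. Berlin is the capital of Spain."
models = [careful_model, careful_model, sloppy_model]
certificates = [verify_claim(c, models) for c in split_into_claims(text)]

for cert in certificates:
    print(cert["claim"], "->", cert["verdict"], cert["agreement"])
```

The second claim still gets one "valid" vote from the sloppy model, but the two careful votes carry it to "invalid" with an agreement of 0.667, and the record shows how many models took part.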

When I think about it, it feels like automated peer review. Instead of sending a paper to human reviewers who might take weeks, the network sends claims to machine reviewers and gets feedback at machine speed. The nodes aren’t competing on who has the biggest hardware setup. They’re contributing reasoning. And that’s an important difference.

Of course, any system that involves distributed participants needs economic incentives. Mira uses staking to align behavior. Validators deposit tokens as collateral, and if they act dishonestly or consistently provide poor-quality verification, they risk losing part of that stake. This creates accountability. If I’m running a node, I’m not just clicking approve or reject randomly. I’m financially exposed. That design encourages quality over volume.
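
Here is a rough sketch of why random clicking doesn’t pay under a design like this. The numbers and names are my own assumptions (a flat reward of 1 token, a 10% slash, slashing on any single disagreement with consensus); the real network penalizes dishonest or consistently poor work, and its actual parameters may look quite different.

```python
from dataclasses import dataclass

@dataclass
class Validator:
    name: str
    stake: float

def settle_round(validators, votes, consensus, slash_rate=0.1, reward=1.0):
    # Reward validators that matched consensus, slash the ones that did not.
    for v in validators:
        if votes[v.name] == consensus:
            v.stake += reward
        else:
            v.stake -= v.stake * slash_rate
    return validators

def expected_gain(p_correct, stake, slash_rate=0.1, reward=1.0):
    # Expected change in stake for one round, given the chance of matching consensus.
    return p_correct * reward - (1 - p_correct) * slash_rate * stake

alice = Validator("alice", 100.0)
bob = Validator("bob", 100.0)
settle_round([alice, bob], {"alice": "valid", "bob": "invalid"}, consensus="valid")
print(alice.stake, bob.stake)

# A coin-flipping validator with 100 tokens staked loses money on average.
print(expected_gain(0.5, 100.0))
# A validator that matches consensus 95% of the time comes out ahead.
print(expected_gain(0.95, 100.0))
```

With these made-up parameters, guessing at random costs about 4.5 tokens per round on average, while a validator that is right 95% of the time earns a small positive return.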

The architecture itself is built for scale. Mira uses sharding, which means claims are distributed randomly across different segments of the network. No single node sees everything. This improves both scalability and privacy. The system currently runs on Base, benefiting from lower fees while still being connected to the broader Ethereum ecosystem. It also integrates across chains like Ethereum and Solana, and relies on decentralized storage layers such as Irys to keep verification records permanent. They’re clearly designing this as infrastructure, not as a closed environment.
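
The random distribution part is easy to picture with a small sketch. This is only an illustration under my own assumptions: the function name, three reviewers per claim, and Python’s seeded `random` module standing in for whatever randomness source a real network would use.

```python
import random
from collections import Counter

def assign_claims(claims, nodes, reviewers_per_claim=3, seed=None):
    # Pick a distinct random subset of nodes for each claim.
    rng = random.Random(seed)
    return [rng.sample(nodes, reviewers_per_claim) for _ in claims]

nodes = [f"node-{i}" for i in range(10)]
claims = ["claim A", "claim B", "claim C", "claim D"]
assignment = assign_claims(claims, nodes, reviewers_per_claim=3, seed=42)

# How many claims each node ended up reviewing.
workload = Counter(node for reviewers in assignment for node in reviewers)

for claim, reviewers in zip(claims, assignment):
    print(claim, "->", reviewers)
print(dict(workload))
```

With many nodes and many claims, each node ends up holding only a small slice of any one submission, which is where the privacy benefit comes from. With very few nodes, a single node could still draw every claim by chance.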

From a developer’s perspective, Mira tries to remove complexity. Instead of integrating multiple AI APIs separately, developers can use a unified SDK that manages routing and model coordination. This lowers the barrier to building applications that require verified outputs. If I’m creating a chatbot or research assistant, I can embed verification directly into the workflow instead of trusting raw generation blindly. That’s powerful, especially as AI applications become more embedded in everyday tools.

But I also think about the tradeoffs. Distributed reasoning takes time. It can’t always be instant. If a query requires multiple models to evaluate it independently, latency increases. Mira addresses this with caching and optimization, but some delay is unavoidable. The real question becomes whether users value higher confidence enough to accept slightly slower responses. If trust becomes more important than speed, this tradeoff makes sense.
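
I don’t know how Mira’s caching works internally, so treat this as a generic sketch of the idea: if the same claim has already been verified, reuse the stored result instead of asking every model again. The class name, the normalization rule, and the `slow_verify` placeholder are all hypothetical.

```python
import hashlib

class VerificationCache:
    def __init__(self, verify_fn):
        self._verify_fn = verify_fn
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(claim):
        # Normalize case and whitespace so trivial variants share one entry.
        normalized = " ".join(claim.lower().split())
        return hashlib.sha256(normalized.encode()).hexdigest()

    def verify(self, claim):
        key = self._key(claim)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._verify_fn(claim)
        self._store[key] = result
        return result

calls = []

def slow_verify(claim):
    # Placeholder for the expensive multi-model verification step.
    calls.append(claim)
    return {"claim": claim, "verdict": "valid"}

cache = VerificationCache(slow_verify)
first = cache.verify("Water boils at 100 C at sea level")
second = cache.verify("  water boils at 100 C   at sea level ")
print(cache.hits, cache.misses, len(calls))
```

Only the first request pays the full verification cost. The catch is that a cache like this helps with repeated claims only; a genuinely new claim still has to wait for the models.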

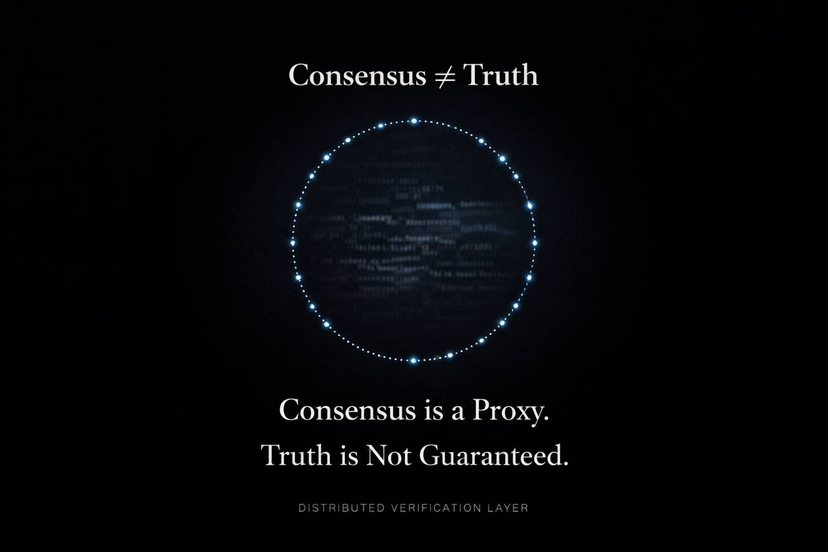

There are also deeper concerns. Consensus does not automatically equal truth. If multiple models are trained on similar datasets, they may share similar biases. Correlated errors are possible. Diversity among models reduces this risk, and random sharding makes coordinated manipulation harder, but neither eliminates it entirely. And like any token-based network, sustainability depends on incentives. Running advanced AI models requires significant computing resources. If rewards fall below operating costs, validators might leave. That’s an economic reality they’ll need to manage carefully.
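
The correlated-error point is worth a quick calculation. Assuming, purely for illustration, five models that are each wrong 10% of the time, the sketch below compares the chance that a majority is wrong when their errors are fully independent against the case where they all fail together.

```python
from math import comb

def majority_wrong_prob(n, p):
    # Probability that more than half of n independent verifiers are wrong,
    # when each one errs with probability p (binomial tail).
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))

n, p = 5, 0.1
independent = majority_wrong_prob(n, p)
fully_correlated = p  # if all models share the same blind spot, they fail as one

print(f"independent errors:      {independent:.5f}")  # 0.00856
print(f"fully correlated errors: {fully_correlated:.5f}")  # 0.10000
```

Independence takes the majority error rate from 10% down to under 1%. If the models share the same blind spots, adding more of them buys nothing, which is why agreement alone isn’t the same as truth.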

Regulation is another unknown. When a network verifies claims that could influence financial, medical, or legal decisions, questions about responsibility naturally arise. Who is accountable if something verified turns out to be wrong? The validators? The developers? The network itself? These aren’t easy questions, and they won’t disappear as adoption grows.

Still, I can’t ignore the bigger picture. We’re moving toward a world where AI generates more information than humans can manually review. Verification cannot stay manual if generation is automated. If verifying AI outputs before relying on them becomes standard practice, then what we’re seeing now may be the early shape of a new digital infrastructure layer.

Mira isn’t just trying to be another blockchain with a token. They’re experimenting with the idea that trust itself can be decentralized and programmable. That’s ambitious. It’s risky. But it’s also necessary.

When I step back, I don’t just see technology. I see an attempt to answer a fundamental question of our era. If machines are going to speak, write, analyze, and advise us, then who ensures they’re right? Maybe the answer isn’t one authority, one model, or one company. Maybe it’s a network.

And maybe what Mira is really building isn’t just verification software. Maybe it’s a system that forces us to rethink how we define truth in an age where intelligence is no longer purely human.