Completion metrics fooled me for about eight weeks.

The dashboards looked perfect. Throughput up. Completion rate up. Queue latency down.

But one number kept drifting in the wrong direction.

My operator score.

Nothing was failing. Tasks were completing.

But verification challenges were arriving later and settling differently than expected.

At first it looked like noise.

Then it started looking like a pattern.

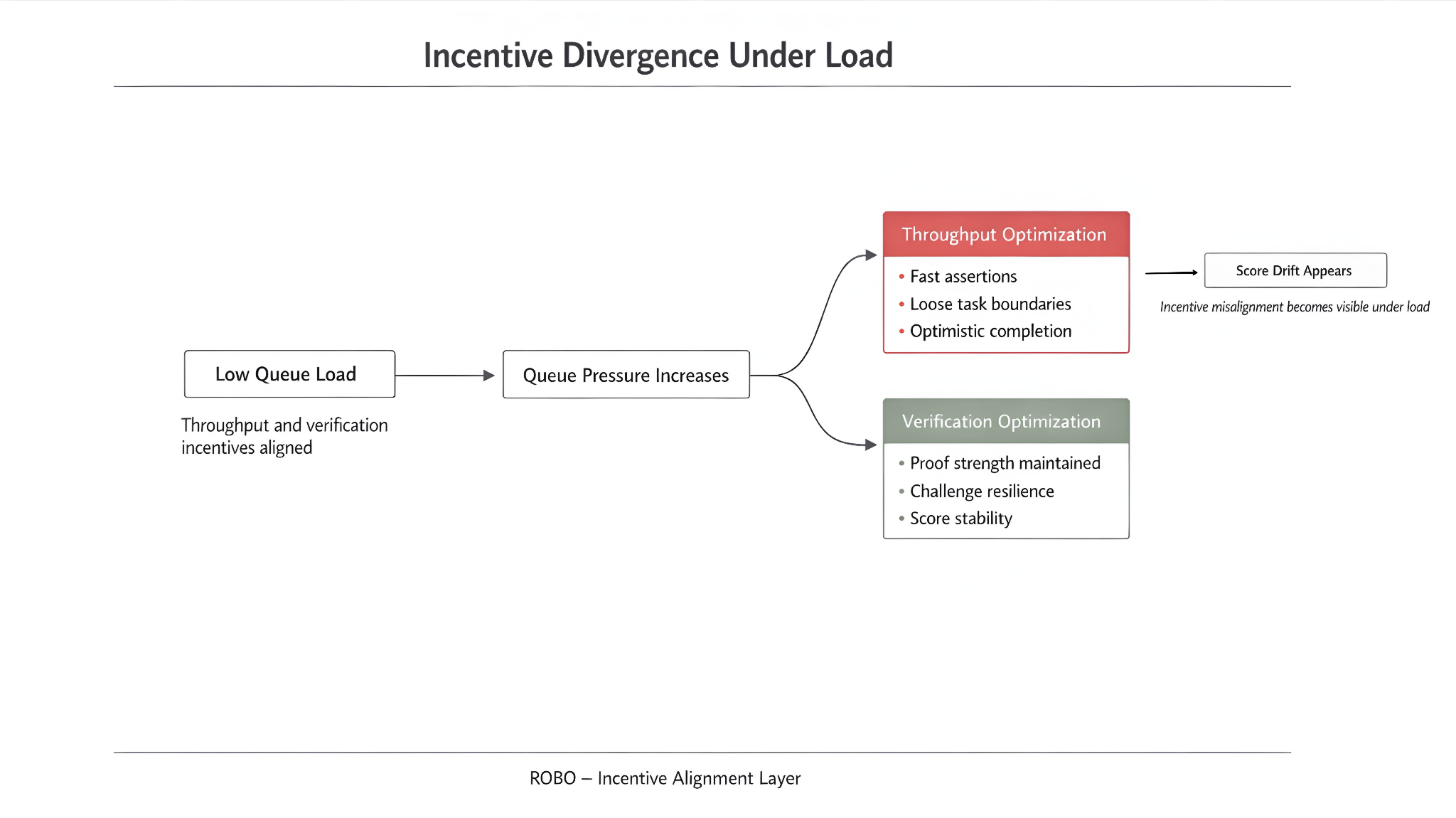

That was when it clicked. The integration and the protocol wanted different things.

Throughput cares about how many outcomes you produce.

Verification cares about whether those outcomes survive challenge.

When the queue is light, those incentives point in the same direction.

When the queue gets busy, they quietly diverge.

The divergence doesn’t announce itself.

It shows up as score drift.

I think about the cost in three places.

Verification pressure.

Score decay.

And something I started calling optimistic completion.

Verification pressure appears first.

When operators are rewarded for throughput, verification overhead becomes friction to minimize.

Task boundaries loosen. Assertions arrive faster. Challenges become someone else’s problem.

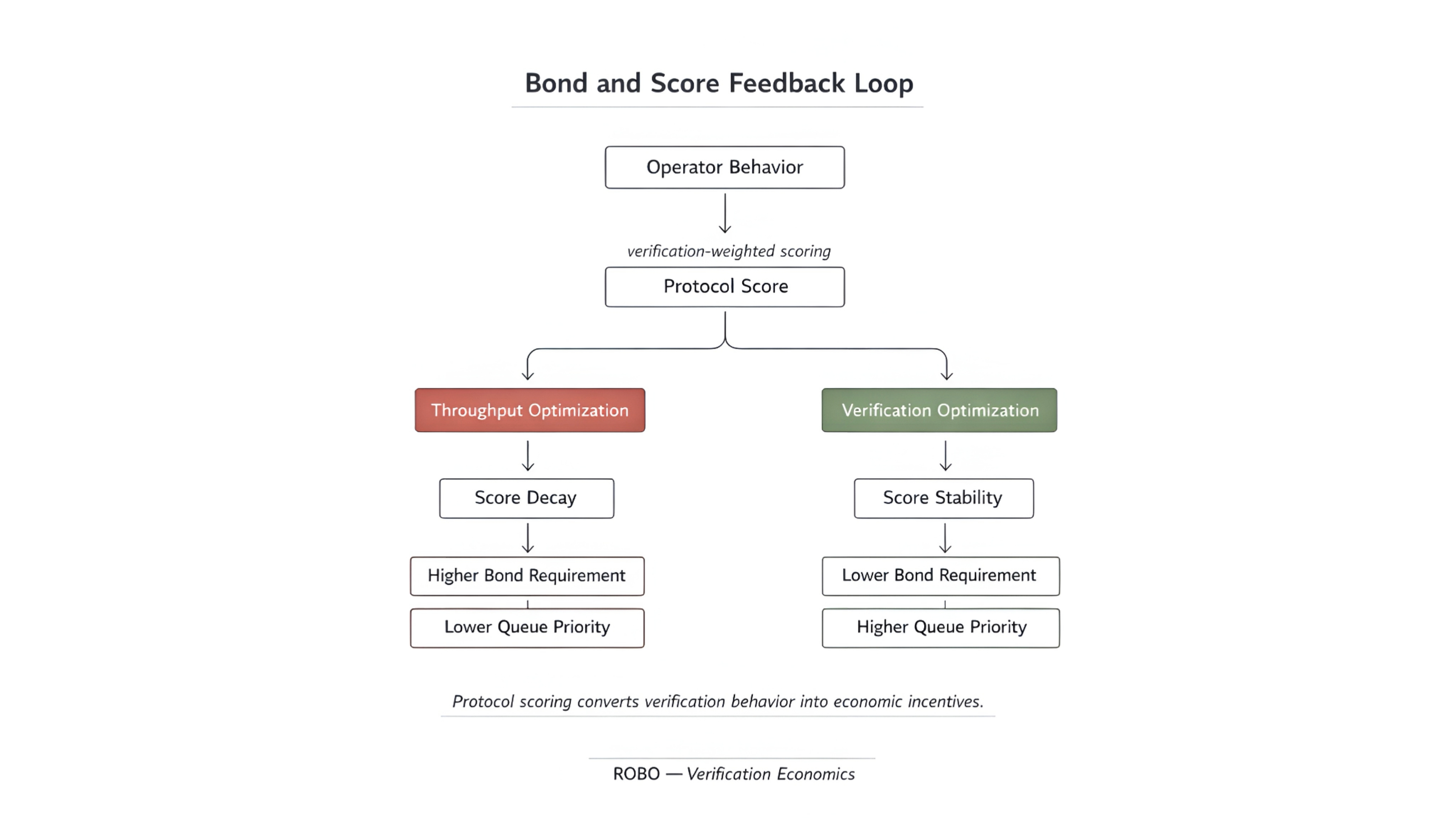

Score decay appears second.

Protocol scores that weight verification quality drift slowly when throughput becomes the default optimization.

Not dramatically. A fraction here. A point there.

Most operators notice only when queue access or bond requirements change.

Optimistic completion is the third place the cost appears.

Tasks complete in ways that look verified without absorbing verification cost.

Loose claim definitions. Fast assertion cycles. Minimum viable proof submission.

Everything technically valid. Nothing particularly resilient.

A fast success that only looks verified is not speed.

It’s deferred liability with a clean audit trail.

Only late in the analysis does the token matter.

$ROBO doesn’t fix misalignment by existing.

It only works if bond mechanics and scoring rules make verification-weighted behavior the rational choice when the network is busy.

That’s the test.

Pick a busy week.

Compare scores between throughput-optimized integrations and verification-weighted ones.

Watch which operators get queue priority and lower bond requirements.

If throughput wins, the network is rewarding optimistic completion.

If verification wins, the incentives are doing their job.
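If you want to run that busy-week test yourself, here is a minimal sketch of the comparison. Everything in it is an assumption: the `OperatorSnapshot` record, its fields, and the idea of tallying one vote per metric (score, queue priority, bond) are hypothetical illustrations, not how $ROBO or any protocol actually exposes operator data.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class OperatorSnapshot:
    # Hypothetical record for one operator over one busy week.
    style: str        # "throughput" or "verification" (integration style)
    score: float      # operator score at week's end (higher is better)
    priority: float   # queue priority (higher is better)
    bond: float       # bond requirement (lower is better)

def _sign(x: float) -> int:
    # Returns -1, 0 or +1 depending on the sign of x.
    return (x > 0) - (x < 0)

def busy_week_verdict(snapshots: list[OperatorSnapshot]) -> str:
    """Which cohort did the network reward during a busy week?"""
    tp = [s for s in snapshots if s.style == "throughput"]
    vw = [s for s in snapshots if s.style == "verification"]
    if not tp or not vw:
        return "insufficient data"

    def gap(field: str) -> float:
        # Verification cohort mean minus throughput cohort mean.
        return mean(getattr(s, field) for s in vw) - mean(getattr(s, field) for s in tp)

    # One vote per metric; a positive total favors verification-weighted operators.
    # A lower bond is better, so its sign is flipped.
    votes = _sign(gap("score")) + _sign(gap("priority")) - _sign(gap("bond"))
    if votes > 0:
        return "verification wins"
    if votes < 0:
        return "throughput wins"
    return "no clear winner"
```

A simple majority across the three signals is just one way to read the result. A real analysis would want more than cohort means, and it would need to control for operators whose task mix differs.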

I haven’t watched $ROBO through enough busy weeks to answer that cleanly.

But the fact that the bond mechanics exist at all suggests someone thought about this problem before it became expensive.

That matters more than most people give it credit for.

Incentive design is a load-bearing wall. Nobody notices it until the weight shifts.