I reviewed a team's AI pipeline last week and noticed something odd. Their system flagged an output as "verified," but three engineers still argued about which sentence was wrong. The verification label passed. The trust did not. @Mira - Trust Layer of AI is building around this exact failure mode.

The Isolation Problem No One Talks About

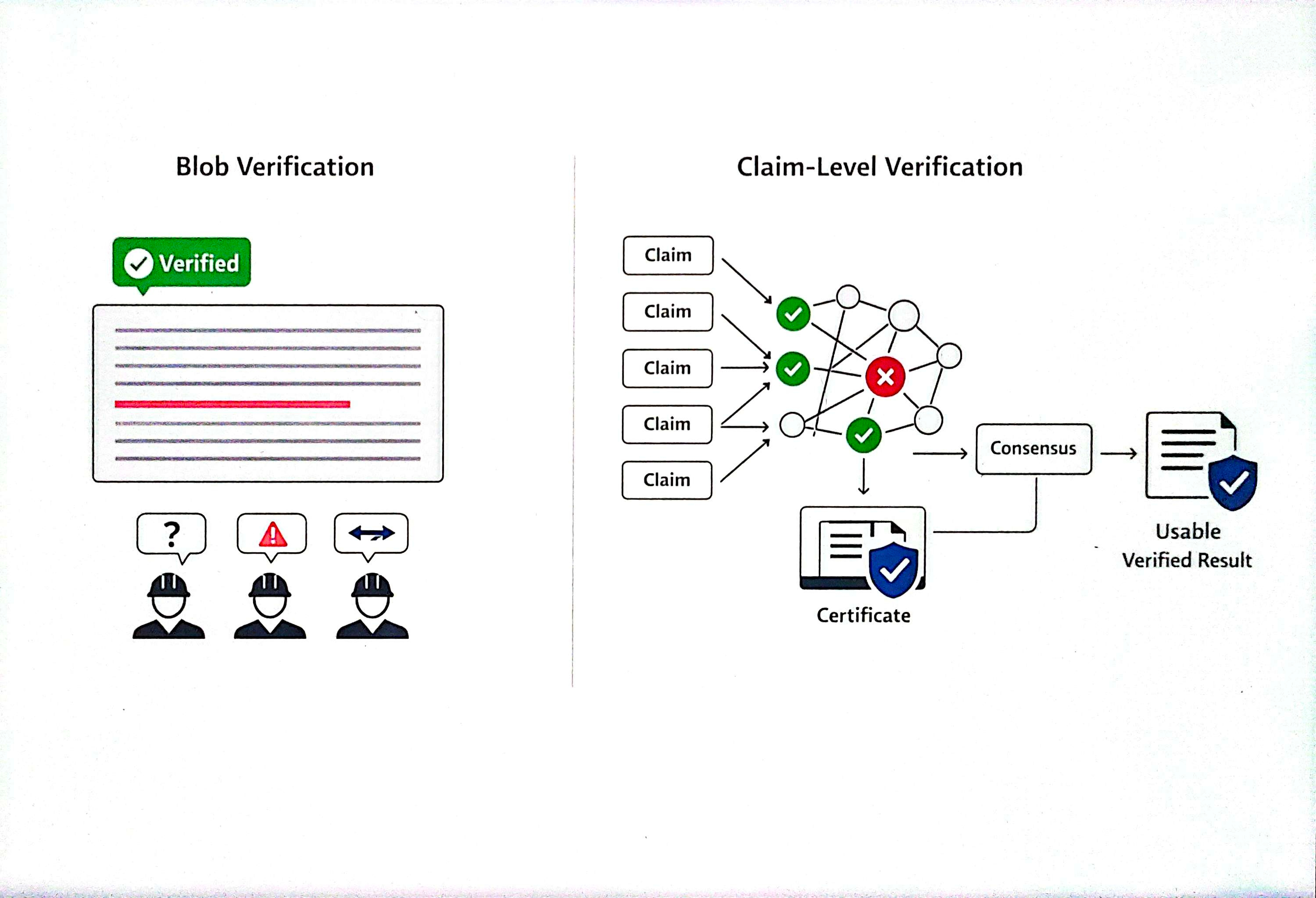

Most AI verification systems check outputs as a single blob. A paragraph goes in, a confidence score comes out. But hallucinations show up as one wrong sentence buried inside plausible text. The system sees green. The operator sees a problem to solve manually. Mira's whitepaper describes a different approach: break complex output into independently verifiable statements, route each through distributed nodes, aggregate results through consensus, and return a cryptographic certificate.
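The four steps in that description (split, route, aggregate, certify) can be sketched in a few lines. This is only an illustration under loud assumptions: the function names, the naive sentence splitter, the two-thirds threshold, and the SHA-256 digest standing in for a certificate are all hypothetical, and none of it is Mira's actual protocol or SDK.

```python
import hashlib
import json
import re
from typing import Callable, Dict, List

# Hypothetical verifier type: takes one claim, returns True if it accepts it.
Verifier = Callable[[str], bool]

def split_into_claims(text: str) -> List[str]:
    # Naive sentence split; a real transformation layer would be far more careful.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def verify_claim(claim: str, verifiers: List[Verifier], threshold: float) -> Dict:
    if not verifiers:
        raise ValueError("at least one verifier is required")
    # Each verifier votes independently on this single claim.
    votes = [bool(v(claim)) for v in verifiers]
    approvals = sum(votes)
    return {
        "claim": claim,
        "approvals": approvals,
        "verifiers": len(votes),
        "passed": approvals / len(votes) >= threshold,
    }

def certify(text: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict:
    results = [verify_claim(c, verifiers, threshold) for c in split_into_claims(text)]
    # Stand-in for a certificate: a digest over the per-claim results.
    # A real certificate would be signed, not merely hashed.
    payload = json.dumps({"threshold": threshold, "results": results}, sort_keys=True)
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return {"threshold": threshold, "results": results, "digest": digest}

def make_verifier(rejected: set) -> Verifier:
    # Toy verifier that rejects a fixed set of claims and accepts everything else.
    return lambda claim: claim not in rejected
```

The point of the sketch is the shape of the result: each sentence carries its own pass or fail record, so a single bad claim is named instead of being averaged into a paragraph-level score.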

Where Sizing the Unit Gets Hard

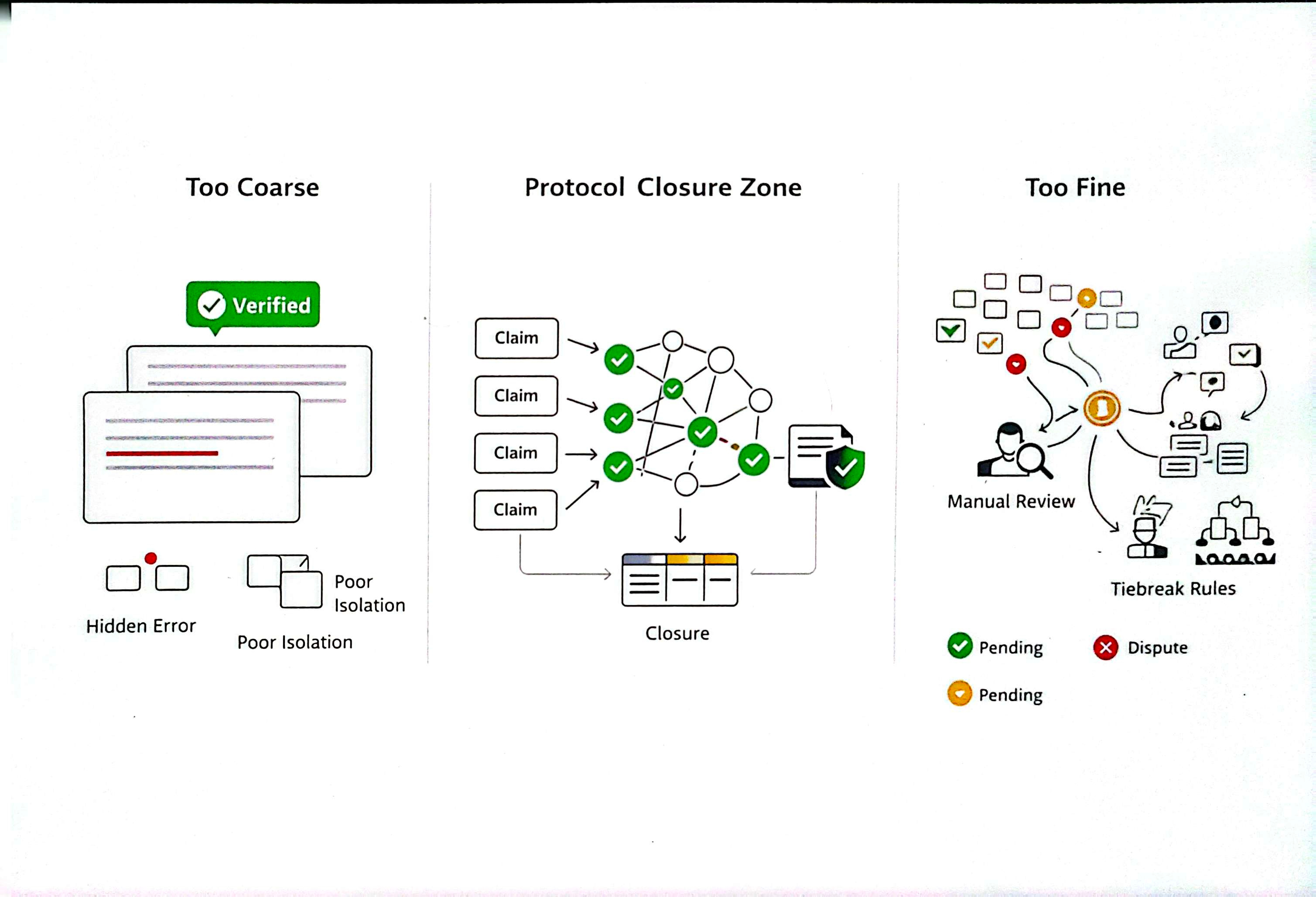

Here is the challenge. If your verification boundaries are too large, errors hide inside verified blocks. If they are too small, you create a coordination bottleneck where pending states pile up and operators fall back to manual review. Mira treats this transformation layer as a first-class protocol responsibility. The network handles distribution, consensus management, and certificate issuance. This pushes the shared cost into the protocol once instead of forcing every integrator to rebuild private extraction logic.

Why the Economics Deserve a Red Flag Review

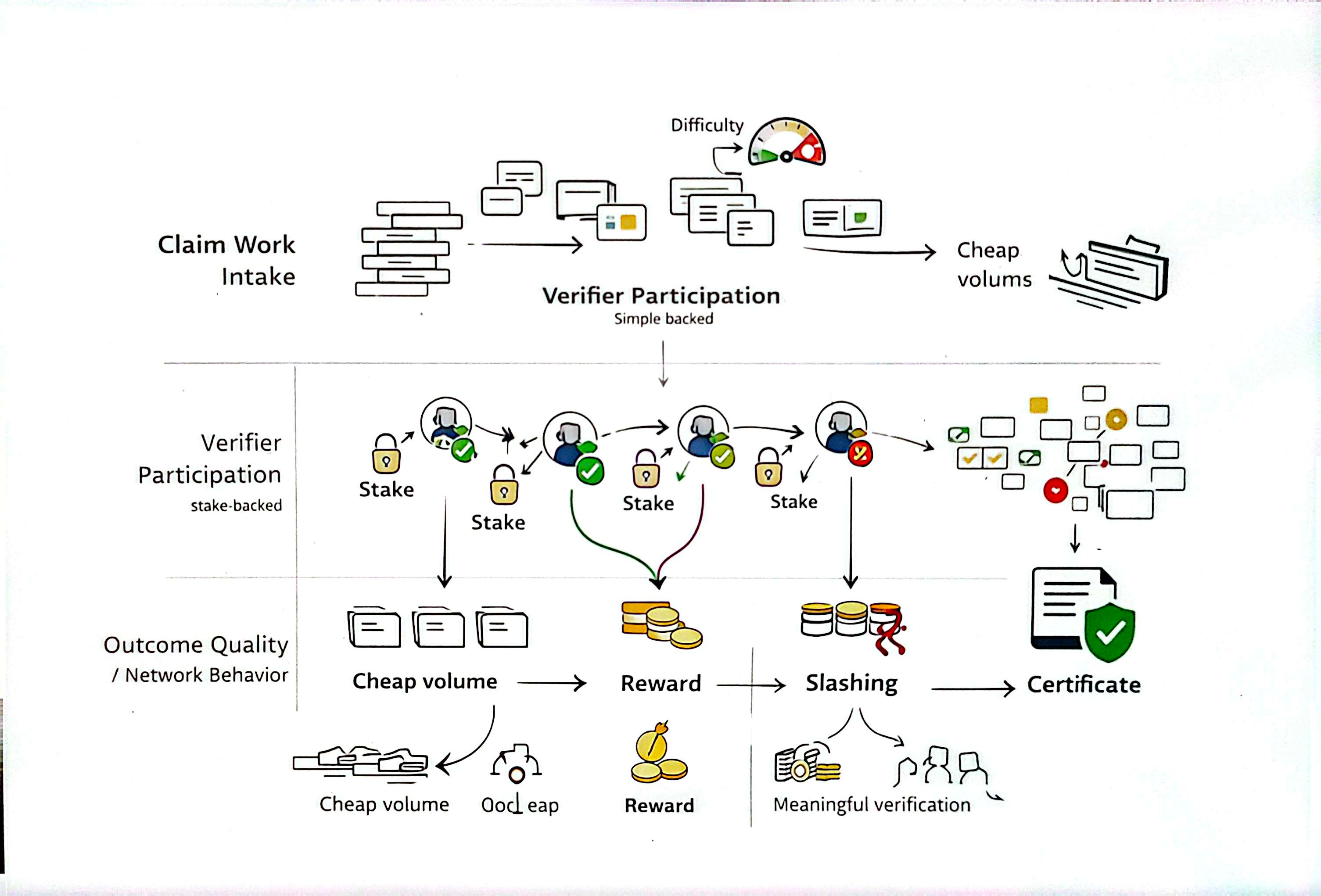

A lot of verification projects treat incentive design as an afterthought. Mira does not. The whitepaper highlights a core problem: once verification becomes standardized, random guessing becomes attractive unless verifiers carry stake at risk. Mira's model combines stake-backed participation with slashing for dishonest behavior. The reward structure needs to price difficulty correctly or the network drifts toward verifying what is cheap.
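A quick back-of-the-envelope calculation shows why stake matters. The numbers below are assumptions chosen for illustration (a binary verdict, so a blind guess matches consensus half the time, plus made-up reward, slash, and cost values); they are not Mira's actual parameters.

```python
def expected_payoff(p_correct: float, reward: float, slash: float, cost: float) -> float:
    # Expected value per verification task for one verifier:
    # win the reward with probability p_correct, lose the slash otherwise,
    # and always pay the cost of doing (or skipping) the actual work.
    return p_correct * reward - (1.0 - p_correct) * slash - cost

def min_slash_to_deter_guessing(p_guess: float, reward: float) -> float:
    # Slash amount at which a zero-cost random guesser breaks even.
    # Any slash above this makes blind guessing a losing strategy.
    return p_guess * reward / (1.0 - p_guess)

# Hypothetical scenario: binary claims, reward of 1 unit per correct verdict.
guess_without_stake = expected_payoff(0.5, reward=1.0, slash=0.0, cost=0.0)
guess_with_stake = expected_payoff(0.5, reward=1.0, slash=2.0, cost=0.0)
honest_with_stake = expected_payoff(0.95, reward=1.0, slash=2.0, cost=0.2)
```

Under these assumed values, guessing is profitable with nothing at risk, turns negative once the slash exceeds the break-even amount, and honest work stays positive even after paying its compute cost. The same formula also shows the pricing problem: if `cost` rises on hard claims while `reward` stays flat, honest work on those claims stops paying.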

$MIRA should earn its place as operating capital and security collateral for verification work.

The Code Level Question

I want to learn from what Mira ships, not from documentation promises. Mira exposes an SDK with a unified API, multi-model routing, and standard operations. The real test: when a customer needs a fast response and a clean API, does the verification step collapse into a single usable output? Or does every downstream app build its own tiebreak logic? Mira's success depends on answering this under production pressure.
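To make "a single usable output" concrete, here is one way per-claim verdicts could collapse into one answer without every app writing its own tiebreak. Everything in it is hypothetical: the `Verdict` states, the `summarize` function, and the rule that any rejected claim fails the whole output are my assumptions, not the Mira SDK's API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List

class Verdict(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    PENDING = "pending"

@dataclass
class ClaimResult:
    claim: str
    verdict: Verdict

@dataclass
class Summary:
    verdict: Verdict
    failing_claims: List[str]
    pending_claims: List[str]

def summarize(results: List[ClaimResult]) -> Summary:
    failing = [r.claim for r in results if r.verdict is Verdict.REJECTED]
    pending = [r.claim for r in results if r.verdict is Verdict.PENDING]
    if failing:
        # Assumed rule: one rejected claim rejects the whole output.
        overall = Verdict.REJECTED
    elif pending or not results:
        # Unresolved or empty input is never reported as verified.
        overall = Verdict.PENDING
    else:
        overall = Verdict.VERIFIED
    return Summary(overall, failing, pending)
```

A caller that only wants a fast answer reads `verdict`. A caller who needs to know which sentence was wrong reads `failing_claims`, which is exactly what the three arguing engineers were missing.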

What the Competition Misses

Most AI trust projects focus on accuracy numbers. Mira focuses on closure. An accuracy score tells you how often the model was right. A cryptographic certificate tied to a specific claim tells you what was checked, who checked it, and which consensus threshold applied. One is a metric. The other is an auditable packet of trust.

Public analyses from Binance Research describe the same thesis and report growing usage for #Mira, though some figures deserve independent audit. The word "trending" gets tossed around loosely in crypto. Mira's case stands apart. If the protocol keeps boundaries stable while adversarial behavior scales, the free market will separate infrastructure from theater. The trading activity matters less than whether integrators stop building private workarounds. On any given day, I think Mira is building the right layer.