I keep noticing the same tension when I use modern AI tools: the more fluent they get, the easier it is to miss when they are wrong. At first I assumed better prompting would fix this, and then I assumed the next model would. Lately I am more convinced it is a systems problem, which is why Mira’s Dynamic Validator Network (DVN) is worth thinking through while I test whether it actually holds up.

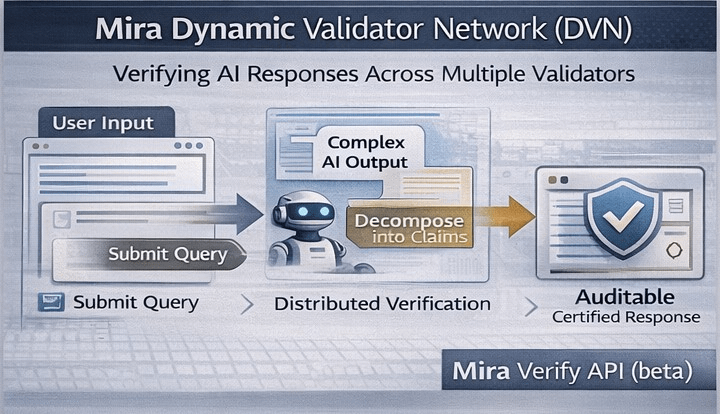

The basic move is straightforward, and it shifts where you place the burden of proof. You do not let one model’s answer go straight to a person or into an automated workflow. Instead, you break the output into smaller claims that can be checked on their own and send those claims to multiple independent validators. Mira’s whitepaper describes a four-step process: break complex text into verifiable statements, route them to verifier nodes, gather their judgments into a consensus result, and produce a cryptographic certificate that records what happened.
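To make that flow concrete for myself, here is a minimal Python sketch of the four steps. Everything in it is my own assumption rather than Mira's API: the names `split_into_claims` and `verify` are hypothetical, the sentence split is a naive stand-in for real claim decomposition, the validators are toy functions, and the "certificate" is only a SHA-256 digest of the record, not a signed or on-chain artifact.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Hypothetical validator type: takes a claim, returns True if it looks valid.
Validator = Callable[[str], bool]


def split_into_claims(text: str) -> List[str]:
    # Naive stand-in for decomposition: treat each sentence as one claim.
    return [part.strip() for part in text.split(".") if part.strip()]


def verify(text: str, validators: Dict[str, Validator]) -> dict:
    results = []
    for claim in split_into_claims(text):
        # Route the claim to every validator and record each vote.
        votes = {name: bool(check(claim)) for name, check in validators.items()}
        approvals = sum(votes.values())
        # Simple majority rule (an assumption; other thresholds are possible).
        accepted = approvals * 2 > len(votes)
        results.append({"claim": claim, "votes": votes, "accepted": accepted})

    record = {"input": text, "results": results}
    # Stand-in "certificate": a digest that changes if the record changes.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return {**record, "certificate": hashlib.sha256(payload).hexdigest()}


# Toy validators: two consult a small fact list, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}
validators = {
    "node_a": lambda claim: claim in KNOWN_FACTS,
    "node_b": lambda claim: claim in KNOWN_FACTS,
    "node_c": lambda claim: True,  # careless validator
}

text = "Paris is the capital of France. The Moon is made of cheese."
report = verify(text, validators)
for item in report["results"]:
    print(item["claim"], "->", "accepted" if item["accepted"] else "rejected")
```

In this toy run the careless validator is outvoted on the second claim, which is the whole point of not trusting a single checker.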

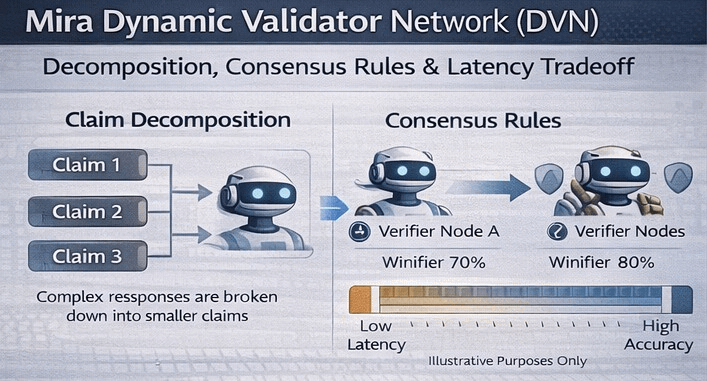

I find it helpful to think of this as formalizing a second opinion and doing it at scale. A single model can hallucinate with complete confidence, yet several validators run by different parties are less likely to make the same mistake in the same way at the same moment. That is basically the ensemble intuition in plain clothes. Mira has also published work on ensemble validation that reports large gains in precision when additional models are used as cross-checkers, while still noting the real costs, such as extra delay and the need to shape tasks so validators can respond cleanly.
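The ensemble intuition can be put into numbers with a small calculation. This is a sketch under a strong assumption that real validators will not fully satisfy: each validator is wrong independently with the same probability `p`. The function name and the example error rate are mine, and the figures are plain binomial arithmetic, not results reported by Mira.

```python
from math import comb


def majority_error_probability(n: int, p: float) -> float:
    """Chance that a strict majority of n validators is wrong at once,
    assuming each errs independently with probability p."""
    threshold = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(threshold, n + 1)
    )


# Illustrative error rate of 10% per validator (an assumed number).
for n in (1, 3, 5):
    print(n, round(majority_error_probability(n, 0.10), 5))
# 1 validator  -> 0.1
# 3 validators -> 0.028
# 5 validators -> 0.00856
```

The drop is steep only because the errors are treated as independent. If validators share the same blind spots, the real improvement will be smaller than this arithmetic suggests.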

The dynamic part matters because the validator set and the decision rule do not have to stay fixed across every request. In the whitepaper, the requester can specify what kind of verification they want by setting a domain focus and a consensus threshold, such as strict agreement or a strong majority, depending on what the job requires.
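Here is how I picture a per-request policy in code. It is a hypothetical sketch: `VerificationPolicy`, `select_validators`, the registry contents, and the idea of expressing the threshold as a fraction of approving validators are all my assumptions, not the whitepaper's actual parameters.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class VerificationPolicy:
    domain: str       # hypothetical domain focus, e.g. "medical"
    threshold: float  # fraction of validators that must approve (1.0 = unanimous)


def select_validators(policy: VerificationPolicy,
                      registry: Dict[str, Set[str]]) -> List[str]:
    # Pick only the validators that declare the requested domain.
    return sorted(name for name, domains in registry.items()
                  if policy.domain in domains)


def is_accepted(votes: List[bool], policy: VerificationPolicy) -> bool:
    if not votes:
        return False  # no votes means nothing was verified
    return sum(votes) / len(votes) >= policy.threshold


# Made-up registry of validators and the domains they cover.
registry = {
    "node_a": {"general", "medical"},
    "node_b": {"general"},
    "node_c": {"medical", "legal"},
}

strict = VerificationPolicy(domain="medical", threshold=1.0)
majority = VerificationPolicy(domain="general", threshold=0.75)

votes = [True, True, True, False]
print(select_validators(strict, registry))  # ['node_a', 'node_c']
print(is_accepted(votes, strict))           # False: not unanimous
print(is_accepted(votes, majority))         # True: 3 of 4 meets 0.75
```

The same four votes pass one policy and fail the other, which is exactly the knob the requester is being given.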

That flexibility matters because truth does not show up in one uniform form, and I do not want the same standard applied to every situation. Sometimes I want speed plus a reasonable safety margin, and other times I want a slower pass with a higher bar.

This is getting attention now because the pressure point has shifted from chatting to acting: outputs are no longer just read by humans, they drive next steps. Models are being wired into workflows that write, summarize, and support customers, and they increasingly sit inside agent loops where one output triggers the next step. Human review can catch mistakes, yet it becomes a bottleneck as soon as volume rises or the whole point is to run unattended.

One thing I notice people wanting is an audit trail that does not rely on trust alone. Messari describes Mira as a verification layer inside the pipeline that returns an auditable certificate showing what was checked and how validators voted. Mira Verify, which is presented as a beta API, describes the same flow in plainer language: multiple models cross-check each claim, and you can audit the path from input to consensus.

What takes this beyond simply running two models is the incentive design, and I think that is where the story gets more interesting. The whitepaper argues that validators often answer structured questions and that random guessing can be tempting unless being wrong has a real cost. To address that, nodes stake value to participate and can be penalized when their behavior repeatedly diverges from the network pattern.
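A toy model helps me see how "stake plus penalty for repeated divergence" might work mechanically. The specifics below are assumptions I picked for illustration: the `Node` fields, counting consecutive rounds outside the majority as "strikes", the limit of three strikes, and the 10% slash are hypothetical and not taken from Mira's actual rules.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Node:
    stake: float
    strikes: int = 0  # consecutive rounds spent outside the consensus


def settle_round(nodes: Dict[str, Node], votes: Dict[str, bool],
                 max_strikes: int = 3, slash_fraction: float = 0.10) -> bool:
    """Compute the majority verdict, then update strikes and stakes."""
    approvals = sum(votes.values())
    consensus = approvals * 2 > len(votes)
    for name, vote in votes.items():
        node = nodes[name]
        if vote == consensus:
            node.strikes = 0  # agreeing with the majority clears the record
        else:
            node.strikes += 1
            if node.strikes >= max_strikes:
                # Repeated divergence costs part of the stake.
                node.stake *= 1 - slash_fraction
                node.strikes = 0
    return consensus


nodes = {"a": Node(stake=100.0), "b": Node(stake=100.0), "c": Node(stake=100.0)}

# Node "c" disagrees with the other two for three rounds in a row.
for _ in range(3):
    settle_round(nodes, {"a": True, "b": True, "c": False})

print({name: round(node.stake, 2) for name, node in nodes.items()})
```

After the third round, node `c` has lost a tenth of its stake while the others are untouched. Note that this toy rule punishes disagreement with the majority, not being wrong, which is why shared bias among validators remains a real concern.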

I do not treat that as a magic shield, because economic incentives can be gamed and coordinated actors exist in every system that pays people to behave. Still, it is a serious attempt to make verification something you can lean on without trusting a single operator’s goodwill. I also think it is risky to talk about consensus as if it automatically equals truth: validators can share training data, cultural assumptions, and blind spots, so agreement can sometimes mean shared bias. Another fragile point is decomposition itself. Turning a paragraph into checkable claims is powerful, yet it can flatten nuance, and anything that is not turned into a claim will not be validated.

There is also the question of speed, since every validator is another inference call. Adding validators usually improves reliability, yet it also adds delay, which matters when you are trying to keep an interactive system responsive without making users feel like the tool is thinking forever.
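How much delay gets added depends a lot on whether the validator calls run one after another or side by side. The sketch below is my own illustration, not something the whitepaper describes: `call_validator` is a hypothetical function that simulates inference latency with a sleep, and the delays and verdicts are made up.

```python
import asyncio
import time
from typing import List


async def call_validator(name: str, claim: str,
                         delay_s: float, verdict: bool) -> bool:
    # Simulated inference call: wait, then return a canned verdict.
    await asyncio.sleep(delay_s)
    return verdict


async def validate_concurrently(claim: str) -> List[bool]:
    # Fan out to all validators at once; results come back in call order.
    return await asyncio.gather(
        call_validator("node_a", claim, 0.05, True),
        call_validator("node_b", claim, 0.10, True),
        call_validator("node_c", claim, 0.15, False),
    )


start = time.perf_counter()
votes = asyncio.run(validate_concurrently("Paris is the capital of France"))
elapsed = time.perf_counter() - start

print(votes)
print(f"elapsed: {elapsed:.2f}s (sequential calls would take about 0.30s)")
```

Run concurrently, the wait is set by the slowest validator rather than the sum of all of them. That softens the cost but does not remove it: the slowest node still sets the floor, and every extra call still has to be paid for.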

Mira’s real move isn’t discovering hallucinations or popularizing ensembles; it’s operationalizing correctness. It makes you negotiate, test, and document truth in a repeatable workflow, so uncertainty stays visible. Builders can earn trust per claim instead of asking for belief in the whole output.
