The model responded fast.

Too fast.

Output looked clean. Structured. Confident. Perfect JSON. Nothing broken.

I didn’t trust it.

Because I’ve watched certainty break systems before.

Fragments had already started peeling off. Entity. Claim. Unit. Evidence hash. Routed to nodes across Mira’s decentralized validator network.

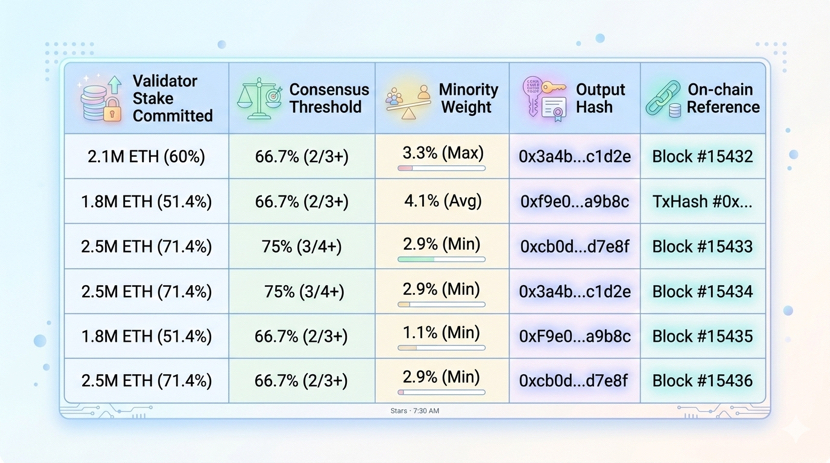

I hovered over the console. The first fragment hit two validators. Green. One abstained. Weight climbing. Close to supermajority, but not there yet.

Fragment two followed. Smaller, easier. Certified almost instantly.

Fragment three? Still limping. Partial quorum. Not red. Not rejected. Just… incomplete.

I realized then: the dashboard made it look done. But “done” was a lie. The paragraph wasn’t safe.

I could have exported what was sealed. Two fragments green. One hanging. My client would have read it as verified. Portable. Ready. Dangerous.
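That export decision can be written down as a gate. This is a minimal sketch, not Mira's implementation: the two-thirds threshold, the `Vote` shape, and the function names are all my assumptions. The post only establishes that fragments certify independently by stake weight and that a partial set looks finished when it isn't.

```python
from dataclasses import dataclass
from fractions import Fraction

# Assumption: a two-thirds stake-weighted threshold. The source only says "supermajority".
SUPERMAJORITY = Fraction(2, 3)


@dataclass
class Vote:
    validator: str  # hypothetical validator id
    stake: int      # hypothetical stake units
    verdict: str    # "approve", "reject", or "abstain"


def fragment_status(votes, total_stake):
    """Classify one fragment by stake weight, measured against all assigned stake."""
    approve = sum(v.stake for v in votes if v.verdict == "approve")
    reject = sum(v.stake for v in votes if v.verdict == "reject")
    if Fraction(approve, total_stake) >= SUPERMAJORITY:
        return "certified"
    if Fraction(reject, total_stake) > 1 - SUPERMAJORITY:
        return "rejected"  # approval can no longer reach the threshold
    return "pending"       # abstentions and missing votes leave it open


def safe_to_export(statuses):
    """The paragraph is exportable only when every fragment is certified."""
    return all(status == "certified" for status in statuses.values())


# Two fragments green, one hanging (illustrative numbers, total stake of 100 each).
statuses = {
    "fragment_1": fragment_status(
        [Vote("a", 40, "approve"), Vote("b", 30, "approve"), Vote("c", 30, "abstain")], 100
    ),
    "fragment_2": fragment_status(
        [Vote("a", 50, "approve"), Vote("d", 40, "approve")], 100
    ),
    "fragment_3": fragment_status(
        [Vote("b", 50, "approve"), Vote("e", 30, "abstain")], 100
    ),
}

print(statuses)
print("safe to export:", safe_to_export(statuses))
```

The gate checks the whole paragraph, not the count of green rows. One pending fragment is enough to block the export.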

Weight redistributed under the hood. Minority dissent breathing quietly. Stake still moving. Consensus wasn’t final.

I paused. Adjusted my perspective. Mira doesn’t care about appearances. It cares about proof. Cryptographic certificates anchor outputs. Fragments don’t wait for each other. They move when ready. And that timing gap? That’s where most errors hide.

Validators stake capital. Correct verification earns rewards. Misalignment triggers penalties. Economic incentives keep the jury honest. Dashboards can mislead. Stake speaks.
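The incentive loop is simple enough to sketch. Everything specific here is an assumption on my part: the pro-rata reward split, the slash rate in basis points, and the choice to leave abstainers untouched. The post only says aligned validators are rewarded and misaligned ones are penalized.

```python
def settle(votes, outcome, reward_pool, slash_bps):
    """Return the stake change per validator once a fragment's outcome is final.

    votes:       {validator: (stake, verdict)}, verdict in "approve"/"reject"/"abstain"
    outcome:     the final consensus verdict, "approve" or "reject"
    reward_pool: hypothetical reward, split pro rata among aligned validators
    slash_bps:   hypothetical penalty in basis points of stake (500 = 5%)
    """
    aligned_stake = sum(stake for stake, verdict in votes.values() if verdict == outcome)
    deltas = {}
    for validator, (stake, verdict) in votes.items():
        if verdict == outcome:
            # Integer math, as is usual for stake accounting.
            deltas[validator] = reward_pool * stake // aligned_stake
        elif verdict == "abstain":
            deltas[validator] = 0  # assumption: abstaining costs and earns nothing
        else:
            deltas[validator] = -(stake * slash_bps // 10_000)
    return deltas


deltas = settle(
    votes={"a": (40, "approve"), "b": (40, "approve"), "c": (20, "reject"), "d": (10, "abstain")},
    outcome="approve",
    reward_pool=10,
    slash_bps=500,
)
print(deltas)
```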

I watched the final fragment cross the supermajority line. Certificate recomputed. Output hash changed. Same sentence. Different reality.
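Why would the hash move when the sentence didn't? One plausible reading, and it is only my assumption about the scheme, is that the certificate commits to the verification state as well as the text. A sketch using SHA-256 over canonical JSON, with field names I made up:

```python
import hashlib
import json


def certificate_hash(text, statuses):
    """Hash the output together with its per-fragment verification state."""
    payload = json.dumps(
        {"text": text, "fragments": statuses},
        sort_keys=True,          # canonical key order so the hash is reproducible
        separators=(",", ":"),   # no incidental whitespace
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


sentence = "Example claim under verification."  # placeholder text

before = certificate_hash(
    sentence,
    {"fragment_1": "certified", "fragment_2": "certified", "fragment_3": "pending"},
)
after = certificate_hash(
    sentence,
    {"fragment_1": "certified", "fragment_2": "certified", "fragment_3": "certified"},
)

print(before == after)
```

Under that assumption, a certificate taken before the last fragment clears can never be passed off as the final one. The text matches. The hash doesn't.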

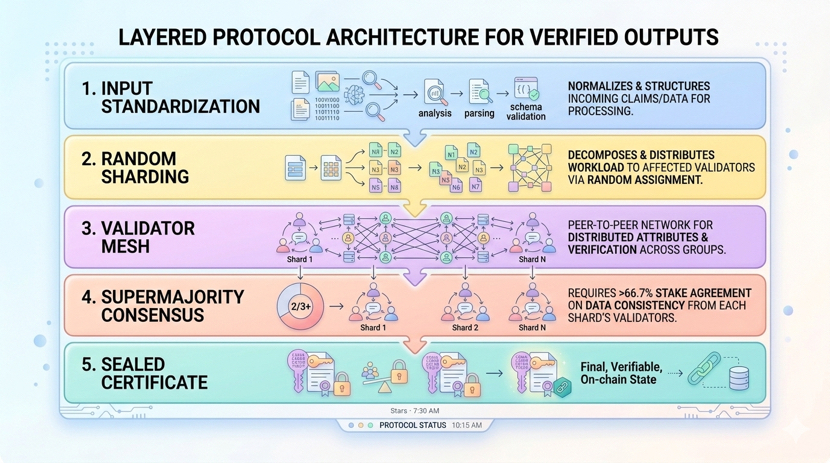

The system works in layers:

Standardization prevents context drift before reaching validators.

Random sharding protects privacy and balances load.

Supermajority aggregation ensures that what emerges isn’t just majority noise.

The resulting certificate is not a feature. It is an operational requirement: proof that each claim was inspected, recorded, and economically defended.
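The first two layers can be sketched in a few lines. This is a toy under stated assumptions: `standardize` only folds case and whitespace (real standardization is presumably richer), and `shard` draws a random validator subset per fragment from a seeded generator. Neither name comes from Mira.

```python
import random


def standardize(claim):
    """Assumption: normalization here is just case and whitespace folding."""
    return " ".join(claim.lower().split())


def shard(fragments, validators, per_fragment, seed):
    """Assign each fragment to a random subset of validators.

    Each validator sees only the fragments it draws, and the work
    spreads across the pool instead of piling onto a few nodes.
    """
    rng = random.Random(seed)  # seeded so an assignment can be replayed
    return {
        fragment: sorted(rng.sample(validators, per_fragment))
        for fragment in fragments
    }


fragments = [standardize(c) for c in ["  Entity  A ", "Claim B", "UNIT  c"]]
validators = ["v1", "v2", "v3", "v4", "v5", "v6"]
assignment = shard(fragments, validators, per_fragment=3, seed=7)

for fragment, assigned in assignment.items():
    print(fragment, "->", assigned)
```

The third layer, supermajority aggregation, then runs per fragment over whichever validators were drawn for it.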

Downstream agents don’t read debate logs. They read status. Certified. Portable. Anchorable. Traceable.

The economic layer converts accountability from aspiration into infrastructure. Stake at risk. Consensus weighted. Rewards earned. Negligence penalized. Transparency baked in.

Latency exists. Millisecond-sensitive workflows notice. Legal frameworks remain external. But direction matters.

AI will keep accelerating. Confidence alone won’t be enough. Organizations that scale will not be the ones with the flashiest models. They’ll be the ones that can show exactly what was checked, when, and under which economic commitments.

I leaned back. Monitored the logs. Felt the micro-friction of early fragments racing ahead. Felt the final certificate click into place.

Not a benchmark score. Not a promise. Infrastructure. Defensible, auditable, economic.

Mira isn’t just making AI more accurate. It’s making AI verifiable. Trust earned, not assumed.
