Mira Network is a decentralized protocol that improves AI reliability by verifying model outputs through a blockchain-based consensus system. It addresses AI’s trust issues, such as hallucinations and biases, by decomposing responses into individual claims, distributing them to independent validator nodes (each running a different AI model), and reaching consensus to produce confidence-scored, auditable results. This creates a transparent “trust layer” for AI, with incentives for accurate validations and immutable records for compliance.

Verification Process Breakdown

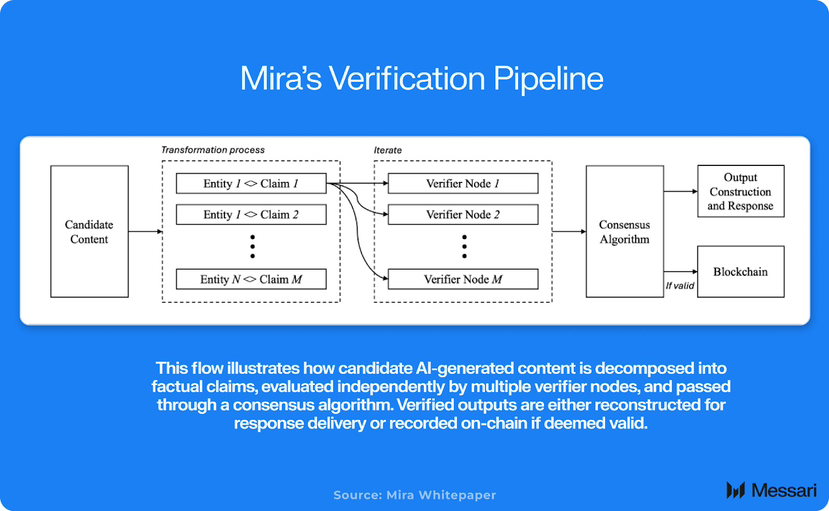

The process begins with an AI-generated output being broken down into factual claims. These are then evaluated by multiple verifier nodes across the network.

As shown in this diagram, candidate content is transformed into entities and claims, each claim is iterated through the verifier nodes, and the resulting judgments pass through a consensus algorithm. The output is then reconstructed into a verified result, or recorded on-chain if valid. Because the verifiers run diverse models, no single model’s bias decides the outcome.
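The flow described above can be sketched in a few lines of Python. This is a minimal illustration, not Mira's actual implementation: the names `split_into_claims`, `verify_output`, `ClaimResult`, and `min_agree` are hypothetical, the sentence-splitting step stands in for real claim extraction, and the verifiers are plain functions where a real network would call independent AI models.

```python
from dataclasses import dataclass
from typing import Callable, List

# A verifier judges one claim as valid (True) or invalid (False).
# Hypothetical stand-in for a validator node running its own model.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]
    confidence: float  # fraction of verifiers that judged the claim valid
    accepted: bool     # True if at least `min_agree` verifiers approved


def split_into_claims(text: str) -> List[str]:
    """Naive decomposition (an assumption): one claim per sentence."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_output(text: str, verifiers: List[Verifier], min_agree: int) -> List[ClaimResult]:
    """Send every claim to every verifier and apply a k-of-n acceptance rule."""
    results = []
    for claim in split_into_claims(text):
        votes = [verifier(claim) for verifier in verifiers]
        yes_votes = sum(votes)
        results.append(
            ClaimResult(
                claim=claim,
                votes=votes,
                confidence=yes_votes / len(votes),
                accepted=yes_votes >= min_agree,
            )
        )
    return results


# Toy verifiers; each applies a different (deliberately crude) check.
toy_verifiers = [
    lambda c: "Paris" in c,
    lambda c: "France" in c,
    lambda c: len(c) > 10,
]

for r in verify_output("Paris is the capital of France. Berlin is in Spain", toy_verifiers, min_agree=2):
    print(f"{r.claim!r}: confidence={r.confidence:.2f}, accepted={r.accepted}")
```

Each claim carries its own confidence score, so a downstream consumer can keep the accepted claims and flag or drop the rest instead of trusting or rejecting the whole response.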

Validators are rewarded for aligning with consensus and penalized for deviations, fostering honest participation. The blockchain ledger records all steps for transparency.
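One way to picture this reward rule is a per-round settlement step. The sketch below is an assumption-laden illustration: `settle_round`, the strict-majority rule, and the `reward` and `penalty` amounts are all hypothetical and are not taken from Mira's specification.

```python
from typing import Dict


def settle_round(
    votes: Dict[str, bool],
    balances: Dict[str, float],
    reward: float = 1.0,   # hypothetical payout for matching consensus
    penalty: float = 2.0,  # hypothetical deduction for deviating
) -> bool:
    """Compute the majority verdict on one claim and adjust node balances in place."""
    yes_votes = sum(votes.values())
    consensus = yes_votes * 2 > len(votes)  # strict majority; a tie counts as "invalid"
    for node, vote in votes.items():
        delta = reward if vote == consensus else -penalty
        balances[node] = balances.get(node, 0.0) + delta
    return consensus


balances = {"node_a": 10.0, "node_b": 10.0, "node_c": 10.0}
verdict = settle_round({"node_a": True, "node_b": True, "node_c": False}, balances)
print(verdict, balances)
```

Setting the penalty above the reward, as in this sketch, is one possible design choice: it makes random guessing unprofitable on average for a node that guesses right only about half the time.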

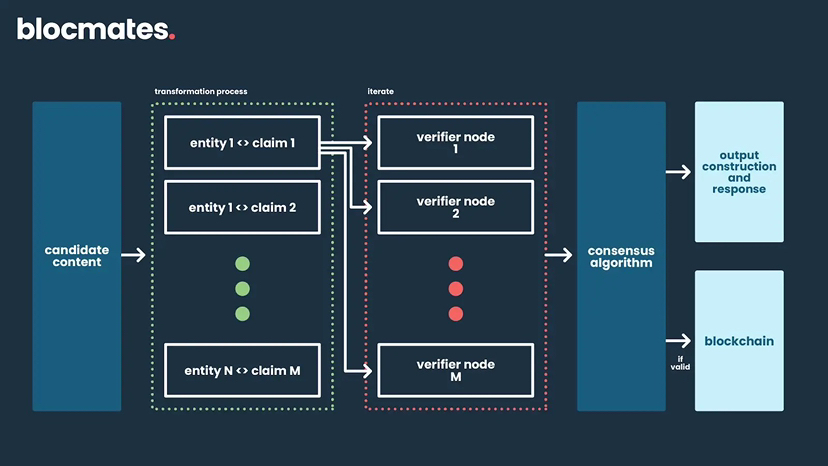

Here, a similar flowchart illustrates the iteration over claims, verifier involvement, and final consensus leading to output or blockchain validation, emphasizing the decentralized nature.

Risk Assessment and Reliability

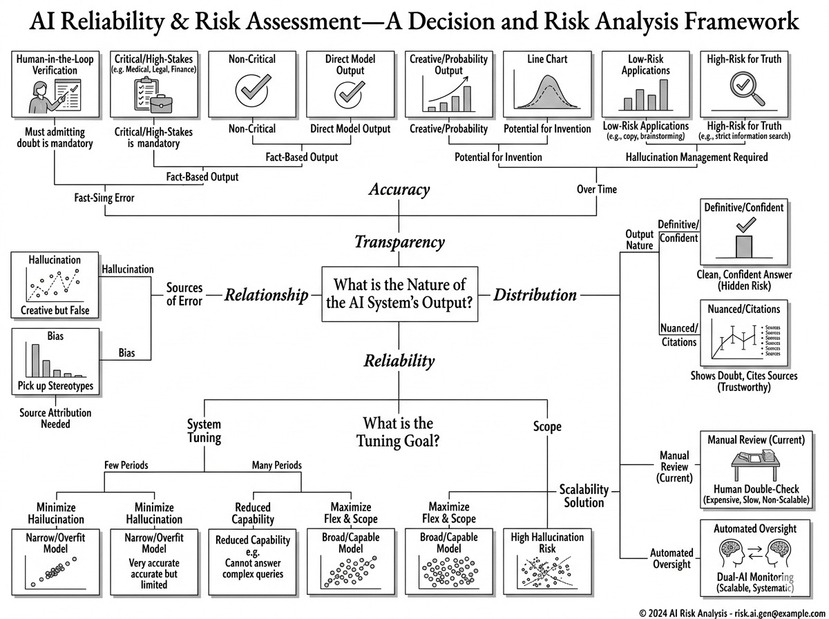

Mira shifts AI from absolute answers to measurable confidence, useful for critical sectors like finance or compliance where errors are costly.

This risk assessment framework highlights how AI outputs are categorized by reliability, sources of error (e.g., hallucinations, bias), and tuning goals like minimizing errors or maximizing scalability, aligning with Mira’s approach to verifiable intelligence.

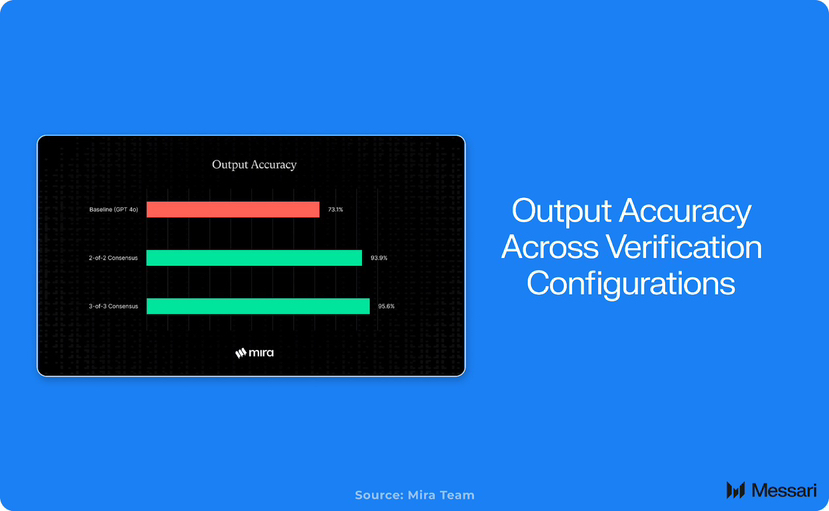

Empirical data shows improved accuracy with multi-model consensus.

For instance, single baseline models achieve lower accuracy than 2-of-3 or 3-of-3 consensus configurations, demonstrating Mira’s effectiveness in boosting output reliability.
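To see why requiring agreement can help, consider a simplified model in which each verifier wrongly approves a false claim independently with the same probability. The calculation below is a sketch under that assumption only. The 10% error rate is a hypothetical input, not a measured figure from Mira, and real models tend to have correlated errors, which weakens the effect.

```python
from math import comb


def false_accept_probability(n: int, k: int, error_rate: float) -> float:
    """P(at least k of n independent verifiers wrongly approve a false claim)."""
    return sum(
        comb(n, i) * error_rate**i * (1 - error_rate) ** (n - i)
        for i in range(k, n + 1)
    )


error_rate = 0.10  # hypothetical per-verifier error rate, chosen for illustration
for label, n, k in [("single model", 1, 1), ("2-of-3", 3, 2), ("3-of-3", 3, 3)]:
    print(f"{label}: {false_accept_probability(n, k, error_rate):.3f}")
# prints:
# single model: 0.100
# 2-of-3: 0.028
# 3-of-3: 0.001
```

Under the same independence assumption, the stricter 3-of-3 rule also rejects more true claims than 2-of-3, so the choice of threshold trades fewer false acceptances against lower coverage.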

Architectural Insights

The system integrates blockchain for immutability and incentives, similar to decentralized networks in other domains.

This simple sketch depicts blockchain validation as interconnected blocks with trust elements like awards and likes, symbolizing the network’s focus on reputation and data integrity.

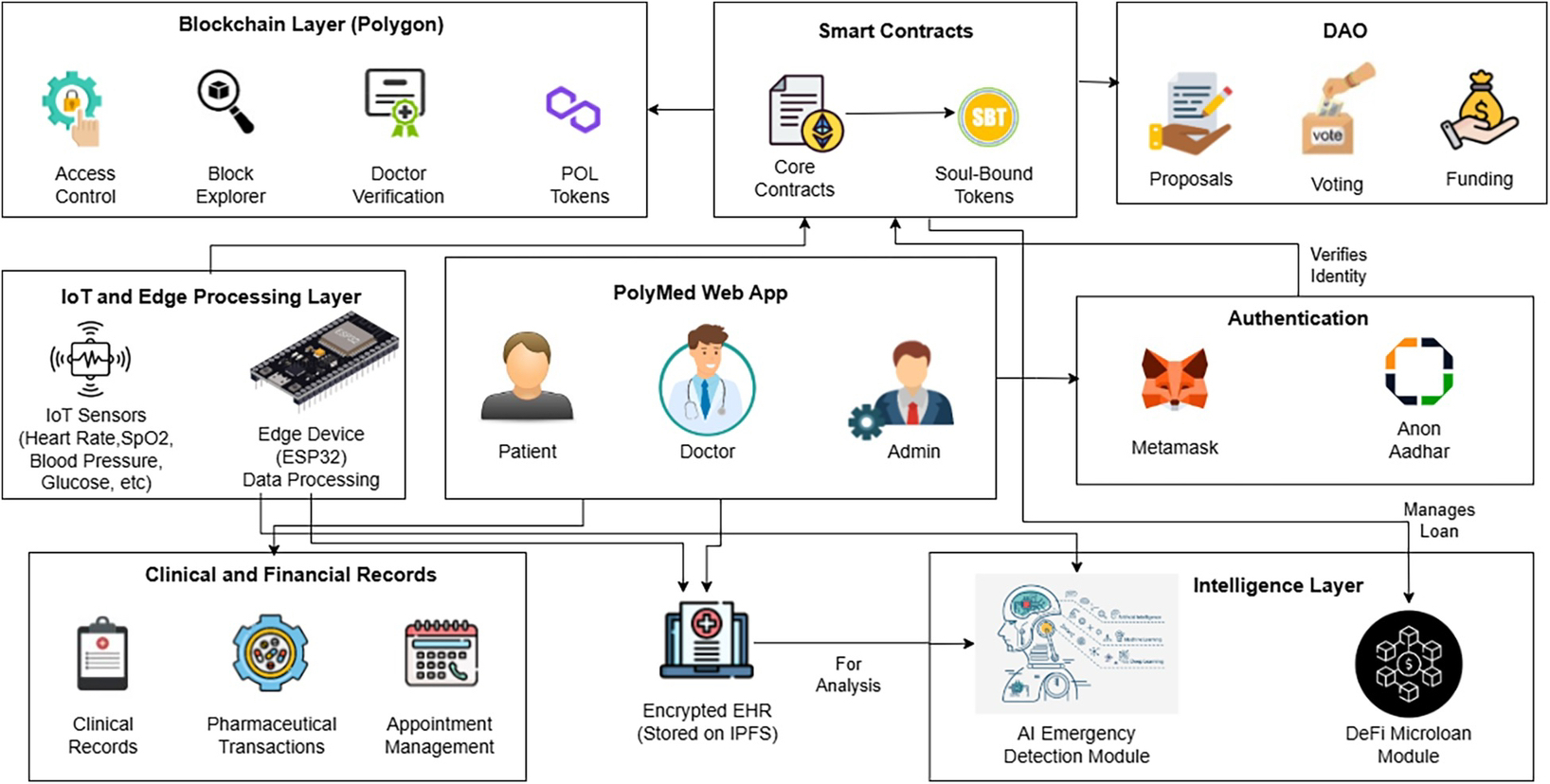

In broader applications, Mira’s structure supports autonomous AI agents.

Comparable to this health ecosystem diagram, it layers blockchain (e.g., smart contracts, DAO), intelligence (AI modules), and user interfaces for secure, verified operations.

Consensus and Output Validation

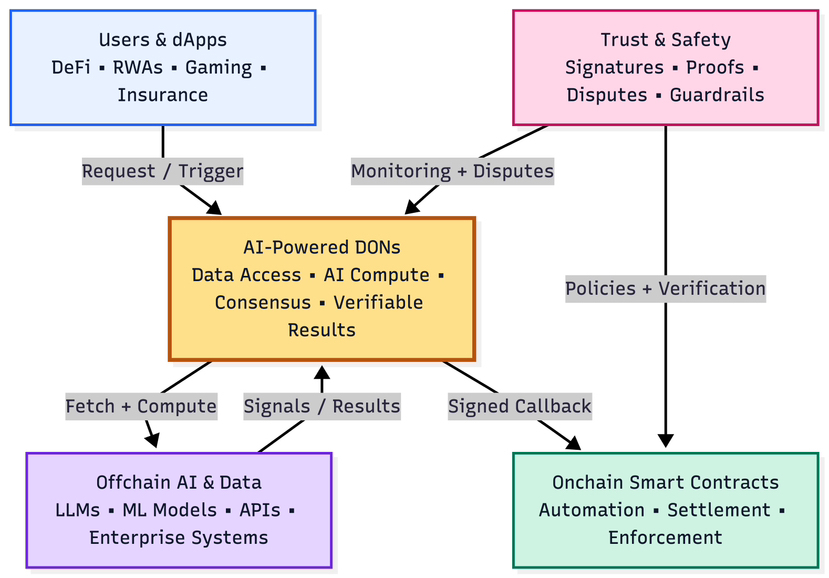

Core to Mira is the consensus mechanism, where nodes vote on claims.

This flowchart outlines AI-powered decentralized oracle networks (DONs) that fetch off-chain data, compute verifiable results, and enforce them on-chain via smart contracts, a flow that mirrors Mira’s validation process.

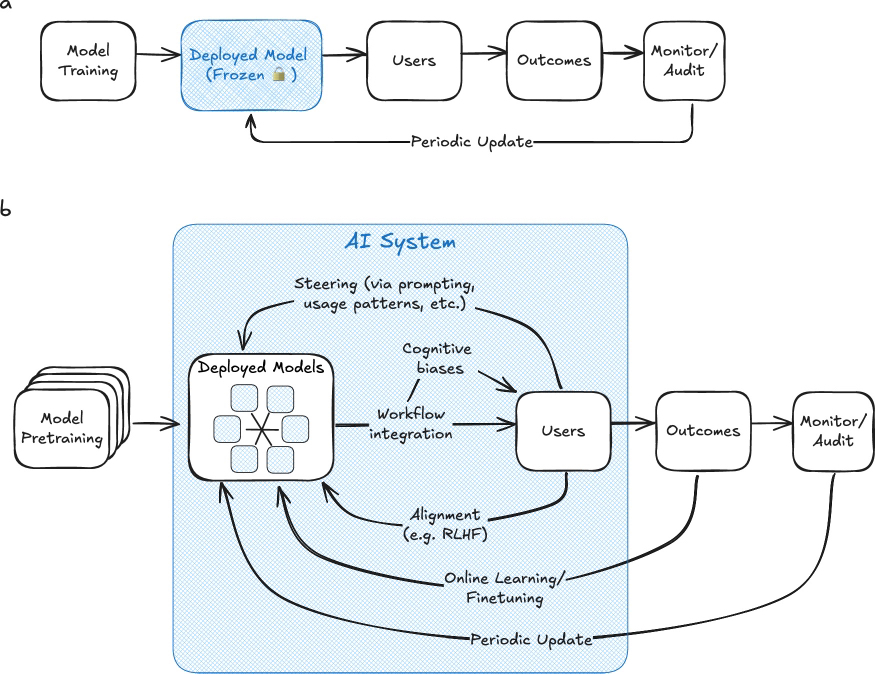

Deployment involves periodic updates and monitoring.

As illustrated, traditional frozen models evolve into adaptive AI systems with steering, alignment, and online learning, ensuring ongoing reliability.

Network Dynamics and Incentives

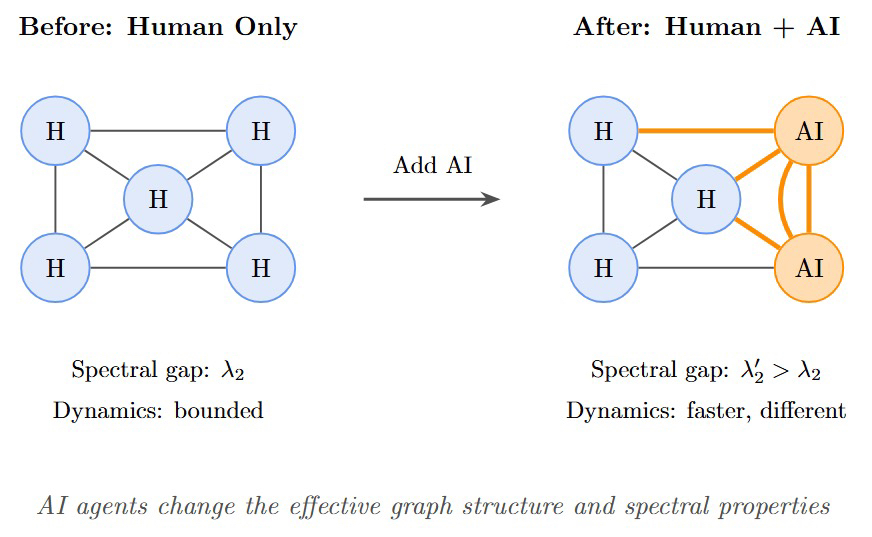

Diversity in validators prevents dominance by any single model.

This spectral graph shows how adding AI nodes changes dynamics from bounded to faster, more complex interactions, reflecting Mira’s distributed setup.

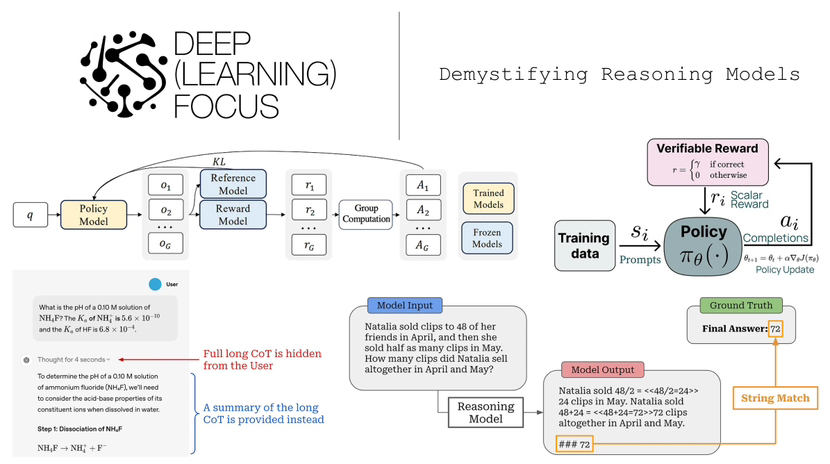

Training involves reference models and rewards for correct outputs.

Here, the reasoning model flow includes policy models generating completions, group computation, and verifiable rewards based on scalar policies, all of which are key to Mira’s incentive structure.

Incentives and Transparency

Participants stake tokens and earn rewards for accurate verifications, with penalties for inconsistencies. Every cycle is logged on-chain for audits, making Mira ideal for regulated environments.
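The audit property of an on-chain log comes from each record committing to the one before it. The sketch below imitates that with a hash-chained list in memory. It is an illustration of tamper evidence only: `append_record`, `verify_log`, and the record fields are hypothetical, and a real deployment would write to a blockchain, not a Python list.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the record contents."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(log: List[Dict[str, Any]], payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append a verification record that commits to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = {"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)}
    log.append(record)
    return record


def verify_log(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; any edited record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev"] != prev_hash:
            return False
        if _digest(record["prev"], record["payload"]) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


log: List[Dict[str, Any]] = []
append_record(log, {"claim": "example claim 1", "votes": [True, True, False], "accepted": True})
append_record(log, {"claim": "example claim 2", "votes": [False, False, True], "accepted": False})
print(verify_log(log))
```

An auditor who holds only the latest hash can detect later edits to any earlier record, because changing a payload changes its hash and breaks every link after it.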

Future Implications

As AI integrates into autonomous systems, Mira’s tools enable seamless verification in pipelines, potentially becoming an invisible reliability layer like internet encryption. Scalability and adoption will determine its impact, but it addresses a core need: distinguishing predictions from dependable knowledge.