I spend a lot of time poking around early-stage AI tools, especially the ones small teams rush to turn into products. Something keeps jumping out at me: these projects usually show off bold technical ideas, but their reliability just isn’t there yet. The models are impressive, sure, but founders quietly admit they still need people behind the scenes double-checking the results. That gap between what the tech can do and what people actually trust is where most of the real engineering work now lands.

Honestly, building an AI model isn’t the main headache for startups anymore. Open-source models, APIs, and fine-tuning have made it much easier to tinker and experiment. The tough part is convincing people that your outputs are solid enough to use in real workflows. Hallucinated answers, logic that jumps around, and results you can’t verify: these are real risks. A demo might look slick, but when accuracy actually matters, that’s when things break down. So teams either slow everything down with manual checks or decide to live with a level of uncertainty that makes investors and users nervous.

It’s a bit like trying to do your company’s accounting on calculators that sometimes just make up numbers.

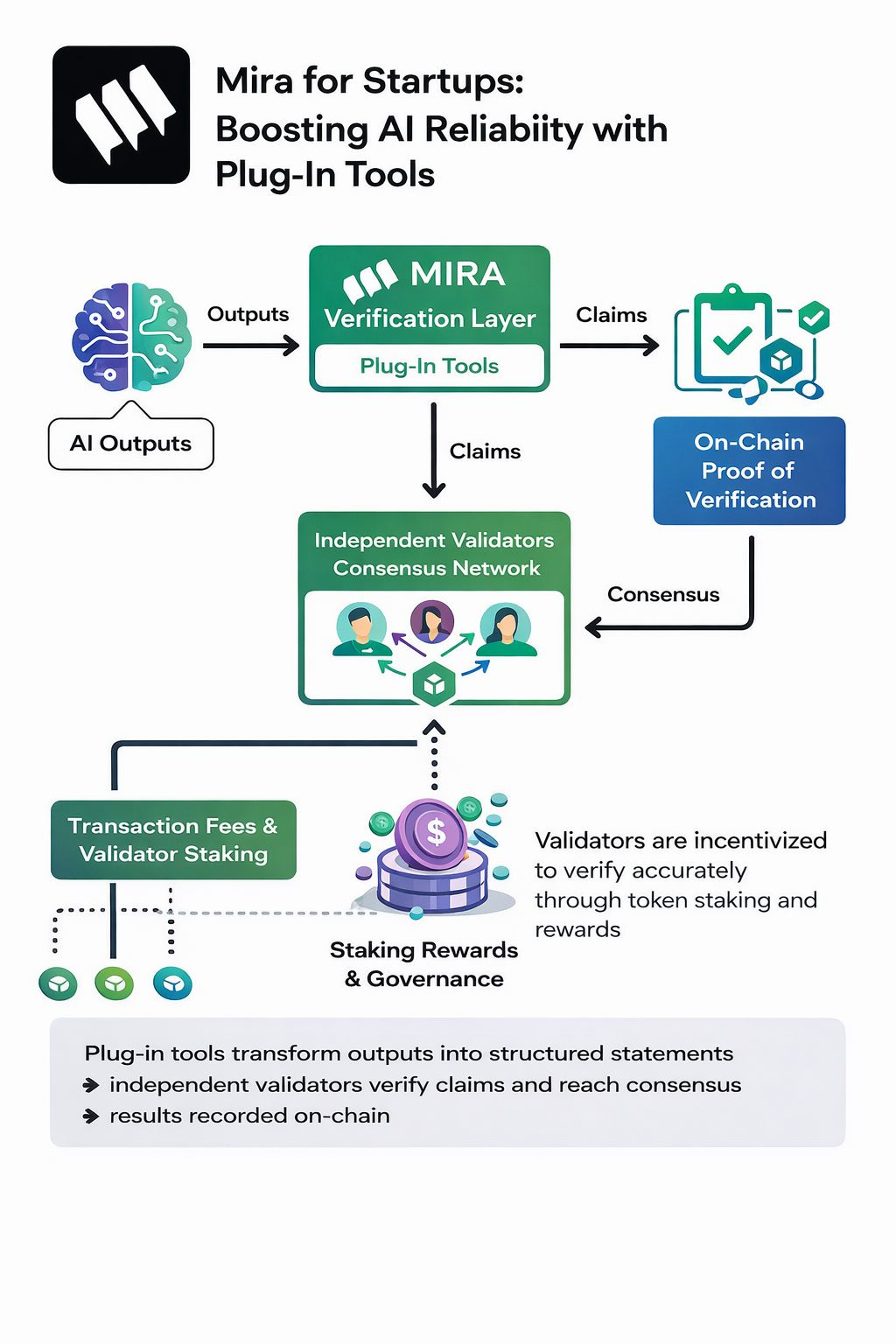

That’s where Mira Network comes in. Instead of focusing on training models to be more reliable, Mira attacks the problem from the verification side. It doesn’t take AI outputs at face value; it treats every answer as a claim that someone else needs to check. When an AI spits out a result, Mira breaks it down into structured statements, chunks you can actually test. These get passed through a network of independent validators who check whether those claims hold up. Each check gets logged, so instead of just “the AI said so,” you get a trail you can actually follow.
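
To make the decomposition idea concrete, here’s a minimal Python sketch. It assumes a deliberately naive rule (one sentence equals one claim); Mira’s actual claim extraction isn’t described in this post, and the `Claim` type and `split_into_claims` helper are hypothetical names used only for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One testable statement extracted from an AI output (hypothetical structure)."""
    claim_id: int
    text: str


def split_into_claims(ai_output: str) -> list[Claim]:
    # Naive stand-in for claim extraction: treat each sentence as one claim.
    # A real system would more likely use a model to produce atomic, self-contained statements.
    sentences = re.split(r"(?<=[.!?])\s+", ai_output.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


output = "Paris is the capital of France. The Eiffel Tower was completed in 1889."
for claim in split_into_claims(output):
    print(claim.claim_id, claim.text)
```

Each `Claim` can then be checked on its own, which is what makes a per-claim log possible instead of a single pass/fail on the whole answer.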

Under the hood, the process is pretty layered. First, raw AI outputs get turned into machine-readable claims, basically turning text or reasoning into clear statements. Then a verification layer sends those claims off to validators picked through a consensus process, which helps keep bias in check. Validators check claims however they want, using their own tools or sources, and then submit signed votes. Those votes get rolled up into a consensus outcome, which gets recorded on-chain. With cryptographic signatures and verifiable logs at each step, you don’t just end up with a black-box answer; you get proof that the network checked things according to agreed rules.
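
The layered flow above can be sketched end to end in Python. Everything here is an illustrative assumption, not Mira’s API: the validator registry, the seeded selection in `select_validators`, the two-thirds `threshold`, and the use of HMAC from the standard library as a stand-in for real public-key signatures such as Ed25519. `record_digest` only hashes the log to show what a tamper-evident record could look like; it doesn’t write anything to a chain.

```python
import hashlib
import hmac
import json
import random
from collections import Counter

# Hypothetical validator registry: name -> secret key.
# HMAC stands in for real public-key signatures (e.g. Ed25519) to keep this stdlib-only.
VALIDATOR_KEYS = {f"validator-{i}": f"secret-{i}".encode() for i in range(7)}


def select_validators(claim_id: str, k: int, seed: int) -> list[str]:
    # Seeded pseudo-random selection, so anyone with the seed can reproduce the choice.
    rng = random.Random(f"{seed}:{claim_id}")
    return rng.sample(sorted(VALIDATOR_KEYS), k)


def sign_vote(validator: str, claim_id: str, verdict: bool) -> dict:
    payload = json.dumps({"claim": claim_id, "verdict": verdict, "by": validator}, sort_keys=True)
    sig = hmac.new(VALIDATOR_KEYS[validator], payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "sig": sig}


def verify_vote(vote: dict) -> bool:
    validator = json.loads(vote["payload"])["by"]
    expected = hmac.new(VALIDATOR_KEYS[validator], vote["payload"].encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, vote["sig"])


def aggregate(votes: list[dict], threshold: float = 2 / 3) -> str:
    # Only votes whose signatures check out are counted.
    verdicts = [json.loads(v["payload"])["verdict"] for v in votes if verify_vote(v)]
    if not verdicts:
        return "no-consensus"
    verdict, count = Counter(verdicts).most_common(1)[0]
    if count / len(verdicts) >= threshold:
        return "valid" if verdict else "invalid"
    return "no-consensus"


def record_digest(claim_id: str, votes: list[dict], outcome: str) -> str:
    # Hash of the full log; changing any vote or the outcome changes the digest.
    blob = json.dumps({"claim": claim_id, "votes": votes, "outcome": outcome}, sort_keys=True)
    return hashlib.sha256(blob.encode()).hexdigest()


chosen = select_validators("claim-1", k=5, seed=42)
# Simulate one dissenting validator out of five.
votes = [sign_vote(v, "claim-1", verdict=(i != 0)) for i, v in enumerate(chosen)]
outcome = aggregate(votes)
print(outcome, record_digest("claim-1", votes, outcome))
```

With four of five valid signatures agreeing, the claim clears the two-thirds bar. A production design would use asymmetric signatures so anyone can check a vote without holding the validator’s secret; HMAC appears here only because it ships with Python.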

Why does this matter for startups? Most can’t afford big teams to build custom safety checks. With Mira, they can plug in tools that send AI outputs through this external verification layer. The network becomes a reliability service. It doesn’t swap out your model; it wraps around what you already have and tells you whether the answers stand up to outside scrutiny.
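
Here’s a rough sketch of what “wrapping” an existing model might look like. `with_verification`, `CheckedAnswer`, and the stub functions are hypothetical; `verify` stands in for whatever client call would actually send a claim to the verification network.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CheckedAnswer:
    text: str
    verdicts: dict[str, str]  # claim -> "valid" / "invalid" / "no-consensus"

    @property
    def trusted(self) -> bool:
        # Trust the answer only if at least one claim was found and every claim came back valid.
        return bool(self.verdicts) and all(v == "valid" for v in self.verdicts.values())


def with_verification(
    generate: Callable[[str], str],
    extract_claims: Callable[[str], list[str]],
    verify: Callable[[str], str],
) -> Callable[[str], CheckedAnswer]:
    """Wrap an existing model call; the model itself is left untouched."""
    def wrapped(prompt: str) -> CheckedAnswer:
        text = generate(prompt)
        verdicts = {claim: verify(claim) for claim in extract_claims(text)}
        return CheckedAnswer(text, verdicts)
    return wrapped


# Hypothetical stubs for the model, the claim splitter, and the network call.
def fake_model(prompt: str) -> str:
    return "Water boils at 100 C at sea level. The moon is made of cheese."


def split_sentences(text: str) -> list[str]:
    return [s.strip() + "." for s in text.split(".") if s.strip()]


def fake_network(claim: str) -> str:
    return "invalid" if "cheese" in claim else "valid"


ask = with_verification(fake_model, split_sentences, fake_network)
answer = ask("Tell me two facts")
print(answer.trusted, answer.verdicts)
```

The design point is that `generate` can be any existing model call; swapping models doesn’t touch the verification path.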

There’s also an economic layer that keeps everyone honest. Validators stake tokens to participate, and they earn rewards when their votes match the consensus. If they try to cheat or simply get it wrong, they risk losing that stake. Transaction fees fund the process, and governance lets participants tweak things like how validators get chosen or how disputes get resolved. The token’s value, then, comes from actual demand for verification, not just hype about model performance.
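
To see how the incentives could play out in a single round, here’s a toy settlement function. The payout rule (fees shared pro rata by stake among validators who matched consensus) and the 10% `slash_fraction` are assumptions for illustration, not Mira’s published parameters.

```python
def settle_round(
    stakes: dict[str, float],
    votes: dict[str, bool],
    consensus: bool,
    fee_pool: float,
    slash_fraction: float = 0.1,  # hypothetical penalty rate
) -> dict[str, float]:
    """Return updated stakes after one verification round (hypothetical rules)."""
    new_stakes = dict(stakes)
    agreeing = [v for v, vote in votes.items() if vote == consensus]
    dissenting = [v for v, vote in votes.items() if vote != consensus]
    agreeing_stake = sum(stakes[v] for v in agreeing)

    if agreeing_stake > 0:
        for v in agreeing:
            # Fees go to validators who matched consensus, weighted by their stake.
            new_stakes[v] += fee_pool * stakes[v] / agreeing_stake
    for v in dissenting:
        # Validators who voted against consensus lose a fraction of their stake.
        new_stakes[v] -= stakes[v] * slash_fraction
    return new_stakes


stakes = {"val-a": 100.0, "val-b": 100.0, "val-c": 200.0}
votes = {"val-a": True, "val-b": True, "val-c": False}
after = settle_round(stakes, votes, consensus=True, fee_pool=10.0)
print(after)
```

Here `val-a` and `val-b` each gain 5 from the fee pool, while `val-c` loses 20 despite holding the largest stake, which is the point: stake size doesn’t protect a validator who votes against the outcome.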

Of course, this approach isn’t perfect. Distributed verification takes time and costs money, so it won’t work for every real-time need. The system’s accuracy also depends on having a diverse, well-designed pool of validators and solid claim structuring. If claim structuring gets sloppy or the validator pool gets concentrated, you lose the reliability edge.
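
Why diversity matters can be shown with a short calculation. This is the classic Condorcet jury-theorem setup, not something specific to Mira: if each validator is independently right with probability `p`, a majority of `n` validators is right more often than any single one. If the validators all lean on the same sources, their errors are correlated, and in the extreme case the whole pool is no better than one validator at `p`.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """P(more than half of n independent validators are right), each right with probability p.

    Use odd n to avoid ties.
    """
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


# Independent validators, each 80% accurate.
for n in (1, 3, 7, 15):
    print(n, round(majority_correct(n, 0.8), 4))
```

With `p = 0.8`, going from one validator to three already lifts majority accuracy from 0.8 to 0.896. That gain only holds while errors stay independent, which is why a concentrated validator pool erodes the benefit.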

And there are always unknowns. AI moves fast, and today’s verification tricks won’t always work as models get more complex. Mira’s framework isn’t about stopping hallucinations forever; it’s about building a process that can adapt as new AI behaviors pop up.

Here’s what’s interesting for startups: reliability stops being something you have to build and maintain yourself. Instead, it becomes a shared infrastructure layer. Mira reframes trust in AI outputs not as something that lives inside your model, but as something you can negotiate, verify, and record across a distributed network. Whether startups jump on board probably comes down to how easily these verification tools fit into the rapid-fire development cycles they rely on.

@Mira - Trust Layer of AI $MIRA #Mira