Fragment 31 was already in the queue when I noticed the delay.

Not a network delay. The round propagated normally on Mira consensus. Evidence hash anchored, claim decomposition clean. Same arrival time across the validator set.

One model just… wasn’t answering.

Independent AI validators usually land in a tight burst: affirm, reject, abstain. You feel the round start leaning as stake-weighted consensus attaches to the first finishers.

This time the first four responses dropped fast.

affirm

affirm

reject

affirm

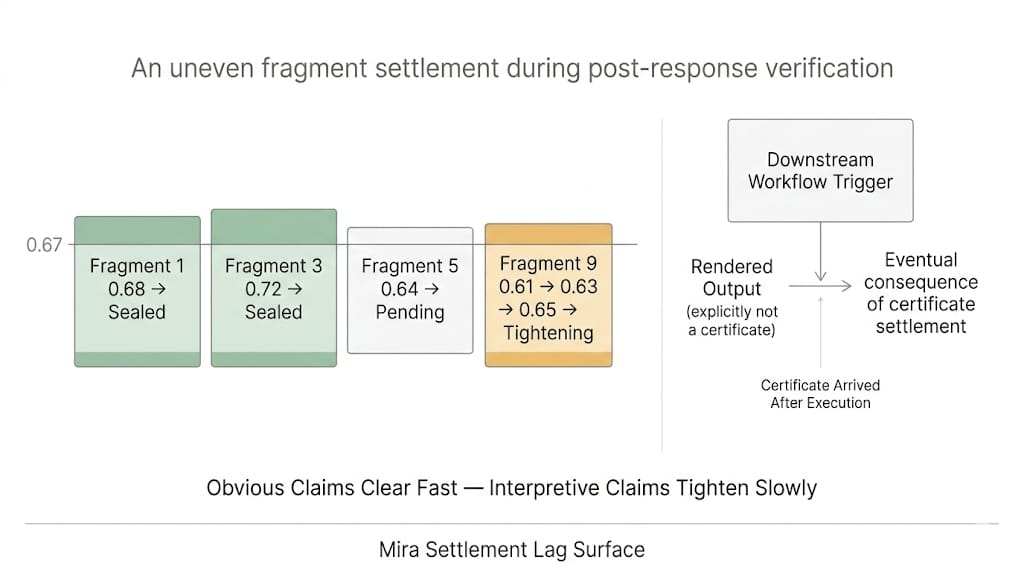

Confidence climbed to 0.61.

Then nothing.

The Mira validator panel still showed one model evaluating. Spinner icon beside the node address. Not failed. Just running like it had all day.

High-entropy claim, apparently.

I opened the evaluation trace.

The fragment wasn’t long. One regulatory interpretation tied to a citation chain deep enough that the model had to follow multiple hops. Mira's verification evidence path branched across linked references instead of resolving to one clean source.

Most validators finished in under four seconds.

This one crossed ten.

And you could see the behavior change. People stopped voting. The round didn’t stall; it leaned, without the slow vote. Everyone acted like the missing weight was already an answer.

I checked the validator stake map.

That lagging model belonged to a mid-weight validator cluster. Not the biggest pool, but heavy enough that the rest of us were waiting to see which way it would land.

Another validator pushed an affirm anyway, trying to close the round on momentum.

The spinner kept turning.

I pulled the compute delegation trace. That validator had offloaded evaluation to a secondary inference node. Cheaper tier, maybe. Or just overloaded. Compute delegation saved cost. It spent certainty.

Round timer kept running.

The queue behind Fragment 31 started building. Not a dramatic spike, just enough that the scheduler started routing around it. Fast validators on Mira kept clearing easy fragments; this one sat there like a toll gate.

I caught myself staring at the spinner.

Waiting for someone else’s machine to finish thinking.

The server rack beside me hummed louder than usual. Cooling fans spun up as load shifted across the cluster. Warm air brushing past the side of the desk. I wanted it to time out. That’s… not great.

The coordination channel started twitching.

“Model stuck?”

“No. Still evaluating.”

Same citation path. Different evaluation latency. Not a mystery. Just model variance showing up where it hurts.

Evaluation time crossed twenty seconds.

Then the response arrived.

reject.

Confidence didn’t drift down. It dropped.

Back under.

The round that had been leaning toward a seal just opened again.

Late stake hit the round like a shove. Not because the slow model is “more correct,” but because its weight arrives after everyone else has already mentally priced in an outcome.
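A rough way to see why one late reject can undo a lean is to treat confidence as the affirming share of decisive stake. This is a minimal sketch under that assumption only: the stake numbers, the `confidence` function and the 0.5 lean line are all hypothetical, picked so the four early votes land on 0.61. None of it is Mira's documented scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    validator: str  # hypothetical validator label
    stake: float    # hypothetical stake weight
    verdict: str    # "affirm", "reject", or "abstain"


# Hypothetical lean line; not a documented Mira parameter.
LEAN_THRESHOLD = 0.5


def confidence(votes: list[Vote]) -> float:
    """Share of decisive (non-abstaining) stake that voted affirm.

    Assumed model: abstentions and validators that have not answered yet
    contribute nothing, so early finishers set the lean on their own.
    """
    decisive = [v for v in votes if v.verdict in ("affirm", "reject")]
    total = sum(v.stake for v in decisive)
    if total == 0:
        return 0.0
    affirm = sum(v.stake for v in decisive if v.verdict == "affirm")
    return affirm / total


# The four fast responses: affirm, affirm, reject, affirm.
early = [
    Vote("v1", 20, "affirm"),
    Vote("v2", 25, "affirm"),
    Vote("v3", 39, "reject"),
    Vote("v4", 16, "affirm"),
]
# The mid-weight validator's reject arrives last.
late = early + [Vote("slow", 30, "reject")]

print(round(confidence(early), 2))            # 0.61
print(round(confidence(late), 2))             # 0.47
print(confidence(late) >= LEAN_THRESHOLD)     # False
```

Nothing about the early votes changes between the two calls; only the denominator grows when the slow stake lands, which is enough to drop the score under the assumed lean line.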

Everyone who affirmed early just inherited the review cost.

Each of them now had to decide whether to stick with it or revisit the evidence-hash chain they’d already skimmed.

The queue behind Fragment 31 stopped moving entirely.

The easy fragments kept sealing. Fragment 31 kept the parent response provisional.

Not because the network broke.

Because the validators that close rounds fastest keep taking the rewards.

I watched the fragment state again.

cert_state: provisional.

lean_state: true.

No hardened seal.

The lagging model in Mira's validator consensus had finished.

The round hadn’t.

And the worst part wasn’t the reject. It was how long it took to arrive: long enough for the rest of the network to start acting like it didn’t exist.

@Mira - Trust Layer of AI #Mira $MIRA