A few weeks ago, I was reading about different artificial intelligence projects entering the Web3 space. Many of them were promising faster models, larger datasets, and more powerful AI capabilities. But one thought kept coming back to me: speed is impressive, but accuracy is more important.

This is where @mira_network started to feel different from many other AI-focused projects.

Instead of competing in the race to build bigger models, Mira focuses on something more foundational: verification. In simple terms, the project is trying to answer a question that most AI systems still struggle with: How can we prove that an AI-generated answer is correct?

The Problem Most AI Systems Ignore

Anyone who has used AI tools regularly has seen this problem. AI models often provide answers that sound confident and convincing, but sometimes those answers are incorrect. In technical terms, this is known as AI hallucination.

For casual conversations, this may not matter much. But imagine AI being used for financial analysis, legal documents, medical research, or automated trading systems. In those cases, incorrect information can have serious consequences.

From my perspective, this is one of the biggest gaps in the current AI ecosystem. Most companies are focused on generation, while very few are focused on verification.

That is the gap Mira is trying to fill.

Mira’s Core Idea: Verification as Infrastructure

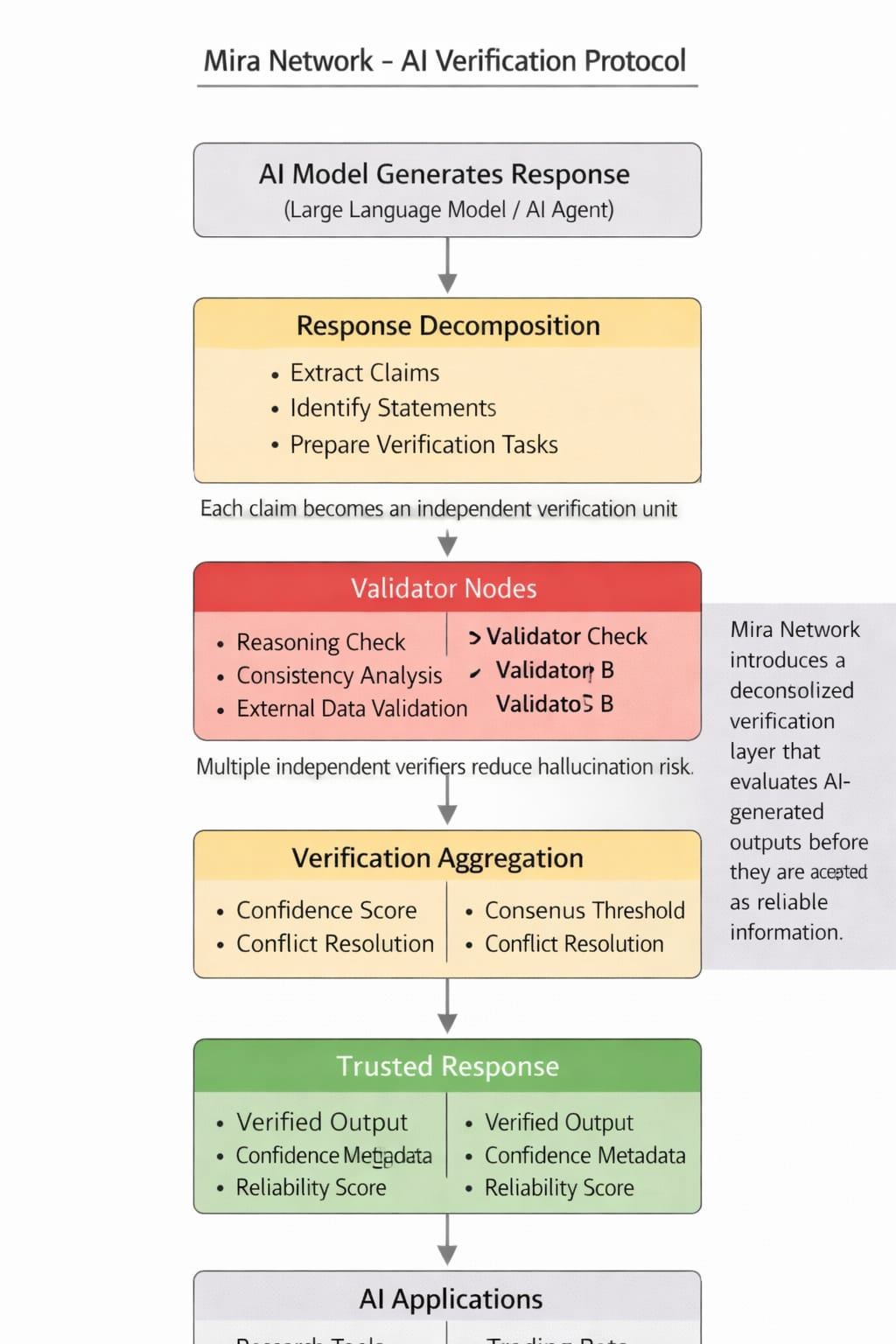

The central idea behind Mira is surprisingly straightforward. Instead of assuming that an AI output is reliable, Mira introduces a system where AI responses can be verified through a decentralized network.

This means the process does not rely on a single authority. Instead, multiple participants in the network can validate whether an AI-generated response meets certain verification standards.

In practice, this creates something similar to a trust layer for AI outputs.

Think about how blockchain technology verifies financial transactions. Before a transaction becomes final, the network confirms it through a consensus mechanism. Mira is exploring a similar concept, applied to AI-generated information. This is what makes the project conceptually interesting.
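The post does not spell out Mira's actual consensus rules, so here is a minimal sketch of the general idea, assuming a simple two-thirds supermajority vote among independent validators. `Vote`, `reach_consensus`, and the 2/3 threshold are hypothetical choices for illustration, not Mira's real protocol.

```python
from collections import Counter
from dataclasses import dataclass
from fractions import Fraction
from typing import Optional


@dataclass(frozen=True)
class Vote:
    """One validator's verdict on a single AI-generated response (hypothetical type)."""
    validator_id: str
    is_valid: bool


def reach_consensus(votes: list[Vote], threshold: Fraction = Fraction(2, 3)) -> Optional[bool]:
    """Return the verdict backed by at least `threshold` of validators, or None if neither is.

    Each validator is counted once; later duplicate votes from the same ID are ignored.
    """
    if not Fraction(1, 2) < threshold <= 1:
        raise ValueError("threshold must be a strict majority, in (1/2, 1]")

    seen: set[str] = set()
    tally: Counter = Counter()
    for vote in votes:
        if vote.validator_id in seen:
            continue  # one validator, one vote
        seen.add(vote.validator_id)
        tally[vote.is_valid] += 1

    total = sum(tally.values())
    if total == 0:
        return None  # nobody voted, so nothing is verified
    for verdict in (True, False):
        # Exact rational comparison avoids floating-point edge cases at the threshold.
        if Fraction(tally[verdict], total) >= threshold:
            return verdict
    return None  # no supermajority: the response stays unverified
```

With three validators where two accept the response, `reach_consensus` returns `True`; with a one-to-one split it returns `None`, meaning the answer is simply left unverified rather than forced into a verdict. Requiring a strict majority above one half guarantees that at most one verdict can ever clear the bar.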

How the Verification Layer Could Work

The architecture Mira is developing focuses on a few important components.

First, the network can evaluate AI outputs using verification mechanisms that check consistency, reasoning, and correctness. Instead of relying on the AI model itself to confirm accuracy, external verification processes are involved.

Second, the system is designed to support decentralized participation. Validators or contributors within the ecosystem may help review or confirm outputs, depending on how the verification framework evolves.

Third, the project aims to make verification easy to integrate into other AI applications. In other words, Mira is not just building a single AI tool. It is creating infrastructure that developers can potentially plug into their own AI systems.

If this works effectively, it could turn Mira into something like a reliability layer for AI platforms.

Why This Matters for Developers

From a developer’s perspective, verification can save significant time and risk.

Today, teams building AI-powered applications often need to design their own systems to filter incorrect outputs. This can involve complex validation pipelines, additional models, or manual review processes.

If Mira provides a reliable verification infrastructure, developers may be able to integrate that layer instead of building it from scratch.

That could be useful in several scenarios:

AI research tools verifying generated insights

Automated financial analysis systems checking predictions

AI assistants confirming factual responses before presenting them to users

Enterprise platforms ensuring AI outputs meet reliability standards

These types of use cases highlight why verification may become an important part of the AI stack.
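As a rough illustration of what "plugging in" a verification layer might look like on the application side, here is a minimal sketch in which an answer reaches the user only after an external verifier accepts it. `Verifier`, `answer_with_verification`, and `LookupVerifier` are hypothetical names made up for this example, not Mira's API; the lookup table just stands in for whatever real verification network would sit behind the interface.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class Verifier(Protocol):
    """Hypothetical interface for an external verification layer (not Mira's actual API)."""

    def verify(self, prompt: str, answer: str) -> bool: ...


@dataclass
class GatedResult:
    answer: str
    verified: bool


def answer_with_verification(
    prompt: str,
    generate: Callable[[str], str],
    verifier: Verifier,
    fallback: str = "I could not verify an answer to that question.",
) -> GatedResult:
    """Generate an answer, then show it only if the external verifier accepts it."""
    candidate = generate(prompt)
    if verifier.verify(prompt, candidate):
        return GatedResult(answer=candidate, verified=True)
    # The model is never asked to check itself; an unverified answer is withheld.
    return GatedResult(answer=fallback, verified=False)


class LookupVerifier:
    """Toy stand-in: accepts an answer only if it matches a small table of known facts."""

    def __init__(self, facts: dict[str, str]) -> None:
        self.facts = facts

    def verify(self, prompt: str, answer: str) -> bool:
        return self.facts.get(prompt) == answer
```

For example, with `LookupVerifier({"What is the capital of France?": "Paris"})`, a generator that answers "Paris" passes through with `verified=True`, while one that answers "Lyon" is replaced by the fallback message. The point of the interface is that the application code stays the same whichever verifier sits behind it.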

The Role of the MIRA Token

Projects like Mira also rely on token-driven ecosystems to coordinate participation.

The MIRA token may serve several roles within the network, such as incentivizing participants who contribute to verification processes or supporting governance decisions related to how the verification system evolves.

Token mechanisms can also encourage long-term participation from validators, researchers, and developers who help maintain the reliability of the network.

While token economics will likely continue to evolve as the project grows, the key idea is aligning incentives around accuracy and trust.
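One common way staking networks align incentives is to reward validators who agree with the final consensus and slash part of the stake of those who do not. The details of MIRA's token economics are not covered here, so treat the following only as a generic sketch under that assumption; `Validator`, `settle_round`, the reward of 1 token, and the 5% slash rate are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    """Hypothetical validator account with a staked token balance."""
    name: str
    stake: float


def settle_round(
    validators: dict[str, Validator],
    votes: dict[str, bool],
    consensus: bool,
    reward: float = 1.0,
    slash_fraction: float = 0.05,
) -> None:
    """Reward validators who matched the consensus verdict and slash those who did not.

    `reward` and `slash_fraction` are illustrative numbers, not MIRA token parameters.
    Validators that did not vote in this round are left unchanged.
    """
    for name, vote in votes.items():
        validator = validators[name]
        if vote == consensus:
            validator.stake += reward  # agreeing with consensus earns a flat reward
        else:
            validator.stake -= validator.stake * slash_fraction  # disagreeing costs stake
```

Under this toy rule, a validator with 100 tokens who votes with the consensus ends the round at 101, while one who votes against it drops to 95. Over many rounds, careless or dishonest verification becomes expensive, which is the kind of alignment around accuracy the token is meant to encourage.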

Ecosystem Growth and Future Potential

One thing I personally find interesting about Mira is that its value may increase as AI adoption continues to expand.

The more industries rely on AI systems, the more important verification and accountability become.

If AI outputs start influencing financial decisions, research conclusions, or automated systems, people will naturally demand stronger ways to confirm accuracy.

This is where Mira’s infrastructure could become relevant.

Rather than replacing AI models, the project is positioning itself as something that supports and strengthens the AI ecosystem itself.

A Personal Perspective

After exploring several AI-related crypto projects, I noticed that many focus heavily on the excitement of new models and capabilities. But infrastructure layers often create the most lasting impact.

When I look at Mira, I see a project that is addressing a practical issue rather than chasing hype. The idea of verifiable AI outputs might sound technical at first, but it directly connects to a basic human need: trust.

In my opinion, if Mira continues developing strong verification mechanisms and attracts developers to its ecosystem, it could quietly become one of the more important pieces in the broader AI infrastructure landscape.

Because in the future of AI, generating answers will be easy. Proving those answers are correct may be what really matters.

@Mira - Trust Layer of AI #Mira $MIRA