I still remember the small moment when the thought first unsettled me. It wasn’t dramatic or particularly important at the time. I was simply reading about new systems designed to verify information more carefully, especially in the world of artificial intelligence. The idea sounded sensible at first. If machines can generate endless answers, then someone has to check whether those answers are actually true.

But somewhere in the middle of that reading, a quiet question appeared in my mind and stayed there longer than I expected: What if the effort required to prove something eventually becomes larger than the thing we’re trying to prove?

It’s a strange thought once it settles in.

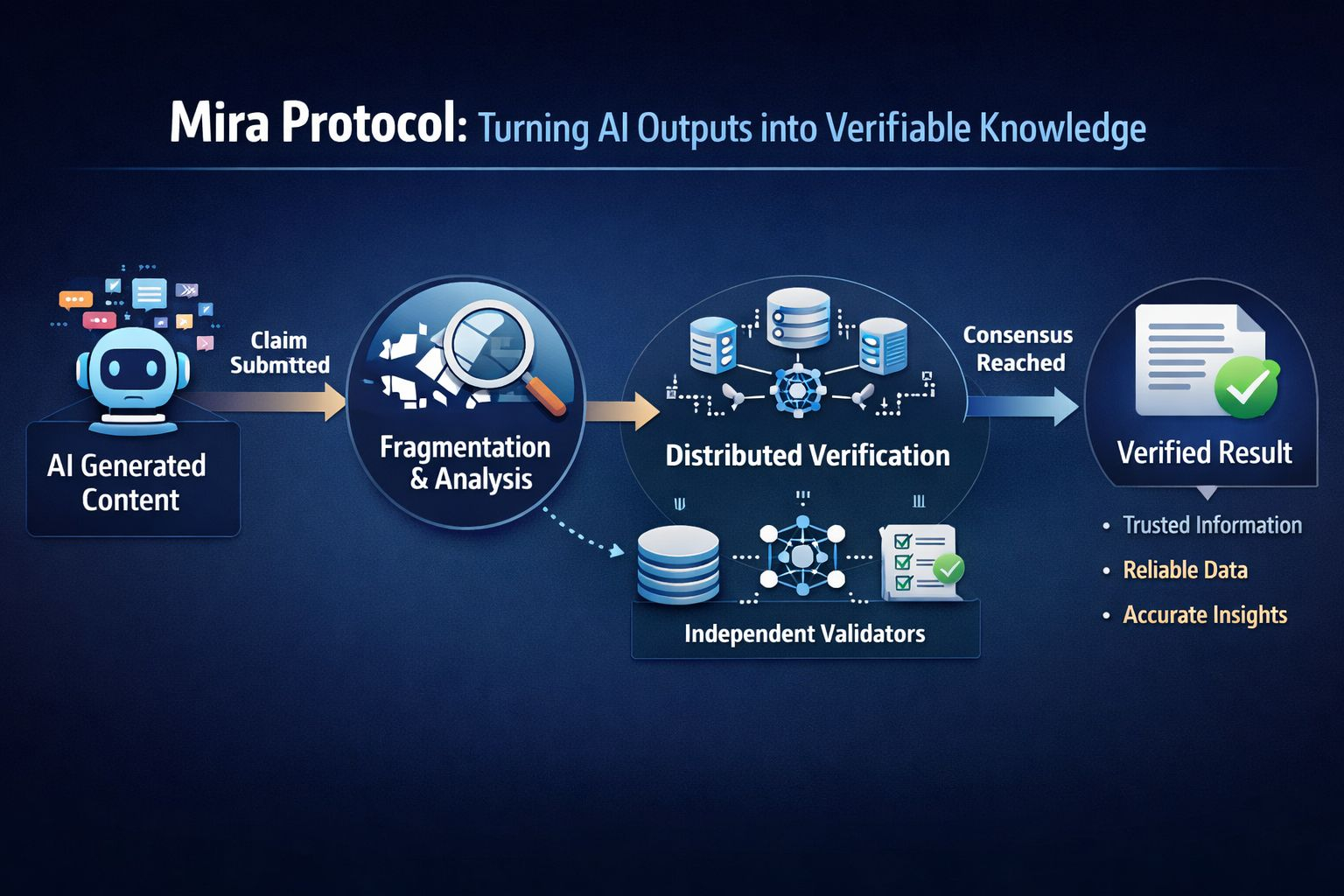

Some newer verification systems—like Mira Protocol—work by taking a statement and breaking it into smaller parts. Each small piece, or fragment, is evaluated separately. Different systems or validators examine these fragments, compare their conclusions, and only when enough agreement appears does the original claim begin to look trustworthy.
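As a rough illustration of that flow, here is a minimal sketch in Python. It is not Mira Protocol's actual implementation. The function names, the sentence-based splitting, and the two-thirds agreement threshold are all assumptions made for the example, and the validators are stand-in functions rather than real models.

```python
from typing import Callable, Dict, List

# Hypothetical validator type: takes a fragment, returns True if it accepts it.
Validator = Callable[[str], bool]


def split_into_fragments(claim: str) -> List[str]:
    """Naively split a claim into sentence-level fragments (an assumption;
    a real system would likely use something far more careful)."""
    parts = [part.strip() for part in claim.split(".")]
    return [part for part in parts if part]


def fragment_is_accepted(
    fragment: str, validators: List[Validator], threshold: float
) -> bool:
    """A fragment passes when the share of approving validators meets the threshold."""
    votes = [validator(fragment) for validator in validators]
    return sum(votes) / len(votes) >= threshold


def verify_claim(
    claim: str, validators: List[Validator], threshold: float = 2 / 3
) -> Dict[str, object]:
    """Check every fragment separately; the claim passes only if all fragments do."""
    fragments = split_into_fragments(claim)
    results = {
        fragment: fragment_is_accepted(fragment, validators, threshold)
        for fragment in fragments
    }
    return {
        "accepted": bool(fragments) and all(results.values()),
        "fragments": results,
        # Total validator calls: this is where the machinery starts to grow.
        "checks": len(fragments) * len(validators),
    }


if __name__ == "__main__":
    # Toy validators: two reject anything mentioning cheese, one accepts everything.
    toy_validators: List[Validator] = [
        lambda fragment: "cheese" not in fragment,
        lambda fragment: "cheese" not in fragment,
        lambda fragment: True,
    ]
    claim = "Water boils at 100 C at sea level. The moon is made of cheese."
    print(verify_claim(claim, toy_validators))
```

Even in this toy version, a two-sentence claim reviewed by three validators already needs six separate checks.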

The intention behind this approach is careful and understandable. Artificial intelligence has a habit of producing answers that sound confident even when the information isn’t fully reliable. Systems like Mira Protocol are trying to build something like a trust layer around machine-generated knowledge.

At first I appreciated the caution built into that idea. It felt responsible.

But the longer I thought about it, the more I noticed a subtle tension hiding inside the process.

A single statement rarely stays single for long in such systems. Once it’s divided into fragments, each fragment demands attention. It must be reviewed, compared, validated, and sometimes debated by different models or participants. Slowly the structure around the claim grows—more checks, more confirmations, more steps designed to remove doubt.

And then something unexpected happens.

The process of verification begins to outweigh the claim itself.

What once started as a simple statement now sits beneath layers of proof. The claim becomes smaller while the machinery designed to confirm it becomes larger. It’s a quiet reversal that’s easy to overlook.

The interesting part is that this pattern doesn’t belong only to technology.

You can see it in academic work, where years of effort might revolve around confirming a very narrow hypothesis. You can see it online, where a simple observation is sometimes met with long chains of references and evidence meant to defend or challenge it. Evidence is important, of course. But sometimes the evidence grows so large that the original idea almost disappears inside it.

Fragments have that effect.

A fragment carries information, but it also carries a sense of incompleteness. When something is broken into parts, the surrounding context often fades away. Reassembling the meaning requires interpretation, and interpretation is rarely as clean as we hope.

Verification systems try to solve this by gathering more fragments and more signals of agreement. The assumption is that enough small confirmations will eventually produce certainty.

But certainty has a cost.

Every check requires time. Every validator requires resources. Every layer of consensus adds complexity to the system that supports it. Gradually the question shifts from “Is this true?” to something more practical: “Is this worth the effort required to prove it?”
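One way to picture that second question is as a simple comparison between what verification costs and what being wrong would cost. The sketch below is only a back-of-the-envelope model under assumed inputs. The function name, the idea of a flat cost per check, and every number in the example are hypothetical, not figures from any real system.

```python
def worth_verifying(
    num_fragments: int,
    num_validators: int,
    cost_per_check: float,
    prob_wrong: float,
    cost_if_wrong: float,
) -> bool:
    """Return True when verification costs less than the expected cost of an error.

    Assumes every fragment is checked once by every validator at a flat cost,
    and that the harm of an unverified error can be put on the same scale.
    """
    verification_cost = num_fragments * num_validators * cost_per_check
    expected_loss = prob_wrong * cost_if_wrong
    return verification_cost < expected_loss


# A casual observation: cheap to get wrong, so heavy checking does not pay off.
casual = worth_verifying(
    num_fragments=5, num_validators=7, cost_per_check=0.01,
    prob_wrong=0.1, cost_if_wrong=1.0,
)

# A medical conclusion: the same machinery, but an error is far more costly.
medical = worth_verifying(
    num_fragments=5, num_validators=7, cost_per_check=0.01,
    prob_wrong=0.1, cost_if_wrong=10_000.0,
)

print(casual, medical)
```

The arithmetic is trivial on purpose. The hard part is choosing the inputs, especially how much an error would really cost.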

That shift is subtle but important.

Some truths absolutely deserve heavy verification. Scientific discoveries, medical conclusions, and legal evidence require careful proof because the consequences of being wrong are serious. But many statements in our daily flow of information are smaller than that. They help us think, explore ideas, or understand a situation more clearly, even if they are not tested through elaborate systems of validation.

When the infrastructure of proof becomes too large, something delicate begins to disappear.

The original thought loses its simplicity.

The more I reflected on this, the more I realized the discomfort I felt wasn’t about technology itself. It was about our relationship with certainty. We often assume that adding more verification will automatically lead to deeper understanding. Sometimes it does. But sometimes it simply builds a larger structure around a very small piece of truth.

Fragments can protect accuracy.

But fragments can also quietly reshape the way we pursue knowledge.

And the realization that stayed with me, long after I finished reading about these systems, was surprisingly simple.

Finding the truth is difficult.

But deciding how much we are willing to spend to prove it might be even harder.