I’ve been around crypto long enough to notice when a new narrative starts forming. Sometimes it begins loudly with hype and bold promises, and other times it grows quietly in the background until people slowly start paying attention. The intersection between AI and blockchain feels like one of those areas right now. Over the past year, I’ve seen more and more projects trying to connect these two worlds. Some focus on decentralized compute, others talk about data marketplaces or autonomous agents. But when I first came across Mira Network, I noticed the conversation around it was slightly different.

Instead of trying to build the smartest AI model or the biggest decentralized GPU network, Mira seems to focus on something that people often overlook when talking about artificial intelligence: reliability. Anyone who has used AI tools regularly has probably experienced the same thing I have. You ask a question and the answer sounds perfect. It’s structured well, the explanation flows logically, and the tone feels confident. But then you double-check the details and realize parts of it were simply wrong. Sometimes it’s a small factual mistake. Other times it’s something that never existed at all.

These so-called hallucinations have become a normal part of working with modern AI models. Most of the time they’re harmless, especially when the AI is used for casual tasks like writing ideas or brainstorming. But the situation starts to look different when AI is used for research, automation, financial analysis, or decision-making. If those systems are going to operate more independently in the future, the reliability problem becomes much harder to ignore.

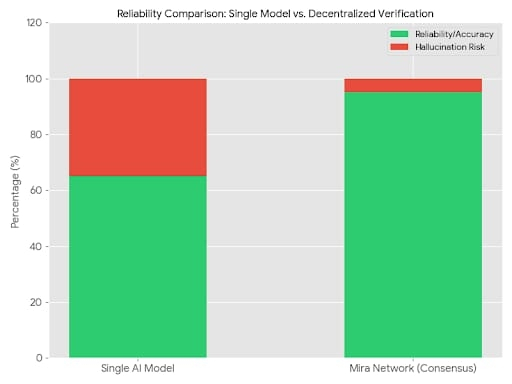

That’s the issue Mira Network appears to be thinking about. From what I’ve observed, the project is trying to create a decentralized verification layer for AI outputs. Instead of simply trusting one model’s answer, the system treats each response as a set of claims that need confirmation. It breaks a complex AI response into smaller, individually checkable claims and distributes them across a network of independent AI models, each of which checks whether a given claim is correct.

When I first read about this mechanism, it reminded me of something that has always been at the core of blockchain technology: consensus. In crypto networks, we don’t rely on a single authority to confirm whether a transaction is valid. Instead, multiple participants independently verify the same information. Mira seems to apply that same philosophy to AI. Rather than trusting a single system’s output, the network allows multiple models to examine the same claim and reach a collective conclusion.

If enough of them agree, the information can be treated as verified. If they disagree, the result remains uncertain rather than being presented as fact. It’s a simple idea in theory, but the implications are interesting. AI systems today are powerful, but they’re also probabilistic. They generate responses based on patterns and likelihood rather than guaranteed accuracy. Mira’s approach attempts to introduce a kind of verification layer on top of that uncertainty.
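To make the consensus idea concrete for myself, I put together a small Python sketch. This is my own illustration, not Mira’s actual implementation: the sentence-based claim splitting, the toy verifiers built from fixed fact lists, the two-thirds threshold, and the extra "rejected" state are all assumptions of mine.

```python
from typing import Callable, Dict, List, Set

# A verifier takes a claim and returns True (accept) or False (reject).
# In a real network these would be independent AI models; these are toy stand-ins.
Verifier = Callable[[str], bool]


def make_verifier(known_facts: Set[str]) -> Verifier:
    """Build a toy verifier that accepts only claims in its own fact list."""
    return lambda claim: claim in known_facts


def split_into_claims(response: str) -> List[str]:
    """Naively treat each sentence as one claim (a simplification)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> str:
    """Label a claim by the share of verifiers that accept it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    agree = sum(verifier(claim) for verifier in verifiers) / len(verifiers)
    if agree >= threshold:
        return "verified"
    if 1 - agree >= threshold:
        return "rejected"
    return "uncertain"  # no clear majority either way


def verify_response(response: str, verifiers: List[Verifier]) -> Dict[str, str]:
    """Split a response into claims and label each one."""
    return {claim: verify_claim(claim, verifiers) for claim in split_into_claims(response)}


# Four hypothetical verifiers with slightly different knowledge.
verifiers = [
    make_verifier({"Paris is the capital of France", "The Nile is in Africa"}),
    make_verifier({"Paris is the capital of France", "The Nile is in Africa"}),
    make_verifier({"Paris is the capital of France"}),
    make_verifier({"Paris is the capital of France"}),
]

results = verify_response(
    "Paris is the capital of France. The Nile is in Africa. The moon is made of cheese.",
    verifiers,
)
for claim, label in results.items():
    print(f"{label:>9}: {claim}")
```

With these toy inputs, the first claim gets all four votes, the second splits two against two and stays uncertain, and the third gets none. The split case is the one I find most interesting, because a single model would have simply asserted an answer.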

The architecture behind the network also follows familiar crypto patterns. Node operators contribute computing resources and participate in verifying AI outputs. These participants are economically incentivized through rewards, while incorrect verification or dishonest behavior can lead to penalties. It’s a structure that echoes many other decentralized networks where security depends on aligning incentives with honest participation.
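The reward-and-penalty structure can be sketched in a few lines as well. Again, this is a rough illustration under my own assumptions: I don’t know Mira’s real parameters, so the flat reward, the 10% slash, and the idea of settling against a single agreed outcome are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

REWARD = 1.0           # hypothetical flat reward for matching the agreed outcome
SLASH_FRACTION = 0.10  # hypothetical share of stake lost for voting against it


@dataclass
class Node:
    name: str
    stake: float


def settle_round(nodes: List[Node], votes: Dict[str, bool], outcome: bool) -> None:
    """Reward nodes whose vote matched the outcome and slash the rest."""
    for node in nodes:
        if votes[node.name] == outcome:
            node.stake += REWARD
        else:
            node.stake -= node.stake * SLASH_FRACTION


nodes = [Node("alice", 100.0), Node("bob", 100.0), Node("carol", 100.0)]
votes = {"alice": True, "bob": True, "carol": False}

# Assume the network settled on True for this claim.
settle_round(nodes, votes, outcome=True)
for node in nodes:
    print(node.name, round(node.stake, 2))
```

The point of a design like this is that voting carelessly or dishonestly costs more than the reward for a single correct vote, so honest participation is the cheaper strategy over time.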

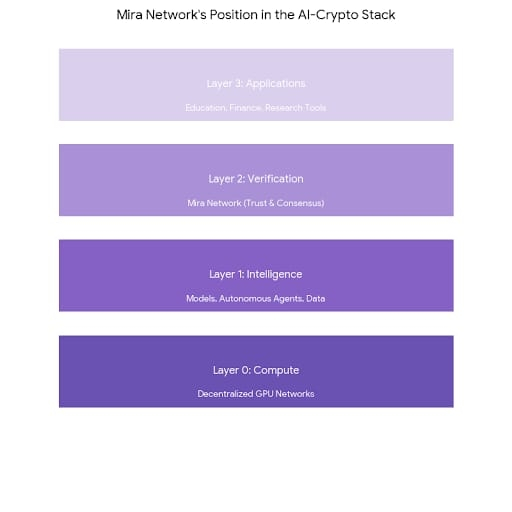

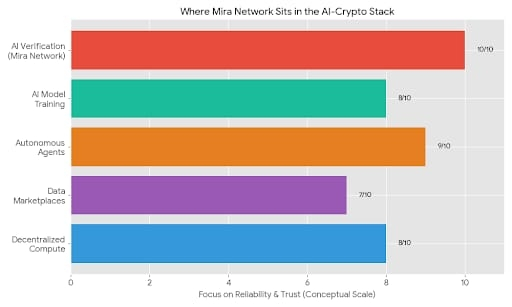

I’ve noticed that several projects in the AI-crypto space are exploring similar economic coordination models. The difference with Mira is that the network isn’t trying to compete directly with major AI models or centralized cloud infrastructure. Instead, it positions itself as a layer that sits above those systems, validating the information they produce.

This positioning is interesting because it doesn’t necessarily require Mira to replace existing AI tools. In theory, it could work alongside them. Different models could generate outputs, and the network would act as a mechanism that evaluates and verifies those results before they are trusted.

Another aspect that caught my attention is the early ecosystem activity surrounding the project. Reports suggest that Mira’s applications and tools have already attracted a few million users interacting with the system in different ways. A significant portion of this activity seems to come from community participation campaigns and incentive programs. The project has been running global leaderboard events where users interact with AI tools, verify content, or contribute to the ecosystem while earning points and recognition.

If you’ve been in crypto for a while, this kind of early participation strategy probably looks familiar. Many networks bootstrap their communities through reward systems before real economic demand develops. It helps generate attention and gives people a reason to explore the technology. But it also means that early numbers don’t always reflect long-term adoption.

That’s something I’ve seen happen many times before. A project launches with strong engagement during its incentive phase, but once rewards slow down, activity drops sharply. The networks that survive are usually the ones where developers continue building and users keep returning even without extra incentives.

So when I look at Mira, one of the main things I’m curious about is how developers respond to the verification layer concept. Infrastructure in crypto only becomes meaningful when builders start integrating it into real applications. If AI tools begin relying on Mira’s verification process to improve reliability, that could create a natural demand for the network.

Another factor that often determines success in crypto infrastructure is ecosystem gravity. Over time, certain platforms attract developers, liquidity, and users because they become useful hubs. Ethereum did this through smart contracts. Other networks did it through trading speed or specialized features. The question for Mira is whether verified AI outputs can become a strong enough use case to create that kind of gravitational pull.

In theory, there are several areas where reliable AI could be extremely valuable. Educational tools, research platforms, automated assistants, and even financial analysis systems could benefit from stronger verification mechanisms. If AI responses could be accompanied by cryptographic proof that multiple models confirmed the underlying claims, that might change how people interact with automated systems.
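What might such a proof look like? Here is a minimal sketch, assuming each verifier attaches a keyed tag to the hash of the claim it confirmed. I use HMAC from Python’s standard library purely as a stand-in: a real network would more plausibly use public-key signatures so that anyone could check them, and the verifier names and keys below are made up.

```python
import hashlib
import hmac
from typing import Dict


def claim_digest(claim: str) -> bytes:
    """Hash the claim so attestations bind to its exact text."""
    return hashlib.sha256(claim.encode("utf-8")).digest()


def attest(claim: str, key: bytes) -> str:
    """Produce a verifier's tag over the claim digest (HMAC as a stand-in for a signature)."""
    return hmac.new(key, claim_digest(claim), hashlib.sha256).hexdigest()


def count_valid_attestations(claim: str, attestations: Dict[str, str], keys: Dict[str, bytes]) -> int:
    """Count attestations that match the claim under each verifier's key."""
    valid = 0
    for name, tag in attestations.items():
        key = keys.get(name)
        if key is not None and hmac.compare_digest(tag, attest(claim, key)):
            valid += 1
    return valid


# Hypothetical verifier keys, for illustration only.
keys = {"model_a": b"key-a", "model_b": b"key-b", "model_c": b"key-c"}

claim = "Paris is the capital of France"
attestations = {name: attest(claim, key) for name, key in keys.items()}

original_count = count_valid_attestations(claim, attestations, keys)
tampered_count = count_valid_attestations("Lyon is the capital of France", attestations, keys)
print(original_count, tampered_count)
```

Because every tag is tied to the hash of the exact claim text, the attestations stop matching as soon as the claim is altered. That binding is what would let an application show "confirmed by N models" without the user having to take it on faith.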

At the same time, there are still plenty of open questions. Verification across multiple models could require significant computational resources. Coordinating those systems in a decentralized network might introduce delays or costs that limit real-time usage. These are the kinds of practical challenges that often determine whether an idea works outside of whitepapers.
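A quick back-of-the-envelope calculation shows why this matters. The numbers below are invented purely for illustration (I have no real cost or latency figures for Mira), but the shape of the arithmetic holds: the work grows with the number of claims multiplied by the number of verifying models.

```python
def verification_overhead(claims: int, verifiers: int, cost_per_call: float, seconds_per_call: float) -> dict:
    """Estimate total calls, cost, and best/worst-case latency for one response."""
    calls = claims * verifiers
    return {
        "calls": calls,
        "cost": calls * cost_per_call,
        # Worst case: every check runs one after another.
        "sequential_seconds": calls * seconds_per_call,
        # Best case: every check runs in parallel (ignores network and consensus overhead).
        "parallel_seconds": seconds_per_call,
    }


# Hypothetical inputs: 5 claims, 4 verifiers, 2 cost units and 1.5 seconds per check.
estimate = verification_overhead(claims=5, verifiers=4, cost_per_call=2, seconds_per_call=1.5)
print(estimate)
```

Even in this toy case, one answer turns into twenty model calls. Parallelism can hide much of the latency, but not the cost, which is why I suspect real-time use cases will be the harder ones to serve.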

The broader AI-crypto landscape is also evolving quickly. Over the past year I’ve seen a growing number of projects focusing on decentralized compute markets, AI agent frameworks, and data networks. Each of them is trying to occupy a different part of the stack. Some provide raw computing power, others focus on model training, and some aim to support autonomous digital agents.

Mira seems to sit in a different layer, closer to verification and trust. It’s almost like an oracle system for AI truth, which is an interesting place to position a network. But it’s still early enough that the long-term structure of this ecosystem isn’t clear yet.

One thing I’ve learned from watching crypto cycles is that the projects that eventually matter are often not the ones that dominate headlines in the beginning. Infrastructure sometimes grows slowly and quietly before it becomes essential. At the same time, there are also plenty of ambitious ideas that simply fade once the initial excitement disappears.

Right now, Mira feels like it’s somewhere in that early observation stage. The concept of verifying AI outputs through decentralized consensus is thoughtful and addresses a real weakness in current AI systems. The project has already attracted a growing community and early ecosystem activity, which suggests people are at least curious about the approach.

But curiosity and long-term adoption are very different things. The real test will come when the network has to support real applications, real developers, and real demand beyond early participation campaigns.

For now, I find the idea worth watching. The problem it’s trying to solve is genuine, and the combination of AI verification with blockchain consensus is a creative direction. At the same time, it’s still too early to know whether this approach will become a foundational part of the AI ecosystem or remain an experimental concept.

Like many things in crypto, the answer will probably reveal itself slowly over time. For the moment, Mira Network is simply another project on the radar: something to observe, something to revisit later, and something that might become more interesting once real activity starts flowing through the system.
