In the fast-moving world of artificial intelligence, most projects chase the same goals: more speed, more scale, and more impressive outputs. But Mira Network approaches the problem from a very different angle. Instead of focusing on how powerful AI can become, it focuses on a harder and more uncomfortable question: What happens when people start trusting AI answers too easily?

This question sits at the center of Mira’s philosophy.

Today, many AI systems are judged by how smoothly they generate language. If an answer sounds confident, structured, and intelligent, people tend to accept it. The problem is that fluency is not the same as reliability. An AI model can produce a polished explanation that sounds convincing while still containing subtle errors, misinterpretations, or exaggerated conclusions.

And once an answer appears complete, most users rarely stop to verify it. They read it, accept it, and move forward. That behavior creates a quiet but serious risk: AI can be wrong in a very persuasive way.

Mira Network seems to understand this problem better than most projects in the AI-crypto space.

Instead of trying to make AI outputs more impressive, Mira focuses on making trust harder to grant without verification. This shifts the conversation away from pure performance and toward something more important: judgment and accountability.

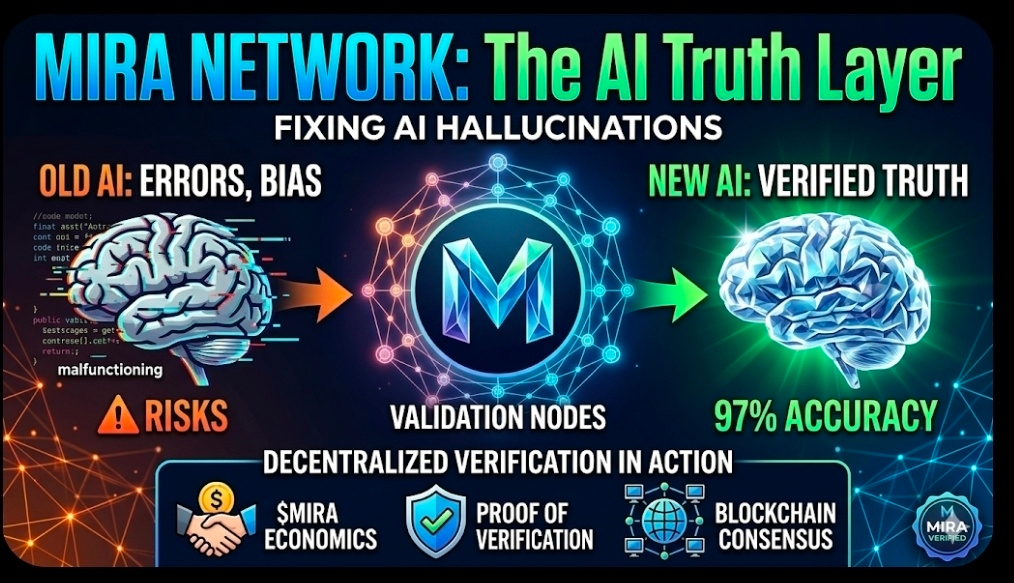

At the core of Mira’s approach is a simple but powerful idea: AI outputs should not be trusted just because one system produced them. They should be verified.

This means claims made by an AI system should pass through a process where they are checked and validated before being treated as reliable. Confidence should come after verification, not before it.

That concept sounds obvious, yet most of the current AI ecosystem still assumes that better models will eventually solve the trust problem on their own. Improved training, larger datasets, stronger retrieval systems, and better interfaces may reduce mistakes, but they cannot eliminate them entirely.

Even the most advanced model can still produce a convincing error.

Mira starts from a more disciplined assumption: the trust problem in AI is not only about better models. It is also about building systems that verify outputs.

Interestingly, this philosophy aligns closely with the principles behind blockchain technology. Crypto was originally built on skepticism toward centralized trust. Instead of relying on a single authority, blockchain systems use distributed validation to confirm information.

Mira applies that same mindset to artificial intelligence.

Rather than assuming intelligence automatically deserves trust, the project attempts to create a framework where AI outputs must earn credibility through verification.

This makes Mira less about AI production and more about AI accountability.
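
To make the idea of earning credibility through verification concrete, here is a minimal Python sketch. It is not Mira's actual protocol, which this article does not describe. It only illustrates the general pattern of distributed validation, where a claim is accepted once enough independent checkers agree. The names `verify_claim`, `make_lookup_verifier`, and `Verdict`, along with the two-thirds quorum, are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Iterable, Sequence

# Hypothetical type: a verifier is any function that judges a claim on its own.
Verifier = Callable[[str], bool]


@dataclass(frozen=True)
class Verdict:
    claim: str
    approvals: int
    total: int
    verified: bool


def verify_claim(
    claim: str,
    verifiers: Sequence[Verifier],
    quorum: Fraction = Fraction(2, 3),  # assumed threshold, not from the source
) -> Verdict:
    """Accept a claim only if at least `quorum` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for check in verifiers if check(claim))
    verified = Fraction(approvals, len(verifiers)) >= quorum
    return Verdict(claim, approvals, len(verifiers), verified)


def make_lookup_verifier(accepted_claims: Iterable[str]) -> Verifier:
    """Toy stand-in for an independent checker: approves claims it already holds."""
    accepted = frozenset(accepted_claims)
    return lambda claim: claim in accepted


# Three toy verifiers with slightly different beliefs.
verifiers = [
    make_lookup_verifier(["the sky is blue", "2 + 2 = 4"]),
    make_lookup_verifier(["2 + 2 = 4"]),
    make_lookup_verifier(["2 + 2 = 4", "the moon is made of cheese"]),
]

accepted = verify_claim("2 + 2 = 4", verifiers)
rejected = verify_claim("the moon is made of cheese", verifiers)
print(accepted.verified, rejected.verified)  # prints: True False
```

The point of the design is that no single verifier decides the outcome. The third checker wrongly believes the false claim, yet that claim still fails because it never reaches the quorum.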

Another reason the project feels grounded is that it reflects real user behavior. In practice, people rarely double-check AI responses. Most users are busy and prefer quick answers. When an AI response looks polished and complete, it naturally lowers the urge to question it.

Mira appears designed with that reality in mind. Instead of expecting users to become perfect fact-checkers, it tries to build verification directly into the system.

This approach becomes increasingly important as AI starts influencing decisions rather than just generating text.

The next phase of AI is not just about writing summaries or answering questions. It will increasingly help people interpret information, evaluate opportunities, analyze risks, and make decisions.

When AI operates in those areas, mistakes are no longer harmless.

A flawed output could influence investments, governance decisions, research conclusions, or business strategies. At that point, the consequences of error become real. AI mistakes stop being embarrassing glitches and become operational risks.

That is where Mira’s thesis starts to gain strength.

The project is essentially exploring whether trust in AI output can become a form of infrastructure, rather than something users simply assume. Instead of asking AI systems to generate more answers, Mira asks whether the environment around those answers can make false confidence harder to create.

Few projects are currently working at that layer.

Most AI platforms compete on capability—who can generate faster responses, smarter text, or more advanced automation. Mira, by contrast, is trying to compete on credibility.

That is a much more difficult market to build. Verification introduces friction. It can add time, cost, and complexity. Developers and users will only accept those trade-offs if the benefits are clearly visible.

This becomes Mira’s biggest challenge.

The success of the project will depend on whether verification becomes practically necessary, not just theoretically appealing. If people admire the concept but avoid using it because it feels inconvenient, Mira could remain a strong idea without widespread adoption.

However, if unverified AI outputs begin to feel risky, especially in environments where decisions carry real consequences, verification could become essential.

If that happens, systems like Mira could shift from being optional tools to becoming basic infrastructure, much like the security layers of the internet.

As a technology matures, its least visible systems often become its most important ones. When verification works well, users may barely notice it. They simply encounter fewer misleading outputs that have been accepted as trustworthy.

That absence of error can be difficult to market, but its value can be enormous.

Ultimately, Mira Network is not simply another AI project connected to blockchain technology. It represents an attempt to formalize skepticism in an age where machines can speak convincingly.

Instead of trusting answers because they sound intelligent, Mira tries to create a process where answers are trusted because they survived verification.

That ambition is narrower than many AI narratives, but it is also deeper. The project is not chasing the broadest story about artificial intelligence. Instead, it is exploring a specific and increasingly important problem: how to build trust in AI-generated information.

As AI becomes more involved in how people interpret data, evaluate risks, and make decisions, that problem will only grow more relevant.

Mira is positioning itself directly inside that gap between appearance and reliability.