Artificial intelligence is changing fast. Tools powered by language models can write code, analyze data, and even make decisions. But there is a big problem: reliability. Even the best models can give answers that are wrong. In low-risk situations this might not matter. However, when AI handles money, automation, or governance, reliability becomes very important.

This is the challenge that @Mira - Trust Layer of AI Network aims to solve. Its ecosystem focuses on building a system where AI outputs are checked before they are trusted, which helps make autonomous AI systems safer to use.

The Core Problem With Current AI Models

Most AI systems rely on language models trained on huge datasets. While these models are powerful, they still have issues:

* Hallucinations

AI models sometimes generate information that sounds right but is actually false.

* Lack of Verifiability

It can be hard to confirm whether an AI-generated answer is accurate without independent validation.

* Inconsistent Outputs

The same question asked twice can produce different responses.

* Risk in High-Stakes Environments

If AI agents manage assets, execute contracts, or make operational decisions, these inconsistencies could cause serious problems.

Because of these factors, many organizations are hesitant to automate decision-making using AI alone.

Why Reliability Matters for Autonomous AI

Autonomous AI systems are designed to act on their own. Instead of waiting for human approval, they analyze information and perform tasks automatically.

Examples could include:

* AI trading agents

* Governance tools

* Intelligent infrastructure management

* Autonomous software development agents

However, autonomy only works if outputs are dependable. Without verification mechanisms, an incorrect AI decision could spread quickly through automated systems. This is where blockchain-based verification models are gaining attention.

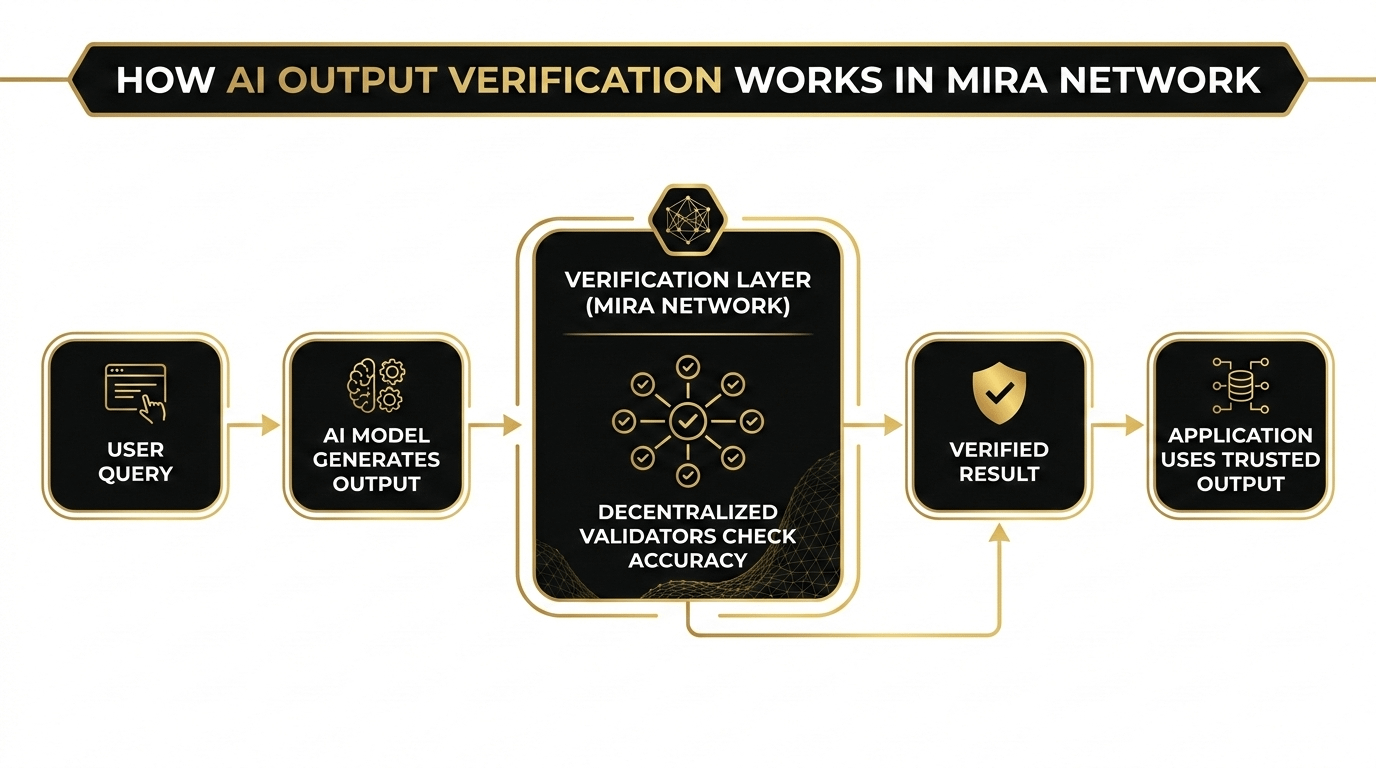

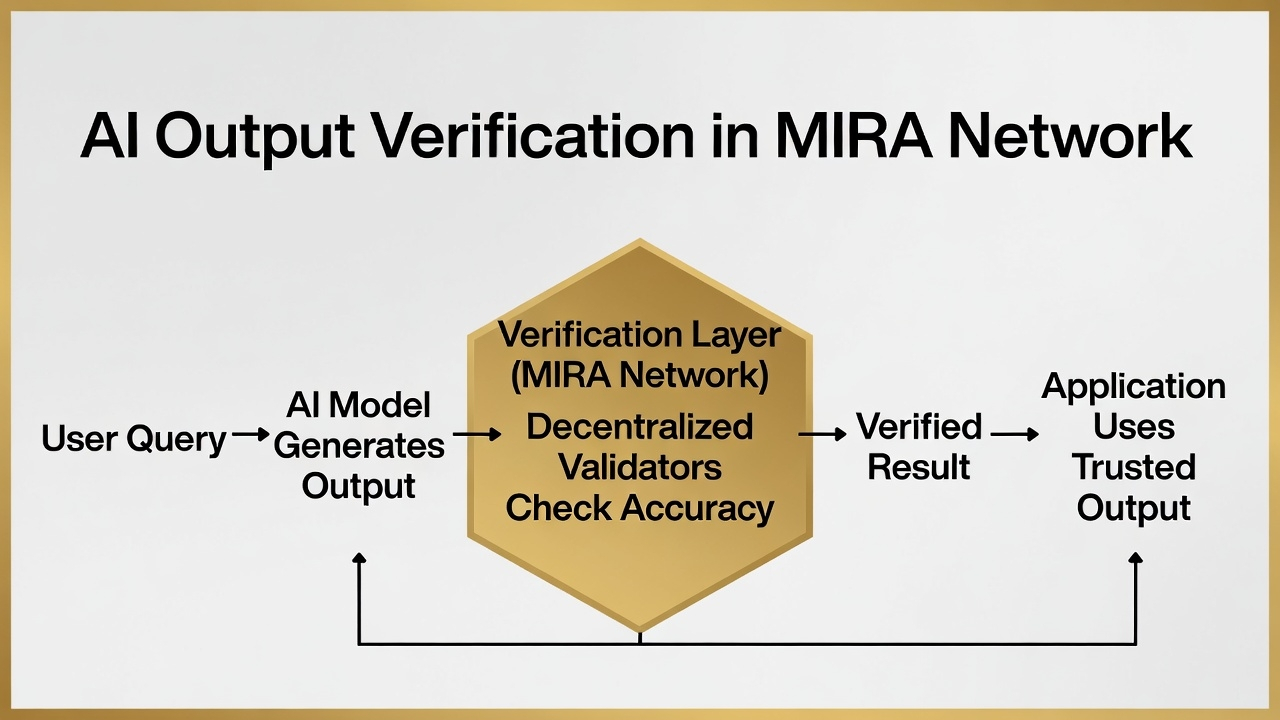

How MIRA Network Approaches the Problem

MIRA Network is exploring an architecture where AI outputs can be validated through a decentralized network. Rather than relying on a single model's response, MIRA focuses on creating a verification layer that evaluates AI results. The goal is to make AI outputs more trustworthy before applications use them.

In simple terms, the process involves:

* AI generates an output

* The output is verified through the network

* Validated results can then be used by applications

This extra step could help reduce errors and increase confidence when AI systems are used in high-stakes environments.
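The three steps above can be sketched in code. The example below is a minimal, hypothetical illustration of a verification layer in general, not MIRA Network's actual protocol. It assumes an AI output has already been split into individual claims, that several independent verifiers (standing in for separate models or node operators) each judge every claim, and that a claim is accepted only when at least two thirds of them approve. The names `verify_claim` and `gate_output` and the 2/3 threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical: a verifier is any function that judges a single claim as
# valid (True) or invalid (False). In a real network these would be
# independent models or node operators; plain callables keep the sketch runnable.
Verifier = Callable[[str], bool]


@dataclass
class VerificationResult:
    claim: str
    approvals: int
    total: int
    accepted: bool


def verify_claim(
    claim: str,
    verifiers: Sequence[Verifier],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not a MIRA parameter
) -> VerificationResult:
    """Step 2: ask every verifier and accept only if enough of them approve."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for verify in verifiers if verify(claim))
    # Exact fractions avoid floating-point surprises at the threshold boundary.
    accepted = Fraction(approvals, len(verifiers)) >= threshold
    return VerificationResult(claim, approvals, len(verifiers), accepted)


def gate_output(claims: Sequence[str], verifiers: Sequence[Verifier]) -> list[str]:
    """Step 3: only claims that pass verification reach the application."""
    return [c for c in claims if verify_claim(c, verifiers).accepted]


if __name__ == "__main__":
    # Step 1 stand-in: pretend an AI model produced these two claims.
    claims = ["the earth orbits the sun", "the moon is made of cheese"]
    known_facts = {"the earth orbits the sun"}
    verifiers = [
        lambda c: c in known_facts,  # toy fact-checker
        lambda c: c in known_facts,  # a second, independent toy fact-checker
        lambda c: True,              # a careless verifier that approves everything
    ]
    print(gate_output(claims, verifiers))  # ['the earth orbits the sun']
```

The careless third verifier shows why a threshold helps: a single unreliable participant cannot push a false claim through on its own.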

The Role of the MIRA Token

The ecosystem includes the MIRA token, which supports activity within the network. While details may evolve, tokens in verification ecosystems typically help with:

* Incentivizing validation

* Supporting network operations

* Aligning participants who contribute to verification processes

As with other blockchain projects, the token forms part of the economic layer that helps sustain the system.
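To make the incentive idea more concrete, here is a hypothetical sketch of how staking, rewards, and penalties are commonly combined in verification networks. It is not MIRA's tokenomics, which may still evolve: the stake-weighted majority, the reward pool, the `Participant` and `settle_round` names, and the 10% penalty (`slash_percent`) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    name: str
    stake: int   # hypothetical token units locked by this verifier
    vote: bool   # this round's verdict on a claim


def settle_round(
    participants: list[Participant],
    reward_pool: int,
    slash_percent: int = 10,  # assumed penalty rate, purely illustrative
) -> tuple[bool, dict[str, int]]:
    """Settle one hypothetical verification round.

    Consensus is a stake-weighted simple majority (ties count as rejection).
    Participants who voted with consensus split the reward pool in proportion
    to their stake; those who voted against it lose slash_percent of their stake.
    """
    total_stake = sum(p.stake for p in participants)
    if total_stake <= 0:
        raise ValueError("participants must have positive total stake")
    yes_stake = sum(p.stake for p in participants if p.vote)
    consensus = yes_stake * 2 > total_stake
    agreeing_stake = sum(p.stake for p in participants if p.vote == consensus)

    new_stakes: dict[str, int] = {}
    for p in participants:
        if p.vote == consensus:
            # Integer division keeps token amounts whole; any remainder stays unallocated.
            new_stakes[p.name] = p.stake + reward_pool * p.stake // agreeing_stake
        else:
            new_stakes[p.name] = p.stake - p.stake * slash_percent // 100
    return consensus, new_stakes


if __name__ == "__main__":
    round_votes = [
        Participant("A", 100, True),
        Participant("B", 100, True),
        Participant("C", 100, False),
    ]
    print(settle_round(round_votes, reward_pool=20))
    # (True, {'A': 110, 'B': 110, 'C': 90})
```

The point of the sketch is alignment: assuming most stake is held by honest verifiers, those who agree with the majority gain tokens while those who approve false claims lose part of their stake, so careful verification becomes the profitable strategy.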

Potential Impact on the Future of AI

If reliability challenges can be addressed, AI could move beyond its current role as a productivity tool.

Possible future applications include:

* Autonomous trading strategies

* AI-driven on-chain governance

* Smart infrastructure management

* AI agents interacting across blockchain ecosystems

Projects like MIRA Network explore how verification systems could make these ideas safer to implement.

Final Thoughts

Artificial intelligence is already transforming how people interact with technology. However, reliability remains one of the biggest barriers preventing widespread deployment in high-stakes environments.

By focusing on verification layers for AI outputs, MIRA Network is experimenting with a model that could make autonomous systems more dependable.

It's still an emerging concept, but the idea highlights an important shift: the future of AI may depend not only on smarter models but also on stronger trust infrastructure.