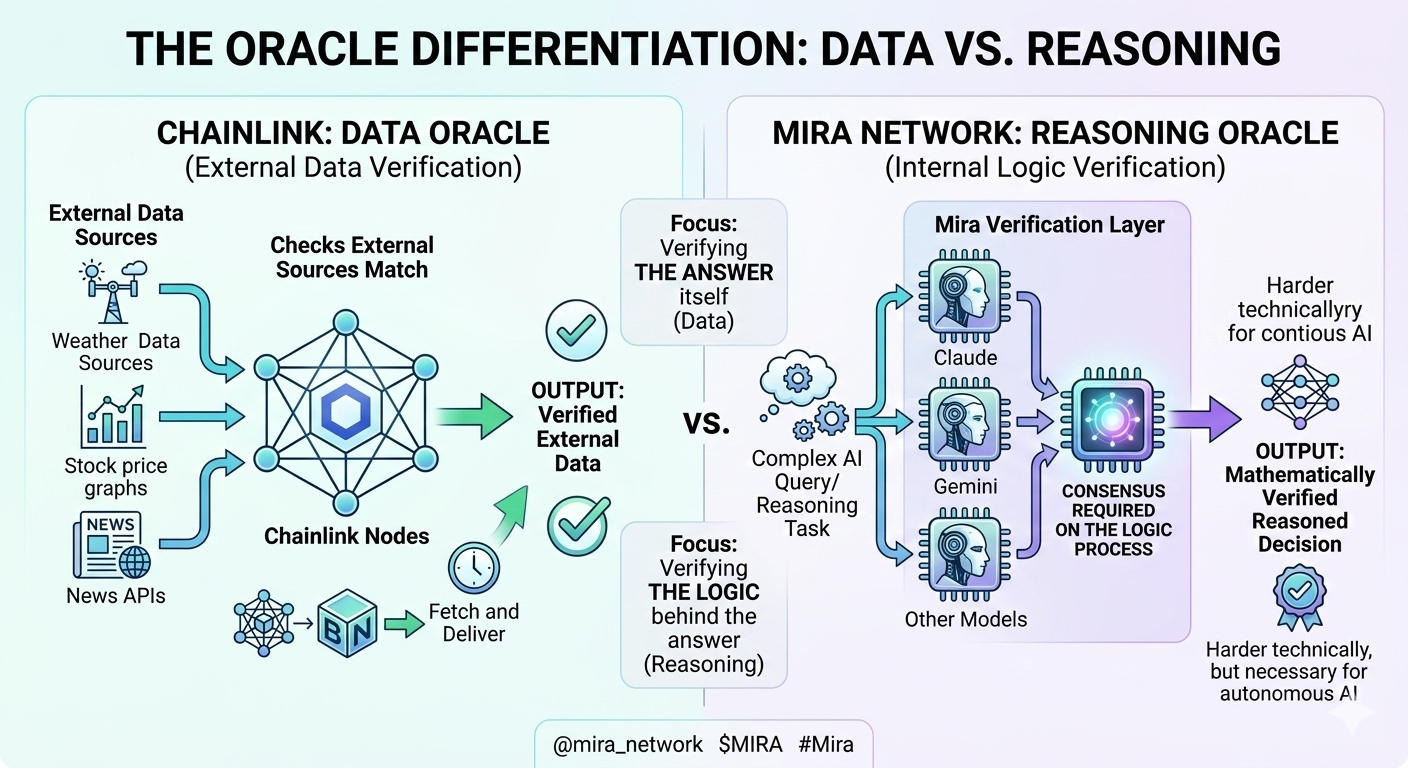

Mira Network positions itself as the infrastructure layer that makes AI outputs trustworthy through decentralized verification. Multiple AI models process the same query and must reach consensus before an output is accepted as reliable. The concept addresses a genuine problem: hallucinations and unreliable outputs keep AI out of critical applications. The question is whether organizations need decentralized verification badly enough to pay for it, rather than relying on simpler approaches that cost less.

The core thesis makes sense on paper. Current AI systems produce hallucinations and errors requiring constant human oversight. Healthcare providers can’t deploy AI for diagnosis when mistakes could harm patients. Financial institutions won’t use AI for trading when unreliable outputs could trigger massive losses. Legal firms can’t trust AI research when citations might be fabricated. These high-stakes domains need verification that current AI doesn’t provide.

Mira’s solution routes outputs through multiple independent AI models and requires agreement among them before a result is accepted as trustworthy. This consensus-based approach theoretically eliminates single points of failure and creates mathematically verifiable results without human intervention. The verification happens in real time, maintaining performance while adding the reliability that enables autonomous AI deployment in critical domains.
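To make the consensus idea concrete, here is a minimal sketch of agreement-based acceptance. It is not Mira’s actual protocol: the function name `consensus_verify`, the two-thirds threshold, and the exact-match comparison of normalized strings are all assumptions made for illustration.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus_verify(answers: Sequence[str], threshold: float = 2 / 3) -> Optional[str]:
    """Return the most common answer if enough verifiers agree, else None.

    Hypothetical illustration: `answers` holds one output per independent
    model, and agreement means an exact match after trimming and lowercasing.
    """
    if not answers:
        return None
    # Normalize so trivial formatting differences don't count as disagreement.
    normalized = [a.strip().lower() for a in answers]
    top_answer, votes = Counter(normalized).most_common(1)[0]
    # Accept only when the share of agreeing verifiers meets the threshold.
    return top_answer if votes / len(normalized) >= threshold else None


accepted = consensus_verify(["Paris", "paris ", "Lyon"])  # two of three agree
rejected = consensus_verify(["Paris", "Lyon", "Nice"])    # no agreement
```

Even in this toy form, the design question is visible: the threshold and the definition of "agreement" decide how often outputs are rejected, and each extra verifier adds cost per query.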

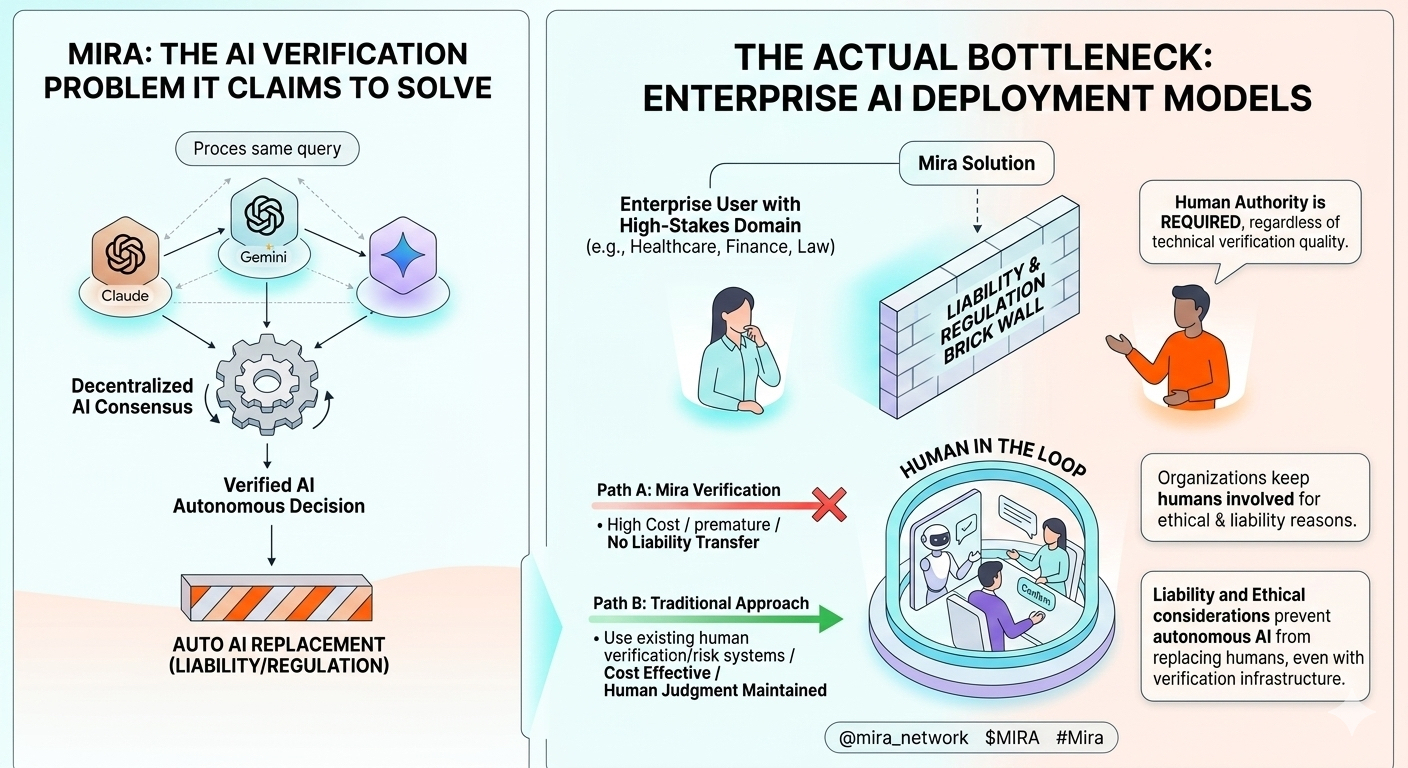

The challenge is that organizations facing AI reliability problems are already solving them in other ways, without decentralized verification infrastructure. Healthcare systems using AI keep humans in the loop for final decisions rather than pursuing full autonomy through verification protocols. Financial institutions run AI outputs through the internal validation processes and risk management systems they have already built. Legal firms verify AI research through traditional fact-checking rather than paying for consensus verification.

I talked to a technology director at a hospital system about AI deployment in clinical settings. They’ve implemented AI assistance for radiology analysis and diagnostic suggestions. When I asked whether decentralized verification infrastructure would help them deploy AI more broadly, the response revealed why Mira’s market might not exist at the assumed scale.

“We’re not looking to make AI fully autonomous in clinical decisions. We want AI augmenting physician capabilities with humans maintaining final authority. The liability and ethical considerations mean we’ll always have clinicians making ultimate decisions regardless of AI verification quality. Adding verification infrastructure doesn’t change our deployment model because autonomous AI in healthcare isn’t our goal. We need AI that helps doctors work better, not AI that replaces medical judgment.”

This pattern repeated across conversations with potential enterprise users. Organizations aren’t trying to achieve the fully autonomous AI that would need Mira’s verification. They’re implementing AI as an assistance tool with humans staying involved. The verification infrastructure targets a level of autonomy that most organizations aren’t pursuing, because liability, regulation, and trust considerations keep humans in the control loop regardless of technical verification capabilities.

Financial services showed similar dynamics. AI gets deployed for analysis, pattern recognition, and suggestion generation. But actual trading decisions, compliance approvals, and risk management remain under human oversight, backed by internal processes that already exist. Adding decentralized verification doesn’t make it possible to remove that oversight, because regulations and internal controls require humans to stay involved regardless of how reliable AI verification becomes.

The pricing model for verification services also creates adoption barriers. Organizations implementing AI assistance are optimizing costs by using AI to augment expensive human labor. Adding verification infrastructure increases costs, potentially eliminating the economic benefits that made AI adoption attractive. If verification costs approach human oversight costs, organizations might prefer to keep humans involved rather than pay for automated verification infrastructure.
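The cost argument can be laid out as simple arithmetic. The sketch below uses made-up per-output figures purely for illustration; none of the numbers or names come from Mira or from the organizations interviewed.

```python
def cost_per_output(
    ai_cost: float,
    human_review_cost: float,
    verification_fee: float,
    human_still_required: bool,
) -> float:
    """Total cost of producing one AI output under a given deployment model.

    All inputs are hypothetical dollar amounts per output.
    """
    cost = ai_cost + verification_fee
    if human_still_required:
        # Regulation or liability keeps a reviewer in the loop regardless.
        cost += human_review_cost
    return cost


# Hypothetical figures: $0.25 inference, $2.00 human review, $0.50 verification.
human_in_loop = cost_per_output(0.25, 2.0, 0.0, human_still_required=True)
verified_plus_human = cost_per_output(0.25, 2.0, 0.5, human_still_required=True)
verified_autonomous = cost_per_output(0.25, 2.0, 0.5, human_still_required=False)
```

Under these assumptions, verification only saves money in the autonomous case. Wherever a human reviewer must stay involved, the fee is a pure addition to the existing cost.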

Mira’s market exists if organizations are blocked from AI deployment by verification gaps and would pay for infrastructure solving those gaps. But enterprise conversations suggest organizations are either deploying AI with human oversight or not deploying it due to factors beyond verification quality. The verification infrastructure addresses a problem that might not be the actual deployment bottleneck for most potential customers.

The $MIRA token economics depend on transaction volume from verification requests as AI adoption scales. If organizations implement AI without needing decentralized verification, that transaction volume doesn’t materialize. The infrastructure might work perfectly while serving a market substantially smaller than assumed, because enterprises either solve reliability differently or maintain human oversight that makes verification infrastructure unnecessary for their deployment models.

Current market data shows $MIRA trading around $0.09 with a market cap near $19 million. The token price has dropped 96% from its all-time high, which suggests the market doubts that verification demand will materialize at a scale that justifies the infrastructure investment. The price performance indicates investors are questioning whether enterprises will pay for decentralized AI verification rather than solving reliability through simpler approaches that cost less.
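As a sanity check on those figures, the quoted price, drawdown, and market cap imply an approximate all-time high and circulating supply. This is back-of-the-envelope arithmetic on rounded inputs, so the results are rough estimates and not reported data.

```python
def implied_all_time_high(price: float, drawdown: float) -> float:
    """Peak price implied by the current price and the fractional drop from the peak."""
    return price / (1 - drawdown)


def implied_supply(market_cap: float, price: float) -> float:
    """Circulating supply implied by market cap divided by price."""
    return market_cap / price


# Rounded figures quoted in the text above.
ath = implied_all_time_high(price=0.09, drawdown=0.96)      # roughly $2.25
supply = implied_supply(market_cap=19_000_000, price=0.09)  # roughly 211 million tokens
```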

For anyone evaluating $MIRA, the critical question is whether organizations will deploy AI autonomously at a scale that requires verification infrastructure, or whether they will implement AI as human-assisted tools where verification isn’t the deployment bottleneck. Enterprise conversations suggest the latter. If so, verification infrastructure is premature by years, because it doesn’t address the actual barriers preventing broader AI adoption in critical domains.