Artificial intelligence is rapidly transforming digital systems. From data analysis to automated decision-making, AI is becoming a central component of modern technology. However, as AI capabilities expand, one challenge continues to grow: how do we verify the reliability of AI outputs?

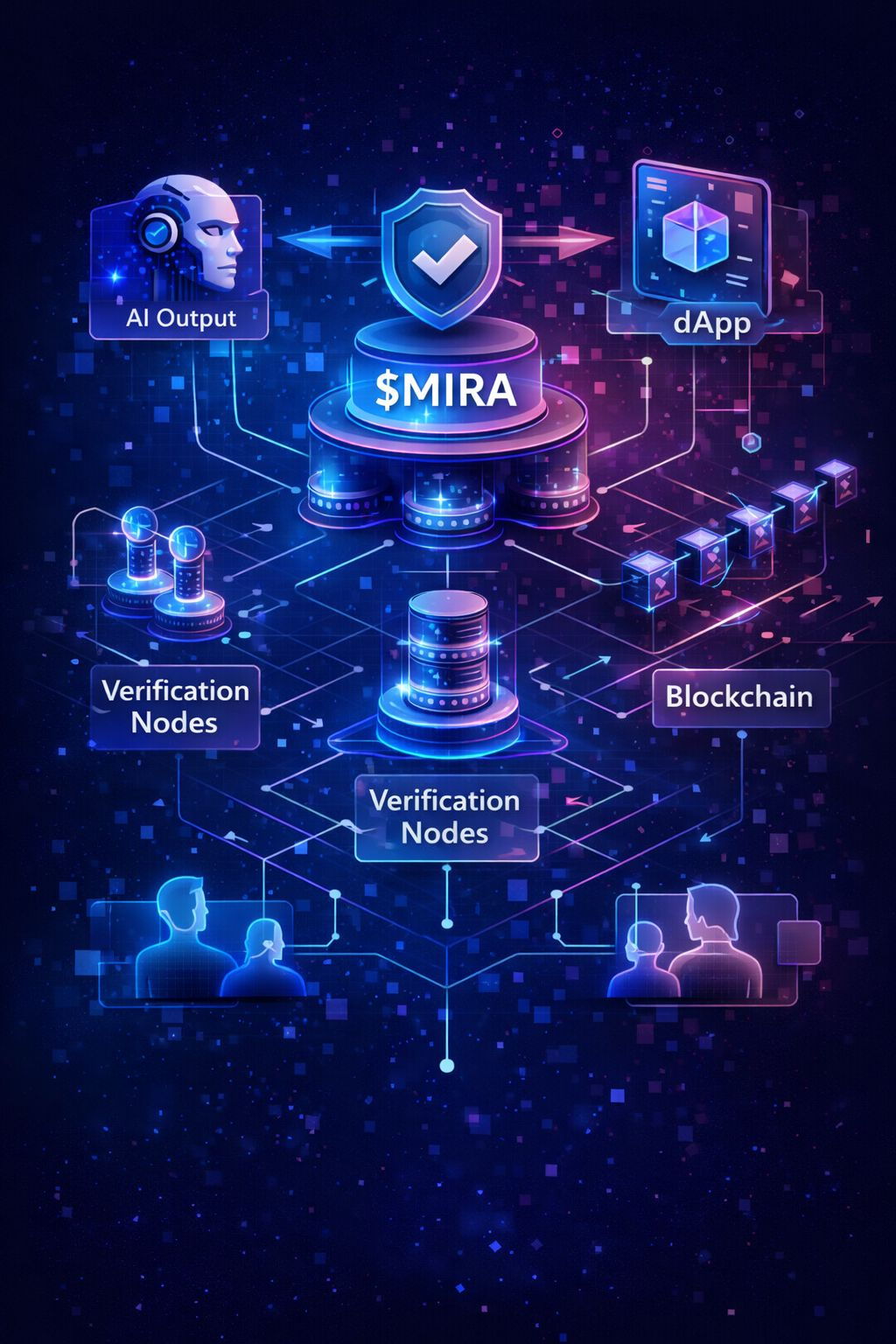

This question becomes even more important in decentralized ecosystems where users interact without relying on centralized authorities. In such environments, trust must come from transparent systems rather than reputation alone.

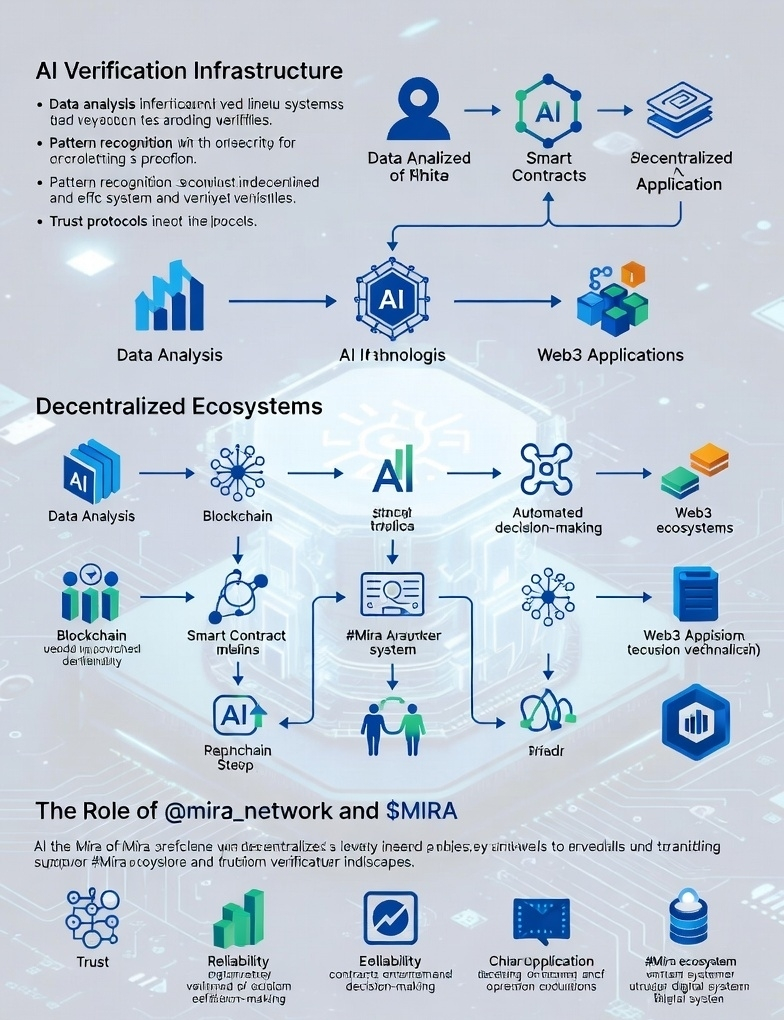

This is why projects building AI verification infrastructure are attracting attention. While learning about @mira_network, I became interested in the concept behind $MIRA: a trust layer designed to make AI outputs verifiable within decentralized environments.

In many ways, AI systems can produce answers faster than humans can validate them. That gap between generation and verification is where new infrastructure could play an important role. As Web3 applications continue integrating AI, reliability mechanisms may become essential.

The #Mira ecosystem is exploring how decentralized systems might support this verification process. Whether AI systems are analyzing data, assisting with decision-making, or interacting with decentralized applications, they will need frameworks that let users trust the results they receive.

Observing these developments during the campaign is fascinating because they highlight an emerging conversation: the future of AI may depend not only on intelligence but also on trust and verification.