The first time I seriously thought about trust in crypto infrastructure was not while trading. It was while signing a transaction.

Anyone who has used DeFi long enough knows the feeling. You open a wallet, connect to a dApp, approve a token, confirm a transaction, wait for the network, then watch the interface update. Every step asks you to trust something you cannot fully see. The contract. The interface. The RPC. The wallet. Even the chain itself.

Crypto people talk a lot about trustless systems. But when you actually use them every day, you realize something subtle: the experience still relies heavily on trust.

Not trust in people, but trust in systems behaving correctly.

Lately I have started to notice a similar pattern with AI tools. Many of us now use them daily, sometimes without even thinking about it. They summarize research, write scripts, explain contracts, and generate trading ideas. The answers often arrive instantly and sound confident. But sometimes they are simply wrong.

Not malicious, just wrong.

And when the answer matters, that small uncertainty changes how you behave.

You double-check the result. You open another tab. You ask the same question again with slightly different wording. That hesitation is familiar to anyone who used DeFi in its early days.

You do not fully trust the system yet, so you verify everything yourself.

This is where infrastructure becomes interesting.

Recently I came across Mira Network, not as something to trade or speculate on, but as an idea that closely matches how crypto systems usually solve problems.

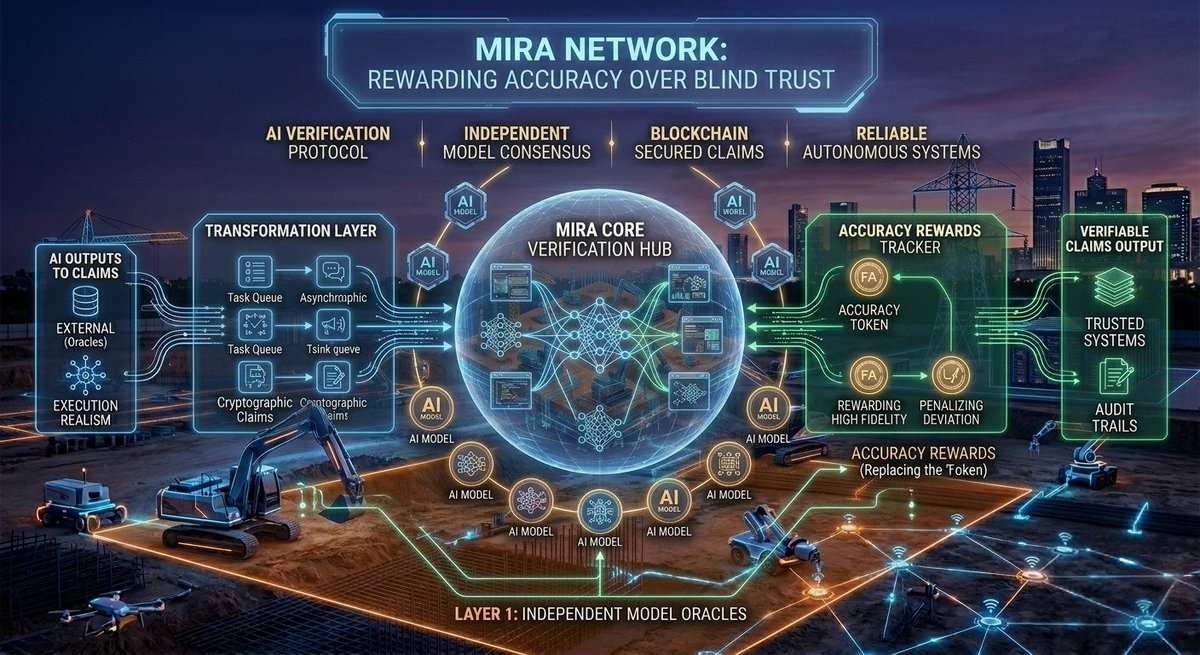

Instead of trying to build the perfect AI model, the network focuses on verifying AI outputs. When an AI generates a response, the system breaks the result into smaller factual claims and distributes them to independent verifier models across the network. Those claims are then checked and confirmed through a consensus process before the result is considered reliable.
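To make that flow concrete, here is a minimal Python sketch under stated assumptions. It is not Mira's implementation: `split_into_claims`, `verify_output`, `accept`, the toy verifiers and the two-thirds quorum are all hypothetical, and splitting on sentence boundaries is only a placeholder for the much harder job of extracting atomic factual claims.

```python
from fractions import Fraction
from typing import Callable, Dict, List

# Hypothetical verifier: receives one claim, returns True if it judges the claim correct.
Verifier = Callable[[str], bool]


def split_into_claims(text: str) -> List[str]:
    """Placeholder claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_output(
    text: str, verifiers: List[Verifier], quorum: Fraction = Fraction(2, 3)
) -> Dict[str, bool]:
    """Send every claim to every verifier; a claim passes only if the share
    of approving verifiers reaches the quorum (2/3 is an assumed value)."""
    results = {}
    for claim in split_into_claims(text):
        approvals = sum(1 for verify in verifiers if verify(claim))
        results[claim] = Fraction(approvals, len(verifiers)) >= quorum
    return results


def accept(text: str, verifiers: List[Verifier]) -> bool:
    """Treat the output as reliable only if every claim reached consensus."""
    return all(verify_output(text, verifiers).values())


# Toy verifiers for illustration: `careful` checks a small fact list,
# `careless` approves anything it is shown.
KNOWN_FACTS = {"Ethereum uses proof of stake", "Bitcoin launched in 2009"}


def careful(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careless(claim: str) -> bool:
    return True


if __name__ == "__main__":
    answer = "Ethereum uses proof of stake. Bitcoin launched in 2015."
    print(verify_output(answer, [careful, careful, careless]))
    # {'Ethereum uses proof of stake': True, 'Bitcoin launched in 2015': False}
    print(accept(answer, [careful, careful, careless]))  # False
```

The careless verifier approves the wrong launch date, but it is outvoted, so that claim fails and the answer as a whole is rejected. Checking claim by claim means one bad sentence is caught even when the rest of the response is correct.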

If you have spent enough time around blockchain systems, the architecture feels strangely familiar.

One node saying something does not mean much. A network agreeing on something creates confidence.

What I find interesting is not the technology itself, but how it changes the mental model of the user.

Most AI tools today operate like assistants. You ask a question, they respond. The interaction feels conversational, but the trust layer is thin.

You trust the model because it usually works, not because there is a mechanism guaranteeing correctness.

A verification network changes that relationship.

Instead of trusting the model directly, you trust the process that checks the model.

Crypto has always been built around that idea.

Blockchains do not assume that every participant is honest. They assume that some participants will not be. Systems are designed so that incorrect behavior is either rejected by consensus or made economically irrational.

Mira’s approach seems to apply that same philosophy to AI outputs.

When the network receives an AI-generated result, it converts the content into smaller statements that can be independently verified. Those statements are then checked by multiple models and nodes, and the system only accepts the output once agreement is reached.

This reduces the risk of a single model producing an incorrect answer that slips through unnoticed.
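How much does a group of verifiers actually reduce that risk? A worked calculation gives a rough sense, under a loudly stated assumption: each verifier is wrong about a given claim with probability p = 0.1, and verifiers make mistakes independently of one another. The chance that a strict majority of n verifiers is wrong is then a binomial tail sum. The function name `majority_error` and the value of p are illustrative choices, not figures from Mira.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n verifiers is wrong about a claim.

    Assumes each verifier errs with probability p, independently of the others:
    sum over k from floor(n/2)+1 to n of C(n, k) * p**k * (1 - p)**(n - k).
    """
    k_min = n // 2 + 1  # smallest number of wrong verifiers that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


if __name__ == "__main__":
    for n in (1, 3, 5, 7):
        print(f"n={n}: {majority_error(n, 0.1):.4f}")
    # n=1: 0.1000
    # n=3: 0.0280
    # n=5: 0.0086
    # n=7: 0.0027
```

Under these assumptions, going from one verifier to five cuts the error rate by more than a factor of ten. The independence assumption does most of the work, though: if the verifier models share training data and blind spots, their errors are correlated and the real improvement is smaller. That is likely why diversity among verifier models matters as much as their number.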

The effect on user psychology is subtle but meaningful.

When people interact with AI today, they behave cautiously. Even if the answer looks correct, there is always a small voice asking whether it might be hallucinated.

Anyone who has watched an AI confidently invent a statistic or misinterpret a document knows the feeling.

That uncertainty becomes a bigger problem when AI starts interacting with financial systems.

More traders are experimenting with automation. Bots analyze markets, parse news feeds, monitor on-chain activity, and sometimes execute trades automatically.

In those environments, incorrect information does not just create confusion.

It creates losses.

A system that can verify AI-generated data before it is used becomes much more than a productivity tool. It becomes infrastructure.

That shift reminds me of the difference between centralized exchanges and decentralized exchanges.

Centralized exchanges feel smooth because the system hides complexity. Orders execute instantly. Balances update immediately. You do not worry about signatures or mempools.

DeFi exposes the mechanics. Approvals, gas fees, block confirmations, transaction hashes.

The experience is slower, but the system becomes more transparent.

Verification networks for AI might introduce a similar tradeoff.

Instead of instant answers from a single model, the output might pass through a verification process before it reaches the user. The extra step may add latency and complexity, but it also adds a layer of confidence.

In crypto, confidence rarely comes from promises. It comes from mechanisms.

Another detail that stands out is how the system aligns incentives.

Verification nodes are economically incentivized to provide accurate results and can be penalized if they behave dishonestly.
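A toy model shows why penalties push nodes toward honest work. The sketch assumes a simple stake-and-slash rule: the consensus verdict is the stake-weighted majority vote, nodes that agree with it earn a fixed reward, and nodes that disagree lose a fraction of their stake. `Node`, `settle_round`, the reward of 1 and the 10% slash rate are hypothetical values chosen for illustration, not Mira's actual parameters.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Node:
    stake: float  # tokens the node has locked as collateral (hypothetical units)


def settle_round(
    nodes: Dict[str, Node],
    votes: Dict[str, bool],
    reward: float = 1.0,  # assumed payout for voting with consensus
    slash_rate: float = 0.10,  # assumed share of stake lost for voting against it
) -> bool:
    """Settle one verification round and return the consensus verdict.

    Consensus is the stake-weighted majority; a tie counts as rejection.
    """
    yes_stake = sum(nodes[name].stake for name, vote in votes.items() if vote)
    no_stake = sum(nodes[name].stake for name, vote in votes.items() if not vote)
    consensus = yes_stake > no_stake
    for name, vote in votes.items():
        if vote == consensus:
            nodes[name].stake += reward  # paid for agreeing with the network
        else:
            nodes[name].stake *= 1 - slash_rate  # slashed for dissenting
    return consensus


if __name__ == "__main__":
    nodes = {"a": Node(100.0), "b": Node(100.0), "c": Node(100.0)}
    verdict = settle_round(nodes, {"a": True, "b": True, "c": False})
    print(verdict, {name: node.stake for name, node in nodes.items()})
    # True {'a': 101.0, 'b': 101.0, 'c': 90.0}
```

With these numbers, a node that guesses at random on a yes/no claim sides with consensus only about half the time, so each wrong guess costs it 10 tokens while each lucky guess earns 1. Doing the verification work is the only way to come out ahead. Slashing every dissenting vote is a simplification; a real design also has to avoid punishing a node that is right when the majority is wrong.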

Again, this feels very familiar.

Crypto rarely tries to force honesty through rules. Instead, it builds systems where honesty becomes the most rational strategy.

Validators secure blockchains. Oracles secure price feeds. Relayers secure cross-chain bridges.

Each layer depends on incentives rather than trust.

AI verification networks appear to follow the same pattern.

Another angle that I find interesting is decentralization.

Many AI platforms today are controlled by a small number of companies. The models, the infrastructure, and the access policies are all centralized.

For casual use that may not matter much. But if AI systems start making decisions that affect financial markets, identity systems, or automated contracts, the trust model becomes important.

A decentralized verification layer spreads that responsibility across participants instead of concentrating it inside a single platform.

This is similar to how decentralized oracles replaced single data feeds in many DeFi protocols.

The information might be similar, but the way it is validated changes how comfortable people feel relying on it.

Of course, infrastructure alone does not guarantee adoption.

Crypto history is full of technically impressive systems that never gained real usage because the user experience was too complicated.

Wallet friction, gas management, transaction signatures, and network switching are still barriers for many people.

If AI verification layers are going to matter, they will likely need to become invisible to the end user.

That is already how most people use Ethereum today: they never think about validator sets or consensus algorithms.

They simply trust that the system works.

Perhaps AI will eventually reach a similar stage.

Instead of asking whether an AI response is correct, users might assume the answer has already been verified by the network behind it.

That kind of trust does not appear overnight.

It grows slowly, through repeated interactions where the system behaves exactly as expected.

Crypto has spent more than a decade building systems that allow strangers to coordinate without trusting each other.

Applying that same philosophy to AI feels like a natural next step.

And maybe the most interesting part is this.

The infrastructure that shapes how people trust technology is rarely the loudest or most visible layer.

It is usually the quiet network running in the background, verifying everything while most users never notice it.

@Mira - Trust Layer of AI #Mira