Over the past few years in crypto, I’ve noticed something that took me a while to fully understand: the nicest-looking metrics can sometimes hide the biggest weaknesses. I’ve seen tokens with impressive trading volume, constant social media hype, new exchange listings, and a story that sounds perfect on paper. But sooner or later the same question always comes up for me — can the network actually deal with bad actors when real value is at stake?

That’s the perspective I had in mind when I started digging into MIRA. Most people describe it first as a project that verifies AI outputs, which is already an interesting idea. But the detail that really caught my attention sits a level deeper: the network tries to make incorrect verification economically painful through slashing. For me, that’s not a small technical feature. It’s a serious design choice, and I think a lot of people overlook how important it can be.

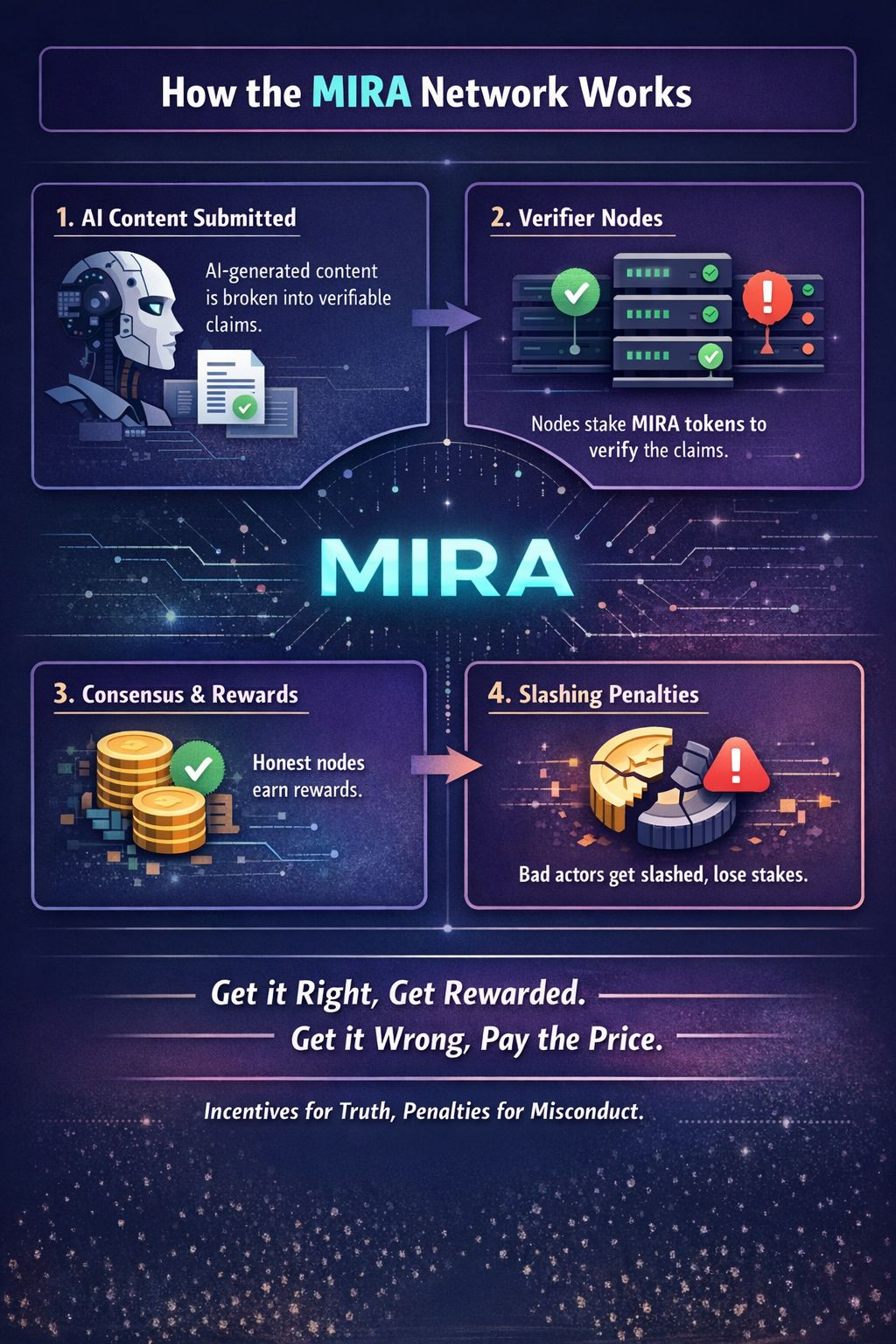

From what I’ve read and understood, the core concept behind MIRA is actually pretty simple. The project aims to build a decentralized layer that can verify content generated by AI systems. According to the whitepaper, the process works by breaking a piece of content into smaller claims that can be checked independently. Those claims are then sent to separate verifier nodes, and the network eventually reaches consensus about whether the output should be considered valid.
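To make that flow concrete for myself, I wrote a minimal Python sketch of it. This is my own illustration, not MIRA's implementation: splitting on sentences, the two-thirds threshold, and the names `split_into_claims`, `reach_consensus`, and `verify_content` are all assumptions I made up for the example.

```python
from typing import Callable

# Hypothetical type: a verifier takes one claim and returns True if it judges it valid.
Verifier = Callable[[str], bool]


def split_into_claims(content: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in content.split(".") if part.strip()]


def reach_consensus(votes: list[bool], threshold: float = 2 / 3) -> bool:
    """A claim passes when the share of 'valid' votes meets the threshold.

    The two-thirds default is an assumption, not a documented MIRA parameter.
    """
    if not votes:
        return False
    return sum(votes) / len(votes) >= threshold


def verify_content(content: str, verifiers: list[Verifier],
                   threshold: float = 2 / 3) -> dict[str, bool]:
    """Check every claim independently and record the network's verdict."""
    results = {}
    for claim in split_into_claims(content):
        votes = [verifier(claim) for verifier in verifiers]
        results[claim] = reach_consensus(votes, threshold)
    return results


# Toy verifiers: two reject anything mentioning "flat", one accepts everything.
strict = lambda claim: "flat" not in claim
lenient = lambda claim: True

verdicts = verify_content(
    "Water boils at 100 C at sea level. The Earth is flat.",
    [strict, strict, lenient],
)
for claim, ok in verdicts.items():
    print(f"{'valid' if ok else 'rejected'}: {claim}")
```

The point of the sketch is only the shape of the pipeline: claims are judged one at a time, and a single lenient verifier cannot push a bad claim through on its own.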

Where things start to get more interesting is the security structure around that process. Based on the token documentation, node operators have to stake MIRA tokens in order to participate in verification. If their evaluation turns out to be dishonest or incorrect, they risk being slashed. What also stood out to me is that delegators aren’t completely protected either. If the validator they support ends up misbehaving, they can also lose part of their stake.
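Here is a rough sketch of how I picture that shared risk. The assumptions are mine: I don't know the actual slashing percentages or how losses are split, so this example simply applies the same hypothetical fraction to the operator and to every delegator. The `Validator` class and its fields are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Validator:
    """Hypothetical model of a verifier node with its own and delegated stake."""
    own_stake: float
    delegations: dict[str, float] = field(default_factory=dict)

    def total_stake(self) -> float:
        return self.own_stake + sum(self.delegations.values())

    def slash(self, fraction: float) -> float:
        """Cut operator and delegator stake by `fraction`; return the amount removed.

        Proportional slashing is an assumption made for this sketch.
        """
        if not 0.0 <= fraction <= 1.0:
            raise ValueError("fraction must be between 0 and 1")
        before = self.total_stake()
        self.own_stake *= 1 - fraction
        self.delegations = {
            name: amount * (1 - fraction)
            for name, amount in self.delegations.items()
        }
        return before - self.total_stake()


node = Validator(own_stake=1_000.0, delegations={"alice": 500.0, "bob": 500.0})
removed = node.slash(0.25)  # hypothetical 25% penalty
print(f"slashed {removed:.0f} tokens, alice now holds {node.delegations['alice']:.0f}")
```

Even in this toy version, the delegator's loss depends entirely on the operator's behavior, which is why choosing who to delegate to is a risk decision and not just a yield decision.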

To me, that signals something important about how the system is designed. It’s not relying only on rewards to motivate participants — it also relies on consequences. And from an investor or trader’s perspective, that difference matters because slashing reshapes the incentive structure completely. A network that only distributes rewards can easily attract opportunistic capital that comes and goes quickly. But when penalties are part of the system, it at least encourages participation from operators who believe they can perform their role honestly and consistently.
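One way I think about that shift is as a simple expected-value check. The sketch below is a back-of-the-envelope model, not anything taken from MIRA's documentation: the gain from cheating, the detection probability, and the slash fraction are all made-up inputs, and I assume the cheater keeps the gain whether or not they are caught.

```python
def expected_cheating_payoff(gain: float, stake: float,
                             slash_fraction: float,
                             detection_prob: float) -> float:
    """Expected profit from one dishonest verification under the toy model."""
    expected_penalty = detection_prob * slash_fraction * stake
    return gain - expected_penalty


def break_even_stake(gain: float, slash_fraction: float,
                     detection_prob: float) -> float:
    """Stake above which cheating has negative expected value."""
    return gain / (detection_prob * slash_fraction)


# Hypothetical numbers: 50 tokens gained by cheating, 10% slash, 20% chance of detection.
for stake in (0.0, 1_000.0, 10_000.0):
    payoff = expected_cheating_payoff(50.0, stake, 0.10, 0.20)
    print(f"stake={stake:>8.0f}  expected payoff of cheating={payoff:>8.1f}")
```

With zero stake (a rewards-only design), cheating always pays in this model. Once the stake is large enough relative to the possible gain, the expected payoff turns negative, which is the whole argument for penalties in one line of arithmetic.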

In simple terms, the message I take from MIRA is fairly clear: if you help maintain the trust layer around AI outputs, you get rewarded. But if your actions damage that trust, you bear the cost. Personally, I find that model more straightforward than many crypto systems that talk constantly about security but never really make dishonest behavior expensive.

The market hasn’t ignored MIRA either, although it still feels like an early-stage project. When I checked the numbers on BaseScan, the token had roughly 13,000 holders. The maximum supply is set at 1 billion tokens, with around 244.9 million currently circulating. At the time I looked, the price was sitting close to $0.0828, which placed the circulating market cap somewhere around $20 million.
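As a quick sanity check on those figures, here is the arithmetic in Python. The price and supply numbers are the ones quoted above. The fully diluted figure is not something I pulled from a tracker; it is just the same price multiplied by the maximum supply.

```python
def market_cap(price_usd: float, supply: float) -> float:
    """Market capitalization is simply price times token supply."""
    return price_usd * supply


PRICE_USD = 0.0828            # price at the time I checked
CIRCULATING = 244_900_000     # circulating supply
MAX_SUPPLY = 1_000_000_000    # maximum supply

circulating_cap = market_cap(PRICE_USD, CIRCULATING)
fully_diluted = market_cap(PRICE_USD, MAX_SUPPLY)
circulating_share = CIRCULATING / MAX_SUPPLY

print(f"circulating market cap: ${circulating_cap / 1e6:.2f}M")  # about $20.28M
print(f"fully diluted value:    ${fully_diluted / 1e6:.2f}M")    # about $82.80M
print(f"share circulating:      {circulating_share:.2%}")        # about 24.49%
```

So the "around $20 million" figure checks out, and only about a quarter of the maximum supply is circulating, which is worth keeping in mind when thinking about future dilution.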

I also checked CoinGecko and CoinMarketCap and saw a very similar price range, with daily trading volume sitting in the mid single-digit millions. To me, that suggests there is real trading interest around the project. At the same time, it’s probably still too early to assume that strong network effects are already established.

This is where I think the retention question becomes important. Slashing can definitely improve security, but it only works if enough reliable operators stay active in the network. If the ecosystem becomes dominated by short-term speculation and serious verifiers don’t remain involved, the system could eventually struggle with weaker participation, concentrated stake, or lower-quality consensus.

For now, I’m mostly watching how that balance evolves. Incentives, penalties, and long-term participation will likely determine whether MIRA’s verification model can really hold up as the network grows.