A few days ago, I was talking with my friend Omar, who works in data analytics. We were discussing how quickly AI tools are becoming part of everyday work: writing reports, analyzing data, even assisting with research.

Omar was excited about how fast things are moving.

But then he asked me a simple question.

“Do you actually trust everything AI tells you?”

I paused.

Because the truth is, even the most advanced AI systems today can be wrong. Not always obviously wrong; sometimes just slightly inaccurate, yet confident enough to sound convincing.

That’s when I started thinking about the real challenge in AI. It’s not intelligence. It’s trust.

The Confidence Problem

AI models are incredibly powerful because they learn patterns from massive amounts of data. They predict what information is likely to come next. But that also means they are probabilistic systems.

They can hallucinate facts.

They can reflect bias from their training data.

And sometimes they present uncertain information with absolute confidence.

In casual situations, that’s manageable.

But in industries like finance, healthcare, or law, small mistakes can have serious consequences.

So the question becomes:

How do we verify AI outputs before trusting them?

A Different Way to Think About AI

While exploring solutions to this problem, I came across the concept behind the @Mira - Trust Layer of AI.

What makes the idea interesting is that it doesn’t try to build a “perfect” AI model. Instead, it builds a system around AI that checks its outputs.

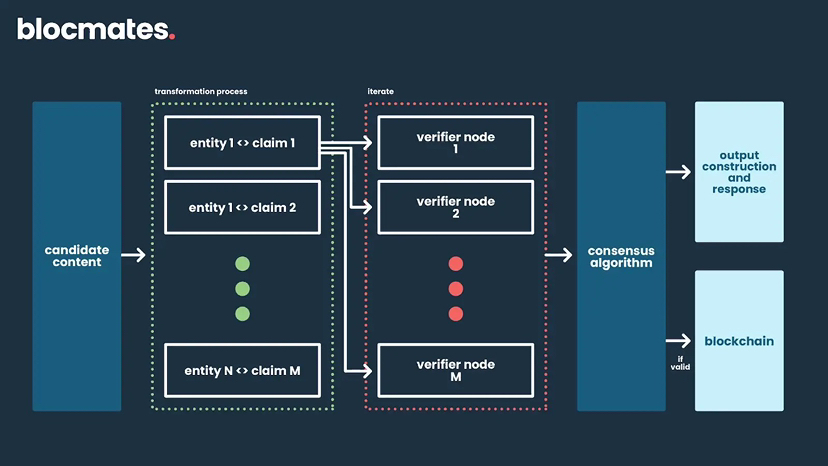

Rather than relying on one model to produce the correct answer, Mira breaks complex responses into smaller verifiable claims. These claims are then evaluated by multiple independent AI models across a decentralized network.

Each model reviews the claim from its own perspective.

When enough models agree, the network reaches consensus. That consensus becomes the verification result.
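To make the flow concrete, here is a minimal Python sketch of the idea as I understand it. It is not Mira's actual implementation: the sentence-based claim splitter, the toy fact-lookup verifiers, the function names, and the two-thirds threshold are all assumptions made for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical type: a verifier takes a claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim when the share of approving verifiers reaches the threshold."""
    votes = [v(claim) for v in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_response(response: str, verifiers: List[Verifier],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every extracted claim to its consensus result."""
    return {c: verify_claim(c, verifiers, threshold) for c in split_into_claims(response)}


def make_verifier(known_facts: set) -> Verifier:
    """Toy verifier (assumption): approves only claims in its own fact set."""
    return lambda claim: claim in known_facts


# Three toy "models" with different knowledge, including one with a wrong belief.
verifiers = [
    make_verifier({"Paris is the capital of France"}),
    make_verifier({"Paris is the capital of France"}),
    make_verifier({"Paris is the capital of France", "The Moon is made of cheese"}),
]

results = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.", verifiers
)
print(results)
```

In this toy run, the first claim is approved by all three verifiers and passes, while the second is approved by only one of three and is rejected. A real network would replace the fact-set lookups with calls to independent AI models.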

Why Multiple Perspectives Matter

Think about how humans verify information. When something important happens, we don’t rely on a single witness. We gather multiple perspectives, compare accounts, and look for agreement.

The same principle applies here.

Different AI models may have different training data, architectures, and biases. By combining them in a verification network, the system reduces the risk of any single model’s weakness dominating the outcome. It turns individual uncertainty into collective reliability.
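A rough back-of-the-envelope calculation shows why agreement helps. The sketch below assumes each model errs independently with the same probability, which is a simplification I am adding for illustration; real models share training data and can make correlated mistakes, so the actual benefit would be smaller. The function name and the numbers are hypothetical.

```python
from math import comb


def majority_error_probability(n_models: int, p_error: float) -> float:
    """Probability that more than half of n independent models are wrong at once.

    Assumes (for illustration only) that every model errs independently
    with the same probability p_error.
    """
    majority = n_models // 2 + 1
    return sum(
        comb(n_models, k) * p_error**k * (1 - p_error) ** (n_models - k)
        for k in range(majority, n_models + 1)
    )


single = 0.10  # hypothetical error rate of one model
for n in (1, 3, 5, 7):
    print(n, round(majority_error_probability(n, single), 5))
```

Under these assumptions, a model that is wrong 10% of the time on its own yields a majority that is wrong about 2.8% of the time with three models, and the figure keeps shrinking as more independent models are added.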

Incentives and Accountability

Another interesting element is the economic structure behind the network. Participants who run verification nodes are incentivized to provide accurate evaluations. If they behave dishonestly or lazily, they risk losing staked value. This creates a system where accuracy isn’t just encouraged; it’s economically rational. Instead of trusting participants to act responsibly, the network aligns incentives with honesty.
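Here is a small Python sketch of how such an incentive could work in principle. The reward amount, the slash fraction, the simple-majority rule, and every name in it are my own assumptions for illustration, not Mira's actual parameters or protocol.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    name: str
    stake: float


def settle_round(nodes: List[Node], votes: Dict[str, bool],
                 reward: float = 1.0, slash_fraction: float = 0.25) -> bool:
    """Settle one verification round and update stakes in place.

    Hypothetical rules: the consensus is the simple majority vote; nodes that
    voted with it earn a fixed reward, and nodes that voted against it lose
    a fraction of their stake.
    """
    yes_votes = sum(1 for n in nodes if votes[n.name])
    consensus = yes_votes * 2 > len(nodes)
    for n in nodes:
        if votes[n.name] == consensus:
            n.stake += reward
        else:
            n.stake -= n.stake * slash_fraction
    return consensus


nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
outcome = settle_round(nodes, {"a": True, "b": True, "c": False})
print(outcome, [(n.name, n.stake) for n in nodes])
```

With these made-up numbers, the two nodes that matched the consensus end the round with slightly more stake, while the dissenting node loses a quarter of its stake. Careless or dishonest voting becomes expensive quickly, which is the point of the design.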

Why This Matters for the Future of AI

As AI becomes integrated into more industries, the expectations around reliability will increase. Companies won’t just ask whether AI is powerful. They will ask whether it is verifiable. Governments will ask whether decisions can be audited. Users will ask whether outputs can be trusted. Without mechanisms to verify AI-generated information, adoption will always carry risk.

A New Layer of Infrastructure

When I look at the broader technology landscape, I see each generation of innovation adding a new layer of infrastructure.

The internet gave us global connectivity.

Blockchain introduced decentralized trust.

AI is transforming how information is created.

But for AI to reach its full potential, it may need another layer: a system that ensures the information it produces can be verified and trusted.

That’s what makes the idea behind Mira interesting to me. Because the future of AI may not depend only on how intelligent machines become. It may depend on how well we can prove that they’re right.

@Mira - Trust Layer of AI $MIRA