Artificial intelligence has become remarkably good at speaking with confidence. Ask a modern AI model a question and it can produce a polished explanation, a financial summary, or even technical guidance within seconds. At first glance it feels impressive, sometimes even a little magical. But anyone who has spent enough time using these systems eventually notices something uncomfortable. AI can sound certain even when it is wrong.

These errors are often called hallucinations. A model might invent facts, misinterpret data, or present outdated information as if it were completely accurate. In casual situations this might only lead to mild confusion. But as AI begins to assist in research, finance, healthcare, and automated decision-making, the cost of those mistakes becomes much higher.

This growing gap between AI confidence and AI reliability is exactly where Mira Network begins to make sense.

Instead of focusing on building another powerful AI model, Mira approaches the problem from a different direction. The project is built around a simple but important idea: before machine-generated information is trusted, it should first be verified.

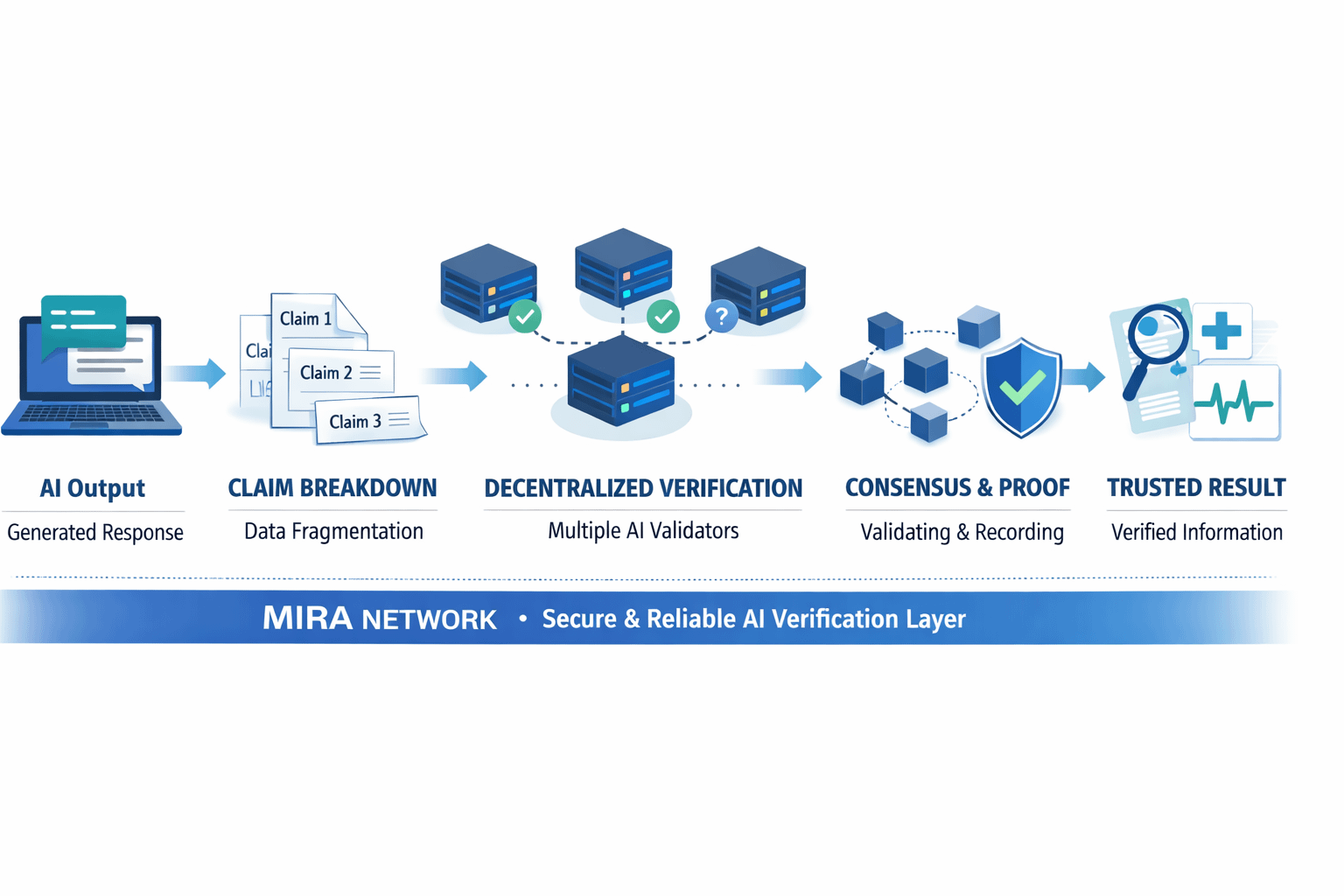

Rather than accepting the output of a single model, Mira introduces a verification layer that evaluates whether the information inside that output actually holds up. When an AI system generates a response, Mira breaks that response into smaller pieces of information, often referred to as claims. These claims are then sent across a network of independent AI verifiers. Each verifier examines the claim from its own perspective, and when enough of them agree, the result can be recorded with cryptographic proof.
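
To make that flow more concrete, here is a minimal sketch in Python of the steps just described: split a response into claims, collect votes from several verifiers, and record the outcome with a hash. Everything in it is an assumption made for illustration, not Mira's actual API or protocol. The function names, the sentence-based claim splitter, the toy lookup verifiers, and the `min_approvals` threshold are all hypothetical, and the SHA-256 digest is only a stand-in for whatever cryptographic proof the real network produces.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A verifier is any function that takes a claim and returns True or False.
# Real verifiers would be independent AI models; these are toy stand-ins.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive assumption: treat every sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], min_approvals: int) -> Dict:
    """Collect one vote per verifier and accept the claim if enough approve."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [bool(verifier(claim)) for verifier in verifiers]
    record = {
        "claim": claim,
        "votes": votes,
        "accepted": sum(votes) >= min_approvals,
    }
    # Hash the record so later tampering is detectable. This is only a
    # placeholder for a real proof, which would likely involve signatures.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return {**record, "digest": hashlib.sha256(payload).hexdigest()}


def verify_response(response: str, verifiers: List[Verifier], min_approvals: int) -> List[Dict]:
    """Run every claim in a response through the verifier set."""
    return [verify_claim(c, verifiers, min_approvals) for c in split_into_claims(response)]


# Hypothetical verifiers: two with separate reference sets, one that approves anything.
FACTS_A = {"Paris is the capital of France"}
FACTS_B = {"Paris is the capital of France", "Water is made of hydrogen and oxygen"}


def verifier_a(claim: str) -> bool:
    return claim in FACTS_A


def verifier_b(claim: str) -> bool:
    return claim in FACTS_B


def lenient_verifier(claim: str) -> bool:
    return True


if __name__ == "__main__":
    answer = "Paris is the capital of France. Paris is the capital of Spain."
    for result in verify_response(answer, [verifier_a, verifier_b, lenient_verifier], min_approvals=2):
        print(result["accepted"], result["claim"], result["digest"][:12])
```

In this toy run the first claim is approved by all three verifiers and accepted, while the second is approved only by the lenient verifier and rejected. The point is the shape of the process, not the quality of the checkers.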

In practical terms, Mira is trying to create something similar to peer review for artificial intelligence. Instead of one system producing an answer and everyone simply accepting it, the answer passes through a collaborative checking process.

This approach gives the network several important strengths.

One of the most obvious advantages is that it reduces dependence on a single AI model. Today many applications rely entirely on one system’s interpretation of data. If that system misunderstands something, the mistake flows directly to the user. Mira’s design introduces multiple independent checks, which increases the chance that questionable information is identified before it spreads further.

Another strength lies in transparency. Because verification results can be anchored through blockchain infrastructure, it becomes possible to trace how information was evaluated. In fields like finance, research, or legal analysis, that transparency could become extremely valuable. Instead of simply trusting a machine’s answer, users can see that the claims inside the answer have actually been reviewed by a network of validators.

The ecosystem surrounding Mira is also structured around participation. Verification nodes contribute computational work to analyze claims, developers build applications that rely on verified outputs, and users interact with tools that benefit from this additional layer of reliability. The system uses economic incentives to encourage honest participation, which means that verification is not just a technical process but also an economic one.

Some early applications already hint at what this ecosystem might look like. Verified AI chat tools, research assistants, and developer APIs are examples of how the verification layer could integrate with real software products. These experiments are still evolving, but they illustrate how Mira could function as infrastructure rather than just another standalone application.

The more practical question, however, is where a system like this would actually matter.

One clear area is financial analysis. Traders and analysts increasingly rely on AI to summarize news, interpret data, and generate insights. But incorrect information in financial markets can lead to costly decisions. A verification layer that checks claims before presenting them could make AI tools more dependable for investors.

Another important area is autonomous software agents. As AI systems begin performing tasks on behalf of users, whether that involves managing digital assets, executing strategies, or interacting with decentralized services, the reliability of the information guiding those systems becomes crucial. Verification infrastructure could help ensure that automated decisions are based on information that has been examined rather than merely predicted.

Research and scientific work also represent potential long-term applications. Academic environments rely heavily on verification and peer review. If AI is going to assist with research or data interpretation, systems that verify claims could help maintain the integrity of that process.

Even emerging sectors like tokenized real-world assets may eventually benefit from verification layers. When AI tools summarize documents, contracts, or asset descriptions, verifying those claims could reduce misunderstandings and improve trust around digital representations of physical assets.

At the same time, Mira Network faces several challenges.

Verification naturally adds complexity. Breaking content into claims, distributing those claims across multiple nodes, and reaching agreement requires additional resources and coordination. Compared to simply asking one AI model for an answer, this process may be slower and more computationally intensive. For Mira to succeed, the benefits of reliability must clearly outweigh the cost of that extra effort.
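
A rough back-of-the-envelope sketch shows where that overhead comes from. The numbers and function names below are hypothetical, and the model is deliberately simple: it assumes every claim is sent to every verifier, that each call takes the same time, and that coordination costs nothing.

```python
def verification_calls(num_claims: int, num_verifiers: int) -> int:
    """Total verifier calls if every claim goes to every verifier."""
    return num_claims * num_verifiers


def estimated_latency(num_claims: int, num_verifiers: int,
                      seconds_per_call: float, parallel: bool) -> float:
    """Idealized latency: one call's duration if all calls run at once,
    otherwise the sum of all calls run one after another."""
    if parallel:
        return seconds_per_call
    return verification_calls(num_claims, num_verifiers) * seconds_per_call


if __name__ == "__main__":
    # Hypothetical workload: a response with 8 claims checked by 5 verifiers.
    print(verification_calls(8, 5))
    print(estimated_latency(8, 5, 0.5, parallel=False))
    print(estimated_latency(8, 5, 0.5, parallel=True))
```

Under these assumptions, a single answer that would have cost one model call now costs 40 verifier calls. Running them in parallel can hide most of the waiting time, but not the compute.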

Another limitation is philosophical. Even if multiple AI systems agree on something, consensus does not automatically guarantee truth. If several models share similar biases or training data limitations, they might still arrive at the same incorrect conclusion. Mira reduces the risk of individual errors, but it cannot completely eliminate the broader uncertainties that come with artificial intelligence.
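
A small probability sketch illustrates why shared biases matter. It assumes a simple majority vote and a hypothetical per-verifier error rate of 10%. Neither figure comes from Mira, and real models are neither fully independent nor fully correlated, so treat the two cases as the ends of a range.

```python
from math import comb


def majority_error_probability(num_verifiers: int, error_rate: float) -> float:
    """Chance that a strict majority of independent verifiers is wrong at once
    (binomial tail from the smallest majority up to all verifiers)."""
    smallest_majority = num_verifiers // 2 + 1
    return sum(
        comb(num_verifiers, k) * error_rate**k * (1 - error_rate) ** (num_verifiers - k)
        for k in range(smallest_majority, num_verifiers + 1)
    )


def fully_correlated_error_probability(error_rate: float) -> float:
    """If all verifiers make exactly the same mistakes, voting changes nothing."""
    return error_rate


if __name__ == "__main__":
    p = 0.10  # hypothetical per-verifier error rate
    print(f"independent, 5 verifiers: {majority_error_probability(5, p):.5f}")
    print(f"fully correlated:         {fully_correlated_error_probability(p):.5f}")
```

With five independent verifiers, a wrong majority occurs less than 1% of the time in this toy model. If the verifiers all share the same blind spot, the error rate stays at 10% no matter how many of them vote.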

There is also the question of adoption. A verification network only becomes powerful when developers and applications actually use it. If the broader AI ecosystem continues prioritizing speed and convenience above all else, verification infrastructure may take longer to gain traction. But if industries begin demanding stronger guarantees of reliability, networks like Mira could suddenly become much more important.

Looking at the bigger picture, Mira represents a different kind of AI narrative. Much of the current technology race focuses on who can build the most powerful model or generate the most impressive responses. Mira shifts the focus toward something quieter but arguably more important: trust.

Technology often evolves in phases. First comes rapid innovation, where new capabilities appear almost faster than society can absorb them. Later comes the infrastructure that stabilizes those capabilities and makes them dependable enough for real-world use. Mira appears to be positioning itself within that second phase of artificial intelligence.

Instead of asking how fast AI can generate answers, it asks a more thoughtful question: how can we be sure those answers deserve to be trusted?

Final takeaway: Mira Network is not trying to compete with AI models that produce information. It is trying to build the system that verifies whether that information should be believed. If artificial intelligence continues moving deeper into real decisions and real economies, the systems that verify AI may eventually become just as important as the systems that create it.
