I have been thinking a lot about the tension between privacy and verification in artificial intelligence systems. On one hand, AI models often rely on sensitive datasets, proprietary algorithms, or confidential operational environments. On the other hand, as these systems begin influencing financial decisions, infrastructure management, and automated services, the demand for verifiable records of their behavior continues to grow. Balancing these two requirements is not easy. That is partly why I started looking more closely at how Mira Network approaches privacy-preserving machine learning. The phrase “privacy-preserving AI” is often used in research circles, but the practical implications become clearer when AI systems move beyond controlled environments. Machine learning models may process medical records, financial transactions, proprietary business data, or confidential operational information. Organizations deploying those systems often need to demonstrate that the models behave responsibly without exposing the underlying data itself. Traditional approaches usually rely on centralized infrastructure. A company runs the AI model internally, keeps the training data private, and produces reports explaining how the system behaves. For internal operations, that model can work reasonably well. But as AI systems interact with external institutions or automated networks, questions about verification begin to arise.

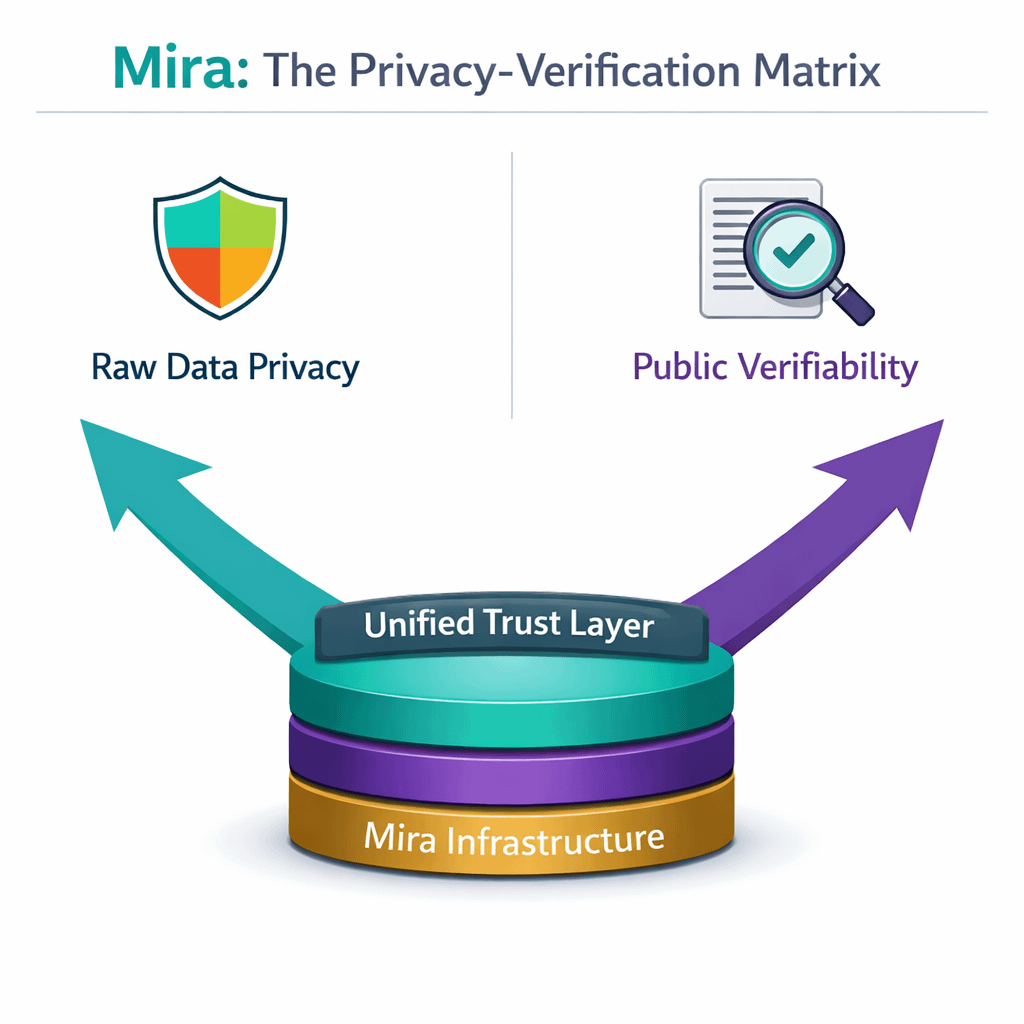

Other participants may want proof that the model followed certain constraints or used specific data sources. At the same time, the organization operating the system may not be willing to expose its raw data or model architecture. That is where cryptographic techniques start to come into the conversation. From what I can see, Mira’s infrastructure attempts to use cryptographic verification methods to bridge this gap. Instead of revealing the entire dataset or model internals, the network focuses on verifying certain properties of the computation itself. The system can demonstrate that an AI model executed under defined conditions without necessarily exposing the sensitive information behind that execution. In simple terms, the network attempts to prove that something happened without revealing every detail about how it happened. I find that idea interesting because it aligns with a broader shift occurring in cryptographic systems. Techniques like zero-knowledge proofs and secure verification methods are increasingly used to validate transactions or computations without exposing underlying data. Applying similar ideas to machine learning introduces the possibility of verifying AI behavior while still protecting private information. Still, I try to avoid assuming that these systems automatically solve the problem.

Other participants may want proof that the model followed certain constraints or used specific data sources. At the same time, the organization operating the system may not be willing to expose its raw data or model architecture. That is where cryptographic techniques start to come into the conversation. From what I can see, Mira’s infrastructure attempts to use cryptographic verification methods to bridge this gap. Instead of revealing the entire dataset or model internals, the network focuses on verifying certain properties of the computation itself. The system can demonstrate that an AI model executed under defined conditions without necessarily exposing the sensitive information behind that execution. In simple terms, the network attempts to prove that something happened without revealing every detail about how it happened. I find that idea interesting because it aligns with a broader shift occurring in cryptographic systems. Techniques like zero-knowledge proofs and secure verification methods are increasingly used to validate transactions or computations without exposing underlying data. Applying similar ideas to machine learning introduces the possibility of verifying AI behavior while still protecting private information. Still, I try to avoid assuming that these systems automatically solve the problem.

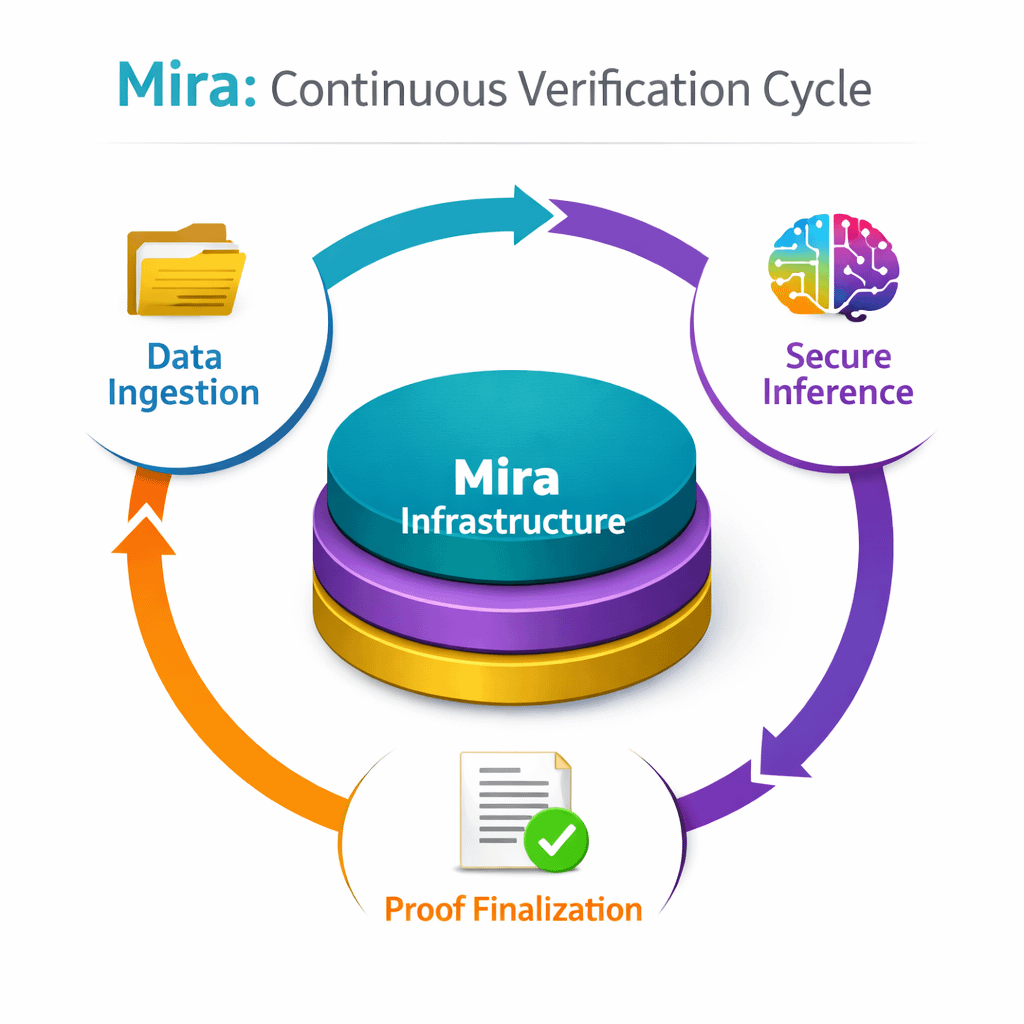

Machine learning pipelines are complex environments. Data ingestion, preprocessing, model training, and inference each involve multiple layers of computation. Integrating cryptographic verification into those processes requires careful engineering. If verification mechanisms become too computationally heavy, they could slow down systems that already demand significant processing resources. Another question I keep pondering involves incentives. Decentralized verification networks rely on participants who validate records and maintain consensus about what occurred. Those participants need reliable mechanisms to confirm AI behavior without having direct access to the data itself. Designing incentive structures that encourage accurate validation while preserving privacy can be challenging. There is also the challenge of integration with existing AI infrastructure. Developers already use complex toolchains to train and deploy machine learning systems. Introducing cryptographic verification layers into those pipelines must be done in a way that fits naturally with existing workflows. If the process becomes too complicated or costly, organizations may continue relying on traditional centralized auditing systems. Despite these challenges, the direction Mira is exploring seems increasingly relevant. AI systems are becoming more autonomous and are beginning to operate across institutional boundaries. Financial platforms, research environments, and automated services may all depend on machine learning systems that process sensitive information. In those environments, the ability to verify AI activity without exposing private data becomes valuable. From my perspective, Mira’s approach reflects an attempt to create infrastructure that supports both verification and privacy simultaneously. Whether that infrastructure ultimately becomes widely adopted remains uncertain. Cryptographic systems often seem promising in theory but require years of practical experimentation before they become reliable components of real-world technology stacks. For now, I see Mira Network’s work less as a finished solution and more as an exploration of how cryptography might reshape the relationship between machine learning and trust. If AI systems continue expanding into areas where both transparency and privacy matter, the need for such mechanisms may gradually become more apparent. And when that happens, the ability to verify machine learning behavior without revealing sensitive data could become one of the most important pieces of infrastructure supporting the next generation of AI systems.

Machine learning pipelines are complex environments. Data ingestion, preprocessing, model training, and inference each involve multiple layers of computation. Integrating cryptographic verification into those processes requires careful engineering. If verification mechanisms become too computationally heavy, they could slow down systems that already demand significant processing resources. Another question I keep pondering involves incentives. Decentralized verification networks rely on participants who validate records and maintain consensus about what occurred. Those participants need reliable mechanisms to confirm AI behavior without having direct access to the data itself. Designing incentive structures that encourage accurate validation while preserving privacy can be challenging. There is also the challenge of integration with existing AI infrastructure. Developers already use complex toolchains to train and deploy machine learning systems. Introducing cryptographic verification layers into those pipelines must be done in a way that fits naturally with existing workflows. If the process becomes too complicated or costly, organizations may continue relying on traditional centralized auditing systems. Despite these challenges, the direction Mira is exploring seems increasingly relevant. AI systems are becoming more autonomous and are beginning to operate across institutional boundaries. Financial platforms, research environments, and automated services may all depend on machine learning systems that process sensitive information. In those environments, the ability to verify AI activity without exposing private data becomes valuable. From my perspective, Mira’s approach reflects an attempt to create infrastructure that supports both verification and privacy simultaneously. Whether that infrastructure ultimately becomes widely adopted remains uncertain. Cryptographic systems often seem promising in theory but require years of practical experimentation before they become reliable components of real-world technology stacks. For now, I see Mira Network’s work less as a finished solution and more as an exploration of how cryptography might reshape the relationship between machine learning and trust. If AI systems continue expanding into areas where both transparency and privacy matter, the need for such mechanisms may gradually become more apparent. And when that happens, the ability to verify machine learning behavior without revealing sensitive data could become one of the most important pieces of infrastructure supporting the next generation of AI systems.