I keep noticing that most conversations about AI reliability circle back to one idea: make the model better. Bigger nets, more data, fresher fine-tuning. But there's a different risk that gets less attention: dependency on a single model as the de facto source of truth.

That single point of epistemic failure looks simple on paper and is dangerous in practice. If the model is biased, miscalibrated, or simply unlucky on a slice of data, the whole stack inherits that error.

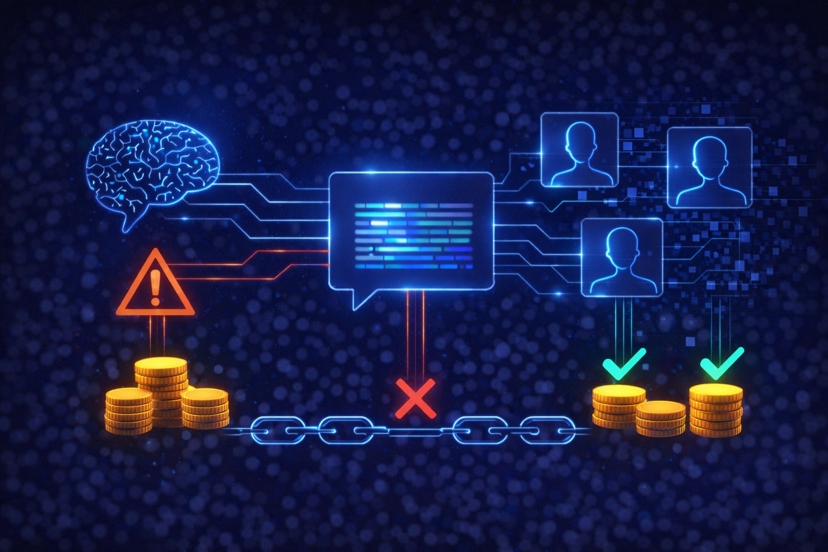

What @Mira - Trust Layer of AI seems to do is change the question. Instead of asking one model to be the final arbiter, Mira turns outputs into verifiable claims and farms them out to multiple independent verifiers. Agreement across those verifiers, not one model's confidence score, becomes the signal we trust.

That reduces the chance that one model's blind spot becomes everyone's mistake. It also surfaces disagreement early, which is useful information, not noise.
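To make the idea concrete, here is a minimal Python sketch of agreement-based verification. It is not Mira's actual protocol or API; the names `Verifier`, `Verdict`, and `verify_claim`, and the two-thirds threshold, are all assumptions I made up for illustration. Each verifier votes on a claim, and the share of agreeing votes, rather than any single model's confidence, decides the outcome. A split vote is flagged as disputed instead of being hidden.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# Hypothetical interface: a verifier takes a claim and returns
# True (claim supported) or False (claim not supported).
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    accepted: bool     # enough verifiers supported the claim
    agreement: float   # share of verifiers on the majority side
    disputed: bool     # neither side reached the threshold


def verify_claim(
    claim: str,
    verifiers: Sequence[Verifier],
    threshold: float = 2 / 3,  # assumed value, not taken from Mira
) -> Verdict:
    """Aggregate independent votes on one claim into a single verdict."""
    if not verifiers:
        raise ValueError("at least one verifier is required")

    votes = [bool(verify(claim)) for verify in verifiers]
    support = sum(votes) / len(votes)

    accepted = support >= threshold
    rejected = (1 - support) >= threshold
    return Verdict(
        claim=claim,
        accepted=accepted,
        agreement=max(support, 1 - support),
        disputed=not (accepted or rejected),
    )


if __name__ == "__main__":
    # Toy verifiers standing in for independent models.
    panel = [lambda c: True, lambda c: True, lambda c: True, lambda c: False]
    print(verify_claim("Water boils at 100 C at sea level.", panel))
```

In this toy version a disputed verdict is just a flag. In a real system it would be the point where a claim gets escalated, re-checked, or returned with a warning, which is the "disagreement as information" idea in practice.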

Of course, distributing verification brings tradeoffs. More verifiers mean more cost, more coordination, and a design that must anticipate collusion or incentive failure. Latency can rise, and governance questions appear.

Still, shifting reliability from a single model to a coordination layer changes where we engineer safety. It makes verification a system property rather than a feature bolted onto a single model.

If Mira can make that coordination practical and economically robust, it moves us away from single-model risk and toward a more resilient way of trusting AI.

$MIRA #Mira @Mira - Trust Layer of AI $DEGO $SOL #ETH #dego #MarketSentimentToday #Binance