What keeps pulling my attention in Pixels isn’t trust.

It’s the part where they call it trust while using it like a gate.

Reputation. Trust Score. Clean names. Soft language. It sounds like you’re earning something social: credibility, community standing, a signal that you’re a “good player.”

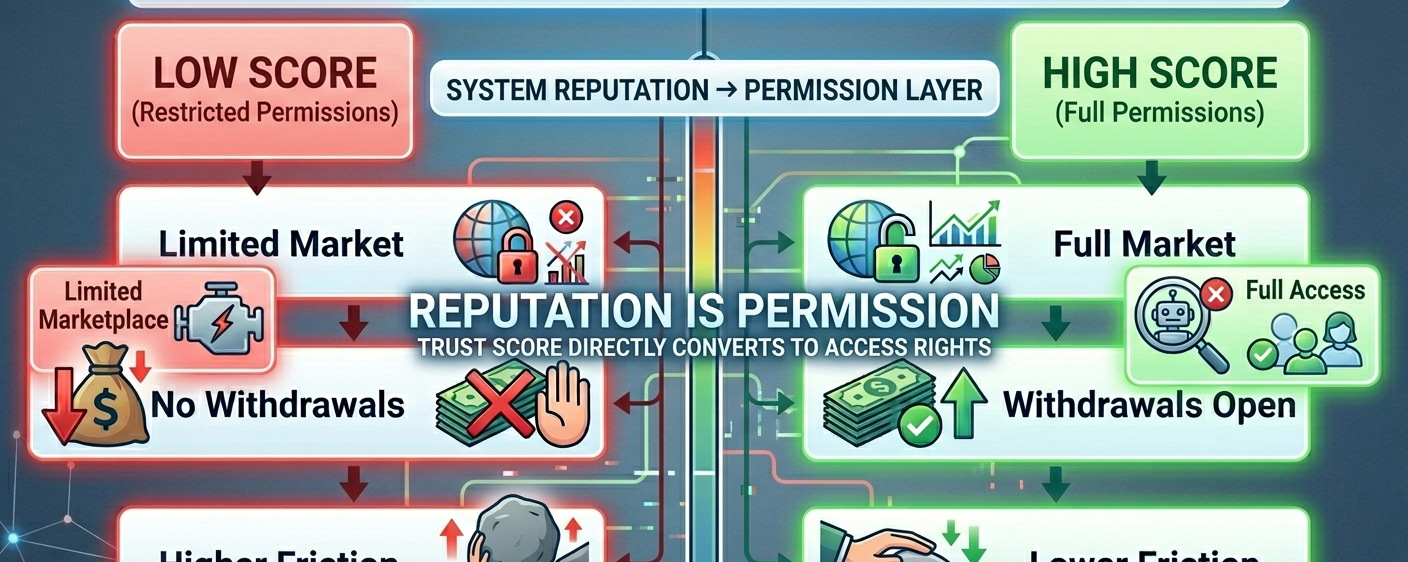

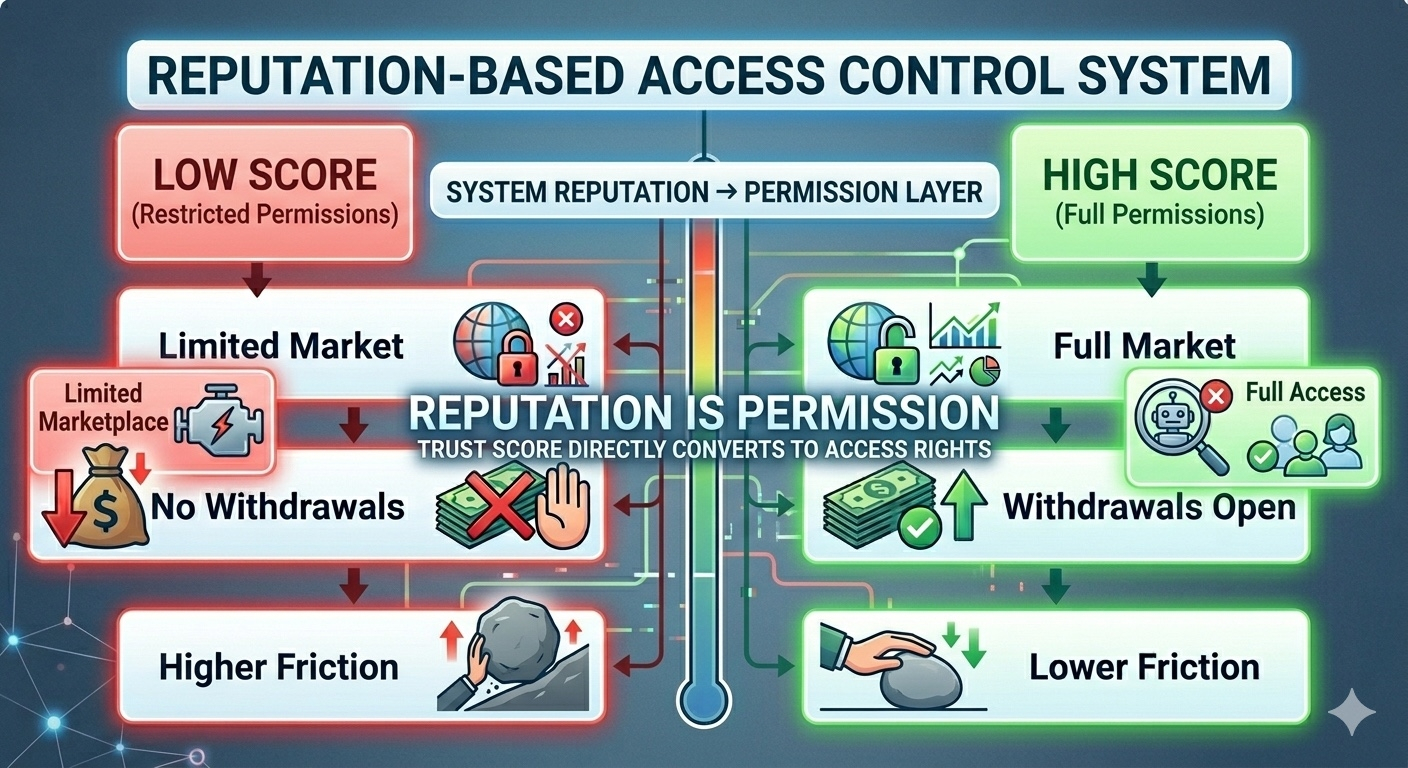

Then you follow where it actually matters.

Trade access depends on it.

Withdrawals sit behind it.

Marketplace participation shifts with it.

Guild creation unlocks through it.

Even fee treatment moves depending on where you land.

That’s not just a badge.

That’s infrastructure.

And it doesn’t behave like something passive. It sits right in the middle of the economy, quietly shaping who gets to move freely and who doesn’t.
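To make the gating concrete, here is a minimal sketch of how a single score could map to permissions. Everything in it is hypothetical: the threshold numbers, the names `GATES`, `permissions_for`, and `fee_rate`, and the fee rule itself are illustrative assumptions, not Pixels' actual values or code.

```python
# Hypothetical thresholds for illustration only; not Pixels' real numbers.
GATES = {
    "trade": 500,
    "marketplace": 800,
    "withdraw": 1200,
    "create_guild": 1500,
}

def permissions_for(score: int) -> dict[str, bool]:
    """Return which actions a given score unlocks under the assumed gates."""
    return {action: score >= minimum for action, minimum in GATES.items()}

def fee_rate(score: int) -> float:
    """Assumed fee rule: a lower fee once the score clears the withdraw gate."""
    return 0.01 if score >= GATES["withdraw"] else 0.05

# Two players in the same world, holding different rights.
newer_player = permissions_for(600)
established_player = permissions_for(1600)
```

Under these made-up numbers, the first player can trade but cannot list on the marketplace, withdraw, or create a guild, and pays the higher fee. The second can do all of it.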

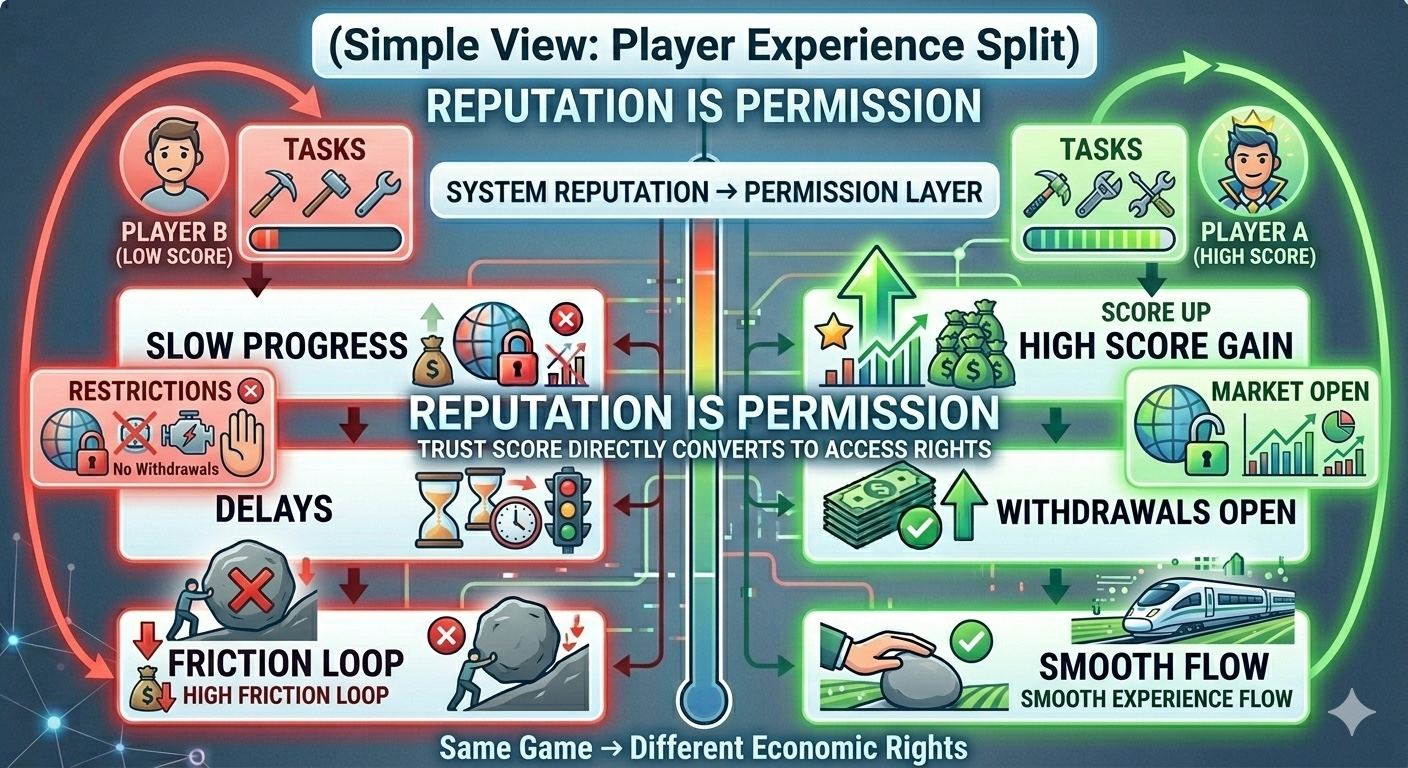

You start noticing it in real play.

One player stays inside the loop — doing tasks, building score, getting closer to full access. The system gradually opens up. Trading becomes easier. Withdrawals unlock. Movement feels smoother.

Another player is still doing the same farming, the same gathering, the same basic loop — but keeps hitting walls.

Marketplace access is partial.

Withdrawals are locked.

Progress feels slower, not because of effort, but because of permission.

Same world.

Different rights.

You can call that trust if you want.

It doesn’t feel like trust.

Because trust, in most systems, reflects behavior.

This feels like something that actively manages access.

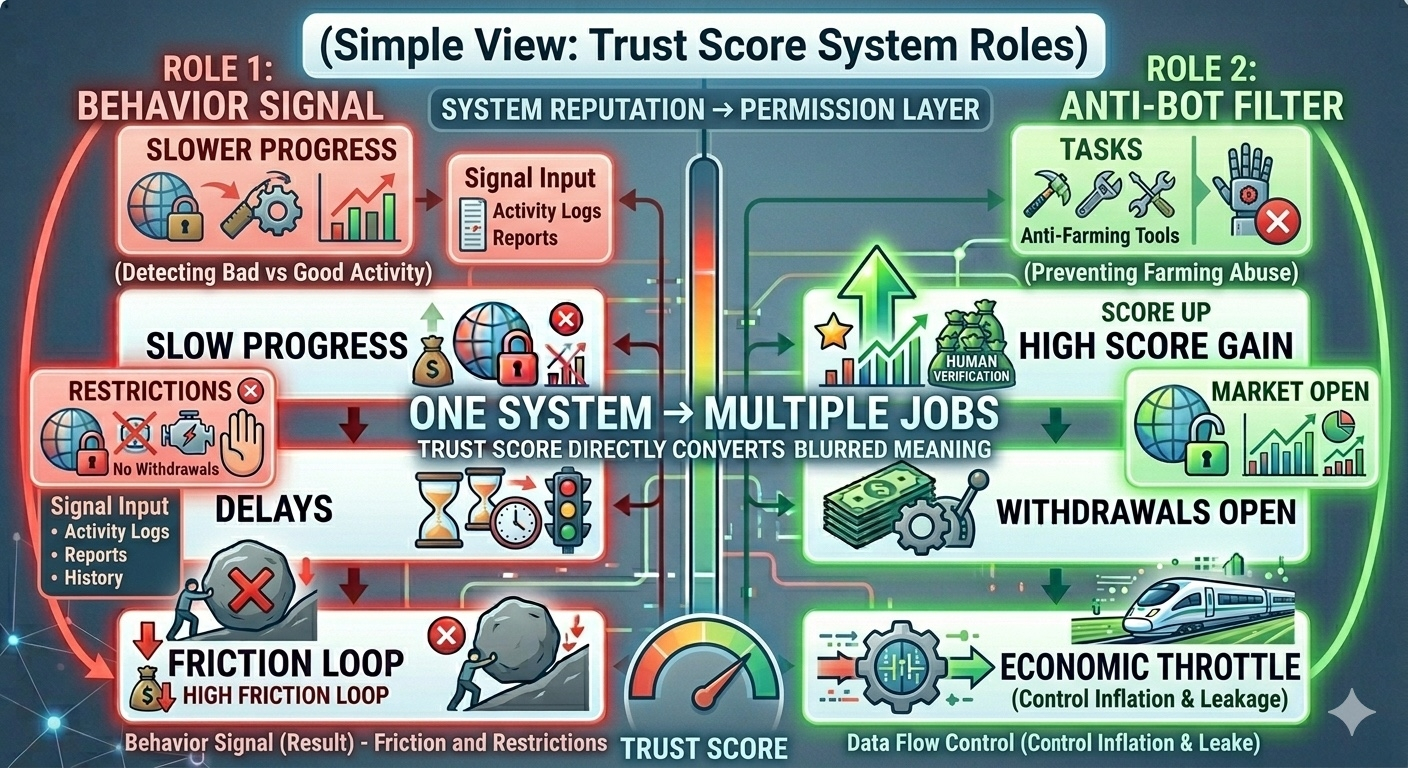

And it’s not just about stopping bots.

If it were only that, the design would be simpler. Detect abuse, block it, move on.

But here, the score is doing more.

It signals “good behavior.”

It filters automated farming.

It controls how much value can move through the system.

That’s three different jobs.

When one system starts doing three jobs at once, something subtle happens.

It stops being transparent.

A player thinks they’re building reputation.

The system is deciding how much access to allow.

That difference matters.

Because now the score isn’t just measuring activity. It’s reacting to pressure inside the economy.

If farming becomes too efficient, the system needs to slow things down.

If value starts leaking too easily, the system needs tighter control.

And the easiest place to apply that control is the same layer already tied to access:

The Trust Score.

That’s where it shifts from “social design” into something else.

It starts acting like economic border control.

Not in an obvious way. Nothing aggressive. No hard stops that feel like punishment.

Just gradual shaping.

More friction here.

More requirements there.

More effort needed before access opens.

From the player side, it still looks like progression.

From the system side, it’s regulation.
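One way to picture that kind of regulation is a gate whose threshold moves with conditions in the economy. This is a speculative sketch, not a description of how Pixels works: the `pressure` input, the scaling rule, and the base value of 1200 are all assumptions made for illustration.

```python
def withdraw_threshold(base: int, pressure: float) -> int:
    """Assumed rule: the gate rises in proportion to economic pressure (0.0 to 1.0)."""
    return round(base * (1 + pressure))

def can_withdraw(score: int, pressure: float, base: int = 1200) -> bool:
    """Check a fixed player score against a gate that shifts with pressure."""
    return score >= withdraw_threshold(base, pressure)

# Same player, same score, two states of the economy.
calm = can_withdraw(1500, pressure=0.0)   # gate sits at 1200
tense = can_withdraw(1500, pressure=0.5)  # gate sits at 1800
```

The player's score is identical in both calls. Only the gate moved, and from the player's side that would look like "keep building your score."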

The interesting part is how clean it feels on the surface.

You’re not told you’re being restricted.

You’re told to keep playing. Keep building. Keep improving your score.

And that’s where the framing does most of the work.

Because “trust” suggests something earned socially.

But what’s actually being handed out is permission.

Permission to trade.

Permission to withdraw.

Permission to operate with fewer constraints.

That’s not just reputation.

That’s access control wrapped in softer language.

And it makes sense why it exists.

Open reward systems in Web3 games don’t hold up well under pressure. Players optimize quickly. Extraction scales. Economies get drained.

So systems like this appear.

Layers that slow things down.

Filters that separate users.

Mechanisms that decide how fast value can move.

From a design perspective, it’s rational.

Probably necessary.

But it changes how the system feels once you see it clearly.

Because now you’re not just playing a game.

You’re moving through a set of permissions.

You’re not just building reputation.

You’re unlocking economic rights.

And those rights aren’t fixed — they can shift depending on how the system needs to behave.

That’s the uncomfortable question sitting underneath it.

When the economy gets tense, what is the Trust Score really measuring?

Actual participation?

Consistent behavior?

Or how tightly the system needs to keep the gate closed at that moment?

Because if the same score is responsible for signaling behavior, filtering abuse, and controlling value flow…

Then at least one of those roles is going to influence the others.

And the player won’t always know which one.

Pixels ($PIXEL) calls it trust.

And maybe part of it is.

But once that score decides who can move freely and who stays restricted, it stops feeling purely social.

It starts feeling like a gate.

And the more important the economy becomes, the more that gate starts to matter.