I remember hesitating before signing a transaction, not because I didn’t trust the protocol, but because I understood it too well.

Every action was visible. Not just the transaction, but the pattern around it: timing, wallet history, counterparties. It wasn't exposure in a dramatic sense. It was quieter than that, a kind of persistent transparency that made me second-guess perfectly rational decisions.

I signed anyway.

But the hesitation stayed with me.

Over time, I began noticing how normalized this discomfort had become.

We talk about transparency as if it’s inherently good. And in many ways, it is. It reduces fraud, improves verification, and builds shared confidence without intermediaries.

But there’s a tradeoff we rarely examine.

When everything is visible, behavior adapts.

Not always dishonestly, just strategically. People optimize not only for outcomes, but for how those outcomes will be perceived. Positions are adjusted, timing becomes cautious, and decision-making starts to account for external observation.

In a system like that, transparency doesn’t just reveal behavior.

It shapes it.

I came across @MidnightNetwork in that context.

At first, it sounded like a familiar narrative: another privacy-focused chain attempting to obscure data in a system built on openness.

Privacy in crypto often feels binary. Either everything is visible, or everything is hidden. And both extremes introduce their own inefficiencies.

But Midnight didn't frame privacy as the absence of visibility.

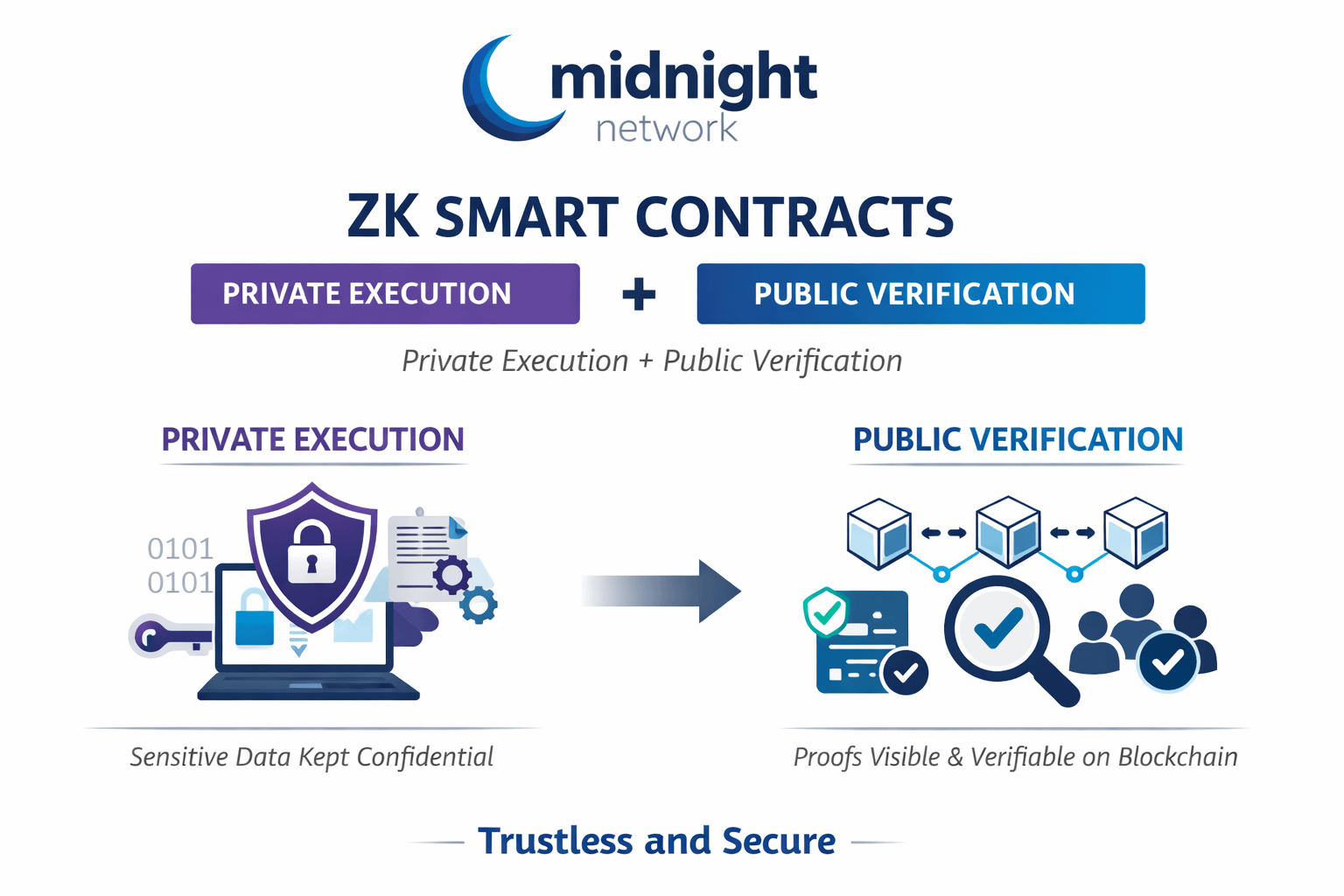

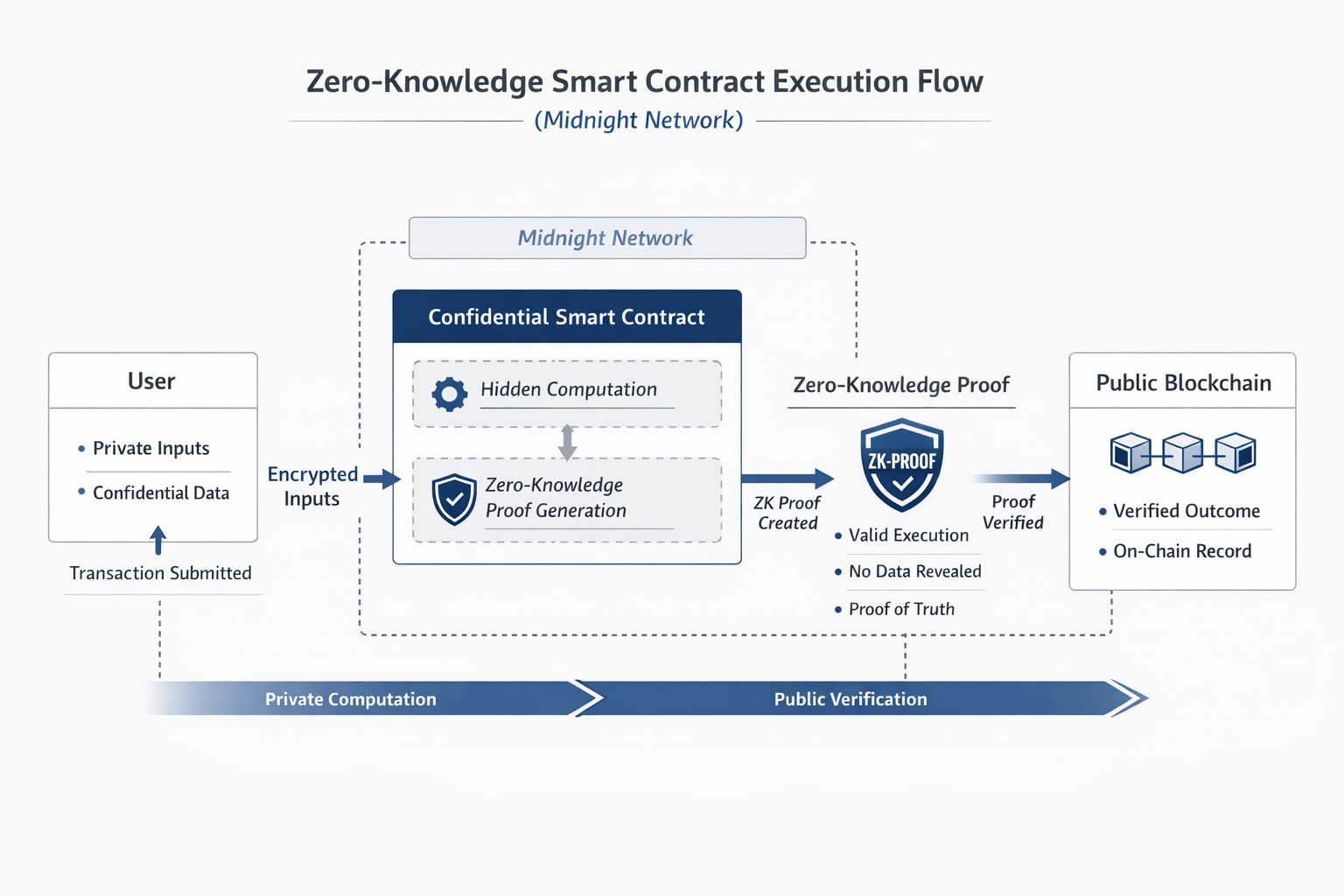

It introduced zero-knowledge smart contracts, and that distinction took time to fully register.

These aren’t just private transactions.

The contracts themselves can maintain confidential state, executing logic on hidden inputs while producing outputs that remain publicly verifiable. The system doesn't ask you to trust what happened; it proves that it happened correctly, without exposing the underlying data.
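To make "proves that it happened correctly, without exposing the underlying data" a bit more concrete, here is a toy TypeScript sketch of the simplest zero-knowledge idea: a Schnorr proof, made non-interactive with the Fiat-Shamir heuristic, showing that someone knows a secret `x` behind a public value `y = g^x mod p` without ever revealing `x`. This is not Midnight's proof system, which proves arbitrary contract logic rather than one equation; the group parameters are hypothetical and far too small to be secure, and all function names are illustrative.

```typescript
import { createHash, randomInt } from "node:crypto";

// Toy group parameters (hypothetical, insecure, for illustration only):
// p = 2q + 1 is a safe prime, and g = 4 generates the subgroup of prime order q.
const p = 2039n;
const q = 1019n;
const g = 4n;

// Square-and-multiply modular exponentiation on BigInt.
function modPow(base: bigint, exp: bigint, mod: bigint): bigint {
  let result = 1n;
  let b = base % mod;
  let e = exp;
  while (e > 0n) {
    if ((e & 1n) === 1n) result = (result * b) % mod;
    b = (b * b) % mod;
    e >>= 1n;
  }
  return result;
}

// Fiat-Shamir: derive the verifier's challenge by hashing the public values.
function challenge(y: bigint, t: bigint): bigint {
  const digest = createHash("sha256").update(`${g}|${y}|${t}`).digest("hex");
  return BigInt("0x" + digest) % q;
}

interface Proof {
  t: bigint; // commitment g^r
  s: bigint; // response r + c*x mod q
}

// Prover: holds secret x, publishes y = g^x and a proof, never x itself.
function prove(x: bigint): { y: bigint; proof: Proof } {
  const y = modPow(g, x, p);
  const r = BigInt(randomInt(1, Number(q))); // fresh random nonce in [1, q-1]
  const t = modPow(g, r, p);
  const c = challenge(y, t);
  const s = (r + c * x) % q;
  return { y, proof: { t, s } };
}

// Verifier: checks g^s == t * y^c (mod p) using only public data.
function verify(y: bigint, proof: Proof): boolean {
  const c = challenge(y, proof.t);
  return modPow(g, proof.s, p) === (proof.t * modPow(y, c, p)) % p;
}
```

The verifier sees `y`, `t` and `s`, which is enough to be convinced the prover knows `x`, but not enough to recover it (with realistic parameter sizes).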

Initially, this felt almost counterintuitive.

But upon reflection, it felt closer to how trust actually works outside of blockchains.

We rarely require full visibility. We require reliable outcomes.

What stood out wasn’t privacy itself.

It was control over what gets revealed.

In the #night model, privacy isn't fixed; it's programmable. Developers define, at the contract level, which data remains private and which parts are exposed for verification.
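A rough sketch of what "developers define which data remains private" could look like, assuming a hypothetical per-field visibility policy. This is not Midnight's API or its Compact contract language, and it swaps zero-knowledge proofs for plain salted hash commitments. So it only shows the disclosure choice (public fields revealed as-is, private fields committed and openable to an auditor later), not a proof that the contract logic itself ran correctly. `Field`, `applyPolicy` and `auditField` are illustrative names.

```typescript
import { createHash, randomBytes } from "node:crypto";

// Hypothetical visibility flag chosen per field by the contract author.
type Visibility = "public" | "private";

interface Field {
  value: string;
  visibility: Visibility;
}

interface PublicView {
  revealed: Record<string, string>; // public fields, shown as-is
  commitments: Record<string, string>; // salted hashes standing in for hidden fields
}

// Salted SHA-256 commitment: hides the value while binding the owner to it.
// (A stand-in for a real ZK proof, which could also prove rules about the value.)
function commit(value: string, salt: string): string {
  return createHash("sha256").update(salt + ":" + value).digest("hex");
}

// Split a record into what everyone sees and the salts the owner keeps.
function applyPolicy(record: Record<string, Field>): {
  view: PublicView;
  salts: Record<string, string>;
} {
  const view: PublicView = { revealed: {}, commitments: {} };
  const salts: Record<string, string> = {};
  for (const [name, field] of Object.entries(record)) {
    if (field.visibility === "public") {
      view.revealed[name] = field.value;
    } else {
      const salt = randomBytes(16).toString("hex");
      salts[name] = salt;
      view.commitments[name] = commit(field.value, salt);
    }
  }
  return { view, salts };
}

// Later, the owner can open a single hidden field to an auditor, and only that one.
function auditField(view: PublicView, name: string, value: string, salt: string): boolean {
  return view.commitments[name] === commit(value, salt);
}
```

The point of the sketch is that visibility becomes a decision made per field, in code, rather than a property of the whole chain.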

That creates a different kind of system.

Not opaque. Not fully transparent. But selectively legible.

And that subtlety changes everything.

In most blockchain environments today, transparency acts as a substitute for trust. You verify everything because you can see everything.

But that model doesn’t extend cleanly into all use cases.

Financial strategies, institutional flows, governance decisions: these often require confidentiality, not because they are malicious, but because they are sensitive. When they are fully exposed, participants adapt in ways that reduce efficiency.

Midnight reframes this dynamic.

It separates execution from exposure.

The contract executes privately. The outcome is proven publicly.

And that distinction reduces the need for behavioral distortion.

The more I thought about it, the more it aligned with how people actually behave under observation.

When actions are constantly visible, risk-taking narrows. Exploration becomes constrained. Even well-intentioned participants begin to optimize for perception rather than substance.

Privacy, in this context, isn’t just protective.

It’s enabling.

It allows participants to act based on strategy rather than surveillance, while still maintaining verifiable integrity at the system level.

There’s also a meaningful shift in how builders approach design.

In transparent systems, developers implicitly design for visibility. Data becomes part of the interface, whether intended or not.

With confidential smart contracts, the design question changes.

It becomes: what needs to be proven?

Not everything needs to be shown. Only what is necessary for verification.

This leads to more precise systems. Less noise. Clearer intent.

Another aspect that became clearer over time is how this model aligns with real-world constraints.

Most privacy systems struggle with a key tension: privacy often comes at the cost of auditability.

Midnight approaches this differently.

Because outcomes remain verifiable, it introduces a form of controlled auditability. Sensitive data can remain hidden, while proofs provide assurance that rules were followed.

This begins to align with institutional requirements where confidentiality is necessary, but so is compliance.

Stepping back, Midnight doesn’t feel like a replacement for existing blockchains.

It feels more like a complementary layer, one that introduces confidentiality where full transparency becomes a constraint rather than a benefit.

As ecosystems mature, this distinction becomes more relevant.

Not every interaction needs to be public. But every interaction needs to be trustworthy.

Midnight ($NIGHT) separates those requirements instead of forcing them into the same layer.

There’s a broader shift happening here.

We’re moving beyond the assumption that more visibility always leads to better systems.

Early crypto relied on radical transparency as a foundation. And it worked, up to a point.

But as usage expands, the limitations of that model become more visible. Different users, different contexts, different expectations.

Privacy is no longer optional in many of these environments.

It’s structural.

I still think about that moment before signing the transaction.

The hesitation wasn’t about risk. It was about exposure.

About whether every action needed to become part of a permanent, public narrative.

Midnight doesn’t remove transparency.

It refines it.

I used to think trust in crypto came from seeing everything.

Now I’m starting to think it comes from proving what matters,

and leaving the rest unobserved.