When I hear “AI outputs verified on-chain,” my first reaction isn’t awe. It’s caution. Not because verification is unimportant, but because the phrase risks sounding like a magic stamp — as if adding blockchain automatically turns probabilistic systems into sources of truth. It doesn’t. What it does, at best, is change how confidence is produced, measured, and trusted.

Most AI systems today operate on statistical likelihoods. They generate answers that sound correct, often without a reliable mechanism to prove they are. The real issue isn’t that models make mistakes — it’s that users have no structured way to distinguish between a confident guess and a validated claim. That gap is where reliability breaks down, especially in finance, automation, and decision systems.

The traditional model places the burden of verification on the user. You receive an output, cross-check sources, compare responses, and decide what to trust. This works for low-stakes use, but collapses at scale. Autonomous systems can’t pause to “Google the answer,” and enterprises can’t afford workflows that depend on manual validation. The friction isn’t just inconvenient — it makes AI unsuitable for critical operations.

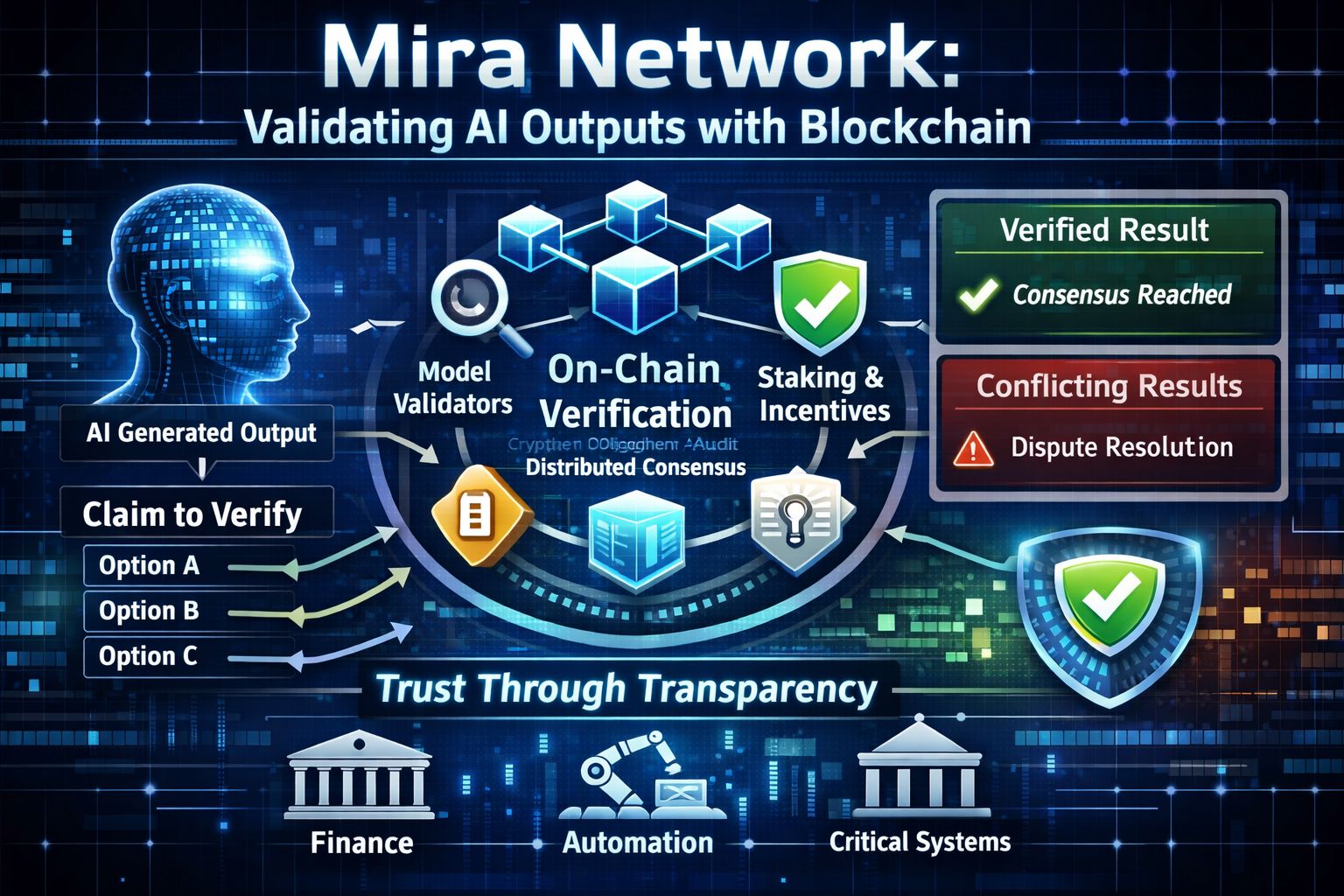

Mira Network shifts that responsibility away from the end user. Instead of presenting AI responses as finished products, it breaks them into verifiable claims that can be independently checked. These claims are then distributed across multiple models and validators, creating a consensus layer around the output. The result isn’t a claim that something is true, but a transparent record of how many independent systems agreed it was.
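
To make that idea concrete, here is a minimal sketch of what such a record could look like. It is not Mira Network's actual protocol or API: the `ConsensusRecord` type, the sentence-level claim splitting, and the toy validators are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical: a validator is any function that accepts or rejects a claim.
Validator = Callable[[str], bool]


@dataclass
class ConsensusRecord:
    claim: str
    votes: Dict[str, bool]  # validator name -> verdict

    @property
    def agreement(self) -> float:
        """Fraction of validators that accepted the claim."""
        return sum(self.votes.values()) / len(self.votes)


def split_into_claims(response: str) -> List[str]:
    # Naive assumption: one sentence equals one claim.
    return [s.strip() for s in response.split(".") if s.strip()]


def verify(response: str, validators: Dict[str, Validator]) -> List[ConsensusRecord]:
    # Send every claim to every validator and keep the full vote record.
    return [
        ConsensusRecord(claim, {name: check(claim) for name, check in validators.items()})
        for claim in split_into_claims(response)
    ]


# Toy validators standing in for independent models.
validators = {
    "model_a": lambda c: "Paris" in c,
    "model_b": lambda c: "capital" in c,
    "model_c": lambda c: len(c) > 10,
}

records = verify("Paris is the capital of France. The moon is cheese.", validators)
for r in records:
    print(f"{r.claim!r}: {r.agreement:.0%} agreement")
```

Note what the record does and doesn't say: it reports how many validators agreed, not whether the claim is true.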

Of course, verification doesn’t happen in a vacuum. If a claim is validated through multiple models, there must be a process determining which models participate, how disagreements are resolved, and how results are recorded. Sometimes this involves staking mechanisms. Sometimes it relies on reputation systems or cryptographic attestations. Whatever the method, it introduces a new surface for incentives — one that shapes how truth is economically enforced.

This is where the deeper shift emerges. Verification becomes a market. Validators are rewarded for accuracy and penalized for false attestation. Model diversity becomes an asset rather than a redundancy. Instead of trusting a single provider’s training data and alignment choices, users rely on a competitive process that surfaces agreement across independent systems. Trust moves from brand reputation to verifiable consensus.
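
A rough sketch of how "rewarded for accuracy and penalized for false attestation" might work in a staking design. The `settle` function, the reward and slash rates, and the assumption that a resolved `outcome` is available (say, from a dispute process) are all hypothetical; real systems will differ.

```python
from typing import Dict


def settle(
    stakes: Dict[str, float],
    votes: Dict[str, bool],
    outcome: bool,
    reward_rate: float = 0.05,  # assumed payout for a correct attestation
    slash_rate: float = 0.20,   # assumed penalty for a false attestation
) -> Dict[str, float]:
    """Return updated stakes after one claim has been resolved."""
    updated = {}
    for name, stake in stakes.items():
        if votes[name] == outcome:
            updated[name] = stake * (1 + reward_rate)
        else:
            updated[name] = stake * (1 - slash_rate)
    return updated


stakes = {"v1": 100.0, "v2": 100.0, "v3": 100.0}
votes = {"v1": True, "v2": True, "v3": False}

# Assume the claim was ultimately resolved as true.
new_stakes = settle(stakes, votes, outcome=True)
print(new_stakes)
```

The uncomfortable part is the `outcome` argument. If it is simply the majority vote, the mechanism pays validators for agreeing with each other, not for being right.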

But consensus is not the same as correctness. If multiple models share the same blind spots, they can agree on the same wrong answer. This is a failure mode that looks like reliability until it fails catastrophically. In centralized AI, errors are opaque but localized. In consensus-driven verification, errors can become systemic if validator diversity is insufficient or incentives encourage conformity over scrutiny.
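
A back-of-the-envelope calculation shows why shared blind spots matter so much. The sketch below compares two idealized extremes: validators that err independently, and validators that always err together. The 10% error rate and the five-validator set are illustrative assumptions, not measurements of any real system.

```python
from math import comb


def majority_wrong_independent(n: int, p: float) -> float:
    """P(more than half of n validators are wrong), each erring independently with probability p."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def majority_wrong_shared(n: int, p: float) -> float:
    """Extreme case: every validator shares the same blind spot, so they all err together."""
    return p


n, p = 5, 0.10
independent = majority_wrong_independent(n, p)
shared = majority_wrong_shared(n, p)
print(f"independent errors: {independent:.4f}")
print(f"shared blind spot:  {shared:.4f}")
```

With five independent validators that are each wrong 10% of the time, a wrong majority occurs in under 1% of cases. If they share one blind spot, adding validators buys nothing: the consensus is wrong 10% of the time, and it is unanimous when it is. Real validator sets sit somewhere between these two extremes.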

That shifts the trust boundary upward. Users are no longer just trusting a model; they are trusting the validator set, the staking rules, the dispute mechanisms, and the economic incentives that govern participation. If those layers behave predictably under stress, the system earns confidence. If they don’t, verification becomes theater — a process that signals rigor without delivering it.

There’s also a subtle change in security posture. When AI outputs are tied to cryptographic attestations, they become composable. Other systems can rely on them automatically. This enables automation at a scale that was previously unsafe. But composability raises the stakes: a flawed verification pipeline doesn’t just misinform a user — it can propagate errors across dependent systems in real time.

As a result, applications built on verified AI inherit a new responsibility. They can no longer treat outputs as advisory. If they advertise verification, users will assume reliability. That expectation transforms verification from a technical feature into a product guarantee. When something goes wrong, the question won’t be which model erred — it will be why the verification layer failed to catch it.

This creates a new competitive arena. AI platforms won’t just compete on model performance; they’ll compete on verification quality. How transparent is the validator set? How quickly are disputes resolved? How resistant is the system to coordinated manipulation? How does it behave under adversarial conditions? In high-stakes environments, these questions matter more than marginal gains in benchmark scores.

The strategic shift here is subtle but significant. Mira Network treats verification as infrastructure — something specialized actors maintain — rather than a burden placed on every user. The goal isn’t to eliminate uncertainty, but to make confidence measurable and auditable. In calm conditions, this looks like a trust upgrade. In volatile or adversarial conditions, it becomes a stress test of incentives, diversity, and governance.

So the real question isn’t whether blockchain can validate AI outputs. It’s who defines validation, how incentives shape agreement, and what happens when consensus collides with reality.
