I used to think game rewards were straightforward. Give players something valuable, make them feel good, and that should be enough. But in Pixels, that idea kept falling apart. Rewards were everywhere. The first day felt exciting. The second day still had energy. By the third, the world started to feel quiet again. That was the real question sitting underneath all of it: did the reward actually change anything, or did it just create a short burst of noise?

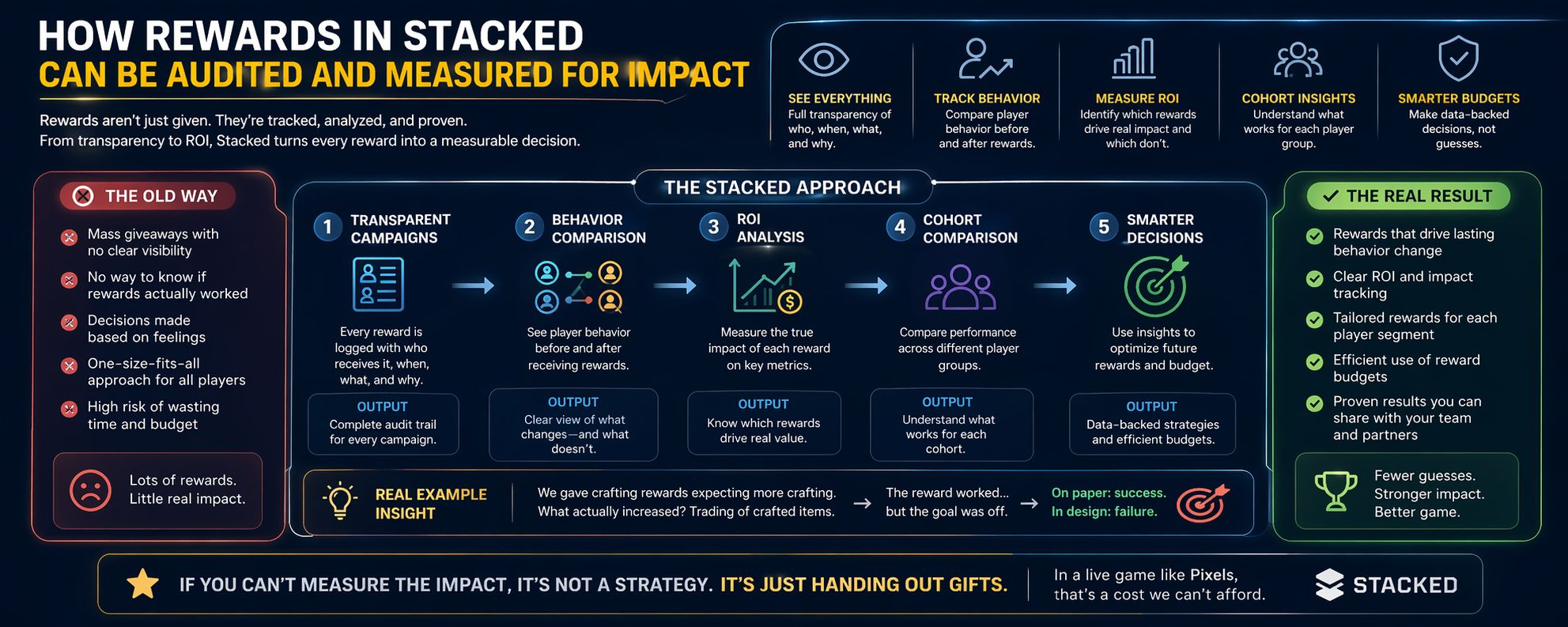

What stands out to me in Stacked is that it changes the conversation around rewards. The focus is no longer only on what is being handed out. It is on what can be seen, tracked, and understood afterward.

The first thing that matters is clarity. Every campaign can be traced. You can see who received the reward, when they received it, and why they were selected in the first place. It is not just a broad distribution with no clear logic and no real visibility once it is done.

That alone changes a lot. If a reward fails, there is less room for vague explanations. You can look at the trail and understand where it broke down.
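To make the idea of a "trail" concrete, here is a minimal sketch of what one traceable reward record could look like. I am not describing Stacked's actual schema here; the `RewardGrant` class, its field names, and the `grants_for_campaign` helper are all hypothetical, chosen only to mirror the who, when, and why described above.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class RewardGrant:
    """One reward handed to one player (hypothetical shape, not a real schema)."""
    campaign_id: str       # which campaign issued the reward
    player_id: str         # who received it
    granted_at: datetime   # when they received it
    selection_reason: str  # why this player was selected


def grants_for_campaign(grants, campaign_id):
    """Return the audit trail for a single campaign, oldest grant first."""
    matching = [g for g in grants if g.campaign_id == campaign_id]
    return sorted(matching, key=lambda g: g.granted_at)


# Made-up example data, for illustration only.
grants = [
    RewardGrant("farm-01", "p2", datetime(2024, 1, 9), "inactive for 14 days"),
    RewardGrant("farm-01", "p1", datetime(2024, 1, 8), "inactive for 14 days"),
    RewardGrant("hunt-07", "p3", datetime(2024, 1, 8), "new account"),
]
trail = grants_for_campaign(grants, "farm-01")
```

The point of keeping the selection reason on the record itself is that a failed campaign can be questioned later without anyone having to remember why a player was picked.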

The second shift is in behavior. You are no longer left assuming that a reward worked simply because it was claimed. You can compare what players were doing before the reward and what they did after it. Maybe a player used to log in once a day and now returns three times. Maybe nothing changes at all. That difference matters.
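The before-and-after comparison can be sketched in a few lines. This is only an illustration under my own assumptions: logins are available as a list of dates (one entry per login), the comparison uses equal windows on either side of the reward day, and the names `daily_login_rate` and `before_after` are made up for this example.

```python
from datetime import date, timedelta


def daily_login_rate(login_dates, start, end):
    """Average logins per day in the half-open window [start, end)."""
    days = (end - start).days
    if days <= 0:
        raise ValueError("window must cover at least one day")
    count = sum(1 for d in login_dates if start <= d < end)
    return count / days


def before_after(login_dates, reward_day, window_days=7):
    """Compare login rates in equal windows before and after the reward day."""
    window = timedelta(days=window_days)
    before = daily_login_rate(login_dates, reward_day - window, reward_day)
    after = daily_login_rate(login_dates, reward_day, reward_day + window)
    return before, after


# Made-up player: one login a day before the reward, three a day after it.
reward_day = date(2024, 1, 8)
logins = [reward_day - timedelta(days=i) for i in range(1, 8)]
logins += [reward_day + timedelta(days=i) for i in range(7) for _ in range(3)]

before, after = before_after(logins, reward_day)
```

A player whose two numbers come out the same claimed the reward without changing anything, which is exactly the case that a claim count alone would hide.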

Because of that, rewards stop being judged by appearance alone. Some of them actually move behavior. Some of them only pass through the system without leaving much behind.

The third thing is that return on investment becomes much easier to judge. One reward might bring a farming cohort back into real activity. Another might only pull hunters in for a quick visit before they disappear again. Once that data is visible, decisions become more precise. They stop being driven by instinct alone. And honestly, that was one of the most common problems in Pixels. A lot of reward decisions were made on feeling rather than evidence.

There is also a more uncomfortable truth hidden inside all this. A reward can seem successful while still missing the point. We once gave out crafting rewards because we believed they would increase crafting activity. Instead, what really increased was trading around the crafted items. So yes, something moved. On paper, the reward looked effective. But it did not accomplish the design goal we actually cared about.

That kind of outcome is easy to misread if you are only looking for surface-level success. The numbers may look positive while the intent quietly fails underneath them.
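One way to guard against that misreading is to name the target metric before the campaign runs and check it separately from everything else that moved. The sketch below is a rough illustration, not how any real tool does it: `evaluate_intent`, the 5% threshold, and the numbers are all assumptions of mine, not figures from Pixels.

```python
def evaluate_intent(target_metric, changes, min_lift=0.05):
    """Separate 'the goal moved' from 'something moved'.

    changes maps a metric name to its relative change after the reward
    (0.30 means +30%). min_lift is an arbitrary illustrative threshold.
    """
    moved = {name for name, change in changes.items() if change >= min_lift}
    return {
        "target_moved": target_metric in moved,
        "side_effects": sorted(moved - {target_metric}),
    }


# Illustrative numbers only: crafting barely moves, trading jumps.
result = evaluate_intent("crafting", {"crafting": 0.01, "trading": 0.30})
```

In this made-up case the report says the target did not move and lists trading as a side effect, which is a very different conclusion from "activity went up".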

Another important layer is cohort comparison. A reward that works for new players may do almost nothing for whales. And something that motivates whales may be irrelevant for early users. In the past, these differences often disappeared inside overall averages. Once you can break performance down by cohort, that kind of flattening becomes harder to get away with.
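A small sketch shows how an overall average can flatten that difference. The cohort labels, the `lift_by_cohort` helper, and the numbers are invented for illustration; each row is assumed to hold a player's cohort plus their activity rate before and after the reward.

```python
from collections import defaultdict
from statistics import mean


def lift_by_cohort(rows):
    """rows: iterable of (cohort, before, after). Returns mean change per cohort."""
    changes = defaultdict(list)
    for cohort, before, after in rows:
        changes[cohort].append(after - before)
    return {cohort: mean(values) for cohort, values in changes.items()}


# Made-up data: new players respond, whales do not.
rows = [
    ("new", 1.0, 3.0),
    ("new", 1.0, 2.0),
    ("whale", 4.0, 4.0),
    ("whale", 5.0, 5.0),
]

overall = mean(after - before for _, before, after in rows)
per_cohort = lift_by_cohort(rows)
```

Here the overall change looks like a modest win for everyone, while the per-cohort view shows that all of it came from new players and none from whales.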

Over time, this changes how reward budgets are used. It becomes less about trying things blindly and hoping the effect is there somewhere. Rewards can be reviewed, explained, and defended. Teams can examine them properly. Partners can see the logic behind them. The process becomes easier to trust because it is no longer hidden behind loose assumptions.

That, to me, is the deeper change. Rewards are no longer treated like harmless experiments. In a live game, they are expensive levers. If you cannot tell whether they are shaping behavior in the way you intended, then you are not really running a strategy. You are just distributing value and hoping the outcome justifies it later.

And that is the question that still matters most: did the reward create lasting impact, or did it only create a brief moment of excitement?

Back then, if I am being honest, we did not really know.