There is a strange moment in live games when a reward looks successful from the outside but feels uncertain from the inside.

Players show up. Activity rises. The campaign gets attention. For a short while, everything appears to be working. But anyone who has watched these systems closely knows how easily that first signal can lie. A spike is not always a change. A crowd is not always commitment. Sometimes rewards create movement without creating meaning.

That is the uncomfortable lesson behind reward design in games like Pixels. Giving players something is easy. Understanding what that gift actually does is much harder.

For a long time, rewards were treated almost like a lever. Pull it, and activity should rise. Drop enough incentives into the world, and players should respond. And they often do, at least briefly. The map fills up. People return. Metrics wake up for a day or two. Then, just as quickly, the energy fades, and the same question comes back: did the reward actually improve anything, or did it simply create noise?

This is where Stacked changes the conversation.

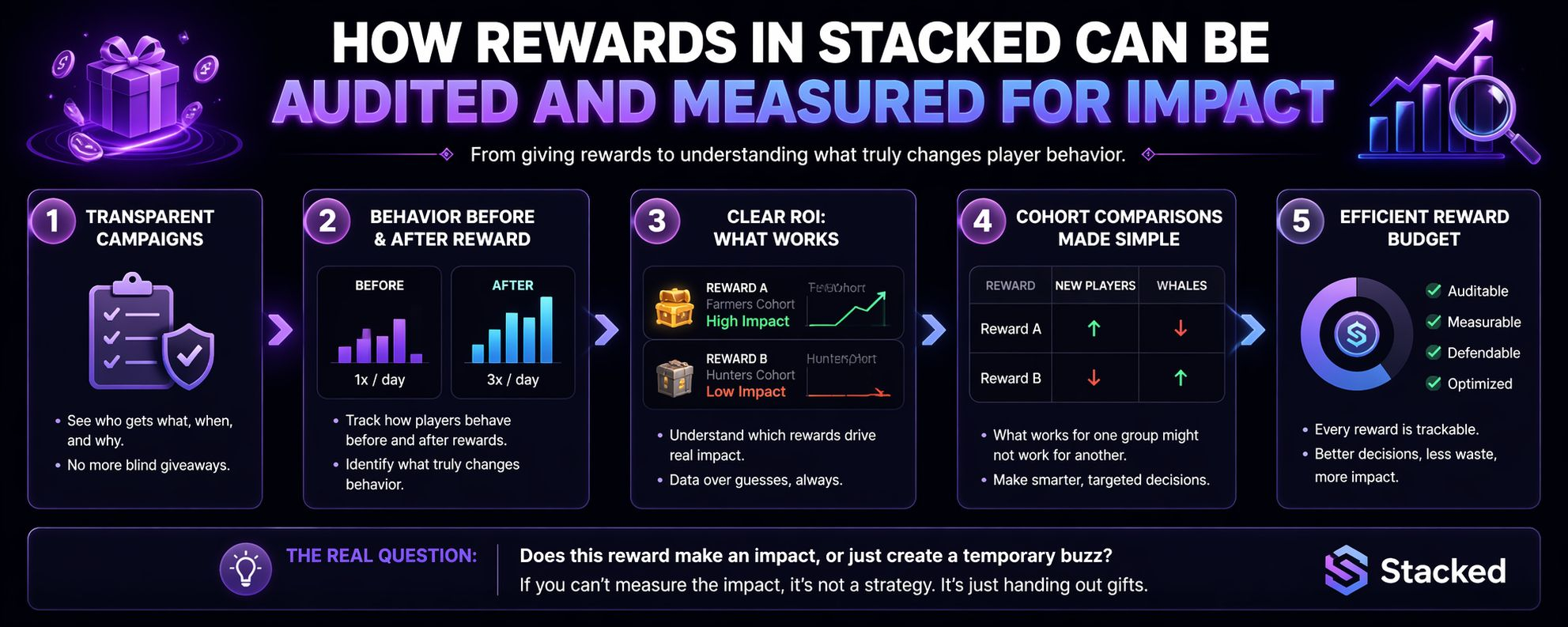

The value is not only in distributing rewards. It is in making rewards visible, traceable, and accountable. A campaign is no longer just an event that happens and then disappears into vague results. It can be examined. Who received the reward? Why were they selected? When did they receive it? What did they do before? What changed after?

That kind of clarity matters more than it first seems.

Without it, every campaign becomes a story people can interpret however they want. If activity goes up, someone calls it a win. If activity drops, someone blames timing, audience, or reward size. The discussion stays soft because the evidence is soft. Teams end up trusting instinct because there is nothing firm enough to argue with.

But once rewards are measurable, the conversation becomes less comfortable and much more useful.

A player who logged in once a day before a reward may suddenly start showing up several times. Another player may take the reward and behave exactly the same. A cohort may return for a specific activity, while another only appears long enough to claim the benefit and leave again. These differences are easy to miss when everything is averaged together. They become obvious when the data is close enough to the player.
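To make the per-player view concrete, here is a minimal sketch that compares one player's logins in equal windows before and after a reward. Everything in it is an assumption made for illustration: the function name `classify_response`, the seven-day window, the 1.5x threshold, and the labels. None of it comes from Stacked or Pixels.

```python
from datetime import datetime, timedelta

def classify_response(logins, reward_time, window_days=7, lift_threshold=1.5):
    """Label one player's response to a reward (hypothetical helper).

    logins: list of datetime objects, one per login.
    reward_time: datetime at which the reward was granted.
    The window length and threshold are illustrative defaults, not tuned values.
    """
    window = timedelta(days=window_days)
    # Count logins in equal-length windows on either side of the reward.
    before = sum(1 for t in logins if reward_time - window <= t < reward_time)
    after = sum(1 for t in logins if reward_time <= t < reward_time + window)

    if before == 0:
        # No baseline to compare against: the player was away beforehand.
        return "returned" if after > 0 else "inactive"

    ratio = after / before
    if ratio >= lift_threshold:
        return "more_active"
    if ratio <= 1 / lift_threshold:
        return "less_active"
    return "unchanged"


reward_time = datetime(2024, 1, 8)
# One login per day at noon, one week either side of the reward.
steady_player = [datetime(2024, 1, day, 12) for day in range(1, 15)]
print(classify_response(steady_player, reward_time))
```

A before-and-after comparison like this only describes what changed. Without a comparable group that did not receive the reward, it cannot show that the reward caused the change.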

This is where reward design becomes less about generosity and more about learning.

A reward might look good because it increases activity, but the wrong kind of activity can still point to a design failure. If the goal is to encourage crafting, but the reward only causes players to trade the crafted items, then the system did create movement. It just did not create the movement intended. On a dashboard, that may look positive. In the design room, it tells a different story.

That distinction is important. Rewards do not only answer whether players want something. They reveal what players are willing to do because of it. Sometimes the answer confirms the design. Sometimes it exposes that the team was asking the wrong question.

The bigger shift is that reward impact can finally be separated by audience. New players, regular players, whales, farmers, hunters, and traders do not respond the same way. A reward that brings one group back into the loop may mean nothing to another. Before, those differences often disappeared inside broad numbers. Now they can be seen for what they are.
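As a rough sketch of how a broad number can hide these differences, the example below groups per-player session counts by segment. The function `lift_by_segment`, the row layout, and the sample figures are hypothetical and chosen only to illustrate the idea.

```python
from collections import defaultdict

def lift_by_segment(rows):
    """Summarize activity change per audience segment (hypothetical helper).

    rows: iterable of (segment, sessions_before, sessions_after),
    one row per player. Segment labels are whatever the team defines.
    """
    totals = defaultdict(lambda: [0, 0, 0])  # [players, before, after]
    for segment, before, after in rows:
        entry = totals[segment]
        entry[0] += 1
        entry[1] += before
        entry[2] += after

    summary = {}
    for segment, (players, before, after) in totals.items():
        summary[segment] = {
            "players": players,
            "avg_before": before / players,
            "avg_after": after / players,
            "avg_change": (after - before) / players,
        }
    return summary


# Made-up sample data: two new players and one trader.
sample = [("new", 2, 6), ("new", 4, 8), ("trader", 10, 10)]
for segment, stats in lift_by_segment(sample).items():
    print(segment, stats)
```

In this made-up sample, the blended average change across all three players is positive, yet the trader did not move at all. Only the per-segment view shows that.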

And once that happens, reward budgets stop feeling like hopeful spending.

They become something a team can defend. Something it can review with partners. Something it can improve instead of merely repeat. The point is not to remove experimentation from live games. Experimentation will always be part of the work. The point is to stop pretending every burst of activity is proof that the experiment worked.

That matters because rewards are expensive, not only in tokens, items, or budget, but also in the habits they teach players. If players learn that every return needs a prize, the game slowly trains them to respond to giveaways instead of systems. That cost does not always appear immediately.

The real question, then, is not whether players liked the reward. Most players like receiving things. The harder question is whether the reward moved them toward the behavior the game actually needed.

That is the difference between a campaign and a strategy.

A campaign can create attention. A strategy has to create understanding. And in a live game, that understanding is what separates a useful reward from a temporary spark that burns brightly and leaves very little behind.