I wasted like three days trying to wrap my head around how Walrus actually stores data. And I'm not talking about the slick marketing explainers—I mean the real technical guts of it. Every time I thought I was getting close, I'd hit another wall of confusion.

My main question was: why make it so complicated? Why not just do erasure coding the normal way like everyone else?

Then something clicked, and honestly, once you see it, the whole thing is pretty damn clever.

So here's the deal. Most decentralized storage systems use Reed-Solomon encoding in one dimension. You take your file, chop it up into pieces, add some redundancy, and scatter those pieces across a bunch of nodes. Pretty straightforward. If you lose some pieces, you can still rebuild the original from whatever's left. Works perfectly fine—until nodes start dropping offline and you need replacements.

That's where things get messy. Say a new node joins to replace one that failed. It needs to recover the data that was lost. With regular one-dimensional encoding, that new node basically has to download enough pieces from other nodes to add up to the entire freaking file, piece it back together, and then re-encode just the one chunk it's supposed to hold. You're moving massive amounts of data just to recover one tiny piece. The bandwidth costs are insane.
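To make that repair problem concrete, here's a toy one-dimensional erasure code. It's a minimal sketch, not what any real storage network runs: it uses plain polynomial interpolation over a tiny prime field, and the names `lagrange_at`, `encode`, and `repair_one_dimensional` are ones I made up for illustration. The thing to watch is how many symbols the replacement node downloads to get a single piece back.

```python
P = 257  # small prime field, picked only to keep the toy readable


def lagrange_at(points, x):
    """Value at x of the unique polynomial through `points`, over GF(P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n):
    """Treat data[i] as the value at x = i + 1 and extend it to n pieces."""
    base = list(enumerate(data, start=1))
    return {x: lagrange_at(base, x) for x in range(1, n + 1)}


def repair_one_dimensional(surviving, lost_x, k):
    """Rebuild one lost piece by downloading k surviving pieces."""
    helpers = list(surviving.items())[:k]
    return lagrange_at(helpers, lost_x), len(helpers)


data = [10, 20, 30]                      # the whole "file": k = 3 symbols
pieces = encode(data, n=5)               # 5 pieces, any 3 rebuild the file
surviving = {x: v for x, v in pieces.items() if x != 4}
rebuilt, downloaded = repair_one_dimensional(surviving, lost_x=4, k=len(data))
print(rebuilt == pieces[4], downloaded)  # True 3
```

The file is three symbols and the repair downloads three symbols to recover one. Scale that up and you're pulling a whole file's worth of data to replace a single piece.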

Keep doing this as nodes come and go (which happens constantly), and you've basically thrown away all the efficiency you were supposed to get from erasure coding. You're just shuffling huge amounts of data around the network nonstop.

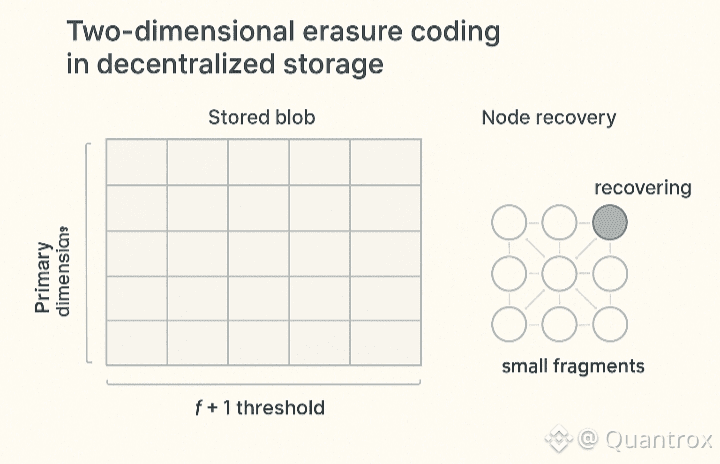

Walrus does something different with a two-dimensional encoding scheme they call Red Stuff. Instead of chopping your file into a single row of pieces, they arrange it like a grid, a matrix. The rows and columns get encoded separately, and they use different recovery thresholds for each: the primary dimension uses 2f+1 and the secondary uses f+1, where f is the number of faulty nodes the system is built to tolerate.

Here's where it gets cool. When a node needs to recover its missing piece, it doesn't rebuild the whole file. Instead, it just asks other nodes for the tiny bits where their pieces cross paths with its spot in the grid. Each node sends back a small fragment. The recovering node collects enough of these fragments to rebuild only its own piece—nothing more.
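Here's a toy version of that crossing-point recovery. Big caveat: this is not Walrus's actual encoding. It's a bivariate polynomial code over a tiny prime field that I'm using as a stand-in because it has the same shape: every node holds one row and one column of a grid, and a lost row can be rebuilt from single symbols that other nodes already hold in their columns. The sizes (4 nodes, f = 1) and all the names are assumptions for the sketch.

```python
P = 257          # small prime field, picked only to keep the toy readable
F = 1            # tolerated faulty nodes in this toy
N = 3 * F + 1    # 4 nodes


def lagrange_at(points, x):
    """Value at x of the unique polynomial through `points`, over GF(P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def grid_symbol(data, x, y):
    """B(x, y) = sum of data[r][c] * x^r * y^c over GF(P)."""
    return sum(v * pow(x, r, P) * pow(y, c, P)
               for r, row in enumerate(data)
               for c, v in enumerate(row)) % P


# (F + 1) x (2F + 1) block of file symbols, used as polynomial coefficients.
data = [[5, 17, 42],
        [99, 3, 200]]

# Full N x N grid. Node i keeps row i (primary piece) and column i (secondary).
grid = [[grid_symbol(data, x, y) for y in range(1, N + 1)]
        for x in range(1, N + 1)]

lost = 3  # node 3 (0-based) lost everything

# Helper j holds column j, so it can send the single symbol grid[lost][j].
row_points = [(j + 1, grid[lost][j]) for j in range(2 * F + 1)]
row = [lagrange_at(row_points, y) for y in range(1, N + 1)]

# Helper j holds row j, so it can send the single symbol grid[j][lost].
col_points = [(j + 1, grid[j][lost]) for j in range(F + 1)]
col = [lagrange_at(col_points, x) for x in range(1, N + 1)]

print(row == grid[lost], col == [grid[j][lost] for j in range(N)])  # True True
```

Nobody reassembled the file here. The row came back from 2f+1 single symbols and the column from f+1, which lines up with the two thresholds mentioned above.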

The bandwidth savings are nuts. Instead of costs scaling with the full file size, they scale with roughly the file size divided by the number of nodes, which is about the size of the one piece being recovered. In a network with hundreds of nodes, that's the difference between moving gigabytes versus megabytes.
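To put rough numbers on that, here's a back-of-the-envelope model. It rests on assumptions that aren't spelled out in this post: that n = 3f+1, that the file is laid out as an (f+1) by (2f+1) grid of equal-size symbols, and that a recovering node downloads exactly the threshold number of symbols. The function name and the n = 1000 example are mine, so treat the output as an order-of-magnitude sketch rather than measured figures.

```python
GIB = 1024 ** 3


def repair_download_bytes(blob_bytes, n):
    """Rough bytes a replacement node downloads, per scheme (toy model)."""
    f = (n - 1) // 3                                  # assumes n = 3f + 1
    symbol = blob_bytes / ((f + 1) * (2 * f + 1))     # assumed grid layout
    return {
        "one_dimensional": blob_bytes,                # about the whole file
        "two_dim_primary": (2 * f + 1) * symbol,      # 2f+1 crossing symbols
        "two_dim_secondary": (f + 1) * symbol,        # f+1 crossing symbols
    }


costs = repair_download_bytes(GIB, n=1000)
for name, size in costs.items():
    print(f"{name}: {size / 1024 ** 2:.1f} MiB")
```

Under those assumptions a 1 GiB file costs about a gibibyte to repair the one-dimensional way, and only a few mebibytes per piece the two-dimensional way.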

I kept thinking this was just some nerdy academic trick that wouldn't matter in the real world. Then I looked at what happens during epoch transitions when the storage committees change over.

Mainnet epochs run for two weeks. Every time one ends, some nodes bounce, new ones join, and shards get shuffled around. All the data stored on Walrus has to stay available through this whole transition. The old nodes need to hand off their shards to the new ones somehow.

With traditional one-dimensional encoding, this would be a nightmare. Departing nodes would have to push their entire chunk of stored data to new nodes. If they're sitting on terabytes, that transfer would drag on forever and burn through massive bandwidth.

Red Stuff flips the script. Incoming nodes can recover their shards using the two-dimensional process, spreading the work across the whole network instead of piling it all on the nodes that are leaving. No single bottleneck. And the per-node recovery cost actually shrinks as the network grows, because each node's piece of a given file gets smaller.

There's also this neat side effect: nodes can go offline temporarily without breaking anything. As long as enough honest nodes stay up, any node can recover its data when it comes back. The network just... fixes itself. Traditional setups don't really do that.

Right now, there are 1,000 shards spread across 105 storage nodes. That's going to keep shifting as stake moves between operators and new nodes pop up. Without efficient recovery, those transitions would be a total pain. With Red Stuff, they're actually manageable.

The two different thresholds each serve a purpose. The higher one on the primary dimension stops bad actors from piecing together data during challenges. The lower one on the secondary dimension makes recovery work even when a bunch of nodes are unavailable during writes.
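If you want to see what those thresholds work out to, here's a quick calculation. I'm assuming the usual Byzantine fault tolerance setup where n = 3f+1, and that the thresholds are counted over the 1,000 shards mentioned above rather than over the 105 nodes. Neither assumption comes from this post, and `thresholds` is just a helper name I picked.

```python
def thresholds(n):
    """Fault bound and the two recovery thresholds for n participants."""
    f = (n - 1) // 3          # assumes n = 3f + 1
    return {"f": f, "primary": 2 * f + 1, "secondary": f + 1}


print(thresholds(1000))  # {'f': 333, 'primary': 667, 'secondary': 334}
```

So under that reading, the primary dimension needs about two thirds of the shards to respond and the secondary needs about one third.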

Now here's where security gets interesting. Walrus can handle storage challenges in an asynchronous network, which apparently no one else has pulled off properly. The challenge system uses the two-dimensional structure. When a challenge kicks off, honest nodes stop answering read requests, which blocks adversaries from grabbing enough data to fake having their assigned pieces stored.

Most challenge protocols assume synchronous networks: they assume bad actors can't read missing data from honest nodes fast enough to fake a response. But that assumption falls apart in real networks where timing is all over the place. Walrus sidesteps this because, once honest nodes stop serving reads, the 2f+1 threshold on the primary dimension leaves an attacker without enough symbols to rebuild a piece it never stored, no matter how the messages are timed.

I'm probably butchering this explanation compared to the whitepaper, but basically: the way the encoding is structured gives you security guarantees that don't rely on perfect network timing.

Bottom line: storage nodes actually have to hold the data they claim to hold. They can't cheat by reconstructing it on the fly during challenges. That matters a lot in a permissionless system where anyone can spin up a node and where you need economic incentives to keep people honest.

The tradeoff is a 5x storage overhead. You're storing about five times the raw data size across the network to get high availability and security. But compare that to the 25x overhead you'd need if you just replicated everything for the same level of security—it's way better.
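Here's the same comparison as plain arithmetic, using the 5x and 25x figures from above. The 1 TB example size and the `stored_bytes` helper are mine, purely for illustration.

```python
def stored_bytes(raw_bytes, overhead):
    """Total bytes kept across the network for a given overhead factor."""
    return raw_bytes * overhead


raw = 10 ** 12                              # 1 TB of raw data (example size)
red_stuff = stored_bytes(raw, 5)            # about 5x overhead
replication = stored_bytes(raw, 25)         # 25x full replication
saving = 1 - red_stuff / replication

print(red_stuff // 10 ** 12, replication // 10 ** 12, f"{saving:.0%}")
```

For 1 TB of raw data that's 5 TB stored network-wide instead of 25 TB, an 80% cut in what the network has to hold.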

For projects like Pudgy Penguins storing media or Claynosaurz doing NFT drops, that efficiency difference hits the bottom line directly. Less overhead means cheaper storage fees, which makes the whole thing more practical for real use cases.

What took me way too long to get is that the two-dimensional encoding isn't just some performance tweak. It fundamentally changes what you can do—recovery efficiency, security guarantees, how well the system scales. The added complexity actually buys you something real.

Once you understand why it's built this way, it's kind of hard to picture doing large-scale decentralized storage any other way.