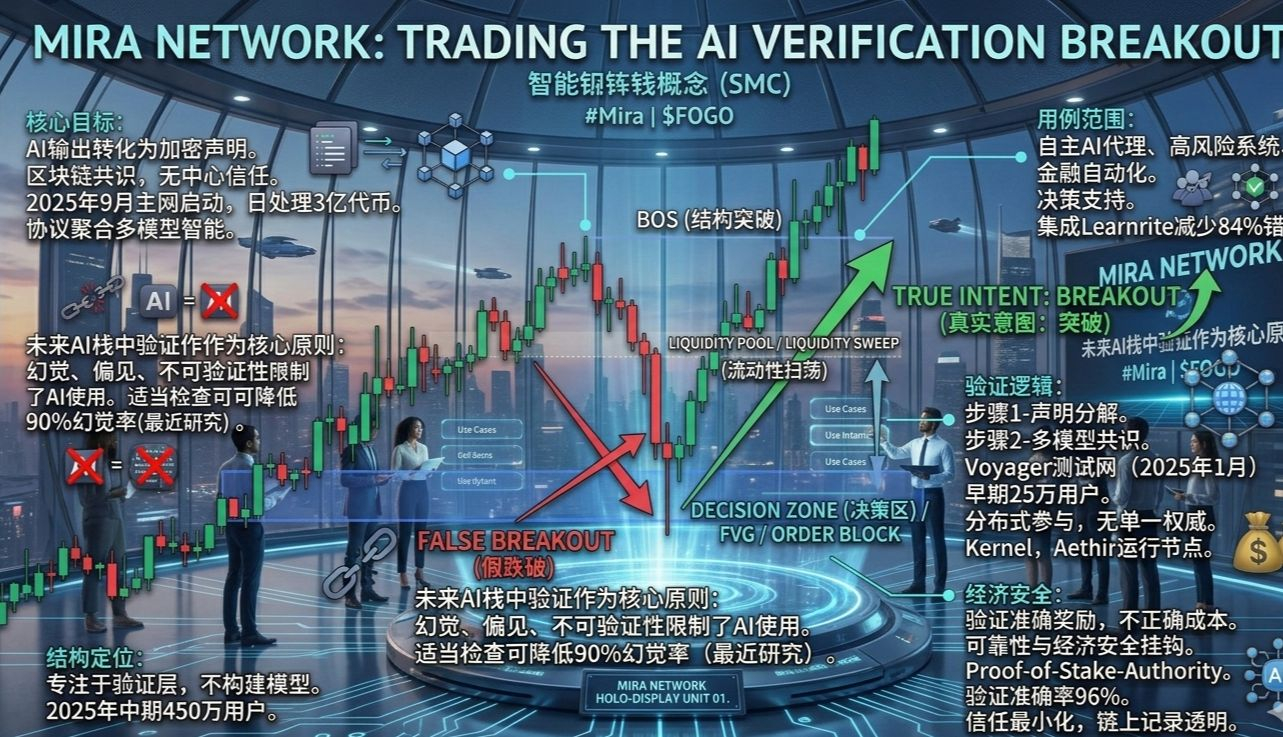

The Mira network operates as a decentralized AI verification protocol.

It transforms AI output into cryptographically verified statements.

The mainnet launched in September 2025, processing up to 300 million tokens daily.

This setup addresses key issues in modern AI systems.

The problem

Current AI models often generate hallucinated information.

They may produce biased responses.

Output lacks verifiability.

These defects limit their role in critical applications.

Recent studies show that proper verification can reduce the hallucination rate by 90%.

Core Objective

Mira transforms AI output into verifiable statements.

It uses blockchain-based consensus for validation.

This eliminates reliance on centralized trust.

The protocol aggregates collective intelligence from diverse models.

By January 2026, community builders were emphasizing Mira's role as infrastructure.

How it works (Step 1)

AI output is broken down into individual statements.

Each statement serves as a validation unit.

This approach reduces ambiguity in complex responses.
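As a rough illustration of this step, the sketch below splits a response into sentence-level statements. The source does not describe how Mira actually performs the breakdown, so the `Statement` class, the `split_into_statements` function, and the sentence-splitting rule are all assumptions made for the example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Statement:
    """One independently checkable unit (hypothetical structure)."""
    index: int
    text: str


def split_into_statements(ai_output: str) -> list[Statement]:
    """Naive stand-in for decomposition: treat each sentence as one statement."""
    # Split after '.', '!' or '?' when followed by whitespace,
    # so decimals such as "3.5" stay intact.
    parts = re.split(r"(?<=[.!?])\s+", ai_output.strip())
    sentences = [s for s in parts if s]
    return [Statement(i, s) for i, s in enumerate(sentences)]


if __name__ == "__main__":
    text = "Paris is the capital of France. The Seine flows through it."
    for st in split_into_statements(text):
        print(st.index, st.text)
```

A real system would likely need something stronger than sentence splitting, since one sentence can carry several claims. The point here is only that each unit can be checked on its own.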

In practice, the Mira system handles complex responses efficiently.

Cross-model checks ensure higher accuracy.

How it works (Step 2)

Statements are distributed to independent AI models.

Each statement is verified multiple times.

Results are compared through a consensus mechanism.
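The comparison step can be sketched as a simple vote. This is a minimal example under stated assumptions: each verifier is modeled as a plain function returning `True` or `False`, and the two-thirds threshold is illustrative. Neither the `verify_statement` name nor the threshold comes from the source.

```python
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical verifier interface: returns True if the model judges the statement valid.
Verifier = Callable[[str], bool]


def verify_statement(
    statement: str,
    verifiers: Sequence[Verifier],
    threshold: Fraction = Fraction(2, 3),  # illustrative value, not from the source
) -> dict:
    """Send one statement to every verifier and compare the verdicts."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    verdicts = [bool(v(statement)) for v in verifiers]
    votes_valid = sum(verdicts)
    # Exact fraction avoids floating-point surprises at the threshold.
    share = Fraction(votes_valid, len(verdicts))
    return {
        "statement": statement,
        "votes_valid": votes_valid,
        "votes_total": len(verdicts),
        "verified": share >= threshold,
    }


if __name__ == "__main__":
    # Toy verifiers standing in for independent AI models.
    models = [lambda s: True, lambda s: True, lambda s: False]
    print(verify_statement("Water boils at 100 C at sea level.", models))
```

With three verifiers and a two-thirds threshold, a statement passes when at least two agree that it is valid.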

Mira's Voyager testnet began large-scale testing in January 2025.

Early on, over 250,000 users joined.

Consensus layer

No single authority controls validation.

It relies on distributed participation.

Economic incentives drive participation.

A blockchain coordinates the process.

Partners like Kernel and Aethir operate validation nodes.

Economic model

Participants who validate statements accurately receive rewards.

Incorrect validation incurs economic costs.

This links reliability with economic security.
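The incentive logic can be made concrete with a small settlement sketch. It assumes that "incorrect" means voting against the final consensus, and that rewards are a flat amount while penalties are a fraction of stake. The `settle` function and both default values are hypothetical and are not taken from Mira's documentation.

```python
def settle(
    stakes: dict[str, float],
    votes: dict[str, bool],
    consensus: bool,
    reward: float = 1.0,          # hypothetical flat reward per correct vote
    slash_fraction: float = 0.1,  # hypothetical share of stake lost per incorrect vote
) -> dict[str, float]:
    """Return updated stakes after one verification round."""
    updated = dict(stakes)  # leave the caller's mapping untouched
    for node, vote in votes.items():
        if vote == consensus:
            updated[node] += reward
        else:
            updated[node] -= updated[node] * slash_fraction
    return updated


if __name__ == "__main__":
    stakes = {"node_a": 100.0, "node_b": 100.0}
    votes = {"node_a": True, "node_b": False}
    print(settle(stakes, votes, consensus=True))
```

Because the penalty scales with stake, a participant with more at risk loses more for an incorrect validation, which is one way to tie reliability to economic security.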

The network is protected through Proof-of-Stake-Authority staking.

The model supports the continuous growth of the ecosystem.

Security model

The design minimizes trust.

Validation is built on agreement among multiple models.

On-chain records provide transparency.

Validation logic remains open.

Validated output reaches 96% accuracy.
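To show how an on-chain record can make a validation result transparent, the sketch below hashes a result into a fixed-length digest that anyone can recompute and compare. The record fields and the `record_digest` function are assumptions for illustration. The source does not specify what Mira writes on-chain or which hash it uses.

```python
import hashlib
import json


def record_digest(statement: str, verified: bool, votes_valid: int, votes_total: int) -> str:
    """Return a SHA-256 digest of a validation record (hypothetical record layout)."""
    record = {
        "statement": statement,
        "verified": verified,
        "votes_valid": votes_valid,
        "votes_total": votes_total,
    }
    # Sorted keys and fixed separators give the same bytes for the same record.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


if __name__ == "__main__":
    print(record_digest("Water boils at 100 C at sea level.", True, 2, 3))
```

Any change to the statement or the vote counts produces a different digest, so a published digest lets a reader detect whether a record was altered after the fact.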

Use cases

Mira applies to autonomous AI agents.

It supports high-risk information systems.

It assists financial automation.

It enhances decision support.

Integrations such as Learnrite reduce errors by 84%.

Structural positioning

Mira does not build AI models.

It focuses on the validation layer.

Models generate the output.

Validation confirms its integrity.

The ecosystem reached 4.5 million users by mid-2025.