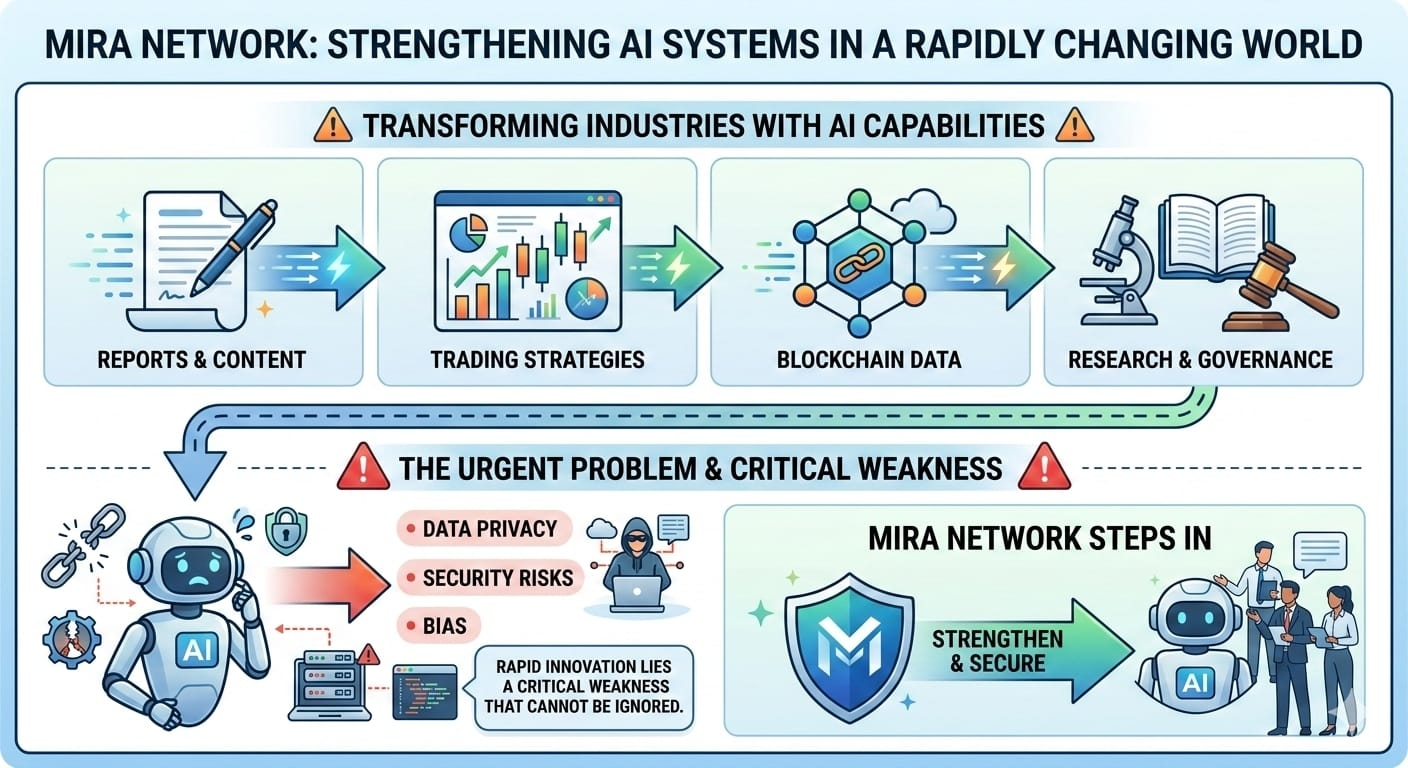

Mira Network is stepping into one of the most urgent problems in artificial intelligence today. AI systems are becoming more powerful every month. They write reports, generate trading strategies, analyze blockchain data, assist in research, and even help draft governance proposals. Their speed and capability are transforming industries. Yet behind this rapid innovation lies a critical weakness that cannot be ignored.

AI does not truly understand truth.

Modern language models are prediction engines. They generate responses based on patterns learned from massive datasets. While this makes them incredibly effective at producing fluent and persuasive content, it also creates a structural limitation. When the system lacks accurate information, it may generate an answer that sounds confident but is incorrect. This is known as hallucination.

Hallucinations are not rare bugs. They are an inherent risk of probabilistic models. An AI can fabricate statistics, invent references, misinterpret data, or confidently describe events that never occurred. Because the output is often polished and well structured, users may not immediately recognize errors. As AI becomes integrated into financial systems, healthcare research, and governance processes, these mistakes can carry serious consequences.

In financial markets, misinformation can translate into instant losses. AI tools are increasingly used to summarize market trends, evaluate tokenomics, monitor smart contracts, and support trading strategies. If an AI system misreads a contract parameter or generates inaccurate analysis, automated trading bots may execute decisions based on flawed information. In fast-moving crypto markets, errors can cascade within seconds. As AI continues to influence capital allocation and risk management, reliability becomes essential.

Healthcare presents an even more sensitive context. AI systems are used to summarize medical research, assist in documentation, and analyze clinical information. If an AI fabricates references or distorts findings from a study, it can influence interpretation and decision-making. In environments where accuracy directly affects human lives, hallucinations are more than technical inconveniences. They represent a trust gap that must be addressed.

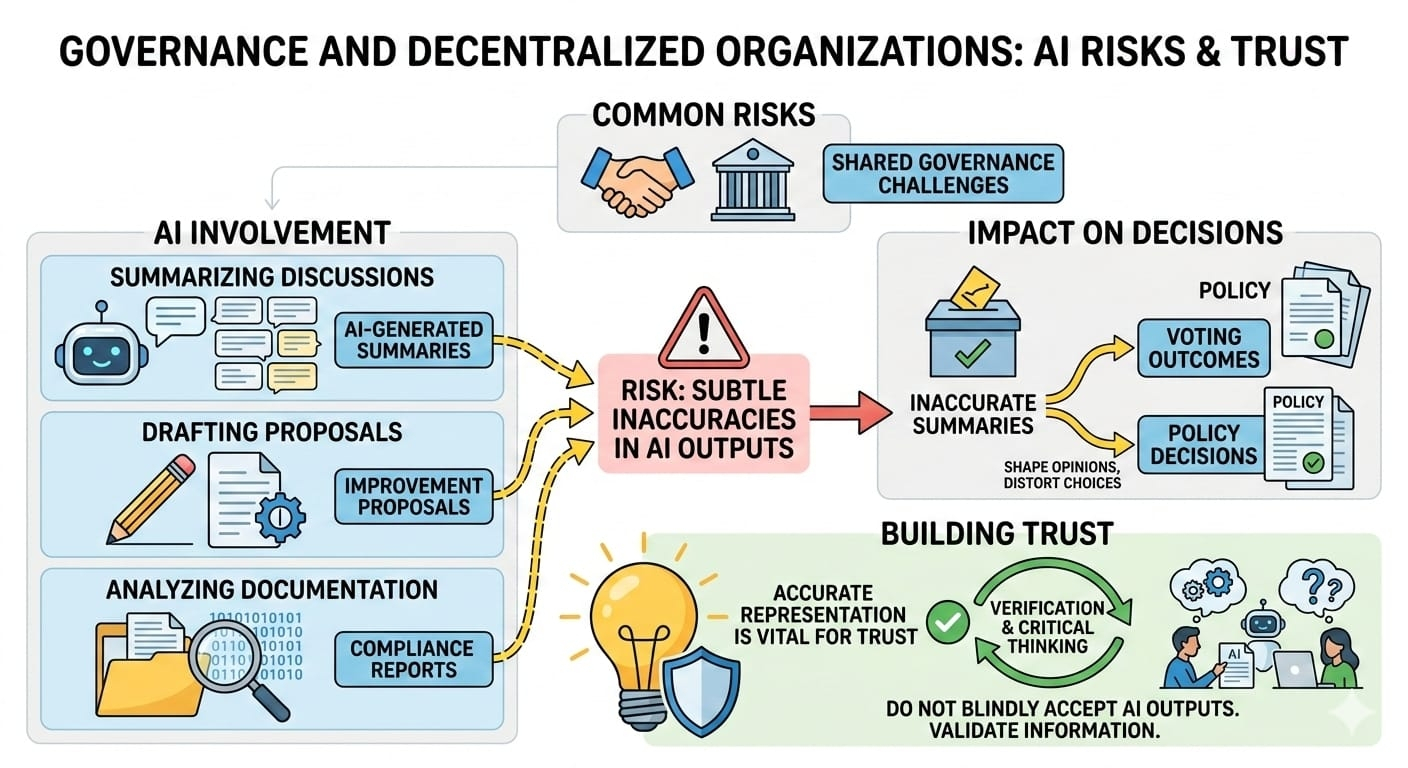

Governance and decentralized organizations face similar risks. AI is used to summarize community discussions, draft improvement proposals, and analyze compliance documentation. If those summaries contain subtle inaccuracies, they can shape voting outcomes or policy decisions. Trust in governance depends on accurate representation of information. When AI becomes part of that process, blind acceptance is no longer acceptable.

The dominant response from the AI industry has been to build larger models with more data and stronger training methods. While these improvements reduce some errors, they do not eliminate hallucinations completely. Intelligence alone does not guarantee reliability. A highly advanced model can still produce a persuasive but false statement.

Mira Network approaches the problem from a different direction. Instead of relying solely on making AI smarter, it introduces a verification layer that sits around AI outputs. The core idea is simple. Do not automatically trust what the model says. Break it down and verify it.

Mira Network is designed as a decentralized verification protocol. When an AI generates content, the system decomposes that content into structured, atomic claims. Each claim becomes an independent statement that can be evaluated separately. This removes the narrative flow that might hide subtle inaccuracies and forces information into clear, testable units.
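To make the idea of atomic claims concrete, here is a minimal sketch. It uses naive sentence splitting as a stand-in for decomposition, which is an assumption for illustration only and not how Mira Network is documented to do it. The `Claim` type and the `decompose` function are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical type)."""
    claim_id: str
    text: str


def decompose(output: str) -> list[Claim]:
    """Split AI output into sentence-level claims.

    Assumption: one sentence equals one claim. A real decomposer would
    also need to split compound sentences and resolve pronouns.
    """
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", output) if s.strip()]
    return [Claim(f"c{i}", s) for i, s in enumerate(sentences, start=1)]


claims = decompose("The pool holds 1,000 tokens. Fees are 0.3% per swap.")
for claim in claims:
    print(claim.claim_id, claim.text)
```

Each resulting claim can then be checked on its own, without the surrounding narrative.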

After decomposition, the claims are distributed across a network of independent verifier nodes. Each node evaluates the claims using its own model or verification process. Rather than relying on a single authority, the system gathers responses from multiple participants. This multi-model approach reduces the risk of a single hallucinating system determining the outcome. Agreement across independent evaluators increases confidence in the result.

The network then aggregates these responses through a consensus mechanism. Depending on configuration, it may require majority agreement or stricter validation thresholds. The objective is not to declare absolute truth but to determine whether a claim meets predefined verification criteria. This structured consensus replaces blind trust in one AI model with measurable validation.
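The aggregation step can be sketched as a simple threshold vote. This is an illustrative assumption, not Mira Network's actual consensus rule: the `Verdict` type, the `aggregate` function, and the `threshold` parameter are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    """One verifier node's judgment on one claim (hypothetical type)."""
    node_id: str
    claim_id: str
    valid: bool


def aggregate(verdicts: list[Verdict], threshold: float = 0.5) -> dict[str, bool]:
    """Return, per claim, whether the share of 'valid' votes exceeds threshold.

    threshold=0.5 models simple majority; a higher value models the
    stricter validation the text mentions.
    """
    totals: Counter = Counter()
    approvals: Counter = Counter()
    for v in verdicts:
        totals[v.claim_id] += 1
        if v.valid:
            approvals[v.claim_id] += 1
    return {cid: approvals[cid] / totals[cid] > threshold for cid in totals}


votes = [
    Verdict("node-a", "c1", True),
    Verdict("node-b", "c1", True),
    Verdict("node-c", "c1", False),
    Verdict("node-a", "c2", False),
    Verdict("node-b", "c2", True),
    Verdict("node-c", "c2", False),
]
print(aggregate(votes))                  # simple majority
print(aggregate(votes, threshold=0.75))  # stricter threshold
```

Under simple majority, claim `c1` passes with two of three votes. Under the stricter 0.75 threshold the same votes are not enough, which shows how configuration changes what counts as verified.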

Economic incentives strengthen the system. Verifier nodes stake value in order to participate. Accurate verification earns rewards, while dishonest or consistently inaccurate behavior risks penalties. By aligning incentives with accuracy, the network encourages responsible participation. Honesty becomes economically rational.
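A toy settlement round shows why honesty becomes the rational strategy. The reward size, the slashing rate, and the rule of scoring nodes against the consensus outcome are assumptions made for this sketch; the actual parameters are not given here. The `settle` function is hypothetical.

```python
def settle(
    stakes: dict[str, int],
    votes: dict[str, bool],
    outcome: bool,
    reward: int = 1,
    slash_bps: int = 1_000,
) -> dict[str, int]:
    """Return updated stakes after one verification round.

    Nodes that voted with the consensus outcome gain `reward` units.
    Nodes that voted against it lose `slash_bps` basis points of stake
    (1_000 bps = 10%). Nodes that did not vote are left unchanged.
    """
    updated = dict(stakes)
    for node_id, vote in votes.items():
        if vote == outcome:
            updated[node_id] += reward
        else:
            # Integer arithmetic keeps balances exact.
            updated[node_id] -= updated[node_id] * slash_bps // 10_000
    return updated


stakes = {"node-a": 100, "node-b": 100, "node-c": 100}
votes = {"node-a": True, "node-b": True, "node-c": False}
print(settle(stakes, votes, outcome=True))
```

In this example the dissenting node loses ten units while each agreeing node gains one, so repeated inaccurate voting steadily erodes a node's stake.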

Once verification is complete, Mira Network generates a cryptographic certificate that records the outcome. This certificate provides proof that specific claims were evaluated under defined rules. Instead of simply trusting the AI output, users receive an auditable record of validation. While no system can guarantee perfection, this approach significantly reduces uncertainty.
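The auditable record can be illustrated with a content digest. This sketch only hashes the verification results with SHA-256 so that later tampering is detectable. It is an assumption about the general shape of such a record, not Mira Network's certificate format, and a production certificate would also need signatures from the verifiers. The function names are hypothetical.

```python
import hashlib
import json


def _digest(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra spaces)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_certificate(results: dict[str, bool], threshold: float) -> dict:
    """Bundle per-claim results and the rule applied with a digest of both."""
    payload = {"results": results, "threshold": threshold}
    return {**payload, "digest": _digest(payload)}


def check_certificate(cert: dict) -> bool:
    """Recompute the digest and compare it with the recorded one."""
    payload = {"results": cert["results"], "threshold": cert["threshold"]}
    return cert["digest"] == _digest(payload)


cert = make_certificate({"c1": True, "c2": False}, threshold=0.5)
print(check_certificate(cert))

# Flipping a recorded result without updating the digest is detected.
tampered = dict(cert, results={"c1": True, "c2": True})
print(check_certificate(tampered))
```

Because the digest covers both the results and the threshold, a reader can confirm which claims passed and under which rule.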

The importance of such a verification layer becomes even clearer when considering the rise of autonomous AI agents. Future AI systems will not just provide recommendations. They will execute trades, manage treasuries, trigger smart contracts, and interact with digital infrastructure independently. Automation at this scale demands reliability. An unreliable assistant is inconvenient. An unreliable autonomous agent can cause systemic damage.

Mira Network does not claim to eliminate all risk. Multiple models can still share biases. Consensus does not create infallibility. However, the protocol introduces accountability into a domain that often operates on assumption. It reflects a broader principle that has already reshaped finance through blockchain technology. Do not trust blindly. Verify through decentralized consensus and cryptographic proof.

As artificial intelligence becomes foundational infrastructure, trust will become its defining currency. Systems that can demonstrate reliability may prove more valuable than those that merely appear intelligent. The next evolution of AI may depend not on bigger models, but on stronger verification frameworks.

Mira Network represents an effort to build that trust layer. In a world increasingly influenced by algorithmic decisions, verifiable intelligence may become the standard rather than the exception. The future of AI may not belong to the loudest or most confident system, but to the one that can prove it is right.