
How big is the model?

How fast?

How natural does it write?

But one question is often missed:

If AI makes a mistake — who pays for it?

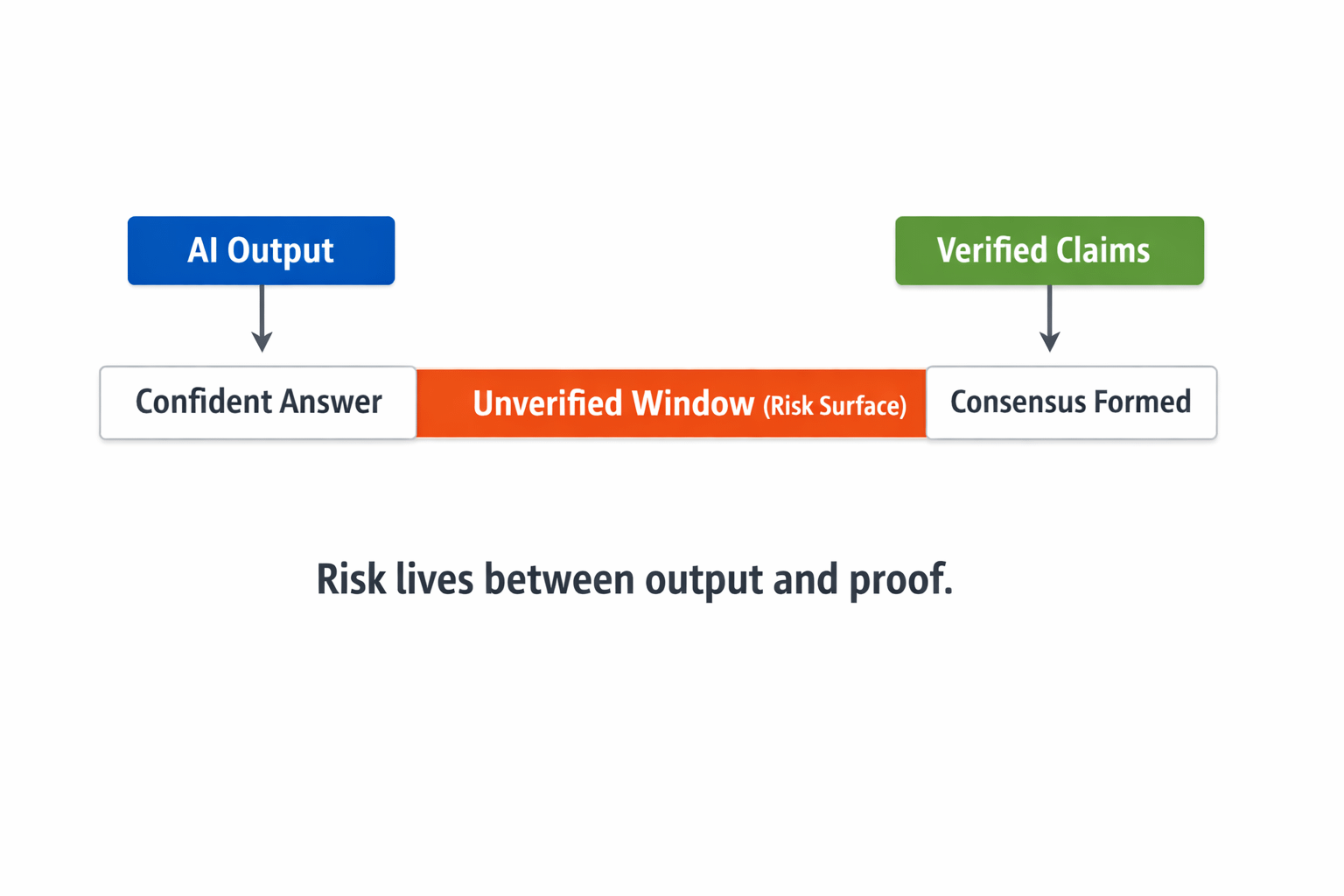

Because AI rarely makes mistakes loudly.

It makes them quietly.

Small distortions that look correct.

Claims that sound confident but have no support.

An analysis that seems close to reality but is fundamentally wrong.

These do not immediately set off alarms.

But they slip into decisions.

Fluency is not truth.

AI models have one important trait:

They can speak clearly.

But clarity (fluency) does not guarantee truth.

An answer can sound clean.

It may appear logical.

It may show no sign of doubt at all.


But has it been validated?

This is where Mira enters.

Mira is not trying to make models smarter.

It asks a different, more fundamental question:

"Can this output hold?"

Claim-Level Accountability

Mira's core idea looks simple but runs deep:

Instead of trusting an AI response wholesale,

split it into small claims.

Check each claim independently.

Confirm that it is correct.

If it is wrong, there should be a financial consequence.

This is not just a technical upgrade.

This is an accountability system.
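To make the idea concrete, here is a minimal Python sketch of claim-level checking with a cost for being wrong. It is hypothetical, not Mira's actual protocol or API: the names `Verifier`, `split_into_claims` and `settle_claim`, the one-sentence-per-claim split, the majority vote and the 10% stake penalty are all assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Verifier:
    """A hypothetical verifier that puts stake behind its judgments."""
    name: str
    stake: float


def split_into_claims(response: str) -> list[str]:
    # Naive assumption: one sentence = one claim.
    # Splitting on "." breaks on decimals like "3.5"; a real system needs a smarter parser.
    return [part.strip() for part in response.split(".") if part.strip()]


def settle_claim(votes: dict[str, bool], verifiers: dict[str, Verifier], penalty: float = 0.1) -> bool:
    """Decide one claim by majority vote and penalize verifiers who voted against the outcome.

    votes maps verifier name -> True (claim holds) or False (claim is wrong).
    A tie counts as rejection. penalty is the fraction of stake a wrong verifier loses.
    """
    approvals = sum(1 for vote in votes.values() if vote)
    accepted = approvals * 2 > len(votes)
    for name, vote in votes.items():
        if vote != accepted:
            # Being wrong costs money: the verifier loses part of its stake.
            verifiers[name].stake *= 1 - penalty
    return accepted


if __name__ == "__main__":
    pool = {name: Verifier(name, 100.0) for name in ("a", "b", "c")}
    for claim in split_into_claims("Claim one. Claim two."):
        # Each claim is settled on its own, not the response as a whole.
        print(claim, settle_claim({"a": True, "b": True, "c": False}, pool))
    print(pool["c"].stake)
```

The design choice that matters is the last step of `settle_claim`: a verifier who backs the wrong side of a claim pays for it, so careless checking stops being free.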

Right now, AI faces no penalty for being wrong.

Mira is targeting that gap.

Speed vs accountability.

Yes, verification will be slower.

Latency goes up.

The process takes longer.

But what if AI moves money?

What if it affects governance?

What if it triggers smart contracts?

Then speed alone is not enough.

Speed + accountability is necessary.

Errors at the system level should not be cheap.

Because cheap mistakes occur more frequently.

The next stage of AI.

Today we use AI as an 'advice engine'.

But in the future, AI will:

Form agreements.

Influence investment decisions.

Draft policy.

Run as part of operational automation.

Then a mistake is not an 'oops'.

It has financial consequences.

Mira is staking out its position here:

Not just getting an answer from AI,

but making that answer stand.

Why does trust infrastructure matter?

History shows that the end product is not what creates the most value.

The layer that coordinates it is.

Markets do not run on claims alone.

They run on claims that can be audited.

AI is at the same stage now.

Intelligence is increasing.

But without verification, more intelligence just means uncertainty at a larger scale.

What I am observing

Not hype.

Not model size.

Not benchmark scores.

What I am observing:

Are systems emerging that provide financial accountability for AI output?

Will AI's mistakes become costly?

Because for a system to become truly trustworthy,

it is not enough to give the right answers.

Wrong answers must carry a cost.

Final thought.

AI does not need more noise.

It already speaks loudly enough.

What it needs:

Receipts.

Verification.

Accountability.

That is where Mira's real experiment lies.