Last night I noticed something strange while watching a Fabric dashboard. 👀

One robot had completed 1,842 tasks.

Perfect validation record.

Zero slashing events.

Every skill chip verified.

Next to its ID was a simple tag:

“High Trust Operator.”

At first that sounded reassuring.

Then I realized something uncomfortable.

Trust is supposed to belong to humans.

But Fabric is slowly teaching the network to trust machines instead.

Not emotionally.

Economically.

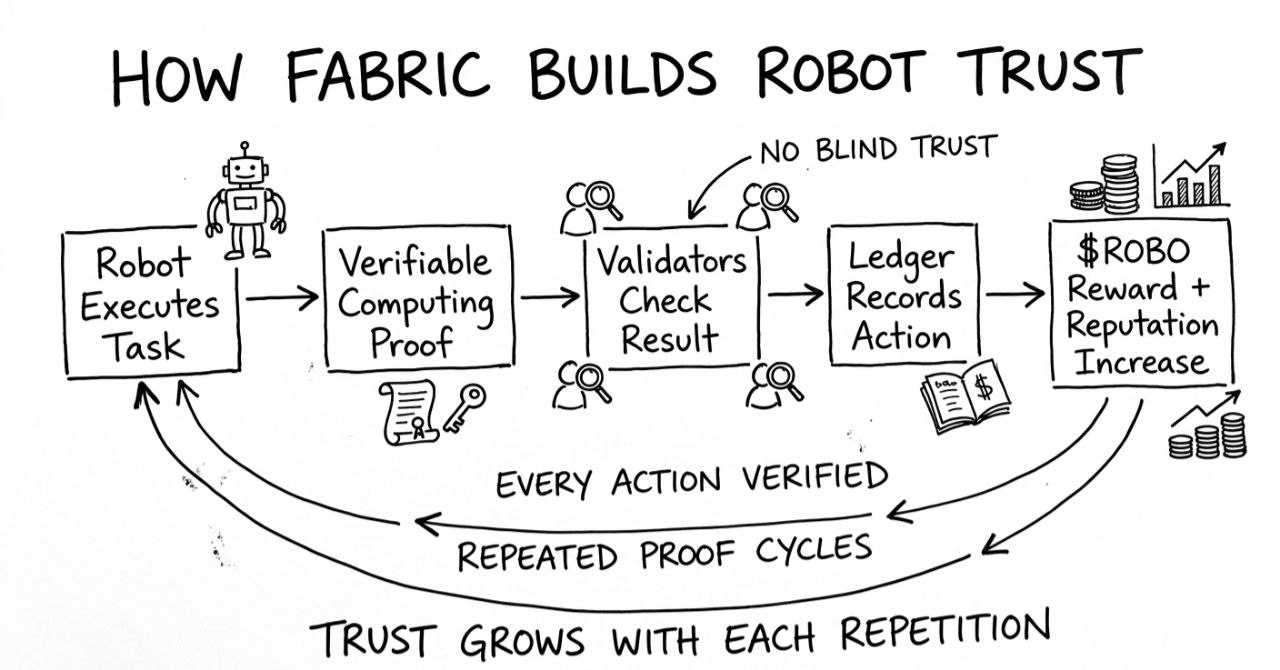

Here’s how it happens.

Every robot on Fabric builds a history.

Tasks executed.

Skills verified.

Validator confirmations.

$ROBO rewards earned.

Over time, the network starts to treat that history like a reputation score.
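To make that idea concrete, here is a minimal sketch of how a history could be folded into a single score. The inputs mirror the items listed above, but the `RobotHistory` layout, the `reputation_score` formula, the `slash_penalty` value, and the skill count are all assumptions for illustration, not Fabric’s actual scoring.

```python
from dataclasses import dataclass


@dataclass
class RobotHistory:
    """History fields named in the post; this layout is hypothetical."""
    tasks_executed: int
    tasks_validated: int   # tasks whose results validators confirmed
    skills_verified: int
    skills_total: int
    slashing_events: int


def reputation_score(h: RobotHistory, slash_penalty: float = 0.1) -> float:
    """Return a score in [0, 1]. The formula is an assumption, not Fabric's."""
    if h.tasks_executed == 0 or h.skills_total == 0:
        return 0.0  # no history yet, so no reputation
    validation_rate = h.tasks_validated / h.tasks_executed
    skill_rate = h.skills_verified / h.skills_total
    penalty = slash_penalty * h.slashing_events
    return max(0.0, validation_rate * skill_rate - penalty)


# The dashboard robot: 1,842 tasks, perfect validation, zero slashing.
# The skill count (5) is made up for the example.
robot = RobotHistory(tasks_executed=1842, tasks_validated=1842,
                     skills_verified=5, skills_total=5, slashing_events=0)
print(reputation_score(robot))
```

In this toy version a single slashing event or a failed validation pulls the score down, which is the only property the sketch is meant to show.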

A robot that consistently performs well gets:

• faster task assignment

• higher validator confidence

• fewer disputes

• more economic weight

In other words:

The robot becomes predictably reliable.

And that’s where the interesting shift happens.

Because Fabric doesn’t really trust the robot.

It trusts the evidence the robot leaves behind.

That evidence lives on-chain.

Verifiable computing proves the code ran as claimed.

Validators confirm the results.

The ledger records the history.

The robot’s reputation isn’t a story.

It’s a trail of math.
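One generic way to picture a “trail of math” is a hash-chained log, where each entry commits to the one before it. This is only a sketch of that general technique: the record fields, the `append` and `verify` helpers, and the robot ID are hypothetical, and nothing here describes Fabric’s real ledger format.

```python
import hashlib
import json


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()


def append(ledger: list, record: dict) -> None:
    """Append a record, linking it to the current tip of the chain."""
    prev_hash = ledger[-1]["hash"] if ledger else "0" * 64
    ledger.append({"record": record, "prev": prev_hash,
                   "hash": record_hash(record, prev_hash)})


def verify(ledger: list) -> bool:
    """Recompute every hash; any edit to past history breaks the chain."""
    prev_hash = "0" * 64
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"robot": "r-1", "task": 1, "validated": True})
append(ledger, {"robot": "r-1", "task": 2, "validated": True})
print(verify(ledger))                      # chain is intact

ledger[0]["record"]["validated"] = False   # tamper with old history
print(verify(ledger))                      # tampering is detected
```

The point of the design is that nobody has to take the history on faith: anyone holding the log can recompute it.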

But reputation systems always create a strange tension.

The more reliable a participant becomes, the more the system assumes they’ll continue behaving the same way.

Humans fall into this trap constantly.

Banks trusted AAA ratings.

Platforms trusted “verified” users.

Markets trusted reputation until they didn’t.

Fabric tries to avoid that trap by making every action re-verifiable.

Past success doesn’t remove scrutiny.

Every task still goes through validators.

Every result still produces proof.

Reputation helps with coordination, but verification never disappears.
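Here is a small sketch of that split, under stated assumptions. Reputation only changes how a task is queued, while the proof check runs on every task. The `process_task` function, the 0.9 threshold, and `toy_verifier` are hypothetical stand-ins, not Fabric’s API.

```python
from typing import Callable


def process_task(reputation: float,
                 result: str,
                 verify_proof: Callable[[str], bool]) -> dict:
    """Reputation sets queue priority only; verification is unconditional."""
    # Assumed threshold: high-reputation robots get faster assignment.
    priority = "fast" if reputation >= 0.9 else "normal"
    # The proof check runs for every task, whatever the reputation.
    accepted = verify_proof(result)
    return {"priority": priority, "accepted": accepted}


def toy_verifier(result: str) -> bool:
    """Stand-in for real validators; accepts one fixed string."""
    return result == "valid-proof"


# A perfect-reputation robot with a bad result is still rejected.
print(process_task(1.0, "bad-proof", toy_verifier))
# A low-reputation robot with a valid result is still accepted.
print(process_task(0.2, "valid-proof", toy_verifier))
```

There is deliberately no branch where a high score skips `verify_proof`. That missing shortcut is the design choice.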

That design choice might be more important than it looks.

Because robot networks will eventually scale beyond human oversight.

Thousands of agents.

Millions of tasks.

Entire cities of machines negotiating work with each other.

At that point, the real danger isn’t bad robots.

It’s unquestioned reputation.

Systems fail when trust becomes automatic.

Fabric’s bet is that trust should always be re-earned through verification.

So maybe the most interesting thing about Fabric isn’t that robots can earn $ROBO.

It’s that the network refuses to take them on faith, no matter how good their history looks.

And honestly…

that might be the safest way to run a robot economy.

One question I keep thinking about:

If a robot builds a perfect reputation on-chain…

should the network ever start trusting it more?

Or should every machine stay permanently under proof? 🤔