One evening I was reading an AI-generated report someone shared in a crypto group. It looked polished. Charts, technical language, confident statements. Everything felt… convincing.

But I caught one mistake. Just one.

And suddenly the whole thing felt shaky.

That small moment is basically the reason networks like Mira exist.

Instead of asking people to trust AI output, Mira built a system where the verification itself becomes a network process. Not one machine. Not one company. A distributed group of independent nodes checking the same claims.

Here’s how it works.

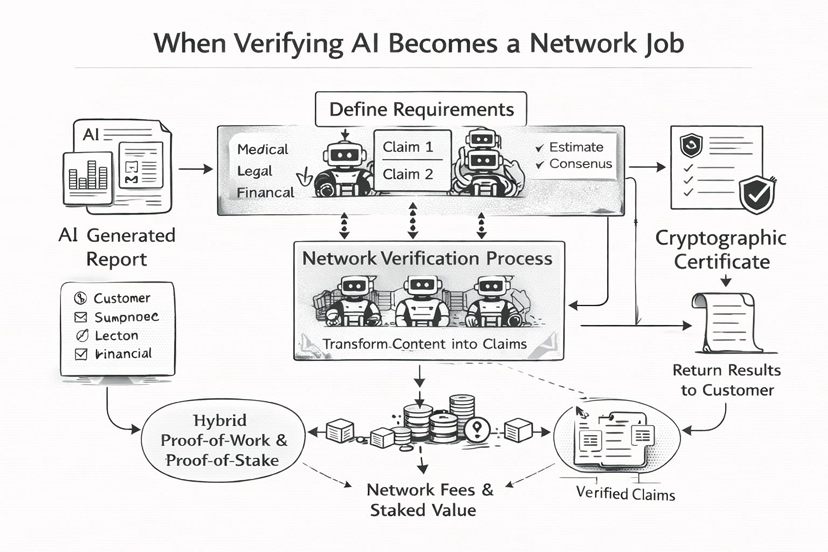

A customer submits content they want verified. It could be a document, a technical explanation, even a piece of code. Along with the content, they define verification requirements, such as the knowledge domain or the consensus threshold.

Medical. Legal. Financial.

Then the network begins its quiet work.

The system transforms the content into individual claims while preserving their logical relationships. These claims are distributed across verifier nodes running AI models. Each node processes the claim and submits verification results.

Simple idea.

But powerful.
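To make that step concrete, here is a rough Python sketch. It is my own illustration, not Mira's actual code: the `Claim` class, the `depends_on` field, and the round-robin `distribute` function are all hypothetical names I made up, and splitting real content into claims would itself need a model rather than a hand-written list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str
    depends_on: tuple = ()  # ids of earlier claims this one builds on (assumed field)


def distribute(claims, nodes, replicas):
    """Assign each claim to `replicas` distinct nodes, round-robin."""
    if replicas > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return {
        claim.claim_id: [nodes[(i + k) % len(nodes)] for k in range(replicas)]
        for i, claim in enumerate(claims)
    }


# Hand-written claims standing in for the output of the decomposition step.
claims = [
    Claim(0, "The Earth orbits the Sun."),
    Claim(1, "One orbit takes about 365 days.", depends_on=(0,)),
]
assignment = distribute(claims, ["node-a", "node-b", "node-c"], replicas=2)
print(assignment)
```

The point is only that each claim keeps a link to the claims it depends on, and that every claim lands on more than one node.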

Nodes don’t just appear in the system randomly. They operate independently but must maintain performance and reliability standards to remain part of the network. If their responses consistently deviate from consensus or show signs of poor verification, their place in the network is at risk.

Once the nodes submit results, the network aggregates them and determines consensus.
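Aggregation could look something like this. Again, a minimal sketch under my own assumptions: I am treating each node's result as a simple label and the consensus threshold as a share of votes, and the `aggregate` function is hypothetical. The real network may weigh results quite differently.

```python
from collections import Counter


def aggregate(votes, threshold):
    """Return (verdict, share); verdict is None if the top share misses the threshold."""
    if not votes:
        raise ValueError("no votes to aggregate")
    verdict, count = Counter(votes).most_common(1)[0]
    share = count / len(votes)
    return (verdict if share >= threshold else None), share


votes = ["valid", "valid", "valid", "invalid"]
print(aggregate(votes, threshold=0.75))
print(aggregate(votes, threshold=0.9))
```

With three of four nodes agreeing, a 75% threshold is met, while a 90% threshold leaves the claim without consensus.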

And then something interesting happens.

A cryptographic certificate is generated.

This certificate records the verification outcome, including which models agreed on each claim. The customer receives both the result and the certificate, which is essentially a verifiable proof that the information has been checked.

Almost like a receipt for truth.
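I don't know the exact certificate format, so take this as a toy version of the idea: hash the recorded results so that any later change is detectable. The field names and both functions are my own assumptions, and a real certificate would also need a signature to show who issued it. A bare digest only shows that the contents haven't changed.

```python
import hashlib
import json


def _digest(results):
    # Canonical JSON so the same results always hash to the same value.
    payload = json.dumps(results, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def make_certificate(results):
    """Bundle the per-claim results with a SHA-256 digest of them."""
    return {"results": results, "digest": _digest(results)}


def check_certificate(cert):
    """Recompute the digest and compare it with the stored one."""
    return _digest(cert["results"]) == cert["digest"]


cert = make_certificate(
    {"claim-0": {"verdict": "valid", "agreed": ["model-a", "model-b"]}}
)
print(check_certificate(cert))
```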

Behind this system sits a hybrid economic model combining Proof-of-Work and Proof-of-Stake. Instead of miners solving meaningless puzzles, the work here actually has value: verifying information.

Customers pay network fees to obtain verified outputs, and those fees are distributed to node operators and data contributors as rewards.

But Mira’s designers noticed a strange problem.

If verification tasks are standardized (for example, as multiple-choice questions), the probability of guessing correctly becomes non-trivial. A binary choice gives a 50% chance of guessing correctly. With four options, it’s 25%.

Too easy.
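The arithmetic is worth a quick look. For a single task the odds are as above, but if guesses are independent, the chance of getting a whole run of tasks right shrinks fast. The function name is mine, and independence between tasks is an assumption.

```python
def chance_of_guessing_all(options, tasks):
    """Probability of guessing every one of `tasks` independent questions."""
    return (1 / options) ** tasks


for options in (2, 4):
    for tasks in (1, 10):
        print(options, tasks, chance_of_guessing_all(options, tasks))
```

One binary question is a coin flip, but ten in a row by luck is under 0.1%. That gap is why a guesser's track record tends to give them away over time.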

So nodes must stake value to participate. If their behavior suggests random guessing rather than real inference, their stake can be slashed.

Suddenly the economics flip.

Guessing becomes expensive.

Honest verification becomes rational.
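Here is a back-of-the-envelope version of that flip. Every number below is invented for illustration (the reward, the stake, the chance of being slashed), and the one-line payoff model is my own simplification, not Mira's actual reward formula.

```python
def expected_payoff(p_correct, reward, p_slash, stake):
    """Expected payoff per task: reward when correct, minus expected stake lost."""
    return p_correct * reward - p_slash * stake


# Hypothetical numbers: a guesser on binary tasks versus an honest node.
guessing = expected_payoff(p_correct=0.5, reward=1.0, p_slash=0.1, stake=100.0)
honest = expected_payoff(p_correct=0.95, reward=1.0, p_slash=0.0, stake=100.0)
print(guessing, honest)
```

Under these made-up numbers the guesser loses money on average while the honest node earns. Change the inputs and the margin moves, but the shape of the argument stays the same as long as the stake at risk outweighs the reward from lucky guesses.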

And as more nodes join, something else improves: diversity.

Different models bring different training data, different reasoning patterns, different strengths. This diversity reduces bias and strengthens the reliability of the final consensus.

The system even evolves over time.

At first, node operators are carefully vetted. Later, the network decentralizes further with duplicated verifier models processing the same tasks. Eventually verification requests are randomly sharded across nodes, making collusion extremely difficult.

Quiet layers of security.
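To get a feel for why random sharding hurts colluders, here is a small sketch. The `shard` and `capture_probability` functions, the node counts, and the "colluders must hold every replica" condition are all my assumptions. A real network would also need an unpredictable randomness source rather than a seeded generator.

```python
import random
from math import comb


def shard(claim_ids, nodes, replicas, rng):
    """Pick `replicas` distinct nodes at random for each claim."""
    return {cid: rng.sample(nodes, replicas) for cid in claim_ids}


def capture_probability(total_nodes, colluders, replicas):
    """Chance that every replica of one claim lands on a colluding node."""
    return comb(colluders, replicas) / comb(total_nodes, replicas)


nodes = [f"node-{i}" for i in range(100)]
rng = random.Random(7)  # seeded only so the demo is repeatable
shards = shard(["claim-0", "claim-1"], nodes, replicas=5, rng=rng)
p = capture_probability(total_nodes=100, colluders=10, replicas=5)
print(shards)
print(p)
```

With 10 colluding nodes out of 100 and 5 replicas per claim, the chance of capturing a given claim outright is roughly 3 in a million.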

What fascinates me about this architecture is that it treats information the same way blockchains treat transactions.

You don’t trust the sender.

You trust the network verification.

And maybe that’s where AI systems are heading.

Not toward smarter models alone…

but toward networks that make intelligence accountable.
