What keeps pulling me back to Fabric Protocol is that it is looking at a side of the AI economy that still feels strangely ignored.

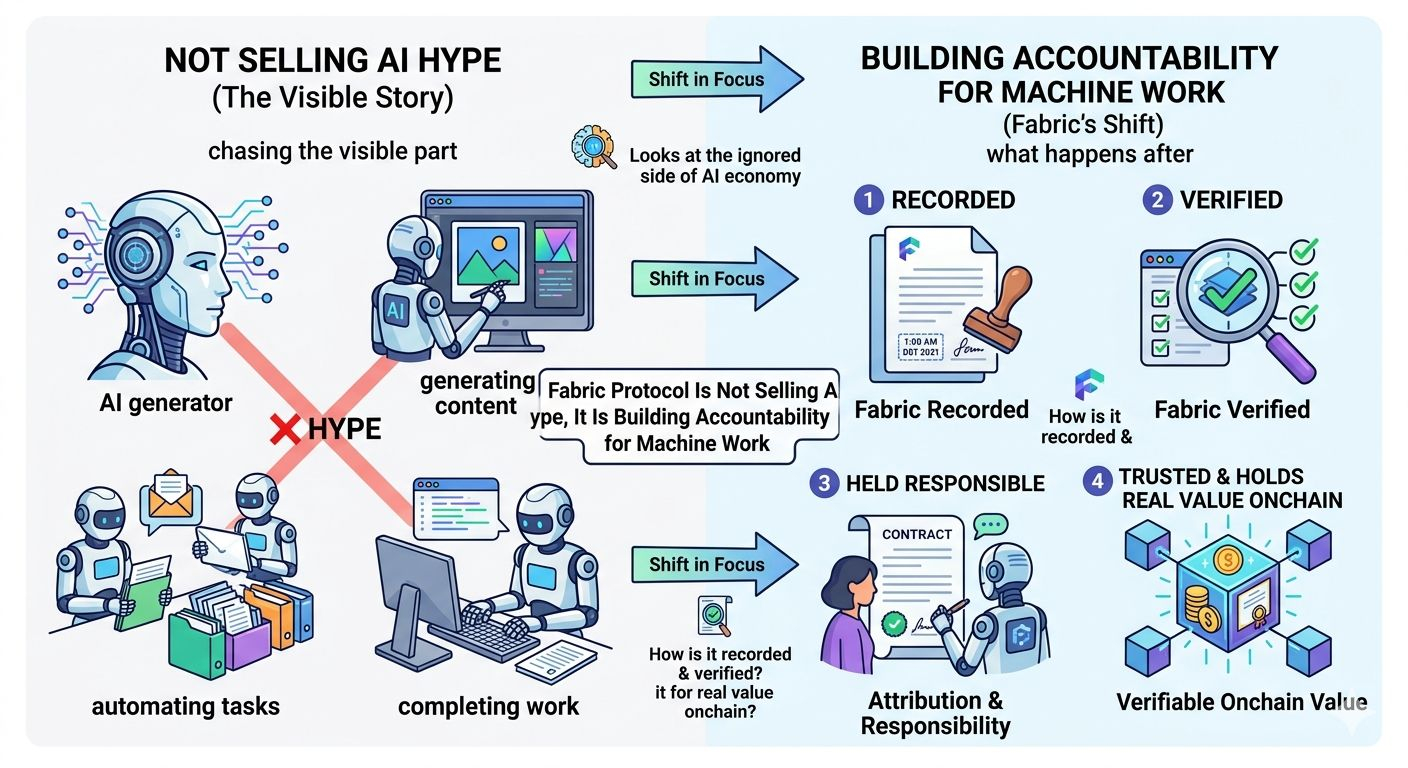

Most projects in this space are chasing the visible part of the story. They want to show what a machine can generate, automate, or complete. Fabric is more interested in what happens after that moment. Once the work is done, how is it recorded, how is it verified, who is held responsible for it, and why should anyone trust it enough for that work to hold real value onchain?

That shift in focus is what makes it feel more serious than the usual AI narrative.

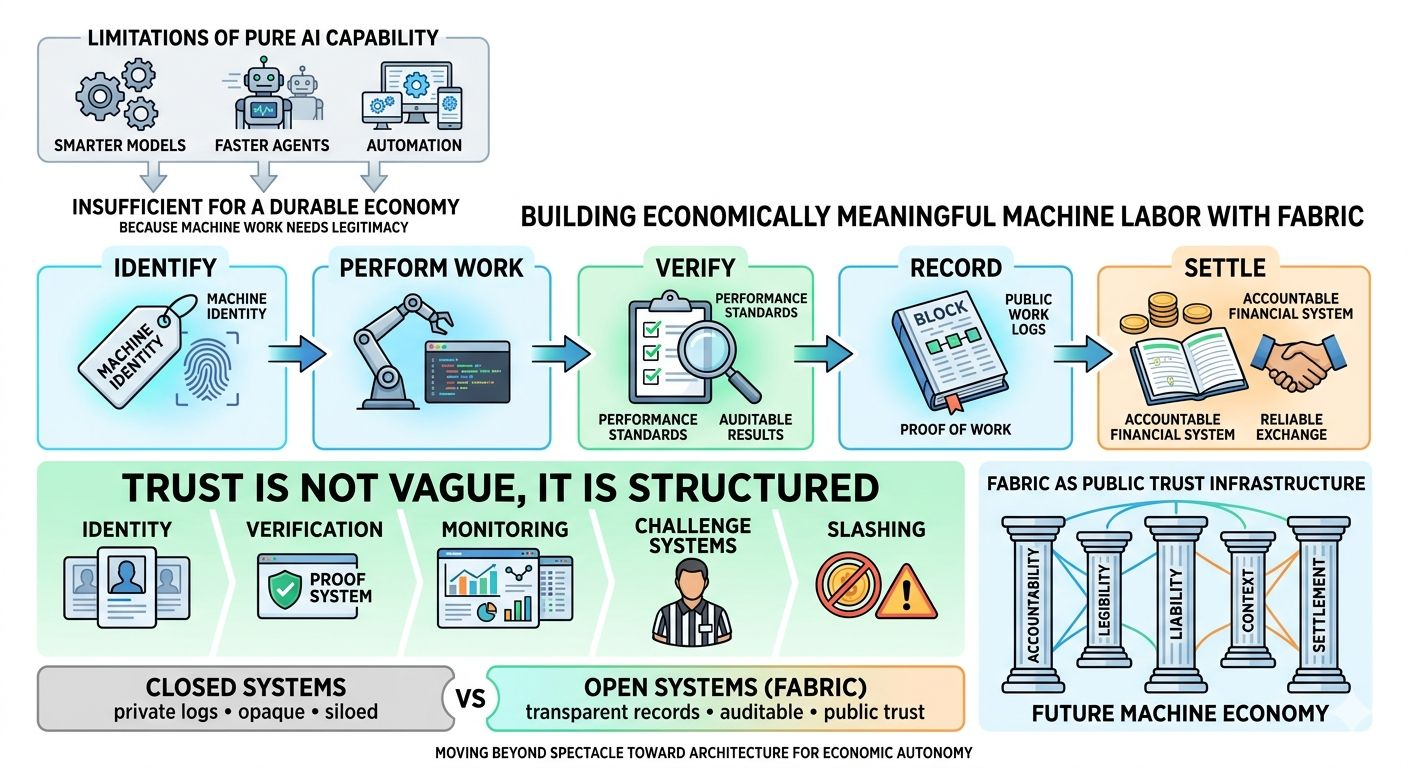

A lot of AI talk still lives in the language of capability. Smarter models. Faster agents. Better outputs. More automation. But capability on its own does not create an economy. The moment machines start taking part in economic activity, a harder layer appears underneath everything else. Someone has to know who performed the work, what was actually done, whether the task met a real standard, and what happens if the machine fails, lies, disappears, or produces something unreliable. Without that layer, the output may look impressive, but it does not become durable infrastructure.

That is where Fabric starts to matter.

It does not feel like a project trying to borrow attention from the AI wave. It feels like a project trying to build the rails that a machine economy would eventually need if it ever wants to move beyond demos, speculation, and closed systems. The interesting part is not just that autonomous agents might earn or interact onchain. The interesting part is that Fabric is asking how that activity becomes measurable, accountable, and financially native instead of remaining a loose promise wrapped in futuristic language.

That is a much stronger idea than most of what gets marketed under AI right now.

The reason is simple. Machine labor cannot become economically meaningful just because a machine produced an output. It has to become legible. It has to carry proof, context, and trust. There has to be a system that does more than display the result. It has to show who did the work, under what conditions it happened, how performance is evaluated, and whether there is a penalty when reality falls short of the claim. That is a very different design problem from building a model or launching a token. It is closer to building public trust infrastructure for autonomous work.

Fabric seems to understand that.

What stands out is that it is not treating trust as a vague marketing word. It is treating trust as something that has to be structured. In that kind of system, identity matters. Verification matters. Monitoring matters. Challenge systems matter. Slashing matters. That may sound cold compared to the usual AI optimism, but that is exactly why it feels grounded. When real economic value enters the picture, nobody cares about clean branding around autonomy. They care about whether the machine can be held to a standard and whether responsibility remains visible after the fact.
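To make that less abstract, here is a minimal sketch of how identity, verification, a challenge, and slashing could fit together. It is my own illustration, not Fabric's actual design: `Agent`, `WorkRecord`, `resolve_challenge`, the stake amounts, and the slash percentage are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    CLAIMED = "claimed"    # the agent says the work is done
    VERIFIED = "verified"  # an independent check met the standard
    SLASHED = "slashed"    # the check failed and stake was taken


@dataclass
class Agent:
    agent_id: str  # hypothetical identity handle for the machine
    stake: int     # collateral the agent puts at risk


@dataclass
class WorkRecord:
    agent: Agent
    task: str
    status: Status = Status.CLAIMED


def resolve_challenge(record: WorkRecord, measured_score: int,
                      required_score: int, slash_percent: int = 50) -> int:
    """Settle a challenge against a work record.

    Returns the amount slashed (0 if the work met the standard).
    All thresholds here are assumptions made for illustration.
    """
    if measured_score >= required_score:
        record.status = Status.VERIFIED
        return 0
    penalty = record.agent.stake * slash_percent // 100
    record.agent.stake -= penalty
    record.status = Status.SLASHED
    return penalty


robot = Agent(agent_id="robot-7", stake=100)
record = WorkRecord(agent=robot, task="warehouse-scan")
penalty = resolve_challenge(record, measured_score=40, required_score=80)
print(record.status.value, penalty, robot.stake)
```

The point of the sketch is only that the claim, the check, and the consequence all attach to the same identity, so responsibility stays visible after the fact.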

That is the part many people still miss.

There is a big difference between a machine doing work and a machine doing work inside an environment where strangers can rely on the record of that work. Closed systems can hide behind internal logs and private oversight. Open systems cannot. If autonomous agents and robotics are going to interact economically in a more public way, then the entire question changes. It becomes less about raw capability and more about whether machine action can be audited, challenged, settled, and trusted without everyone needing to know or trust the operator personally.
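One way to picture a record that strangers can rely on is a hash-chained log, where each entry commits to the one before it. The sketch below is a generic tamper-evidence technique, not something I am claiming Fabric uses; the function names and the payload fields are assumptions made for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash an entry together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(log: list, payload: dict) -> None:
    """Add a work record to the log, linking it to the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev, "payload": payload,
                "hash": entry_hash(prev, payload)})


def verify(log: list) -> bool:
    """Recompute every link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


log = []
append(log, {"agent": "robot-7", "task": "warehouse-scan", "result": "ok"})
append(log, {"agent": "robot-7", "task": "pallet-move", "result": "ok"})
print(verify(log))

log[0]["payload"]["result"] = "failed"  # quietly rewrite history
print(verify(log))
```

Anyone holding a copy of the log can run `verify` themselves, which is the difference between an internal log and a record that does not require trusting the operator.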

Fabric feels like one of the few projects trying to live inside that question.

That is why it seems underexplored compared to the size of the opportunity. The market still tends to price AI stories at the surface level. It reacts to the idea of intelligence, automation, and future scale. But if machine labor actually grows into something real, the center of gravity will eventually move toward settlement, accountability, reliability, and proof. Those are not the kind of words hype cycles get excited about, but they are usually the words that end up mattering most once an industry starts maturing.

And this is where Fabric gets interesting in a deeper way.

It is not really making the easy claim that machines will matter. That part is already obvious. The harder claim is that machine work will need a public coordination layer around it, something that can give identity to the actor, attach consequences to bad behavior, reward verified contribution, and create a financial structure around work that can actually be trusted. That is a different class of project. It moves the conversation away from spectacle and toward architecture.

I think that is why Fabric feels stronger than a lot of AI-linked protocols. It is not trying to win by sounding futuristic. It is trying to solve a boring problem that becomes critical the second autonomous work starts touching money, markets, and real operational value. And historically, boring problems like verification, settlement, and accountability tend to become the most valuable ones later, because everything else ends up depending on them.

There is also something important in the timing.

We are still early enough that most people are not thinking seriously about what a machine economy would require underneath the surface. They are still focused on what agents can do, not on what a credible system around those agents would need to look like. Fabric is already leaning into that missing layer. It is building around the idea that output is not enough. Work has to be recognized. Performance has to be tracked. Value has to be assigned in a way that survives scrutiny. If that vision is right, then the protocol is not just part of the AI conversation. It is closer to the accounting system for a future machine economy.

That does not mean everything is solved. It is still early, and early infrastructure projects always carry execution risk. A strong idea is not the same as guaranteed adoption. There is a long distance between having the right thesis and proving it in real markets with real participants, real workloads, and real incentives. That part still matters. But even with that caution, the core concept feels sharper than most.

Because the truth is, the AI economy does not become meaningful just because machines become more capable.

It becomes meaningful when machine labor can be trusted enough to hold value.

That is the real issue underneath all the noise. Not whether a machine can produce something once, but whether the system around that machine can prove what happened, preserve accountability, and make the result economically credible. That is the layer Fabric is trying to build. And to me, that is exactly why it stands out. It is not chasing the loudest part of the AI narrative. It is working on the part that may quietly matter more than the rest of it.