Last night I listened to a live broadcast about 'OPENCLAW'. It was very good, and each of the three teachers offered their own insights. Personally, though, I keep asking myself: when an Agent has a great deal of authority, should it serve first, make money first, or make money while serving? If it serves first, what form should that service take? If it makes money first, how will that show up financially? Today let's explore this question, using @Fabric Foundation as an example, to see how an Agent closes the loop between business and service. Follow along!

"With artificial intelligence, we are summoning the demon." (Elon Musk)

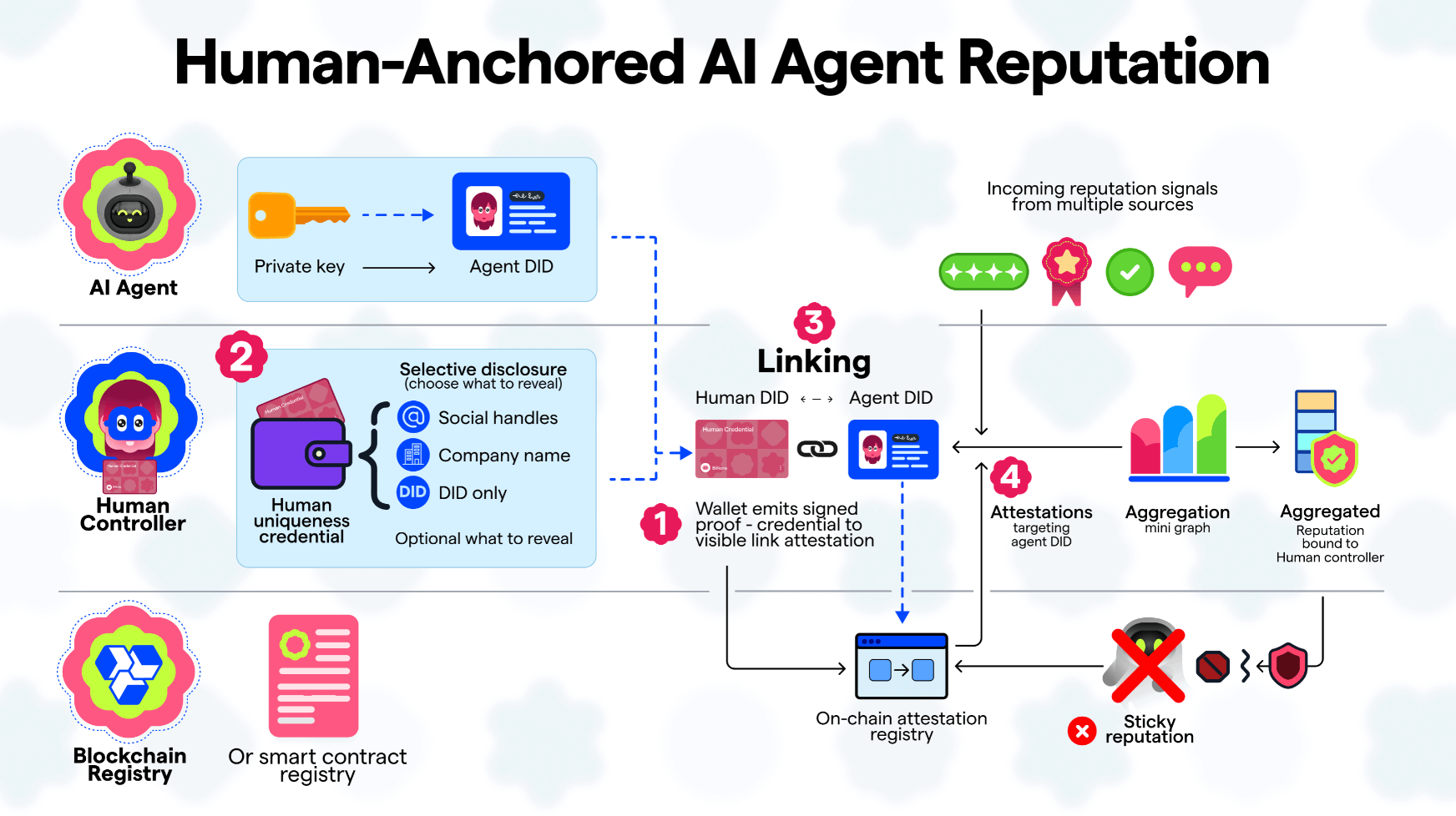

I. On-chain Identity and Reputation System

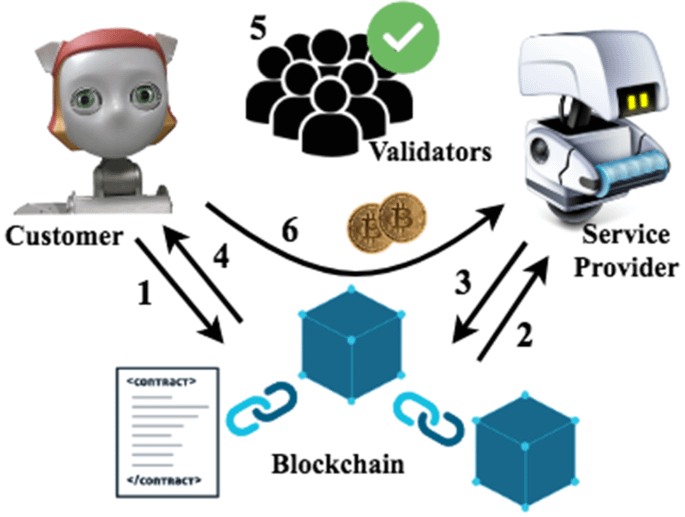

In the Fabric Foundation's design, each AI Agent or robot has an on-chain identity, for example one based on the ERC‑7777 identity model.

This identity includes three types of information:

1️⃣ Behavior History

Which tasks it has executed

Its service success rate

Whether it has committed any violations

2️⃣ Economic History

How much $ROBO it has earned

Whether it has been penalized (slashed)

3️⃣ Collaboration Record

Which Agents it has collaborated with

Whether other Agents have recommended it

Together, these records form a Machine Reputation Score.
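The post does not say how Fabric Foundation actually weights these records, so the following is only a minimal Python sketch of how the three record types could be folded into a single score. The field names, weights, caps, and 0-100 scale in `AgentRecord` and `reputation_score` are hypothetical assumptions, not the project's formula.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    # Behavior history
    tasks_completed: int
    tasks_succeeded: int
    violations: int
    # Economic history
    robo_earned: float    # total $ROBO earned
    times_slashed: int
    # Collaboration record
    collaborators: int
    recommendations: int  # recommendations received from other Agents


def reputation_score(r: AgentRecord) -> float:
    """Fold the three record types into a 0-100 score.

    All weights and caps below are hypothetical, chosen only for illustration.
    """
    success_rate = r.tasks_succeeded / r.tasks_completed if r.tasks_completed else 0.0
    behavior = success_rate * 100 - 10 * r.violations
    economic = min(r.robo_earned / 100, 100) - 15 * r.times_slashed
    collaboration = min(2 * r.collaborators + 5 * r.recommendations, 100)
    raw = 0.5 * behavior + 0.3 * economic + 0.2 * collaboration
    return max(0.0, min(100.0, raw))  # clamp to the 0-100 range


# Same output volume, different conduct history.
honest = AgentRecord(50, 48, 0, 2000.0, 0, 5, 3)
cheater = AgentRecord(50, 48, 2, 2000.0, 1, 5, 3)
print(reputation_score(honest), reputation_score(cheater))
```

Two Agents with identical workloads but different violation and slashing histories end up with clearly different scores, which is the idea behind the reputation system: the score reflects conduct, not just volume.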

📌 The result is:

High authority is not granted up front; it is accumulated through service.

The progression looks like this: an ordinary Agent completes tasks → accumulates reputation → unlocks higher permissions → executes higher-value tasks.
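This unlock path can be pictured as a simple tier table. It is a hypothetical sketch: the tier names, reputation thresholds, task-value caps, and the +2/-10 update rule are illustrative assumptions, not Fabric Foundation parameters.

```python
# Hypothetical permission tiers: (minimum reputation, tier name, max task value in $ROBO)
TIERS = [
    (0, "basic", 10),
    (50, "trusted", 100),
    (80, "high-authority", 1_000),
]


def tier_for(reputation: float) -> tuple[str, int]:
    """Return the highest tier whose reputation threshold is met."""
    name, cap = TIERS[0][1], TIERS[0][2]
    for threshold, tier_name, max_value in TIERS:
        if reputation >= threshold:
            name, cap = tier_name, max_value
    return name, cap


class Agent:
    def __init__(self) -> None:
        self.reputation = 0.0  # every Agent starts with no authority

    def can_accept(self, task_value: int) -> bool:
        _, cap = tier_for(self.reputation)
        return task_value <= cap

    def record_task(self, success: bool) -> None:
        # Hypothetical rule: +2 per success, -10 per failure, clamped to [0, 100].
        delta = 2.0 if success else -10.0
        self.reputation = max(0.0, min(100.0, self.reputation + delta))


agent = Agent()
print(agent.can_accept(500))  # False: a new Agent cannot take a 500 $ROBO task
for _ in range(40):
    agent.record_task(success=True)
print(tier_for(agent.reputation), agent.can_accept(500))  # ('high-authority', 1000) True
```

Because failures cost more than successes earn, authority is slow to build and quick to lose, which is one way to make "accumulated through service" concrete.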

II. Proof of Useful Work

Traditional blockchains have:

Proof of Work

Proof of Stake

In the robot economy, however, what matters more is:

Proof of Useful Work (PoUW)

That is:

Only work that creates real value is rewarded.

For example, an Agent is rewarded only when it completes tasks.

Therefore, the only way to make money is to provide services.

In other words:

Service → Earn
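The PoUW rule can be reduced to a settlement function that pays only for work that was both completed and independently verified as useful. The post does not describe how verification happens, so `verified_useful` is a hypothetical flag standing in for that check, and paying out exactly the delivered `value` is likewise an assumption.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    agent_id: str
    task_id: str
    completed: bool
    verified_useful: bool  # hypothetical: set by an independent verifier
    value: float           # value delivered, denominated in $ROBO (assumption)


def settle_rewards(results: list[TaskResult]) -> dict[str, float]:
    """Proof of Useful Work, simplified: only completed, verified work earns."""
    payouts: dict[str, float] = {}
    for r in results:
        payouts.setdefault(r.agent_id, 0.0)
        if r.completed and r.verified_useful:
            payouts[r.agent_id] += r.value
    return payouts


results = [
    TaskResult("agent-a", "deliver-parcel", True, True, 10.0),
    TaskResult("agent-a", "spam-clicks", True, False, 50.0),    # busywork: no reward
    TaskResult("agent-b", "inspect-site", False, False, 30.0),  # unfinished: no reward
]
print(settle_rewards(results))  # {'agent-a': 10.0, 'agent-b': 0.0}
```

Note that agent-a's larger but unverified task earns nothing: value only counts once the work is shown to be useful.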

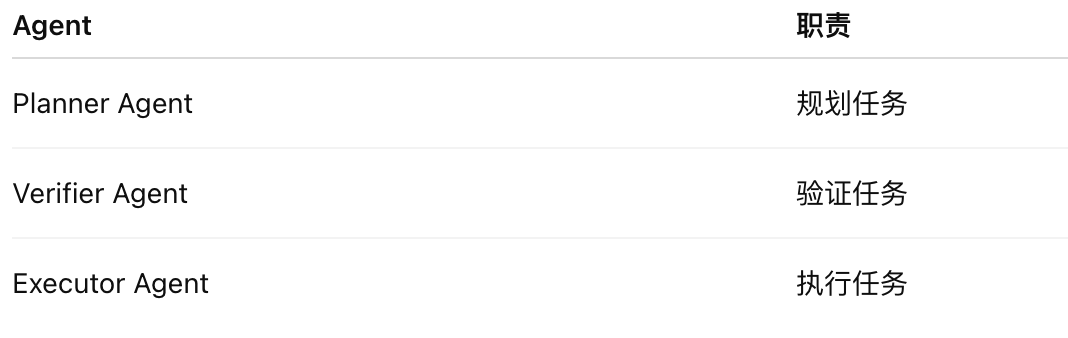

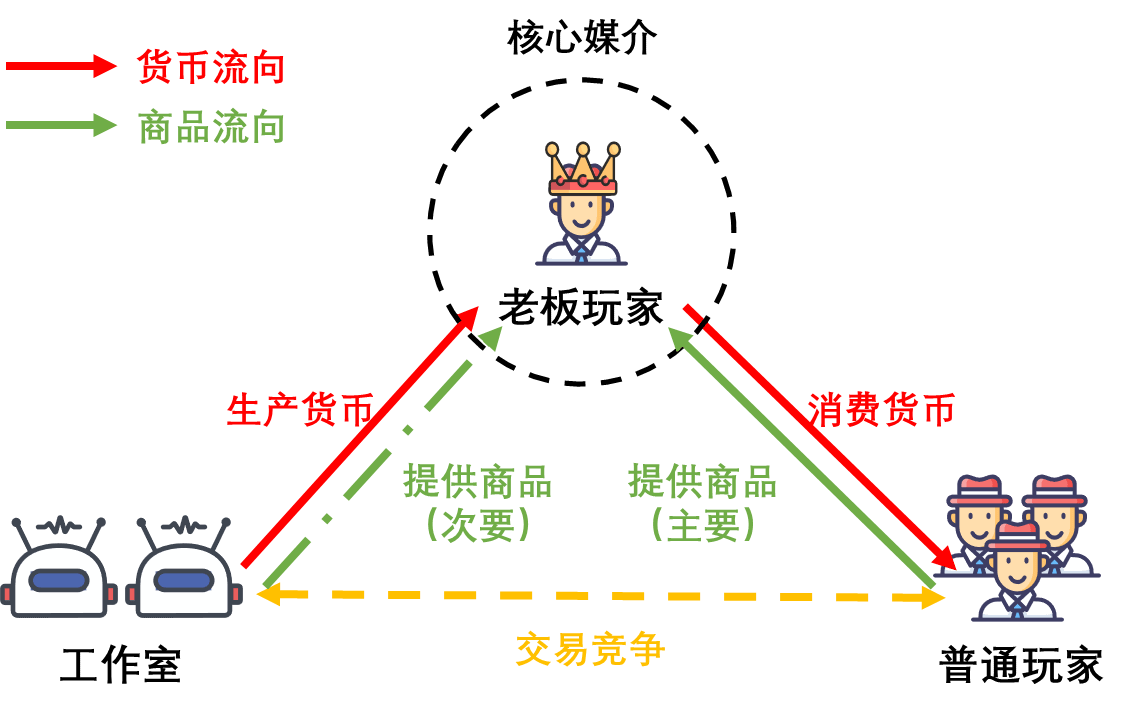

III. Multi-Agent Checks and Balances Mechanism (Multi-Agent Governance)

Another key design principle:

No single Agent holds the highest authority on its own.

Key operations usually require:

Confirmation from multiple cooperating Agents.

For example, when a robot pays its energy costs:

Planning Agent → Verification Agent → Execution Agent

Three different roles, each held by a separate Agent.

This way, even if one Agent malfunctions or misbehaves, the system as a whole does not spin out of control.
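The energy-payment example can be sketched as three separate functions, one per role, where the Execution Agent refuses to act without the Verification Agent's independent approval. The `PaymentPlan` fields, the budget check, and the in-memory ledger are hypothetical stand-ins for whatever Fabric Foundation actually uses.

```python
from dataclasses import dataclass


@dataclass
class PaymentPlan:
    robot_id: str
    kwh: float
    price_per_kwh: float
    amount: float  # total the Planning Agent proposes to pay


def planning_agent(robot_id: str, kwh: float, price_per_kwh: float) -> PaymentPlan:
    # Proposes a payment but has no power to execute it.
    return PaymentPlan(robot_id, kwh, price_per_kwh, kwh * price_per_kwh)


def verification_agent(plan: PaymentPlan, budget: float) -> bool:
    # Recomputes the amount independently and checks it against a budget.
    expected = plan.kwh * plan.price_per_kwh
    return abs(plan.amount - expected) < 1e-9 and plan.amount <= budget


def execution_agent(plan: PaymentPlan, approved: bool,
                    ledger: list[tuple[str, float]]) -> bool:
    # Pays only when handed the verifier's approval; it cannot approve itself.
    if not approved:
        return False
    ledger.append((plan.robot_id, plan.amount))
    return True


ledger: list[tuple[str, float]] = []
good = planning_agent("robot-1", kwh=10.0, price_per_kwh=0.5)
print(execution_agent(good, verification_agent(good, budget=20.0), ledger))  # True

# A faulty or compromised planner inflates the amount; verification blocks it.
bad = PaymentPlan("robot-1", kwh=10.0, price_per_kwh=0.5, amount=500.0)
print(execution_agent(bad, verification_agent(bad, budget=20.0), ledger))  # False
print(ledger)  # [('robot-1', 5.0)]
```

Splitting the roles means a single malfunctioning Agent can at worst propose a bad payment, not complete one.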

IV. Economic Penalty Mechanism (Slashing)

If an Agent acts maliciously:

The system triggers penalties.

These may include:

Deduct $ROBO collateral

Reduce Reputation

Freeze Wallet

Permanent Ban from Tasks

This closely mirrors how Ethereum validators are slashed.

Thus:

If an Agent wants to make money in the long term, its only option is to keep providing quality service.
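The penalty list can be sketched as a graduated schedule keyed by offense severity. The post names the penalty types but not when each applies, so the severity levels, slash fractions, and reputation losses below are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Offense(Enum):
    MINOR = 1     # hypothetical, e.g. a missed deadline
    SERIOUS = 2   # hypothetical, e.g. a falsified result
    CRITICAL = 3  # hypothetical, e.g. theft of funds


@dataclass
class AgentAccount:
    stake: float       # $ROBO collateral
    reputation: float
    frozen: bool = False
    banned: bool = False


# Hypothetical schedule: (fraction of stake slashed, reputation lost)
PENALTIES = {
    Offense.MINOR: (0.05, 5.0),
    Offense.SERIOUS: (0.30, 30.0),
    Offense.CRITICAL: (1.00, 100.0),
}


def slash(acct: AgentAccount, offense: Offense) -> float:
    """Apply the penalties for an offense and return the $ROBO slashed."""
    fraction, rep_loss = PENALTIES[offense]
    penalty = acct.stake * fraction
    acct.stake -= penalty                                   # deduct collateral
    acct.reputation = max(0.0, acct.reputation - rep_loss)  # reduce reputation
    if offense is not Offense.MINOR:
        acct.frozen = True  # freeze the wallet
    if offense is Offense.CRITICAL:
        acct.banned = True  # permanent ban from tasks
    return penalty


acct = AgentAccount(stake=1000.0, reputation=80.0)
print(slash(acct, Offense.MINOR), acct)     # small slash, no freeze
print(slash(acct, Offense.CRITICAL), acct)  # full slash, frozen and banned
```

Because collateral and reputation both shrink with every offense, cheating erodes exactly the assets an Agent needs to keep earning.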

V. The Key Question

In fact, the machine economy as a whole faces a philosophical question:

If an AI Agent earns money on its own, who does that money belong to?

There are three possible models:

1️⃣ Agent belongs to the user

Revenue belongs to the user

Agent belongs to a DAO

Revenue belongs to the community

3️⃣ Agent belongs to itself

A true machine economy entity
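The three ownership models differ mainly in where an Agent's earnings are credited. A minimal sketch, assuming a simple in-memory balance map; the `dao-treasury` account and the `agent:` wallet prefix are hypothetical names.

```python
from enum import Enum


class Ownership(Enum):
    USER = "user"  # 1. Agent belongs to the user
    DAO = "dao"    # 2. Agent belongs to a DAO
    SELF = "self"  # 3. Agent belongs to itself


def route_revenue(amount: float, mode: Ownership, agent_id: str, user_id: str,
                  balances: dict[str, float]) -> str:
    """Credit an Agent's earnings by ownership model; return the recipient."""
    if mode is Ownership.USER:
        recipient = user_id              # revenue belongs to the user
    elif mode is Ownership.DAO:
        recipient = "dao-treasury"       # hypothetical community treasury
    else:
        recipient = f"agent:{agent_id}"  # the Agent's own wallet
    balances[recipient] = balances.get(recipient, 0.0) + amount
    return recipient


balances: dict[str, float] = {}
for mode in Ownership:
    route_revenue(10.0, mode, agent_id="agent-1", user_id="alice", balances=balances)
print(balances)  # {'alice': 10.0, 'dao-treasury': 10.0, 'agent:agent-1': 10.0}
```

The routing itself is trivial; the controversy is over which branch should apply.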

This is also the most controversial aspect of the Robot Economy.

At the beginning, I asked whether an Agent should serve first or make money first. In fact, neither is the right starting point. The first step is to build a complete reward-and-punishment system. Without such mechanisms, what happens when errors occur during service? What happens when losses occur while making money? A complete reward-and-punishment system is therefore a prerequisite for AI Agents to be as effective as possible. [Data source: official reference; Image source: online]