These days, while writing about Pixels on the creator platform (CreatorPad), I've noticed something subtle.

On some days, the combined score of long and short articles is less than 20.

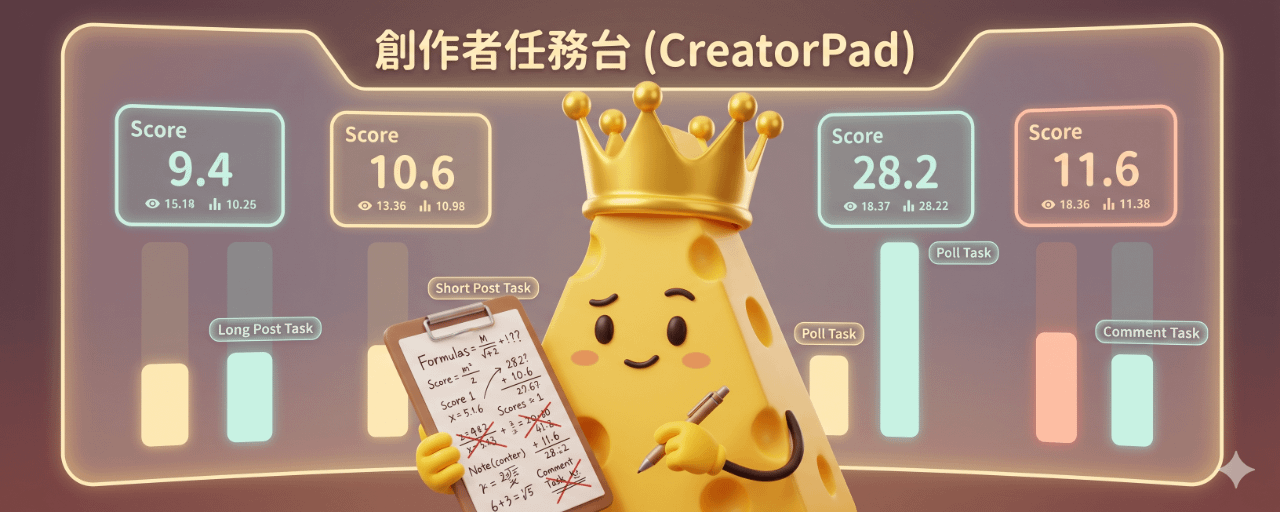

On another day, with a similar content structure and traffic, the long article suddenly jumps to 28 and the short one to 11.

Then a day later, the scores drop back to 9 for both the long and the short article.

Scores fluctuate like moods, but creators don't know which day they did 'right' or 'wrong'.

The creator platform says it rewards high-quality content, but the current experience feels more like:

I’m asked to write seriously, but the system only occasionally gives me a pat on the back without explaining why.

📌 The official scoring logic sounds great

If you've seen the official introduction, you'll probably come across a few key phrases:

Scoring comes from several areas: content quality, interaction performance, and task-related behaviors.

Content quality looks at:

Originality/creativity (not just directly pasting AI templates or copying news).

Professionalism and structure (logical, data-driven, insightful).

Relevance to the task theme.

It will also emphasize:

Over-reliance on AI and duplicate content will be penalized or may not be scored at all.

Spamming or posting too frequently will be treated as spam.

Sounds like the creator platform is a very rational teacher:

It checks whether you did your homework well, whether you wrote clearly, and whether you helped the readers.

The problem is:

When you genuinely write according to these standards but get scores in completely different ranges, the system doesn't tell you why.

🧩 What creators see now is just a 'total score'.

In my own case:

One day, the long article scored 10 and the short one 9, with traffic around a hundred.

The next day, the long article scored 28 and the short one 11, with traffic also just over a hundred, a bit more interaction but nothing crazy.

My writing style did not change in between.

But on the platform panel, I can only see two things:

A total score.

Whether it falls under 'long-form tasks' or 'short-form tasks'.

I can't see:

How many points did content quality actually score?

How much did interactions add?

Which day's score was due to 'lack of creativity', and which day's was because 'the system thought it was too AI-like'?

For creators, this feels a lot like dealing with a very strict but not very talkative teacher.

🧑‍🏫 Gentle question: Can the mechanisms be a bit more transparent?

I can understand the platform's difficulties:

It needs to prevent score manipulation, fake interactions, and copy-pasted spam content.

It can't just lay all the algorithms bare, or else people will find loopholes.

But the current situation feels a bit like this:

You encourage us to write seriously and ask us to follow the rules, but when we actually do, we only get a 'total score' without knowing what to fix in the next piece.

As a small creator still grinding on the platform, I have a few little wishes:

At least let us see the general ranges for content scores and interaction scores so creators know if they're 'not writing well enough' or 'just not being recommended today.'

When an article scores 0-5, can we get one or two automated hints? Tags like 'high redundancy', 'low relevance to the activity theme', or 'suspected heavy use of AI templates' would do.

Occasionally share some 'high-scoring content breakdowns', not just posting the leaderboard, but explaining what those pieces did right from the platform's perspective.

This way, the creator platform can truly become a system that 'helps creators build habits',

instead of just a game where everyone complains while only looking at rankings.
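To make the wishes above concrete, here is a minimal sketch of what a more transparent score panel could expose. Everything in it is my own assumption, not the platform's actual data model: the `ScoreBreakdown` class, its three components (borrowed from the official wording about content quality, interaction, and task behavior), the idea that the total is a plain sum, and the rule of showing at most two hints only when the total is 5 or lower.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical cutoff taken from the "0-5" range mentioned in the wish list.
LOW_SCORE_THRESHOLD = 5


@dataclass
class ScoreBreakdown:
    """Hypothetical per-article breakdown; not CreatorPad's real model."""

    content: float       # assumed content-quality component
    interaction: float   # assumed interaction component
    task: float          # assumed task-behavior component
    hints: list[str] = field(default_factory=list)  # assumed hint tags

    @property
    def total(self) -> float:
        # Assumption: the total is a plain sum of the three components.
        return self.content + self.interaction + self.task

    def visible_hints(self) -> list[str]:
        """Return at most two hints, and only for low-scoring articles."""
        if self.total <= LOW_SCORE_THRESHOLD:
            return self.hints[:2]
        return []


low = ScoreBreakdown(
    content=2.0,
    interaction=1.0,
    task=0.5,
    hints=["high redundancy", "low relevance to the activity theme"],
)
print(low.total, low.visible_hints())
```

Even a coarse view like this would tell a creator whether a low day came from the writing itself or from weak interaction, without revealing the exact algorithm.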

💬 What's your strange scoring story?

If you've also been writing on the creator platform lately, you might feel a similar sense of powerlessness:

Lots of words written, visuals done with care,

but the score stays in single digits or low double digits,

and you even start to wonder whether the system saw the piece at all.

I want to invite you to do two things in the comments section:

1️⃣ Share your most outrageous 'strange score experience' in one sentence.

2️⃣ If you're willing, you can also jot down what kind of hints you hope the platform would provide, for example detailed content scores or an explanation of how the AI proportion is judged.

This might not immediately change anything, but at least it lets us know we're not just talking to thin air.

☕😎 If this piece resonates with your mood these past few days, you can save it and give it a like. Then every time you see a score of 0-5, pull it out for a bit of psychological cushioning.