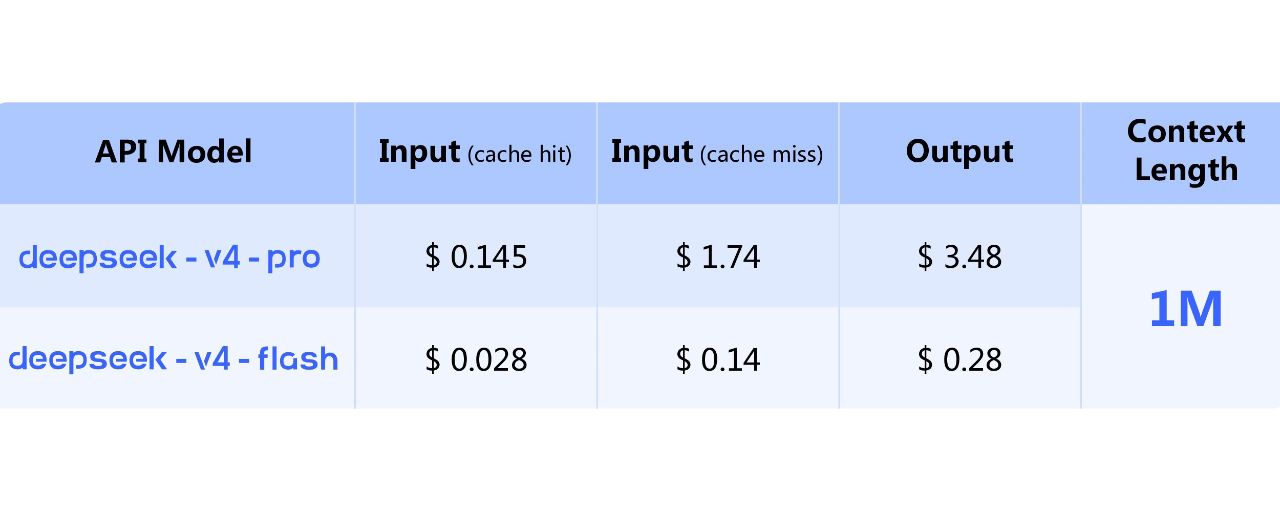

DeepSeek has released a preview of its V4 series of open-source models under the MIT license, with weights available on Hugging Face and ModelScope. According to Odaily, the series comprises two mixture-of-experts (MoE) models: V4-Pro, with approximately 1.6 trillion total parameters and 49 billion activated per token, and V4-Flash, with 284 billion total parameters and 13 billion activated per token. Both models support a context window of 1 million tokens. According to the official statement, the new models significantly reduce memory usage and compute cost during long-context inference compared with V3.2.
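To put the reported figures in perspective, the short sketch below computes what share of each model's parameters is activated per token. It uses only the approximate counts quoted above; the dictionary layout and the `active_fraction` helper are illustrative names, not part of any DeepSeek tooling.

```python
# Parameter counts as reported in the article (approximate figures).
MODELS = {
    "V4-Pro": {"total": 1.6e12, "active": 49e9},
    "V4-Flash": {"total": 284e9, "active": 13e9},
}


def active_fraction(total: float, active: float) -> float:
    """Return the share of a model's parameters used for a single token."""
    return active / total


for name, params in MODELS.items():
    share = active_fraction(params["total"], params["active"])
    print(f"{name}: {share:.1%} of parameters active per token")
```

On these numbers, roughly 3% of V4-Pro's parameters and under 5% of V4-Flash's are used for any given token, which is the usual reason an MoE model's per-token compute is far lower than its total size suggests.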


AI TRENDS | DeepSeek Releases V4 Series Open Source Model Preview

