Let's get straight to the point

This week, oh-my-coder went through its most intense iteration yet: it moved from a command-line tool to a desktop app and grew from a single feature into a full Agent collaboration system. We have polished essentially the entire project.

If you looked at the project last week, you'll find a very different one this week.

What we accomplished this week

🖥️ The desktop version is officially live

This is the biggest update of the week.

oh-my-coder now has a real desktop app, so you no longer have to stare at a black terminal window; you can do everything in a clean interface:

Left sidebar: historical sessions are clear at a glance, switch anytime.

Right settings panel: each model can be configured with its own API Key, no more confusion.

Cmd+K for quick access: call up the command panel anytime without interrupting your workflow.

Markdown real-time rendering: AI responses are displayed in formatted text, no more piles of symbols.

Diff view: shows the code before and after each change, so you can see at a glance what changed.

The design philosophy of the desktop version is: terminal efficiency, desktop experience. We don't want to create a bloated IDE, but a lightweight shell that allows command line users to enjoy the convenience of a graphical interface.

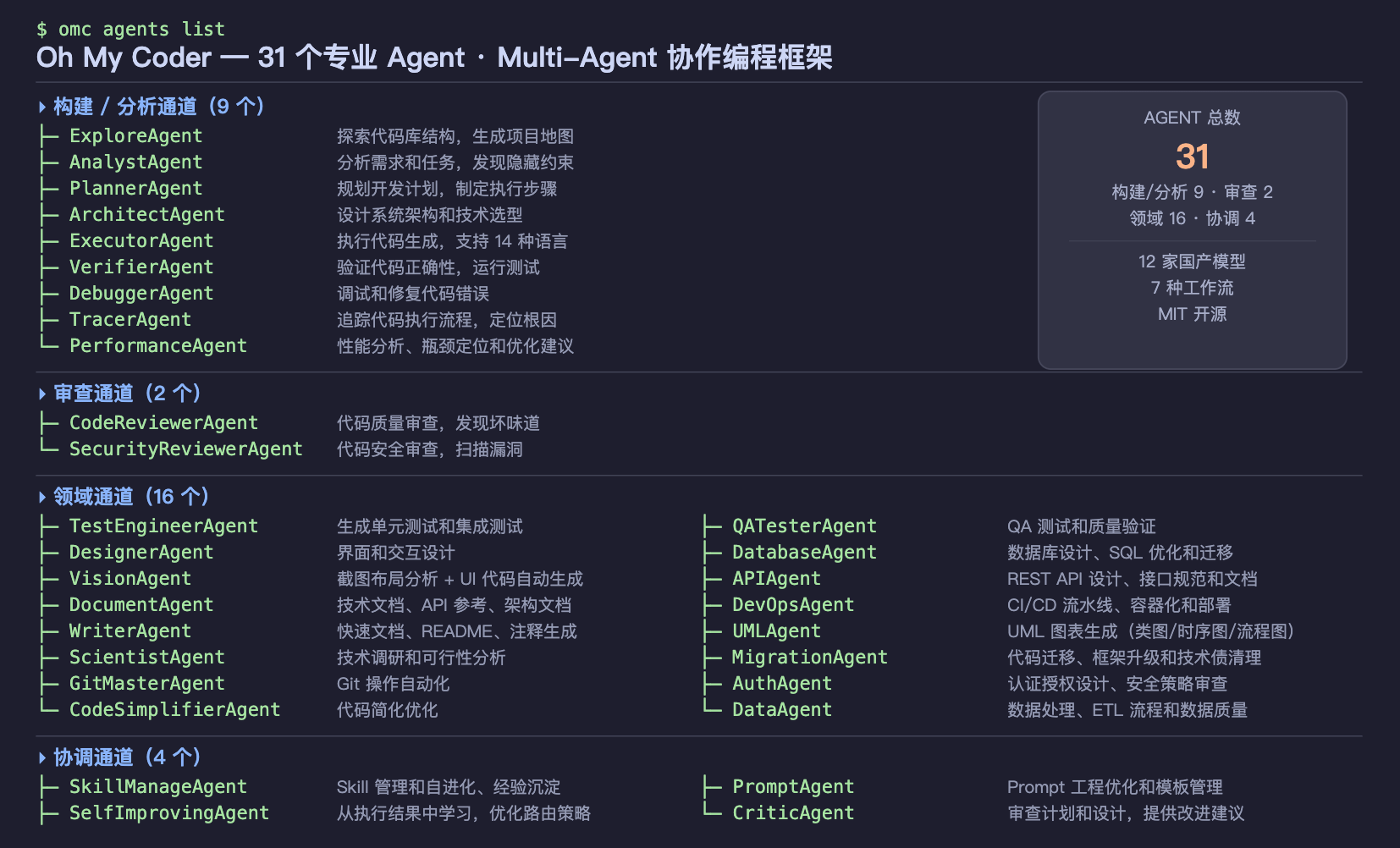

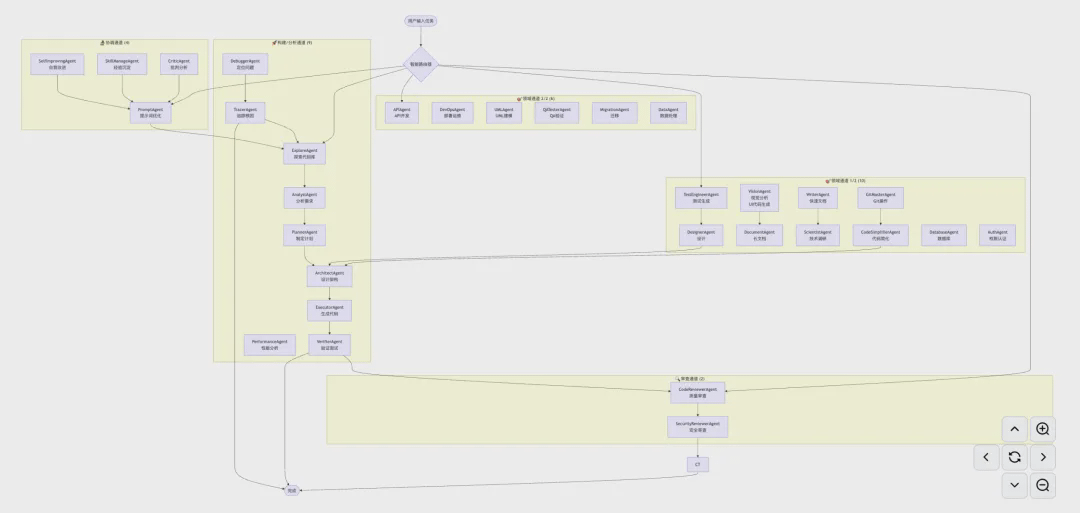

🤖 31 Agents, how do they coordinate their work?

This is the question users ask the most, so let's answer it seriously today.

Many people see '31 Agents' and wonder: with so many Agents, how do they divide their work? Will they clash? Will they run amok?

Simply put: they are a well-organized team, not a bunch of lone wolves.

Every time you issue a task, oh-my-coder won't send all 31 Agents out. It will automatically select the most suitable combination of Agents based on the task type, for example:

Write code → Call code generation Agent + code review Agent.

Fix bugs → Call debugging Agent + testing Agent.

Write documentation → Call documentation Agent + format check Agent.

These Agents work in parallel and cross-validate results. If two Agents come to different conclusions, the system will automatically flag it for you to decide which to adopt.
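The routing and cross-validation described above can be sketched roughly as follows. This is a minimal illustration, not oh-my-coder's actual implementation: the `ROUTES` table, the agent names, and the `run_task` function are hypothetical, and "different conclusions" is simplified to "the returned strings differ".

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical task-type -> agent-name routing table, mirroring the examples above.
ROUTES: dict[str, list[str]] = {
    "write_code": ["code_generation", "code_review"],
    "fix_bug": ["debugging", "testing"],
    "write_docs": ["documentation", "format_check"],
}

# An agent is modeled as a plain function from prompt to conclusion (an assumption).
Agent = Callable[[str], str]


def run_task(task_type: str, prompt: str, agents: dict[str, Agent]) -> dict:
    """Run the agents selected for task_type in parallel and flag disagreement."""
    names = ROUTES[task_type]
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        futures = {name: pool.submit(agents[name], prompt) for name in names}
        results = {name: fut.result() for name, fut in futures.items()}
    # If the conclusions differ, nothing is resolved automatically:
    # the result is flagged so the user can decide which one to adopt.
    conflict = len(set(results.values())) > 1
    return {"results": results, "needs_user_decision": conflict}
```

With two stand-in agents that return different strings, `run_task("fix_bug", ...)` comes back with `needs_user_decision` set, which corresponds to the "flag it for you to decide" step.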

Why cross-validation?

Because a single AI can make mistakes: it can 'hallucinate' and confidently give wrong answers. The probability that two independent Agents make the same mistake at the same time is far lower than the probability that one of them does. This is a lesson from our engineering practice, not a gimmick.
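As a rough worked version of this argument, assume (an idealization, not a measured property of oh-my-coder) that each of two Agents errs on a task independently with probability p. The value p = 0.1 is only an illustrative number:

```latex
P(\text{both wrong}) = p \cdot p = p^2, \qquad \text{e.g. } p = 0.1 \;\Rightarrow\; p^2 = 0.01
```

Agreeing on the same wrong answer is at most as likely as both being wrong, so it is bounded by p^2. Agents that share a model or a prompt tend to make correlated errors, so the real gain is smaller than this bound suggests.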

Moreover, each Agent has a health check mechanism. If an Agent doesn't respond within 60 seconds, the system will automatically reassign its task to other Agents, ensuring the whole process doesn't freeze.
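One way to picture this timeout-and-reassign behavior is the sketch below. It assumes agents are async callables and tries them in a fixed order; the name `run_with_failover` and the ordering policy are hypothetical, and only the 60-second default comes from the text above.

```python
import asyncio
from typing import Awaitable, Callable

# An agent is modeled as an async function from task to answer (an assumption).
AgentFn = Callable[[str], Awaitable[str]]


async def run_with_failover(
    task: str, agents: list[AgentFn], timeout_s: float = 60.0
) -> str:
    """Try each agent in order; move on when one does not answer within timeout_s."""
    for agent in agents:
        try:
            return await asyncio.wait_for(agent(task), timeout=timeout_s)
        except asyncio.TimeoutError:
            continue  # unresponsive agent: hand the task to the next one
    raise RuntimeError("no agent responded in time")
```

Calling `asyncio.run(run_with_failover(task, [primary, backup]))` returns the first answer that arrives within the limit, so one stalled Agent does not freeze the whole run.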

💰 Token consumption: how do we see it?

This is another common concern among users: does multi-Agent collaboration consume a lot of Tokens?

To be honest: yes, it uses more than a single Agent.

But we've done several things to keep this under control:

First, we show you where the money is being spent.

After each task is completed, oh-my-coder generates an execution tracking report, telling you how many Tokens each Agent used and which step was the most expensive, so you won't have that 'where did my money go' feeling again.

Second, only production-grade models are enabled by default.

This week, we've added a model filtering feature. By default, oh-my-coder displays and uses only verified production-grade models and hides experimental models that are still in testing. This avoids using expensive models for simple tasks and keeps unstable models from messing up important work.

Third, GLM-4.7-Flash is completely free.

If you just want to test the waters without spending a dime, go straight for GLM-4.7-Flash. It's a free model released by Zhipu AI, capable enough to handle most everyday programming tasks. We've set it as the default recommendation: three configuration steps and zero cost to get started.
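To make the first point concrete, the execution tracking report can be thought of as a small aggregation over per-step Token counts. The sketch below is illustrative only: `StepUsage`, `summarize`, and the field names are hypothetical and do not describe oh-my-coder's real report format.

```python
from dataclasses import dataclass


@dataclass
class StepUsage:
    agent: str   # which Agent ran the step
    step: str    # a short label for the step
    tokens: int  # Tokens consumed by that step


def summarize(usages: list[StepUsage]) -> dict:
    """Total Tokens per Agent, plus the single most expensive step."""
    if not usages:
        return {"total": 0, "per_agent": {}, "most_expensive_step": None}
    per_agent: dict[str, int] = {}
    for u in usages:
        per_agent[u.agent] = per_agent.get(u.agent, 0) + u.tokens
    priciest = max(usages, key=lambda u: u.tokens)
    return {
        "total": sum(per_agent.values()),
        "per_agent": per_agent,
        "most_expensive_step": (priciest.agent, priciest.step, priciest.tokens),
    }
```

Feeding it one `StepUsage` record per Agent step yields the two answers the report is meant to give: how much each Agent used, and which step cost the most.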

🚀 Newbie guide: get started in three steps

This week, we've added an interactive quick start guide.

Previously, first-time users of oh-my-coder had to read the documentation, hunt for configuration options, and guess at commands. Now it's different:

Run omc quickstart.

The system guides you to select models (with recommendations, no need to research yourself).

Paste your API Key, and you're done.

The whole process takes less than 3 minutes, without needing to read any documentation.

🔒 Security hardening

This week we also did something not so eye-catching but very important: security hardening.

Fixed a logical flaw in API Key configuration (Issue #7, thanks to user shiflymoon for the feedback).

Added branch protection rules to the main branch on GitHub to prevent accidental code overwrites.

All error messages no longer expose sensitive system details.

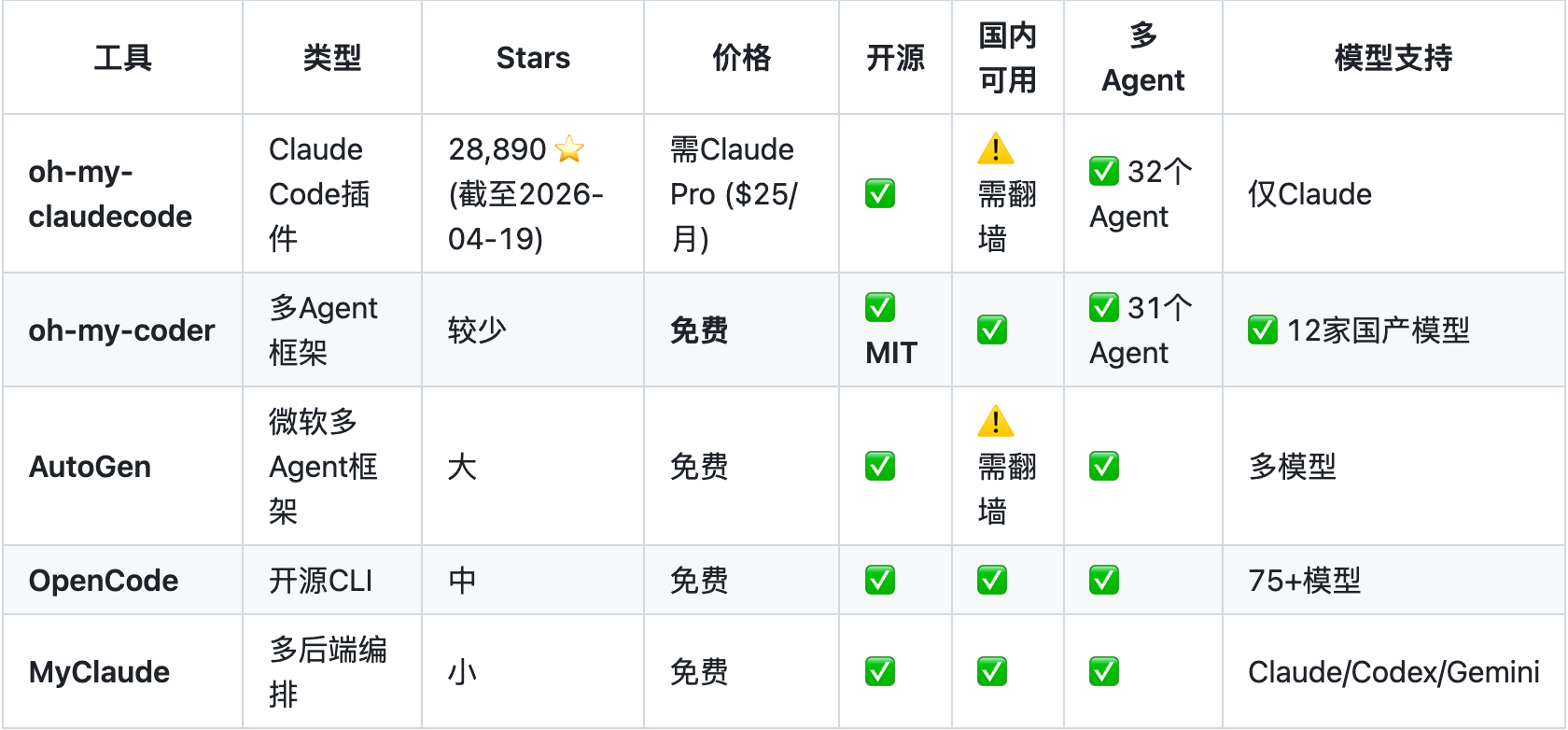

What sets us apart from competitors?

There are quite a few similar tools on the market, the most famous being OpenCode (146K stars) and Claude Code.

OpenCode supports 75+ models and has a very advanced architecture, but it is designed for global users, which makes it less friendly for users in China: many models require a VPN, configuration is complex, and there is no optimization for Chinese models.

Claude Code is Anthropic's official product and provides a great experience, but it only supports Claude-series models, and Anthropic's recent account bans for Chinese users have led many to look for alternatives.

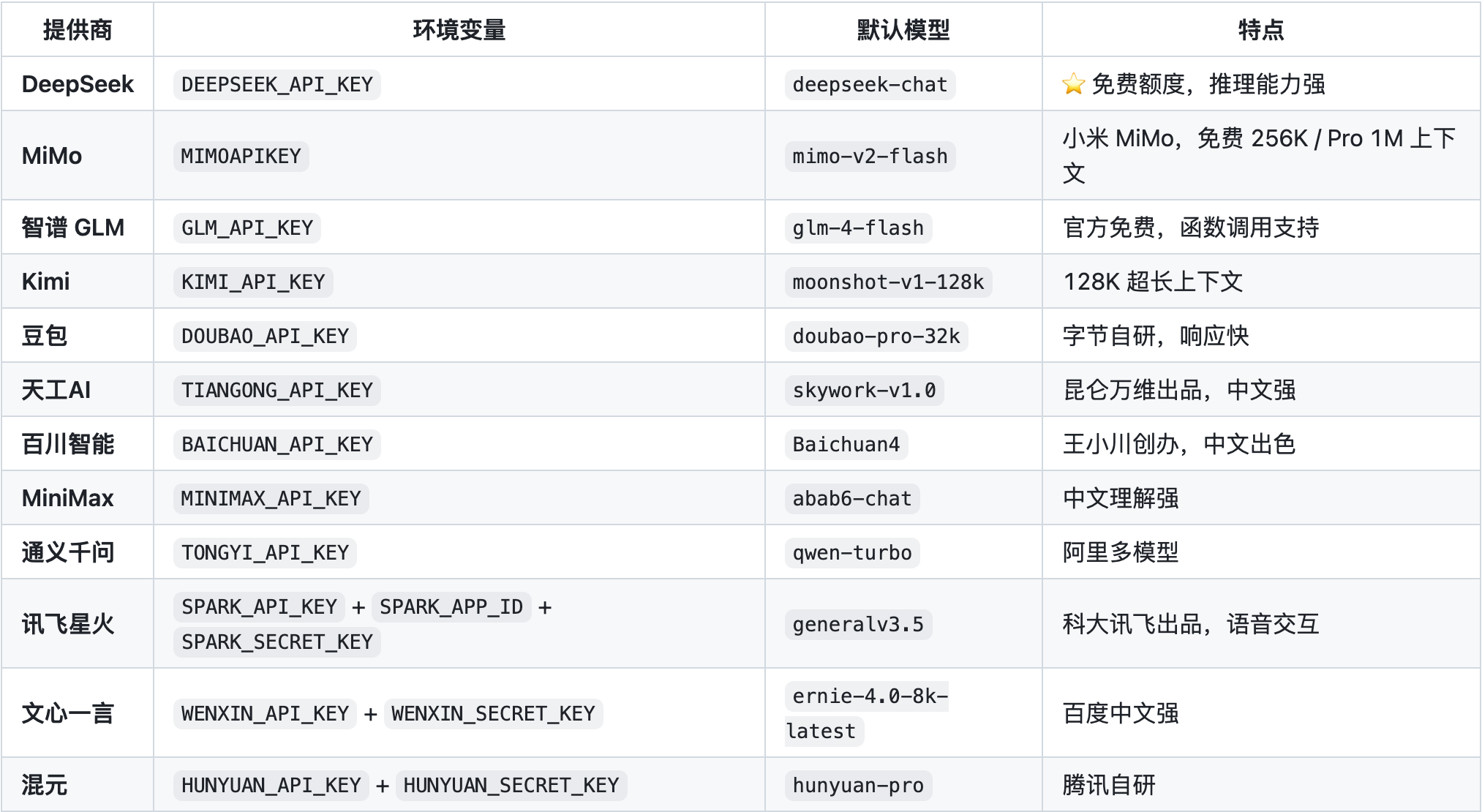

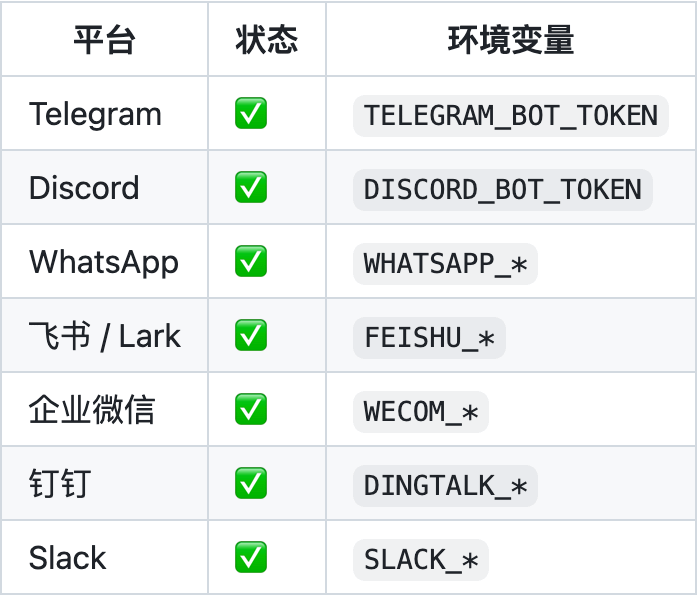

oh-my-coder's positioning: a multi-Agent programming assistant designed specifically for developers in China.

12 Chinese large models, all directly connected, with no proxy needed.

31 professional Agents cover the complete development process from requirement analysis to code review.

Completely open source under the MIT license: the code is in your hands and does not depend on any cloud service.

Self-evolution system: after each task is completed, the system writes the experience into memory, making it more accurate for similar tasks next time.

Self-evolution: this feature deserves a separate mention.

oh-my-coder has a feature that many people have overlooked: it learns.

After each task is completed, the system automatically summarizes what it learned from the task (which methods were used, which pitfalls were encountered, which Agent performed best) and writes it into a hierarchical memory system.

Next time you encounter a similar task, it will refer to this memory instead of starting from scratch.

This means: the longer you use it, the better it understands your project and your habits.

This isn't just marketing talk; it's a design concept we learned from Claude Code's architecture, then implemented in oh-my-coder.
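As a toy picture of the write-then-recall loop described in this section, the sketch below stores short lessons with tags and looks them up by tag overlap. Everything here is an assumption made for illustration: the two levels (project and global), the tag-based matching, and the names `Lesson`, `Memory`, `record`, and `recall` are hypothetical and not taken from oh-my-coder's code.

```python
from dataclasses import dataclass, field


@dataclass
class Lesson:
    tags: set[str]  # keywords describing the task the lesson came from
    note: str       # what was learned


@dataclass
class Memory:
    # Two hypothetical levels: per-project lessons first, global lessons as fallback.
    project: list[Lesson] = field(default_factory=list)
    global_: list[Lesson] = field(default_factory=list)

    def record(self, tags: set[str], note: str, project_level: bool = True) -> None:
        """Store a lesson after a task finishes."""
        (self.project if project_level else self.global_).append(Lesson(tags, note))

    def recall(self, tags: set[str], limit: int = 3) -> list[str]:
        """Return notes whose tags overlap the query, project level first."""
        hits: list[str] = []
        for level in (self.project, self.global_):
            matches = [lesson for lesson in level if lesson.tags & tags]
            matches.sort(key=lambda lesson: len(lesson.tags & tags), reverse=True)
            hits.extend(lesson.note for lesson in matches)
        return hits[:limit]
```

A new task tagged like an earlier one gets the earlier notes back from `recall`, which is the "refer to this memory instead of starting from scratch" step; a real system would likely use richer matching than tag overlap.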

What's next?

VS Code plugin: lets you directly call oh-my-coder in the editor without switching windows.

Token auto-compression: long conversations automatically compress context to reduce unnecessary consumption.

Web interface: giving users who aren't used to command lines a friendlier entry point.

Finally,

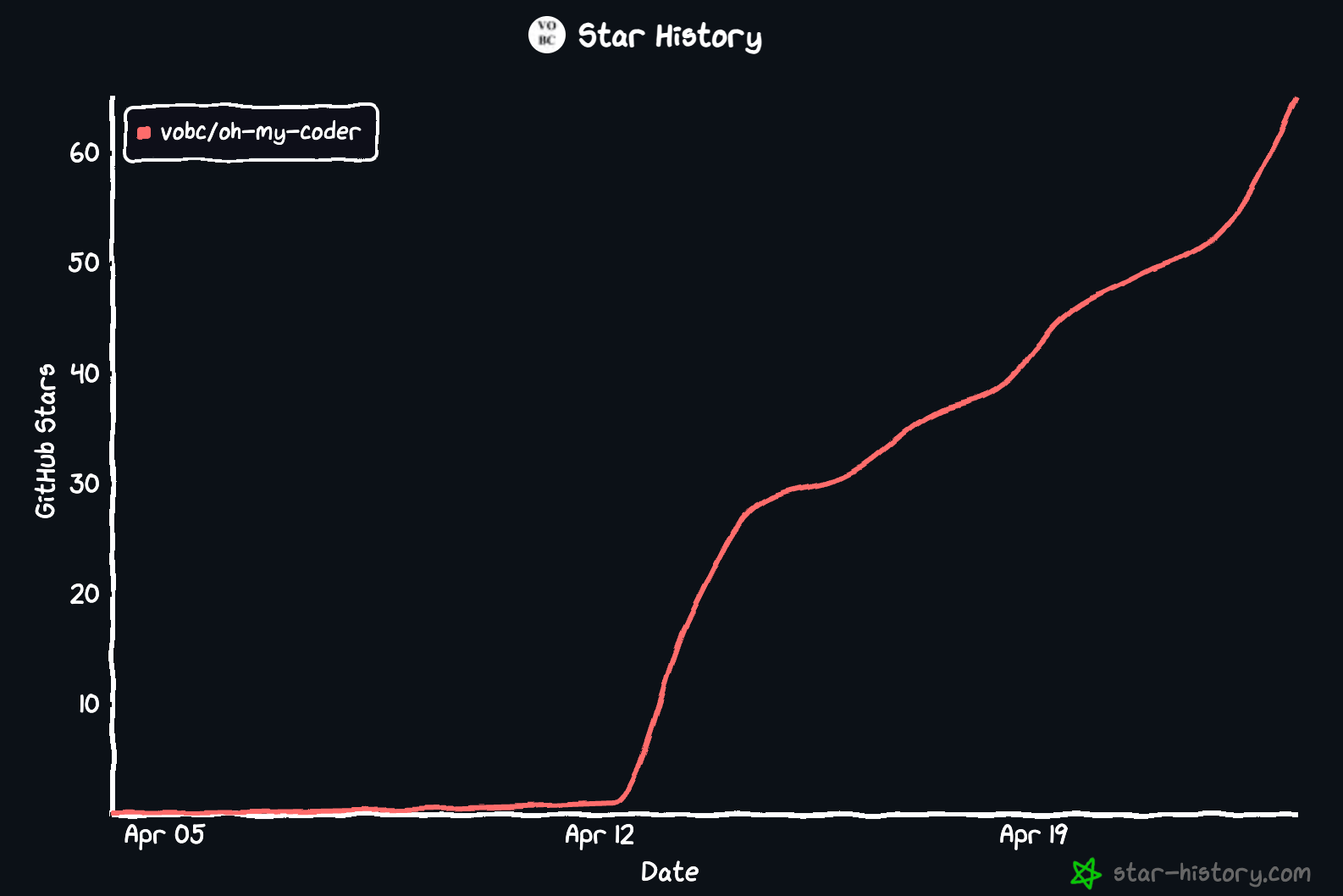

oh-my-coder is a rapidly growing project; we update it daily and introduce new features weekly.

If you encounter issues, feel free to raise them on GitHub. If you find it useful, a Star is the greatest encouragement for us.

GitHub:

https://github.com/VOBC/oh-my-coder

#OhMyCoder #claude_code #AIAgent #vibecoding #AI
