A lot of folks sizing up a token project start with supply: how much is issued, how it's distributed, and the release schedule. That's all useful, but if you only look at that layer, you can miss the more important variables.

If we stretch out the timeline a bit, what often determines long-term value isn't the token itself, but the capabilities that accumulate around it. Some projects accumulate users, others build out traffic channels, and $PIXEL leans more towards data.

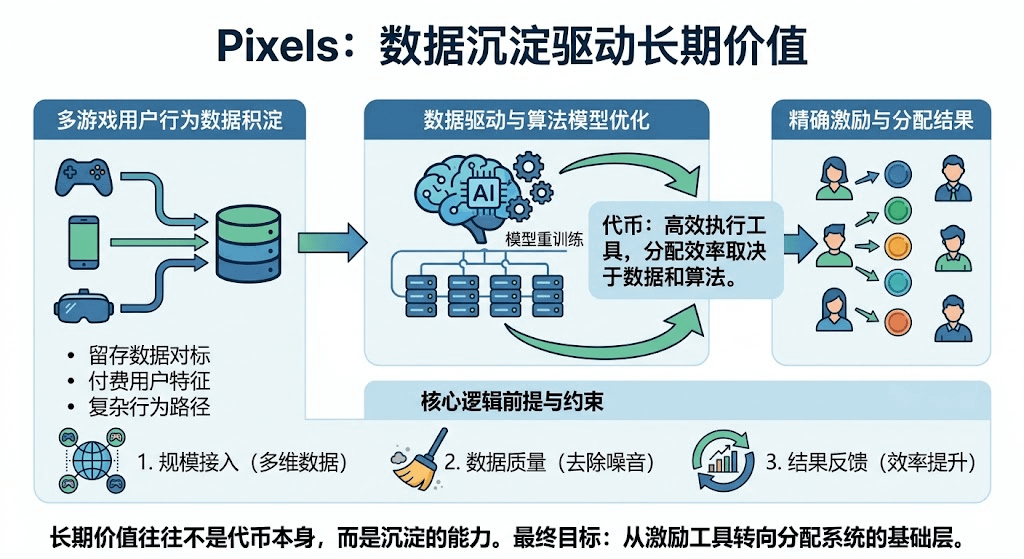

The core mechanism is pretty straightforward: the platform tracks user behavior across different games and then uses that data to fine-tune reward distributions. The whitepaper keeps stressing that it will optimize incentives based on retention, spending, and behavioral paths, and will continuously retrain the model.
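To make the idea concrete, here is a minimal sketch of behavior-weighted reward distribution. It is not the project's actual model: the field names (`retention_7d`, `spend_ratio`), the weights, and the linear scoring rule are all assumptions chosen for illustration. It simply splits a fixed reward budget in proportion to a score built from retention and spending.

```python
from dataclasses import dataclass


@dataclass
class PlayerStats:
    # All fields are hypothetical; the whitepaper does not specify a schema.
    player_id: str
    retention_7d: float  # share of the last 7 days the player was active, 0..1
    spend_ratio: float   # in-game spending relative to rewards received


def allocate_rewards(players, budget, w_retention=0.6, w_spend=0.4):
    """Split `budget` across players in proportion to a behavior score.

    The weights are illustrative assumptions, not values from the project.
    """
    scores = {
        p.player_id: w_retention * p.retention_7d
        + w_spend * min(p.spend_ratio, 1.0)  # cap so heavy spenders cannot dominate
        for p in players
    }
    total = sum(scores.values())
    if total == 0:
        # Nobody has a positive score, so nothing is paid out.
        return {pid: 0.0 for pid in scores}
    return {pid: budget * score / total for pid, score in scores.items()}
```

In a real system the hand-set weights would presumably be replaced by a model that is retrained as new data arrives, which is the part the whitepaper emphasizes.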

The key issue is whether this data can accumulate and become more useful over time.

The data value from a single game is limited; only after onboarding multiple games does comparison and structure begin to emerge. Differences in retention across gameplay styles, the impact of different incentives on behavior, and which users are more likely to convert will gradually crystallize. Once the data dimensions widen, the distribution logic will start to shift.

Rewards are increasingly determined by model judgments. Tokens are still circulating, but they're more like execution tools, with efficiency hinging on data and algorithms.

This path is somewhat similar to ad systems. Early on, distribution relies on experience; later it becomes data-driven and automated. Whoever has more complete data and a more accurate model will have lower distribution costs.

However, this logic rests on a couple of premises.

First is scale. If not enough games are onboarded, the data dimensions are too narrow to support cross-game comparison, making it tough for the model to gain an edge.

Second is quality. If the data is filled with short-term behaviors or noise, the model can be misled.

Data only starts to generate value when it's sufficiently abundant and relatively clean.
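The two conditions can be sketched as a simple readiness check. Everything here is an assumption for illustration: the session format, the rule that very short sessions count as noise, and every threshold. The point is only that scale (enough games, enough sessions) and quality (noise filtered out first) have to hold together.

```python
def usable_sessions(sessions, min_seconds=60):
    """Drop very short sessions, treated here as noise (an assumed rule)."""
    return [s for s in sessions if s["seconds"] >= min_seconds]


def data_is_ready(sessions, min_games=3, min_sessions=1000, min_seconds=60):
    """Return True only if the cleaned data is both broad and abundant.

    Thresholds are hypothetical defaults, not figures from the project.
    """
    clean = usable_sessions(sessions, min_seconds)
    games = {s["game"] for s in clean}
    return len(games) >= min_games and len(clean) >= min_sessions
```

Note that filtering happens before counting, so a flood of noisy sessions from a new game does not make the dataset look broader than it is.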

There's also a more direct constraint: results. If distribution efficiency doesn't improve significantly, game teams may lack the motivation to onboard, and data growth will slow.

So, shifting the perspective from tokens to the platform is a reasonable direction, but the conclusion is still up in the air.

The token shapes how rewards are distributed, while the platform shapes how efficient that distribution is. The former can be designed, while the latter relies on operational outcomes.

If the data later reaches scale and forms a feedback loop, $PIXEL's position will gradually shift from an incentive tool to the foundational layer of the distribution system.

At this stage, it feels more like the structure is set up, but capabilities are still being validated. #pixel #广场征文