Nothing brings human oversight back faster than a correct result you still have to second-guess before you can act on it. I keep seeing this pattern in AI deployments: the model is right, the verification dashboard is green, and the team still waits before acting.

This is the seam @Mira - Trust Layer of AI is working on. The project is worth attention not for what its verification proves, but for where verified output goes next.

Why Blob Trust Still Runs Most AI Systems

Most AI products run on blob trust. A model hands you a paragraph, and your only choice is to accept or reject the whole thing. If one sentence is wrong, you throw out the entire output. Mira works differently. Its whitepaper describes decomposing output into claims that can be verified independently, routing those claims to a diverse set of verifier models, and finalizing the results through distributed consensus and cryptographic attestation.

Claim decomposition gives you granular error isolation: a single faulty claim does not contaminate the entire output. The codebase and developer tools are public, including an API compatible with standard interfaces.
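To make claim-level checking concrete, here is a minimal Python sketch of the decompose, verify, attest flow. It is not Mira's implementation or API: splitting on sentence boundaries, the 2/3 quorum, the toy `strict`/`lenient` verifiers, and using a SHA-256 digest in place of a signed attestation are all assumptions for illustration.

```python
import hashlib
import re
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable

# Hypothetical verifier interface: returns True if the model judges the claim correct.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool
    attestation: str  # SHA-256 digest standing in for a signed attestation


def decompose(output: str) -> list[str]:
    """Naive decomposition: one claim per sentence (an assumption, not Mira's method)."""
    parts = re.split(r"(?<=[.!?])\s+", output.strip())
    return [p for p in parts if p]


def verify_claims(output: str, verifiers: list[Verifier],
                  quorum: Fraction = Fraction(2, 3)) -> list[ClaimResult]:
    """Check each claim independently and accept it only if a quorum agrees."""
    results = []
    for claim in decompose(output):
        votes_for = sum(v(claim) for v in verifiers)
        accepted = Fraction(votes_for, len(verifiers)) >= quorum
        # Hash the claim and its tally as a placeholder for a real attestation.
        record = f"{claim}|{votes_for}/{len(verifiers)}|{accepted}"
        digest = hashlib.sha256(record.encode()).hexdigest()
        results.append(ClaimResult(claim, votes_for, len(verifiers), accepted, digest))
    return results


# Toy verifiers: two reject a known-false claim, one accepts everything.
KNOWN_FALSE = {"The Moon is made of cheese."}


def strict(claim: str) -> bool:
    return claim not in KNOWN_FALSE


def lenient(claim: str) -> bool:
    return True


if __name__ == "__main__":
    text = "Water boils at 100 C at sea level. The Moon is made of cheese. Paris is in France."
    for r in verify_claims(text, [strict, strict, lenient]):
        print(r.accepted, r.votes_for, r.claim)
```

With these toy verifiers, the false claim collects one vote out of three and is rejected on its own, while the other two claims are accepted. That is granular error isolation in miniature: one bad sentence no longer sinks the whole output.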

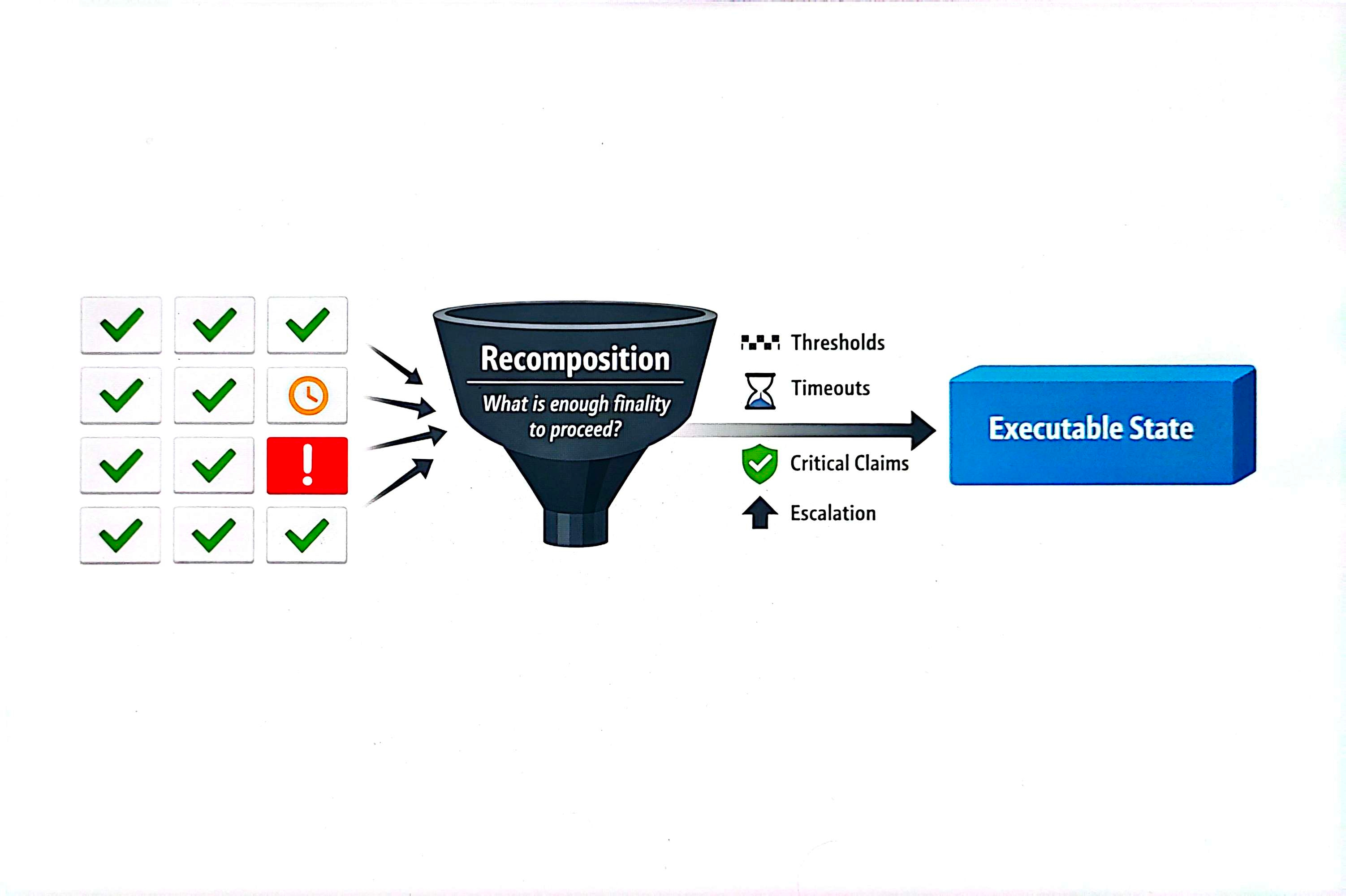

Decomposition Is Clean but Recomposition Is the Real Challenge

This is the part most verification stories skip. Splitting output into claims buys precision, but execution does not happen at the claim level. Workflows need closure. Say most of seven claims are accepted, one is pending, and one is disputed. Operations then face a harder question: when is there enough finality to act?

A weaker protocol buries that uncertainty inside a confidence score. Mira makes the seam visible. "Mostly verified" and "safe to execute" are not the same thing, and recognizing that is where serious system design begins.
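The gap between "mostly verified" and "safe to execute" is easy to show in code. The sketch below is a hypothetical recomposition policy, not anything from Mira's specification: the status names, the `required` set of claims an action depends on, and the hold/block rules are my assumptions.

```python
from enum import Enum


class ClaimStatus(Enum):
    ACCEPTED = "accepted"
    PENDING = "pending"
    DISPUTED = "disputed"
    REJECTED = "rejected"


class Action(Enum):
    EXECUTE = "execute"
    HOLD = "hold"    # required claims still pending or disputed
    BLOCK = "block"  # a required claim was rejected


def share_verified(statuses: dict[str, ClaimStatus]) -> float:
    """The 'mostly verified' figure a confidence dashboard might report."""
    accepted = sum(s is ClaimStatus.ACCEPTED for s in statuses.values())
    return accepted / len(statuses)


def action_finality(statuses: dict[str, ClaimStatus], required: set[str]) -> Action:
    """Hypothetical recomposition policy: act only when every claim the action
    depends on is ACCEPTED; never infer finality from the overall share."""
    required_statuses = [statuses[c] for c in required]
    if any(s is ClaimStatus.REJECTED for s in required_statuses):
        return Action.BLOCK
    if any(s is not ClaimStatus.ACCEPTED for s in required_statuses):
        return Action.HOLD
    return Action.EXECUTE


if __name__ == "__main__":
    # Hypothetical bundle of seven claims: five accepted, one pending, one disputed.
    statuses = {f"c{i}": ClaimStatus.ACCEPTED for i in range(1, 6)}
    statuses["c6"] = ClaimStatus.PENDING
    statuses["c7"] = ClaimStatus.DISPUTED
    print(round(share_verified(statuses), 2))        # 0.71
    print(action_finality(statuses, set(statuses)))  # Action.HOLD
    print(action_finality(statuses, {"c1", "c2"}))   # Action.EXECUTE
```

The same bundle scores about 71% verified, yet the policy holds any action that depends on the pending or disputed claims and only executes one that depends solely on accepted claims. The confidence number alone cannot tell you which case you are in.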

Why Centralizing Action Finality Is the Red Flag

If AI reliability stays centralized, action finality stays hidden inside private policy. One company sets the thresholds. One API vendor decides what counts as acceptable risk. It looks smooth in demos, but it is neither trustless nor portable.

Mira offers an alternative: explicit semantics for incomplete bundles, challenged claims, and re-verification loops. The path from verified output to executable state can then be read at the network level. The word governance gets thrown around loosely in crypto, but here it applies concretely: who decides when verified output is final enough to act on?
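Re-verification loops can be sketched the same way. In this hypothetical version, a challenged claim goes to successive verifier panels until one reaches a quorum for or against it; if none does, it stays explicitly disputed instead of being folded into a score. The escalation rule, the panel structure, and the 2/3 quorum are assumptions, not documented Mira behavior.

```python
from fractions import Fraction
from typing import Callable

# Hypothetical verifier interface: returns True if the model judges the claim correct.
Verifier = Callable[[str], bool]


def reverify(claim: str, panels: list[list[Verifier]],
             quorum: Fraction = Fraction(2, 3)) -> tuple[str, int]:
    """Hypothetical re-verification loop for a challenged claim.

    Each round asks a fresh panel (assumed larger or more diverse than the last).
    A quorum for or against settles the claim; otherwise it escalates.
    Returns (final_status, rounds_used).
    """
    for round_no, panel in enumerate(panels, start=1):
        share = Fraction(sum(v(claim) for v in panel), len(panel))
        if share >= quorum:
            return "accepted", round_no
        if share <= 1 - quorum:
            return "rejected", round_no
    # No panel reached a quorum: the claim stays visibly disputed.
    return "disputed", len(panels)


def yes(claim: str) -> bool:
    return True


def no(claim: str) -> bool:
    return False


if __name__ == "__main__":
    split_panel = [yes, yes, no, no]  # 1/2: no quorum either way
    wider_panel = [yes] * 5 + [no]    # 5/6: clears the 2/3 quorum
    print(reverify("Claim X", [split_panel, wider_panel]))  # ('accepted', 2)
    print(reverify("Claim X", [split_panel, split_panel]))  # ('disputed', 2)
```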

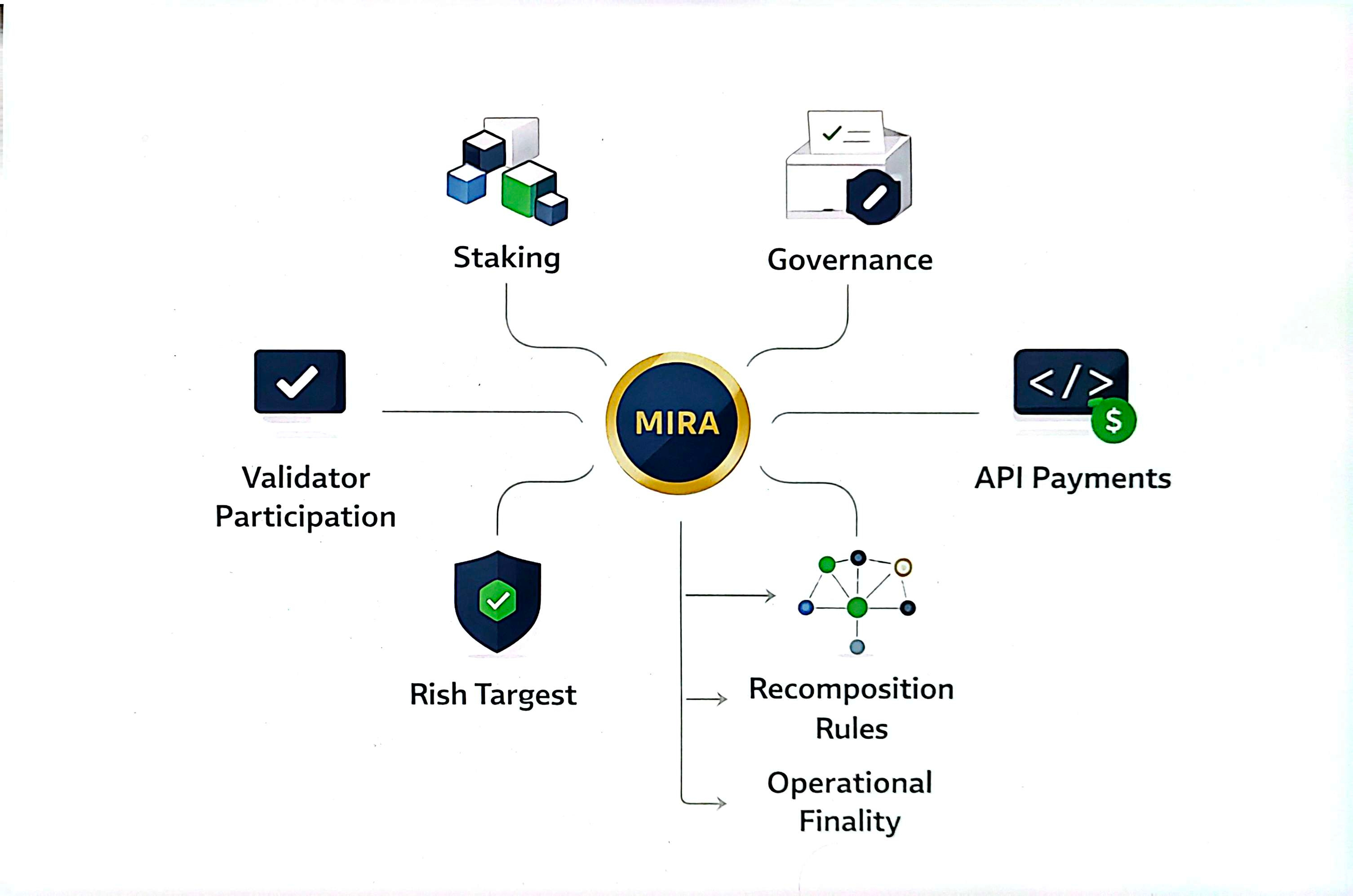

Where the Token Funds the Hard Half

$MIRA is the network's staking and governance token, and it is also used for API payments and by validators. Node operators stake to take part in verification, and selection depends on stake and reputation.
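Stake- and reputation-based selection could look something like this. The weighting (`stake * reputation`), the minimum-stake cutoff, and sampling without replacement are my assumptions for illustration, not the network's published rule, and a production system would need verifiable randomness rather than a local seed.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    node_id: str
    stake: float       # tokens staked (hypothetical units)
    reputation: float  # assumed 0.0-1.0 score from past verification accuracy


def select_verifiers(nodes: list[Node], k: int, min_stake: float, seed: int) -> list[str]:
    """Hypothetical selection rule: nodes below min_stake are ineligible; the rest
    are weighted by stake * reputation and drawn without replacement."""
    rng = random.Random(seed)  # seeded for reproducibility in this sketch only
    pool = [n for n in nodes if n.stake >= min_stake and n.stake * n.reputation > 0]
    if k > len(pool):
        raise ValueError(f"need {k} verifiers, only {len(pool)} eligible")
    chosen = []
    for _ in range(k):
        weights = [n.stake * n.reputation for n in pool]
        pick = rng.choices(pool, weights=weights, k=1)[0]
        chosen.append(pick.node_id)
        pool.remove(pick)  # without replacement: a node cannot be picked twice
    return chosen


if __name__ == "__main__":
    nodes = [
        Node("a", stake=100, reputation=0.9),
        Node("b", stake=50, reputation=0.5),
        Node("c", stake=10, reputation=1.0),
        Node("d", stake=5, reputation=0.8),  # below min_stake, never selected
    ]
    print(select_verifiers(nodes, k=2, min_stake=10, seed=42))
```

Weighting by the product means both a high-stake node with a poor track record and a trusted node with little stake get a reduced chance of selection; other combinations are equally plausible.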

If the token only pays verifiers to check claims, it funds the easier half of the work: reliability. The payoff for the harder problem is much bigger. If the network is used for dispute resolution, recomposition logic, and the cost of enforcing action-ready trust under adversarial conditions, the token begins to underwrite operational finality. The competition between centralized and decentralized verification comes down to who absorbs the cost of turning fragmented checks into a single executable state. Mira is positioned to solve this at the protocol layer rather than leaving every integrator to build their own solution in private middleware.

What I Want to Learn Next About #Mira

In 2024, #Mira raised seed funding and has since accelerated protocol design and ecosystem development. The team has announced developer integrations and builder grants. Some of the adoption numbers deserve independent verification, but the direction is clear.

The right test is whether, under stress, the protocol keeps moving responsibility into shared rules instead of private workarounds. If disputed claims trigger clear, network-level handling, the system is working. Every piece of trust logic the protocol handles is one less block of private policy an integrator has to maintain.

The market will sort this out. I want to see how the conversation around AI reliability evolves as production pressure increases. Attention across verification projects will eventually settle on whoever makes finality cheap and legible. Every time the protocol absorbs more of the action-finality burden, Mira moves closer to what the industry needs. I believe the team is building at the right layer.