Fragment 102 stalled at 72%.

That used to be enough.

Not anymore.

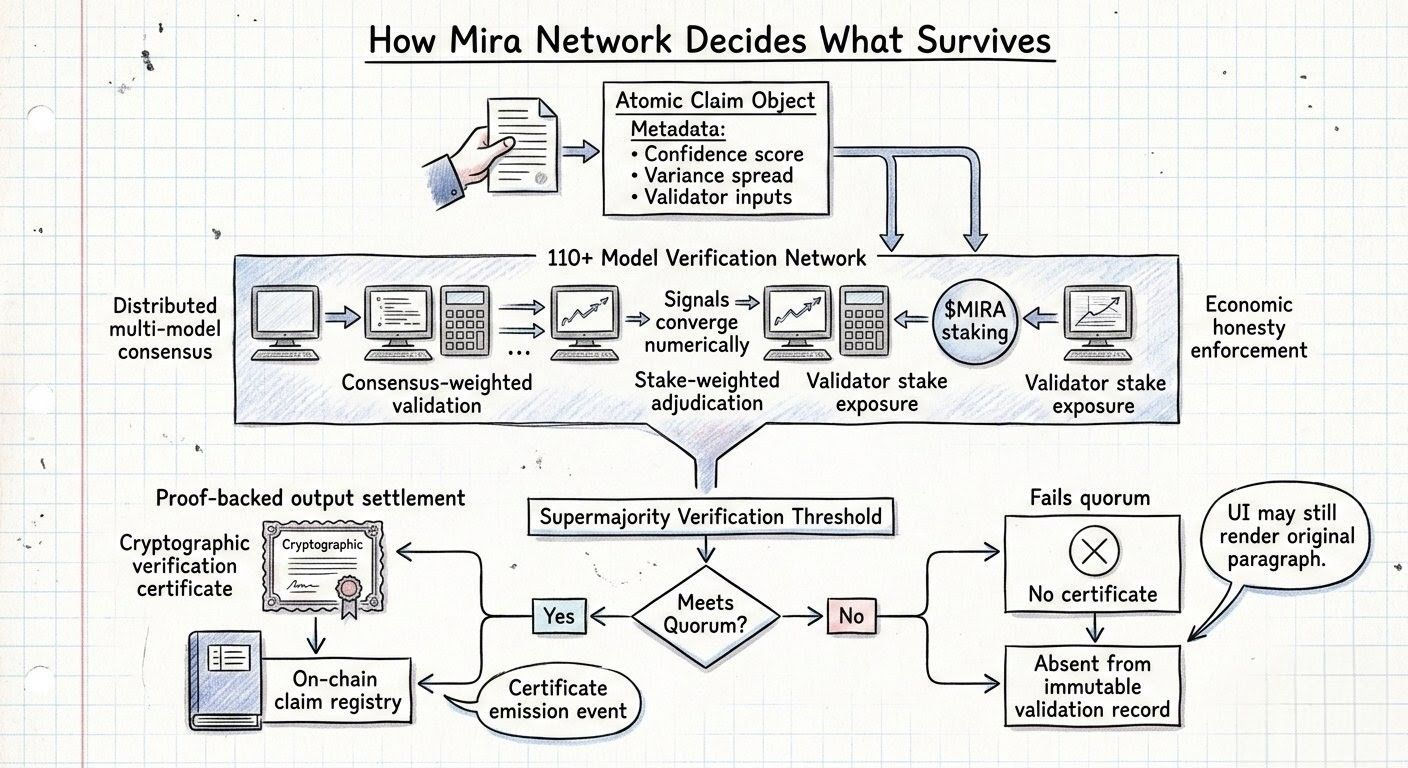

The Mira network validator panel had been holding it there long enough that I checked the reasoning traces twice, just to make sure I hadn’t missed a disagreement. Same evidence graph. Same archive branch. Same conclusion from every node that had touched it so far.

Nothing wrong with the fragment.

Plenty of agreement. Wrong number.

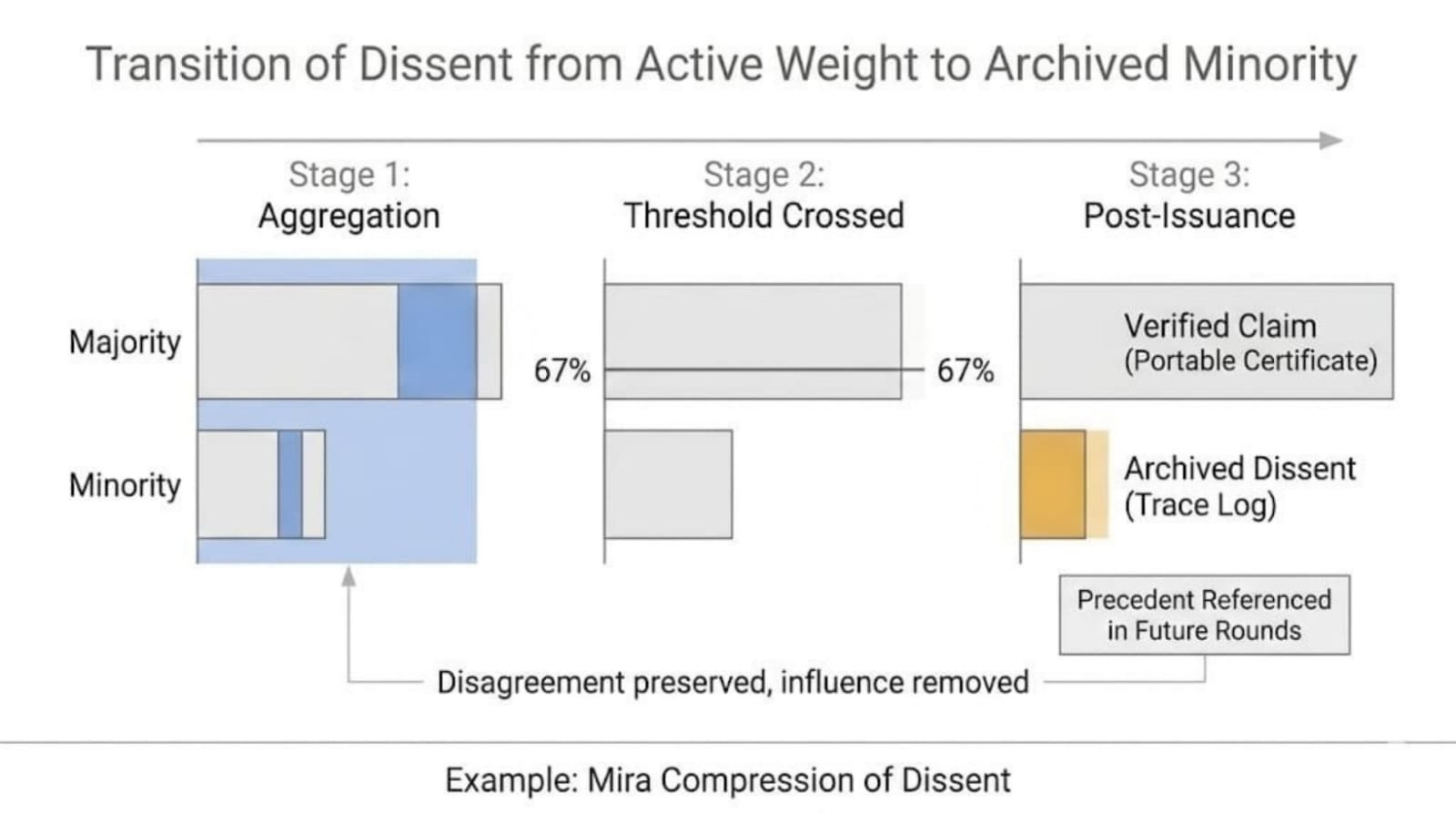

A week earlier the governance proposal had looked harmless sitting in the $MIRA token queue. Small parameter change. One line in the config diff. Raise the verification supermajority from 67% to 75%.

Clean logic. Fewer false positives. Harder to push weak fragments through certificate issuance. Large validator operators liked it. So did most delegates on Mira. I almost skipped the vote myself because it sounded like the kind of security upgrade nobody wants to argue against in public.

I voted for it anyway.

Safer sounded easy when the queue wasn't mine yet.

It passed comfortably.

Now it was sitting inside every live verification round.

supermajority_requirement: 75%

Fragment 102 had already collected four affirmations by the time I opened its trace. Short claim. Clean archive reference. No context warnings. No dataset conflicts. No secondary branch reopening the evidence walk.

Under the old threshold, it would have sealed before I even bothered staring at it.

Now it just sat there at 72%, looking finished and refusing to close.

verification_queue_depth: rising

Another fragment on Mira's verification queue drifted into the same zone a few minutes later. 70%. Then another. The queue wasn’t broken. It just stopped shedding work at the pace it used to.

More validators had to spend time repeating the same clean read.

By the third clean affirm, the fragment wasn’t getting safer. It was just using more of the round.

I watched another node open Fragment 102.

Same model family. Same evidence path. Same answer.

affirm

The band climbed again.

74%

Still not enough.

That looked wrong in a way clean metrics rarely do. Not because the fragment was uncertain. Because the network was still making it wait after certainty had already arrived in every way that felt operationally honest.

I almost moved on to the next fragment.

Didn’t.

Another validator came in slower: deeper archive check, longer model pass. The result was still the same.

affirm

76%

Mira sealed the certificate.

No disagreement. No correction loop. No hidden contradiction surfacing late. Just extra passes to reach the same conclusion the round had basically reached earlier.

And now the queue had one fewer validator available for everything else.

Nothing failed. Capacity just got spent proving the obvious.
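
The arithmetic behind that is small enough to sketch. What follows is a minimal Python version, not Mira's actual logic. It assumes the band is a plain affirmation share compared against the threshold with `>=`; the function names and that comparison are my own placeholders. The band values are the ones Fragment 102 showed on the panel.

```python
from typing import Optional, Sequence

# Thresholds from the governance proposal: 67% before the vote, 75% after.
OLD_THRESHOLD = 0.67
NEW_THRESHOLD = 0.75


def seals(affirm_share: float, threshold: float) -> bool:
    """Assumed rule: a fragment seals once its affirmation share reaches the threshold."""
    return affirm_share >= threshold


def passes_until_seal(band_trace: Sequence[float], threshold: float) -> Optional[int]:
    """Count the extra validator passes needed after the first reading in the trace.

    Returns None if the fragment never seals within the observed trace.
    """
    for extra_passes, share in enumerate(band_trace):
        if seals(share, threshold):
            return extra_passes
    return None


# Band readings for Fragment 102 as they appeared: 72%, then 74%, then 76%.
FRAGMENT_102 = [0.72, 0.74, 0.76]

print(passes_until_seal(FRAGMENT_102, OLD_THRESHOLD))  # 0: already sealed at 72%
print(passes_until_seal(FRAGMENT_102, NEW_THRESHOLD))  # 2: two more clean affirms
```

Same fragment, same evidence, same answers. The only input that changed between the two lines is the threshold.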

I scrolled back through the governance thread again.

Security language everywhere. Validity checks. Network hardening. Safer certification. All true.

What it didn’t say was what happens when every easy fragment starts consuming one more validator on Mira than it used to.

The queue started teaching a new rhythm.

Clean fragments lingered.

Messy fragments lingered longer.

Verification rounds stopped looking like fast filter passes and started looking like waiting rooms. Not because evidence got worse. Because the threshold moved.

Another panel opened on the side. Different fragment. Same pattern. The reasoning traces matched. The archive branch was shallow. The confidence band crossed 70% and just stayed there while the validator mesh looked for one more node to say the same thing again.

validator_pass_count: 5

certificate_latency: extending

You don’t notice it in one round.

Then three panels stop clearing.

I caught myself hoping for one messy fragment to fail cleanly just so the panel would look honest again.

Instead everything looked almost finished.

Cleaner-looking. Slower-moving. Worse.

Another fragment crossed 71% and stalled.

The traces matched.

Mira's verification queue didn’t care.