I keep coming back to a simple question: when an AI system makes a decision, what exactly should be left behind as evidence? My sense is that “auditable inference” matters most when we stop treating an output as a magic answer and start treating it as an event that should carry a record. In Fabric Protocol, at least as the project describes itself today, the point is not just to run AI or robotics workloads but to coordinate data, computation, and oversight through public ledgers, so that work can be observed, rewarded, and challenged instead of merely trusted.

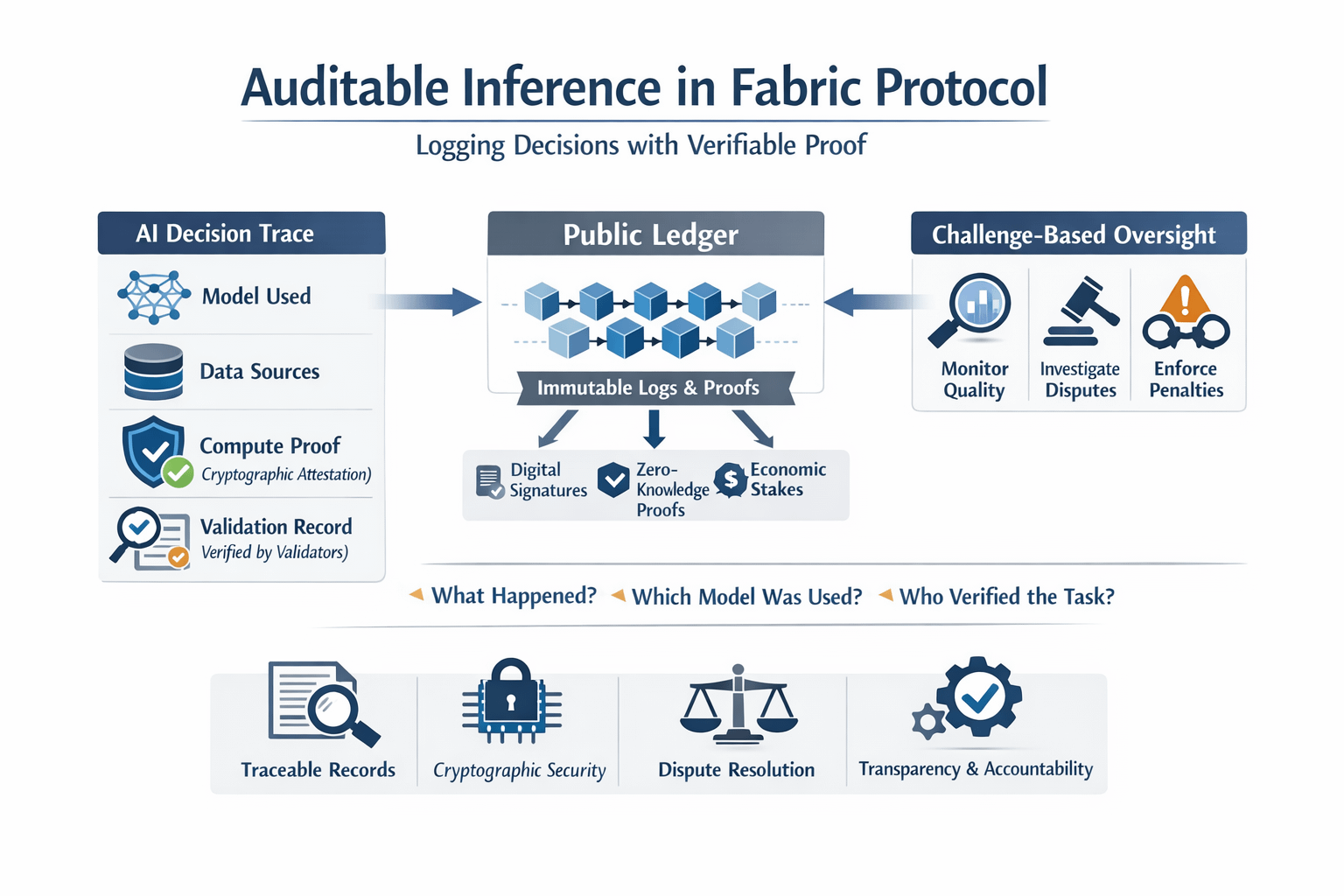

What I find helpful is to separate explanation from proof. People often want an AI system to explain itself, and that is only part of the problem. A smooth explanation can still be wrong, incomplete, or convenient; proof is something else entirely. In Fabric’s design, “verifiable” work is tied to measurable contributions such as completed tasks, data provision, compute provision, and validation work. The whitepaper even says compute provided for model training or inference should come with cryptographic attestation that the work was completed. That does not mean every decision becomes perfectly understandable. It means the system is trying to leave behind a trace showing that a certain model run, task, or contribution happened under stated conditions, and that others can check those claims later.
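To make “a trace that others can check” concrete, here is a minimal sketch of an attestation record for one inference run. It is my own illustration, not Fabric's actual format: the function names (`build_attestation`, `verify_attestation`) and the record fields are hypothetical, and the HMAC tag is a symmetric stand-in for what would more plausibly be an asymmetric signature or hardware attestation.

```python
import hashlib
import hmac
import json


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_attestation(model_bytes: bytes, input_bytes: bytes,
                      output_bytes: bytes, key: bytes) -> dict:
    """Bind model, input, and output digests together under one tag.

    Hypothetical record layout. The HMAC key stands in for a signing key;
    a shared secret is an assumption made to keep the sketch stdlib-only.
    """
    claim = {
        "model_digest": sha256_hex(model_bytes),
        "input_digest": sha256_hex(input_bytes),
        "output_digest": sha256_hex(output_bytes),
    }
    # Serialize with sorted keys so the same claim always yields the same bytes.
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify_attestation(record: dict, key: bytes) -> bool:
    """Recompute the tag over the claim and compare in constant time."""
    payload = json.dumps(record["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["tag"])
```

A record like this says nothing about why the model produced its output. It only lets a later checker confirm that a specific model, input, and output were vouched for together, which is the difference between proof and explanation.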

I used to think that if you had enough logging you were basically done. I do not think that anymore, because logs by themselves can be edited, hidden, or written in ways that tell a flattering story. What makes the Fabric idea more interesting is that it tries to pair logging with economic and cryptographic checks while also being unusually direct about a hard limit. It does not claim that every physical task can be universally verified, because that would be too expensive and in many cases not technically realistic. Instead, it proposes a challenge-based system in which validators monitor quality and availability, investigate disputes, and can trigger penalties when fraud is proven. I appreciate that honesty, because in real systems the question is often not “Can we prove everything?” but “What evidence can we preserve cheaply enough that cheating becomes unattractive?”
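One cheap way to make a log harder to quietly edit is to chain entries by hash, so that each entry commits to the one before it. The sketch below is a generic illustration of that technique, not something taken from Fabric's whitepaper; `append_event`, `verify_log`, and the entry layout are names I made up for the example.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry (an assumption of this sketch).
GENESIS = "0" * 64


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append an event, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "event": event,
        "prev": prev_hash,
        "hash": entry_hash(prev_hash, event),
    })


def verify_log(log: list) -> bool:
    """Walk the chain and check every link and every stored hash."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects edits if someone else holds a copy of a recent hash. Whoever controls the entire log can still rewrite it and recompute every hash, which is one reason to anchor the latest hash somewhere the operator cannot change on their own, such as a public ledger.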

This is also why the subject is getting more attention now than it would have five years ago: the technology has moved in ways that make the idea feel more practical. Recent research on end-to-end verifiable AI pipelines says advances in digital signatures, cryptographic commitments, and zero-knowledge proofs are making it more plausible to prove pieces of the AI lifecycle, including inference, without exposing sensitive inputs or model details. At the same time, that research is clear that a fully end-to-end verifiable pipeline is still more of a framework and research direction than a finished reality. In other words, the tools are becoming real enough to build with even though the finished architecture is not here yet, and that feels like exactly the stage when design choices about auditability start to matter.
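Of those building blocks, a commitment is the easiest to show in a few lines. The sketch below is a simple salted-hash commitment, assuming SHA-256 and a random 32-byte nonce; it is not a zero-knowledge proof and not any specific scheme from the research mentioned above, and the function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def commit(value: bytes) -> tuple[str, bytes]:
    """Commit to value without revealing it.

    Returns (digest, nonce). The digest can be published right away;
    the nonce stays private until the commitment is opened.
    """
    nonce = secrets.token_bytes(32)  # fixed length keeps nonce + value unambiguous
    digest = hashlib.sha256(nonce + value).hexdigest()
    return digest, nonce


def open_commitment(digest: str, nonce: bytes, value: bytes) -> bool:
    """Check that (nonce, value) matches a previously published digest."""
    candidate = hashlib.sha256(nonce + value).hexdigest()
    return hmac.compare_digest(candidate, digest)
```

The idea is that an operator could publish the digest of a sensitive input at inference time and reveal the input and nonce only to an auditor during a dispute. Proving something about the input without ever revealing it is the harder problem, and that is where zero-knowledge proofs come in.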

The pressure is not only technical; it is regulatory and cultural too. NIST’s AI Risk Management Framework and its playbook both lean hard on documentation, traceability, and mechanisms that make AI systems auditable, including logging processes, outcomes, and impacts. The EU’s AI Act goes further for high-risk systems by explicitly requiring logging of activity to ensure traceability of results, with major transparency rules arriving in 2026. Once systems are used in hiring, credit, healthcare, public services, or robotics, “trust us” stops sounding like a serious governance model, because the record around a decision starts to matter almost as much as the decision itself.

So when I think about auditable inference in Fabric Protocol, I do not hear a promise that machines will become morally self-explaining. I hear a more modest and more useful claim: a decision should leave behind enough structured evidence for another party to ask what happened, what model or workflow was used, who vouched for it, what can be checked, and what happens if the record does not hold up. That strikes me as a healthier standard because it does not promise certainty or perfect visibility. It asks for something more grounded: a system that expects doubt, keeps receipts, and treats verification as part of the job rather than an afterthought.

@Fabric Foundation #ROBO #robo $ROBO