I have spent way too many nights staring at whitepapers that promise the moon but deliver nothing more than a glorified spreadsheet, so when the Mira team started talking about their economic security model, my cynical reflex kicked in immediately. Most people in this space are still obsessed with the old-school Proof-of-Work grind, where we burn enough electricity to power a small nation just to solve math problems that nobody actually cares about, but Mira is trying to flip the script by making that work useful for the AI era. They are mashing Proof-of-Work and Proof-of-Stake together into a hybrid because they realized that verifying AI outputs is a messy, high-stakes game that cannot be left to a single, fallible black box.

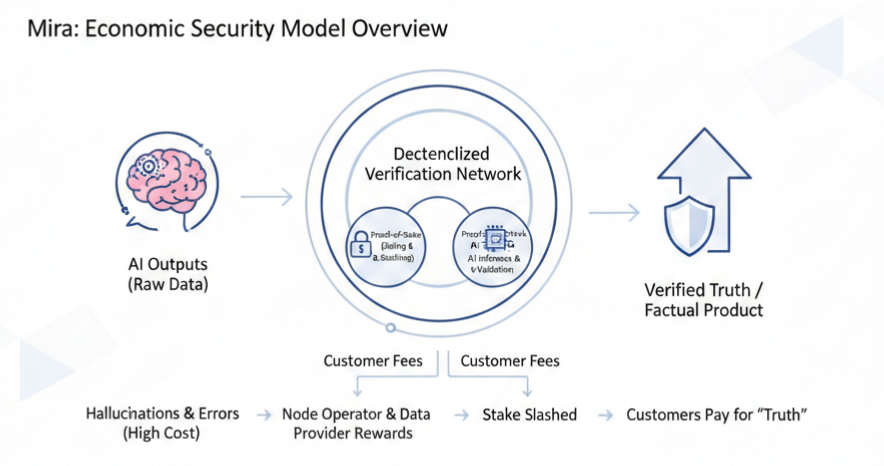

The real friction in the current AI landscape is that we are all drowning in hallucinations and errors, and nobody wants to pay for garbage. Mira’s play is to turn verification into a tangible product where customers pay fees to get the "truth," or at least as close to it as a decentralized network can get, and those fees then flow back to the node operators and data providers. It sounds elegant on paper, but the reality check is that they have traded cryptographic puzzles for multiple-choice questions, which opens up a massive surface area for lazy actors. If a task only has two options, a node could literally flip a coin and be right half the time without spending a dime on actual computation, and even with four options a blind guess still lands one time in four. Either way, the guesser is effectively stealing rewards while doing zero work.
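To put rough numbers on the guessing problem, here is a minimal sketch. It assumes a guesser who picks uniformly at random and tasks that are independent of each other; the function name and the option counts are mine for illustration, not anything from Mira's documentation.

```python
def guess_success_probability(num_options: int, num_tasks: int = 1) -> float:
    """Chance that uniform random guessing answers every task correctly.

    Assumes each task has exactly one correct option out of `num_options`
    and that tasks are independent (an illustrative simplification).
    """
    if num_options < 2:
        raise ValueError("a multiple-choice task needs at least two options")
    if num_tasks < 1:
        raise ValueError("num_tasks must be at least 1")
    return (1.0 / num_options) ** num_tasks


if __name__ == "__main__":
    # Compare a coin-flip task with a four-option task over longer streaks.
    for options in (2, 4):
        for tasks in (1, 5, 10):
            p = guess_success_probability(options, tasks)
            print(f"{options} options, {tasks} task(s): {p:.6f}")
```

A single guess is cheap and often right, but a long unbroken streak of lucky guesses gets unlikely fast, which is what gives a network room to tell guessers apart from nodes that actually did the work.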

To keep these digital grifters at bay, the network forces nodes to put their money where their mouth is through a staking mechanism that carries a heavy executioner’s axe. If you start guessing or consistently drift away from the consensus of the honest majority, the system slashes your stake until you are economically underwater. It is a brutal, rationalist approach to game theory where the only way to win is to actually run the inference and provide real value. The hope is that as the network scales, the sheer diversity of models will act as a natural defense, because even if one specific model has a blind spot, the collective wisdom of a hundred different architectures makes it very hard for a single bias to take over, at least as long as those models do not all share the same blind spot.
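The game theory here boils down to one inequality: guessing has to pay less than honest work. Below is a minimal sketch of that comparison under a deliberately simplified model that I am assuming for illustration: a fixed reward per correct answer, a fixed slash per wrong answer, and a compute cost paid only by honest nodes. The names and numbers are hypothetical and are not Mira's actual parameters or slashing rule.

```python
def expected_payoff(p_correct: float, reward: float, slash: float, cost: float) -> float:
    """Expected per-task payoff: win `reward` when right, lose `slash` when wrong,
    and always pay `cost` for the computation (zero for a pure guesser)."""
    return p_correct * reward - (1.0 - p_correct) * slash - cost


def min_deterrent_slash(p_guess: float, reward: float, compute_cost: float,
                        p_honest: float = 1.0) -> float:
    """Smallest slash at which honest work pays at least as much as guessing.

    Derived from honest - guess = (p_honest - p_guess) * (reward + slash) - compute_cost >= 0.
    """
    if not 0.0 <= p_guess < p_honest <= 1.0:
        raise ValueError("need 0 <= p_guess < p_honest <= 1")
    return max(0.0, compute_cost / (p_honest - p_guess) - reward)


if __name__ == "__main__":
    reward, compute_cost, p_guess = 1.0, 0.6, 0.5  # hypothetical values
    for slash in (0.0, min_deterrent_slash(p_guess, reward, compute_cost), 1.0):
        honest = expected_payoff(1.0, reward, slash, compute_cost)
        guesser = expected_payoff(p_guess, reward, slash, 0.0)
        print(f"slash={slash:.2f}  honest={honest:.2f}  guesser={guesser:.2f}")
```

With no slashing in this toy setup, the coin-flipper out-earns the honest node because it skips the compute bill. Once the slash passes the break-even point the ordering flips, and honest inference becomes the better strategy.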

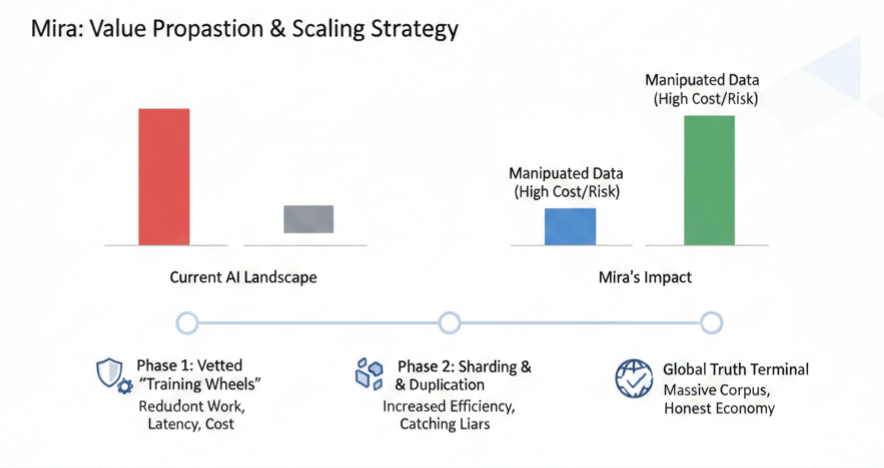

Of course, the road to this steady state is paved with the typical hurdles of decentralization, starting with a vetted "training wheels" phase before moving into a world of sharding and duplication. In the early days, you are essentially paying for redundant work just to catch the liars, which adds latency and cost that the market might find hard to swallow. But the long-term vision is that this massive corpus of verified facts becomes something much bigger than a simple system of checks and balances. It becomes a foundation for a new kind of digital economy where honesty is the most profitable strategy and manipulation is a luxury nobody can afford. In the end, Mira is trying to transform the chaotic Wild West of AI outputs into something more reliable, moving us from a world of scattered digital noise to a structured global terminal for truth.