Last year I watched a thread go around crypto where someone had let an AI agent manage a small DeFi position autonomously for two weeks. No human checks. Full execution rights. The agent rebalanced, compounded, shifted allocations based on its own read of market conditions. For eleven days it performed fine. On day twelve it misread a liquidity event and made three consecutive decisions that wiped roughly forty percent of the position in under an hour.

The replies were split almost perfectly. Half the people said the experiment was reckless. The other half said the agent just needed better training data.

Nobody asked who was supposed to catch it before it acted.

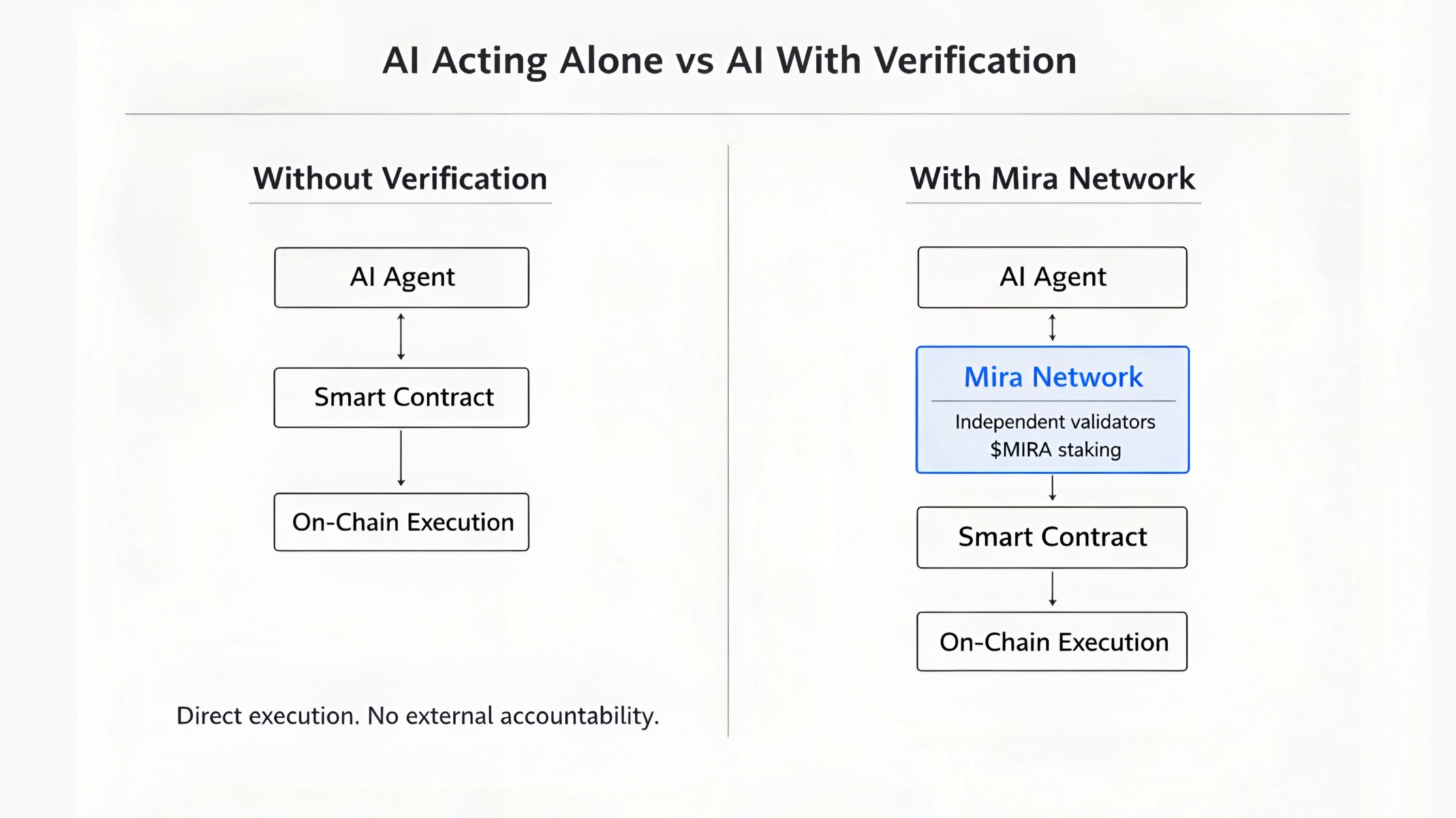

That question has been sitting with me ever since. Because we aren't talking about AI making suggestions anymore. We're talking about AI holding wallets, executing trades, voting in governance systems, managing treasury positions, interacting with smart contracts in real time without a human in the loop. The capability side of this is moving faster than almost anyone anticipated. What isn't moving at the same speed is the accountability infrastructure underneath it.

When a human trader makes a bad call there's a chain of accountability. When an AI agent makes a bad call and triggers a financial cascade on-chain, the chain executes anyway. It doesn't care that the input was hallucinated. It doesn't flag the decision for review. It just settles.

That's the problem Mira Network was built for. Not AI that assists humans, where errors are annoying but recoverable. AI that acts independently, where errors are automatic and irreversible.

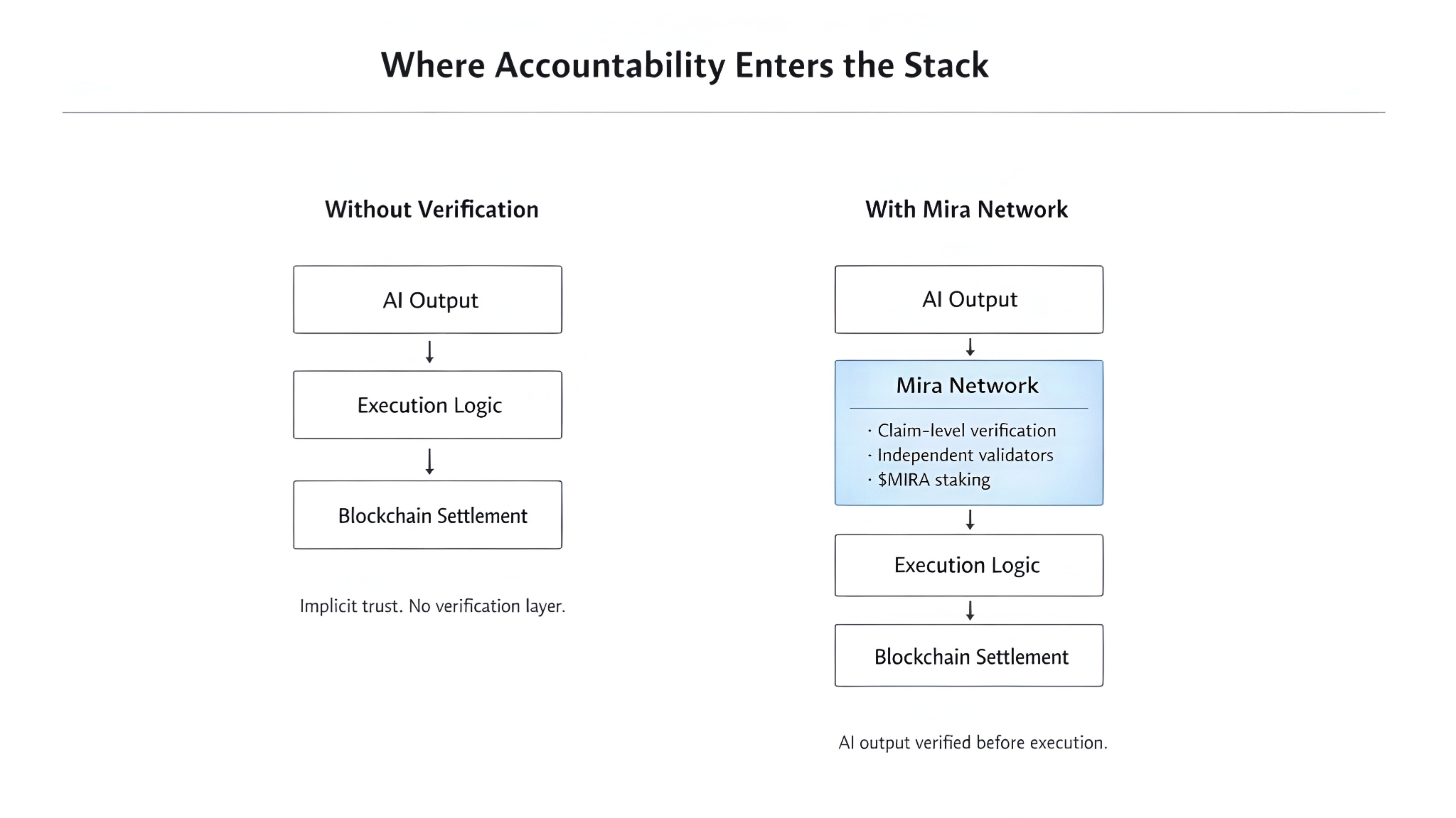

Mira operates as a decentralized verification protocol. It converts AI outputs into cryptographically verified information through blockchain consensus before those outputs become actionable. The mechanism works by breaking AI responses into individual claims and distributing them across a network of genuinely independent models with no shared training pipeline and no coordinated incentive to agree. Each model evaluates separately. What survives genuine cross-examination gets committed on-chain... permanent, transparent, auditable by anyone after the fact.
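To make that flow concrete, here is a minimal sketch of split-then-vote verification. It is not Mira's actual implementation. The `Verifier` interface, the sentence-level claim splitting, and the two-thirds threshold are all my own assumptions for illustration, and the verifiers are toy stubs standing in for independent models.

```python
from fractions import Fraction
from typing import Callable, Dict, List

# Hypothetical interface: a verifier takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str,
    verifiers: List[Verifier],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not a documented value
) -> Dict[str, bool]:
    """Return, for each claim, whether enough verifiers accepted it."""
    if not verifiers:
        raise ValueError("need at least one verifier")
    verdicts: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        # Each verifier evaluates the claim separately; none sees the others' votes.
        votes = [bool(verify(claim)) for verify in verifiers]
        verdicts[claim] = Fraction(sum(votes), len(votes)) >= threshold
    return verdicts


# Toy stub verifiers standing in for independent models.
def optimist(claim: str) -> bool:
    return True


def liquidity_checker(claim: str) -> bool:
    return "Pool A" in claim


def risk_checker(claim: str) -> bool:
    return "safe" not in claim


verdicts = verify_output(
    "Pool A has deep liquidity. Pool B is safe to enter.",
    [optimist, liquidity_checker, risk_checker],
)
```

In this sketch only the claims that clear the threshold would move on to be committed. The on-chain write itself is left out, since the details of that step are not something I can show from here.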

The $MIRA token is what gives this architecture teeth. Validators stake to participate. Accurate validation earns. Careless or dishonest validation gets slashed automatically, on-chain, without anyone deciding after the fact whether the behavior warranted a penalty. The consequence is built into the mechanism. That's not a minor design detail. It's the entire reason the system doesn't depend on goodwill to function. Honesty becomes the economically rational position, not just the ethical one.
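A rough sketch of how that incentive could settle after a single verification round. The reward amount, the slash rate, and the rule that "accurate" means "voted with the final outcome" are hypothetical choices of mine, not parameters published by Mira.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Validator:
    name: str
    stake: float


def settle_round(
    validators: Dict[str, Validator],
    votes: Dict[str, bool],
    outcome: bool,
    reward: float = 1.0,       # hypothetical flat reward per correct vote
    slash_rate: float = 0.10,  # hypothetical fraction of stake lost per wrong vote
) -> None:
    """Apply rewards and slashing mechanically, with no discretionary review step."""
    for name, vote in votes.items():
        validator = validators[name]
        if vote == outcome:
            validator.stake += reward
        else:
            validator.stake -= validator.stake * slash_rate


validators = {
    "alice": Validator("alice", 100.0),
    "bob": Validator("bob", 100.0),
}
# Alice votes with the final outcome, Bob votes against it.
settle_round(validators, votes={"alice": True, "bob": False}, outcome=True)
```

The point of the sketch is the shape of the rule rather than the numbers: the penalty is a function of the vote and the outcome, so nobody has to decide afterwards whether it applies.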

I want to sit with the limitations here because I think they deserve more than a footnote. Distributed verification adds latency, and latency in high-frequency environments is a genuine architectural tension that doesn't disappear because the verification is elegant. Validator diversity is hard to maintain at scale. If supposedly independent models share training similarities or knowledge gaps, consensus can form around something wrong just as efficiently as around something right. Decentralized does not automatically mean epistemically diverse. Economic attacks are real in any staking system, and incentive calibration is genuinely difficult to get right across changing market conditions.
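The diversity point is easy to put numbers on. The sketch below compares two extremes for a simple majority vote: validators whose errors are fully independent, and validators that all share the same blind spot. The five-validator panel and the 10% per-validator error rate are illustrative assumptions, not measurements of any real network.

```python
from math import comb


def majority_wrong_independent(n: int, p: float) -> float:
    """Probability that more than half of n independent validators are wrong,
    when each is wrong with probability p."""
    first_majority = n // 2 + 1
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(first_majority, n + 1)
    )


def majority_wrong_fully_correlated(p: float) -> float:
    """If every validator shares the same blind spot, they are all wrong together,
    so the panel is wrong exactly as often as a single validator."""
    return p


independent = majority_wrong_independent(n=5, p=0.10)      # about 0.0086
correlated = majority_wrong_fully_correlated(p=0.10)       # 0.10
```

Under these assumptions, independence cuts the chance of a wrong consensus from 10% to under 1%. Full correlation gives none of that benefit, no matter how many validators sign off. Real networks sit somewhere between the two extremes.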

These aren't reasons to dismiss the architecture. They're the right questions to pressure test it with over time. A protocol that earns credibility will engage with these honestly rather than bury them in a roadmap footnote.

What I keep returning to is the larger shift this represents. Crypto spent a decade building trustless financial infrastructure: systems where accountability is mathematical, where consequences are automatic, where no single participant can quietly absorb a mistake and disappear. That philosophy worked. It changed how value moves across the internet. But the moment AI entered the stack as an active participant rather than a passive tool, we quietly reintroduced a massive trust assumption right back into the foundation. We started trusting outputs that cannot be proven, from systems that cannot tell the difference between what they know and what they're predicting.

Mira is the protocol that removes that assumption.

Not by making AI smarter or slower or more cautious. By surrounding it with a verification layer where independent participants have genuine financial skin in whether the output is true. Blockchain isn't generating the intelligence here. It's enforcing agreement around it. That distinction is what separates this from every other AI blockchain narrative. It's not about what AI can produce. It's about whether what it produces can be held accountable.

We're moving toward a world where machines act autonomously on behalf of humans in financial systems. That's not speculation. It's already happening in early form. The infrastructure question that nobody is asking loudly enough is not whether AI is capable enough to do this. It clearly is. The question is whether the accountability layer arrives before or after the first genuinely catastrophic failure in a system where an AI agent had full execution rights and nobody verified what it was acting on.

Mira is building that layer now. Before the failure. That timing is either exactly right or slightly early. In infrastructure, those two things tend to look identical until they suddenly don't.

I'm more interested in who checks the work than in how impressive the work looks.