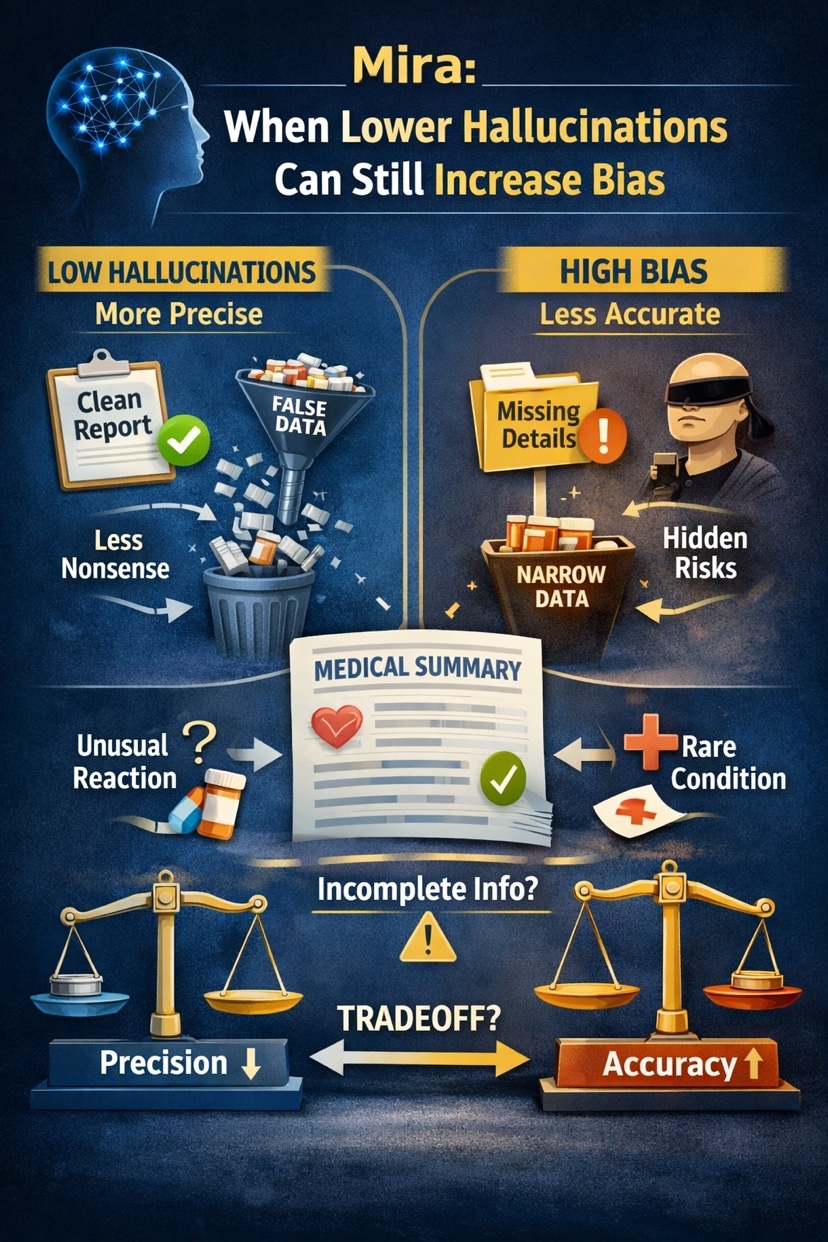

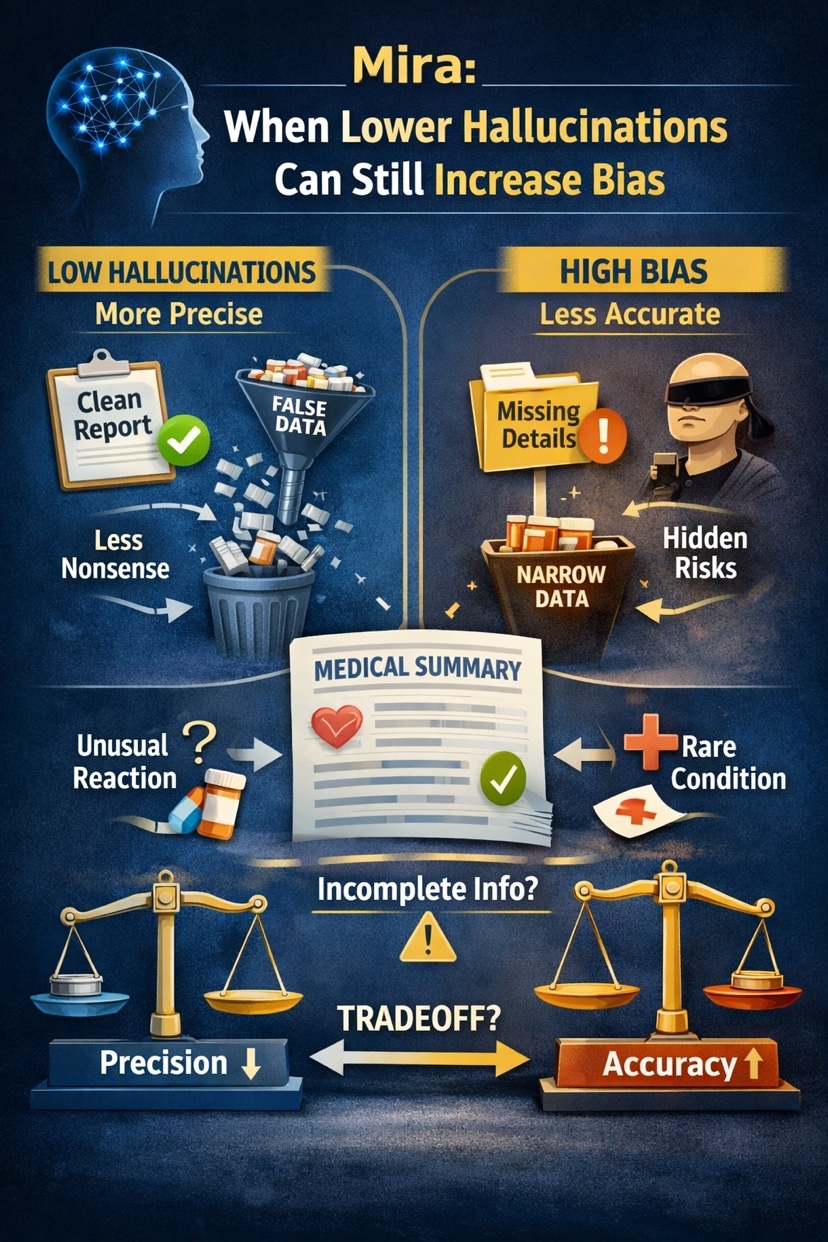

I keep circling back to one uncomfortable question. When an AI answer looks cleaner, safer, and more confident, is it actually getting better, or just narrower?

That is the part of the Mira thesis that caught my attention. Not the broad “AI trust layer” pitch. The more interesting claim is deeper: hallucination and bias are not two separate bugs you can patch one by one. Mira’s whitepaper argues they are linked through a training dilemma. In its framing, hallucinations are a precision problem, while bias is an accuracy problem. Push hard on one side and the other can get worse.
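To make the precision-versus-accuracy distinction concrete, here is a toy sketch in Python. It borrows the measurement sense of the two words: accuracy as systematic offset from the truth, precision as scatter between repeated answers. That mapping is my reading of the framing, not the whitepaper's formal definition, and the two "models", their answers, and the true value are all made-up numbers chosen only for illustration.

```python
from statistics import mean, pstdev

# Hypothetical ground truth for a numeric question (assumed for illustration).
TRUE_VALUE = 100.0

# Made-up repeated answers from two imaginary models.
# "curated_model": tightly clustered answers that sit off target.
# "diverse_model": answers centered on the target but widely scattered.
curated_model = [109.0, 110.0, 111.0, 110.0, 110.0]
diverse_model = [85.0, 115.0, 100.0, 90.0, 110.0]


def systematic_offset(answers, truth):
    """Accuracy-style error: how far the average answer sits from the truth."""
    return mean(answers) - truth


def spread(answers):
    """Precision-style error: how much repeated answers disagree with each other."""
    return pstdev(answers)


for name, answers in [("curated", curated_model), ("diverse", diverse_model)]:
    print(
        f"{name}: offset={systematic_offset(answers, TRUE_VALUE):.2f}, "
        f"spread={spread(answers):.2f}"
    )
```

The curated model looks calm and consistent while being wrong in one direction. The diverse model is right on average but noisy. Neither number alone tells you which one to trust, which is the point of treating them as two different failure types.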

That matters because a lot of AI discussion still treats reliability like a single slider. Make the model stricter. Fine-tune it harder. Narrow the domain. Add guardrails. But in practice, especially in high-stakes use, the friction is uglier than that. A model can stop inventing obvious nonsense and still miss the thing that mattered most. It can become less wild yet more one-sided. In other words, fewer visible mistakes do not automatically mean better judgment. Mira is useful to analyze because it starts from that uncomfortable premise instead of pretending one better base model solves everything.

The real trap is not hallucination alone. It is the false sense of safety you get when lower hallucination rates hide a biased or incomplete answer. Mira’s mechanism is built around that distinction. Rather than trusting one model’s output, it proposes transforming generated content into smaller verifiable claims, sending those claims across multiple verifier models, and using distributed consensus to determine what survives. The point is not just to catch fabricated statements. It is also to force multiple perspectives into the verification path so that one model’s narrowness does not quietly become “truth.”

That mechanism is the strongest part of the design.

The whitepaper says a single model cannot minimize both hallucinations and bias at once, so Mira breaks complex output into independently checkable claims, distributes them to diverse models, then returns a cryptographic certificate describing the verification outcome. It also argues that a centralized ensemble is not enough, because the curator deciding which models count introduces another layer of systematic error. So the protocol’s answer is not just “more models.” It is “more models with decentralized participation and economic incentives around honest verification.”
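Here is a minimal Python sketch of the claim-level consensus idea, under heavy assumptions. The function and type names, the 0.75 threshold, the three status labels, and the lookup-table "verifiers" are all hypothetical stand-ins of mine, not Mira's actual API or parameters. The sketch also skips the hard parts: claims are written by hand rather than decomposed from generated text, and there is no cryptographic certificate or economic incentive layer.

```python
from dataclasses import dataclass
from typing import Callable, List, Set

# A verifier is anything that takes a claim and returns True (accept) or False.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    status: str  # "verified", "rejected", or "contested"


def verify_claims(
    claims: List[str], verifiers: List[Verifier], threshold: float = 0.75
) -> List[ClaimResult]:
    """Poll every verifier on every claim and label each claim by vote share."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    if not 0.5 < threshold <= 1.0:
        raise ValueError("threshold must be in (0.5, 1.0]")

    results = []
    for claim in claims:
        votes_for = sum(1 for verify in verifiers if verify(claim))
        share = votes_for / len(verifiers)
        if share >= threshold:
            status = "verified"
        elif share <= 1.0 - threshold:
            status = "rejected"
        else:
            # No supermajority either way: surface the disagreement.
            status = "contested"
        results.append(ClaimResult(claim, votes_for, len(verifiers), status))
    return results


def make_verifier(accepted: Set[str]) -> Verifier:
    """Toy stand-in for a model: accepts only the claims in its lookup set."""
    return lambda claim: claim in accepted


# Hand-written claims, as if already decomposed from a longer summary.
CLAIMS = [
    "The summary lists only medications that appear in the notes.",
    "The patient has no prior adverse drug reactions.",
    "The notes contain no conflicting symptom reports.",
]

# Four toy verifiers with different "views" of which claims hold.
VERIFIERS = [
    make_verifier({CLAIMS[0], CLAIMS[1]}),
    make_verifier({CLAIMS[0]}),
    make_verifier({CLAIMS[0], CLAIMS[1]}),
    make_verifier({CLAIMS[0]}),
]

results = verify_claims(CLAIMS, VERIFIERS)
for r in results:
    print(f"{r.status:>9}  {r.votes_for}/{r.votes_total}  {r.claim}")
```

The design choice worth noticing is the third label. A plain majority vote would force the split claim into pass or fail. Keeping "contested" as its own outcome is what lets the downstream reader see where the verifiers disagreed instead of receiving a single blended answer.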

I think that framing is smarter than the usual benchmark obsession.

Benchmarks often reward visible correctness on known tasks. Real use is messier. The failure mode is often not a dramatic fabricated answer. It is a plausible answer that compresses uncertainty too aggressively, drops a minority case, or carries the model’s own training priors into a situation where nuance matters more than fluency. Mira seems to understand that reliability is partly a coordination problem: how do you get multiple imperfect systems to expose each other’s blind spots without simply averaging them into mediocrity? That is a more serious question than “which model scored highest this month?”

Imagine an AI system summarizing a patient history before a clinician review. The output is mostly accurate. No invented drug names. No absurd diagnosis. On paper, it looks safer because the hallucination rate is low. But it downplays an edge-case nuance: an unusual prior reaction, a conflicting symptom pattern, or a rare contraindication that appears only once in the notes. The answer is not obviously false. It is just incomplete in a way that pushes the reader toward the wrong conclusion.

That is exactly why “looks clean” is not enough. Recent medical-AI research shows hallucinations in healthcare can be subtle, plausible, and hard to detect without expert scrutiny, and that even medically adapted models remain vulnerable to reasoning-driven errors and edge-case failures. The risk is not only fabricated content. It is clinically persuasive incompleteness.

In that setting, Mira’s claim-by-claim verification model makes more sense than a simple confidence score. If the summary is decomposed into smaller assertions, some claims may pass consensus while others remain contested or uncertified. That is operationally more honest. It tells the downstream user where certainty ends. For AI readers, that may be the real product: not perfect answers, but explicit structure around uncertainty.

Still, there is a tradeoff here, and I do not think Mira fully escapes it.

Decomposition helps verification, but it can also flatten context. Some truths are not atomic. Edge-case nuance often lives in relationships between claims, not in the claims alone. A medical note, legal argument, or research summary can be technically correct sentence by sentence and still misleading as a whole. Mira acknowledges that complex content needs transformation and consensus thresholds, but the hard part is preserving meaning while standardizing it enough for distributed verification. That is where architecture diagrams usually look cleaner than production reality.

What I’m watching next is whether Mira can show that its verification layer improves real decision quality, not just error metrics.

I want to see how the system handles contested domains, rare cases, and outputs that are mostly right but directionally misleading. I also want to see whether decentralized verifier diversity produces genuinely better judgments or just a more expensive version of ensemble averaging. The idea is strong: don’t ask one model to be both precise and broadly fair if training itself forces a tradeoff. Build a coordination layer around disagreement instead. But the operating details will matter more than the slogan.

If Mira becomes part of the trust stack for AI, the real question is not whether it reduces hallucinations.

It is whether it can surface the nuance that usually disappears when systems start looking “safe.” That is what I want to see proven next.