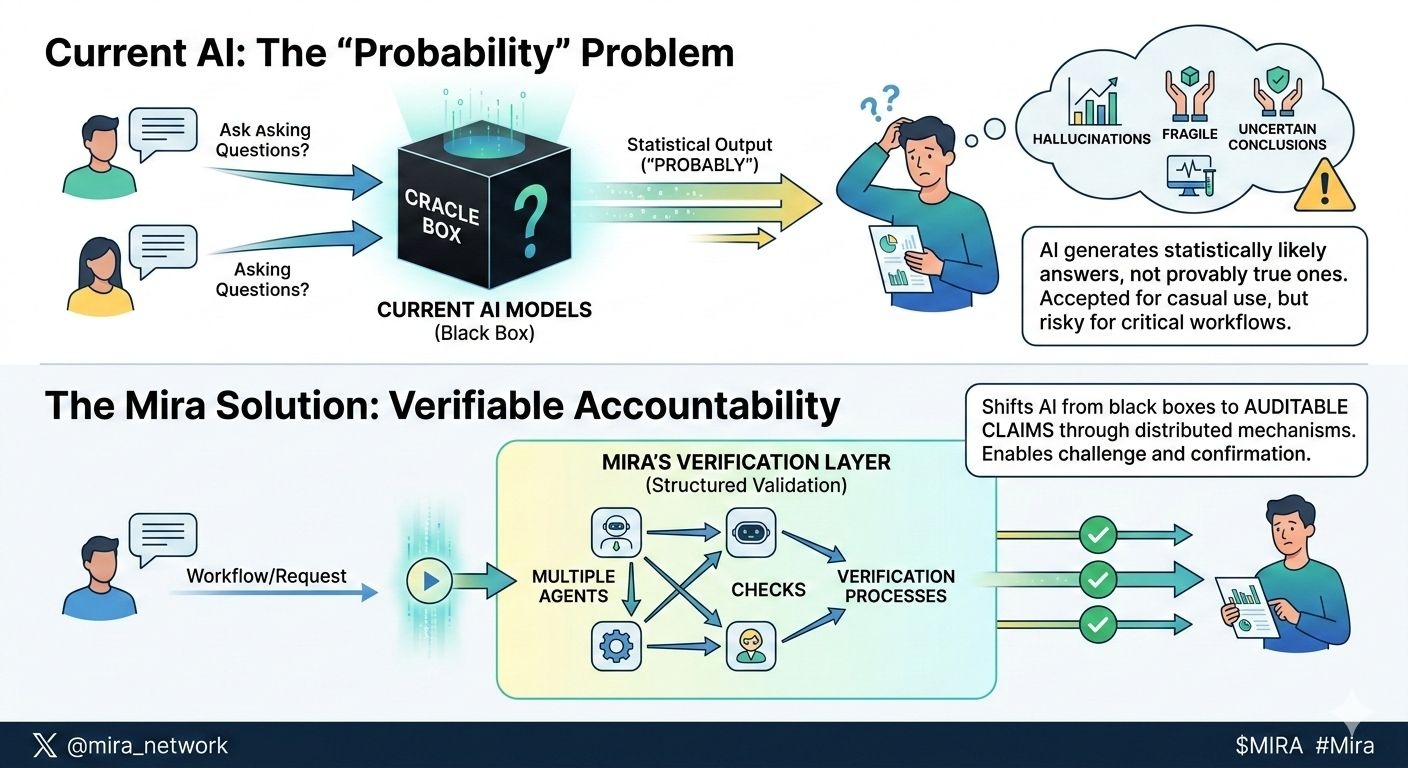

When I first started looking closely at Mira Network, what stood out wasn’t throughput metrics or abstract decentralization rhetoric. It was a discomfort with something most of us have already normalized. AI systems today operate on probability. They generate answers that are statistically likely, not provably true. For casual use, that’s fine. But as AI moves into finance, research, healthcare triage, and autonomous workflows, “probably” begins to feel fragile.

The idea that really clicked for me was this: Mira isn’t trying to replace AI models. It’s trying to hold them accountable.

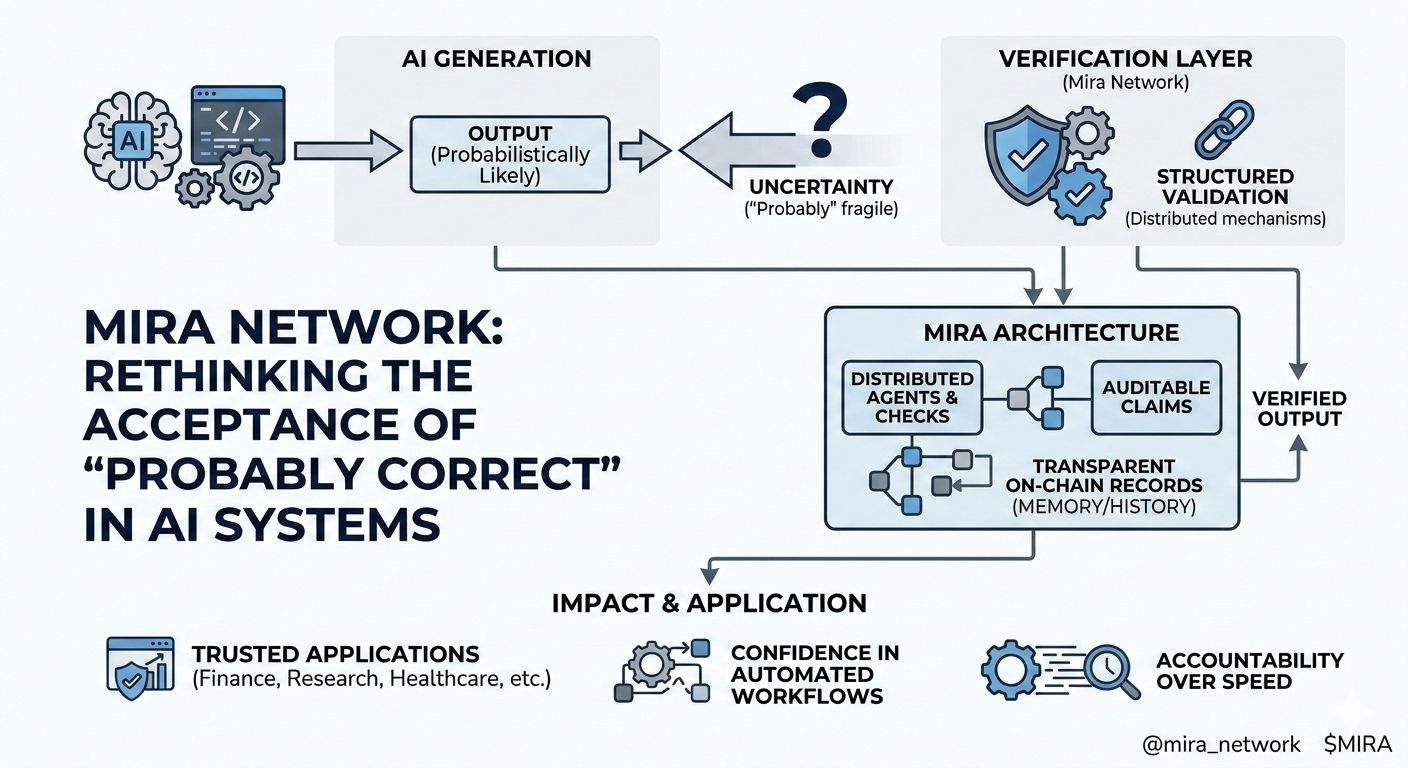

At its core, Mira introduces a verification layer around AI outputs. Instead of accepting a single model’s response as sufficient, it enables structured validation through distributed mechanisms. Multiple agents, checks, or verification processes can evaluate whether an output meets defined standards before it’s accepted. This shifts AI from a black-box oracle into something closer to a system of auditable claims.
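To make that concrete for myself, I sketched what "several independent checks before acceptance" could look like in a few lines of Python. This is not Mira's actual protocol or API. The names `Verifier`, `Verdict`, and `verify_claim`, the toy checks, and the 2-of-3 approval rule are all assumptions of mine, chosen only to illustrate the shape of the idea.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier inspects a claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int
    accepted: bool


def verify_claim(claim: str, verifiers: List[Verifier], min_approvals: int) -> Verdict:
    """Accept a claim only if at least `min_approvals` verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    if not 1 <= min_approvals <= len(verifiers):
        raise ValueError("min_approvals must be between 1 and the number of verifiers")
    # Each verifier votes independently; no single check decides the outcome.
    approvals = sum(1 for check in verifiers if check(claim))
    return Verdict(claim, approvals, len(verifiers), approvals >= min_approvals)


# Toy stand-ins for real verifiers (assumptions, for illustration only).
def is_not_empty(claim: str) -> bool:
    return bool(claim.strip())


def avoids_absolutes(claim: str) -> bool:
    return not any(word in claim.lower() for word in ("always", "never", "guaranteed"))


def cites_a_source(claim: str) -> bool:
    return "source:" in claim.lower()


checks = [is_not_empty, avoids_absolutes, cites_a_source]
good = verify_claim("Revenue grew 4% in Q2 (source: filing)", checks, min_approvals=2)
bad = verify_claim("This strategy is guaranteed to win", checks, min_approvals=2)
print(good.accepted, good.approvals)
print(bad.accepted, bad.approvals)
```

In a real system the verifiers would presumably be independent models or nodes rather than string checks, but the decision rule stays the same: an output is a proposal until enough independent parties sign off on it.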

That sounds technical, but the human implication is simple. When you ask an AI to draft a contract clause, assess a dataset, or execute a decision in a workflow, you shouldn’t have to wonder whether it hallucinated a detail. Mira’s architecture creates space for challenge and confirmation. It treats AI outputs less like gospel and more like proposals that can be verified.

Another aspect that struck me is how this reframes trust. Most AI infrastructure today optimizes for speed and convenience. Mira leans into reliability. By anchoring verification logic on-chain, it creates transparent records of how decisions were validated. Stepping back, that feels less like adding friction and more like adding memory. Systems remember how conclusions were reached.
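The "memory" idea is easier to see with a small example. Below is a minimal sketch of an append-only, hash-chained log of verification outcomes, using only Python's standard library. It is an in-memory stand-in for a ledger, not a blockchain and not Mira's implementation. The record fields and the helper names `append_record` and `log_is_intact` are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, Any]) -> str:
    # Serialize with sorted keys so the same record always hashes the same way.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log: List[Dict[str, Any]], claim: str, approvals: int,
                  total: int, accepted: bool) -> Dict[str, Any]:
    """Append a verification outcome, linking it to the previous record's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"prev": prev, "claim": claim, "approvals": approvals,
            "total": total, "accepted": accepted}
    record = dict(body, hash=_digest(body))
    log.append(record)
    return record


def log_is_intact(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; any edit to a past record breaks the chain."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log: List[Dict[str, Any]] = []
append_record(log, "Clause 4 matches the template", approvals=3, total=3, accepted=True)
append_record(log, "Dataset has no missing rows", approvals=1, total=3, accepted=False)
print(log_is_intact(log))

log[0]["accepted"] = False  # quietly rewrite history
print(log_is_intact(log))
```

The point of the sketch is the property, not the mechanism: once outcomes are chained this way, changing how a past decision was validated is detectable by anyone who replays the log.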

In practical terms, this opens the door for AI-powered applications that require stronger guarantees. Think automated research pipelines, on-chain agents executing financial logic, or gaming environments where AI-driven actions must be provably fair. In these contexts, “good enough” answers can erode confidence. A verification layer makes those products more defensible and more trustworthy.

Of course, there are tradeoffs. Verification adds overhead. It can slow processes that, in many cases, users expect to be instantaneous. There’s also a philosophical question: how much certainty is enough? Absolute truth is rarely achievable, even in human systems. Mira doesn’t eliminate uncertainty; it structures it. That nuance matters.

But I keep coming back to the cultural shift embedded in this design. We’ve been racing to make AI more capable, more creative, more autonomous. Mira asks a quieter question:

What if the next leap is not more intelligence, but more accountability?

If Mira succeeds, most users won’t think about verification layers or distributed validation. They’ll simply feel more comfortable letting AI handle important tasks. The anxiety of double-checking every output might fade. The blockchain won’t be the headline. It will be the invisible scaffolding that makes machine intelligence safer to rely on.

And that might be the most human strategy of all: not chasing spectacle, but building the kind of infrastructure that earns trust precisely because it fades into the background.